Introduction

Vector Database Platforms are specialized databases that store and process vector embeddings, numeric representations of unstructured data such as text, images, audio, and video. They enable AI-powered applications to perform semantic search and similarity matching, and they power recommendation systems and high-dimensional analytics. Unlike traditional relational or NoSQL databases, vector databases are optimized for nearest neighbor search (usually approximate nearest neighbor, or ANN, search) and low-latency retrieval of high-dimensional embeddings.
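The core query a vector database answers is "which stored vectors are most similar to this one?". As a minimal, stdlib-only Python sketch (the function names and the toy three-dimensional vectors are illustrative assumptions, not any product's API), exact k-nearest-neighbor search with cosine similarity can look like this. It scores every stored vector, which is exactly the full scan that ANN indexes are designed to avoid at scale.

```python
import heapq
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimensionality")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


def exact_knn(query: list[float], vectors: dict[str, list[float]], k: int) -> list[tuple[str, float]]:
    """Brute-force k-NN: score every stored vector and keep the k most similar."""
    scored = ((cosine_similarity(query, vec), doc_id) for doc_id, vec in vectors.items())
    return [(doc_id, score) for score, doc_id in heapq.nlargest(k, scored)]


# Toy "embeddings"; real ones come from an embedding model and typically
# have hundreds or thousands of dimensions.
docs = {
    "cat": [0.9, 0.1, 0.0],
    "dog": [0.8, 0.2, 0.1],
    "car": [0.0, 0.1, 0.9],
}
print(exact_knn([1.0, 0.0, 0.0], docs, k=2))  # "cat" ranks first, "dog" second
```

Cost grows linearly with collection size because every query touches every vector, which is why the platforms in this list rely on approximate indexes instead of a plain scan.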

These platforms are increasingly critical for AI-driven applications, including large language models (LLMs), image recognition, recommendation engines, knowledge graphs, and real-time analytics. Typical use cases include semantic search across large document collections, real-time product recommendations, anomaly detection in streaming data, image similarity search, and personalized content delivery. Buyers evaluating vector databases should consider scalability, query performance, embedding support, indexing strategies, integration with AI/ML frameworks, high availability, cloud compatibility, security, and total cost of ownership.

Best for: AI engineers, data scientists, ML developers, and enterprises building AI-powered search and recommendation systems.

Not ideal for: Traditional transactional workloads or applications with strictly structured data where RDBMS or NoSQL databases suffice.

Key Trends in Vector Database Platforms

- AI-assisted indexing and query optimization for large-scale embeddings

- Fully managed, cloud-native vector databases

- Multi-cloud and hybrid deployment support

- Integration with LLM pipelines, embedding frameworks, and MLOps tooling

- Horizontal scaling, sharding, and GPU acceleration

- Support for multiple embedding types: text, image, audio, video

- Real-time search and similarity computations

- Flexible subscription and usage-based pricing models

- Containerized deployments and Kubernetes integration

- Enhanced security, encryption, and compliance features
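Horizontal scaling and sharding usually follow a scatter-gather pattern: vectors are routed to shards by id, each shard returns its local top-k, and a coordinator merges the partial results. The Python sketch below is a single-process illustration under that assumption; the class and function names are hypothetical, and real platforms add replication, rebalancing, and network calls between nodes.

```python
import hashlib
import heapq


def shard_for(doc_id: str, num_shards: int) -> int:
    """Stable hash routing: the same id always lands on the same shard."""
    digest = hashlib.sha256(doc_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def dot(a: list[float], b: list[float]) -> float:
    # Equals cosine similarity when embeddings are L2-normalized (assumed here).
    return sum(x * y for x, y in zip(a, b))


class ShardedIndex:
    """Toy scatter-gather index; each dict stands in for one shard on its own node."""

    def __init__(self, num_shards: int):
        self.shards: list[dict[str, list[float]]] = [{} for _ in range(num_shards)]

    def upsert(self, doc_id: str, vector: list[float]) -> None:
        self.shards[shard_for(doc_id, len(self.shards))][doc_id] = vector

    def query(self, vector: list[float], k: int) -> list[tuple[str, float]]:
        partials = []
        for shard in self.shards:  # scatter: in production these calls run in parallel
            local = ((dot(vector, v), doc_id) for doc_id, v in shard.items())
            partials.extend(heapq.nlargest(k, local))
        # Gather: every global top-k hit is necessarily in its own shard's local top-k.
        return [(doc_id, score) for score, doc_id in heapq.nlargest(k, partials)]
```

Merging local top-k lists gives the same answer as searching one big index, so adding shards spreads storage and compute without changing results; the cost is one extra merge step at the coordinator.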

How We Selected These Tools

- Market adoption and mindshare among AI developers and enterprises

- Feature completeness, including indexing, search, and performance optimizations

- Reliability and latency benchmarks for high-dimensional similarity search

- Security posture and compliance with industry standards

- Integration ecosystem with ML frameworks, cloud platforms, and analytics

- Customer fit across SMB, mid-market, and enterprise organizations

- Support quality, documentation, and community engagement

- Total cost of ownership and flexible pricing models

- Ease of deployment, administration, and operational management
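Benchmarks of approximate search usually report two numbers: recall@k (how many of the true top-k neighbors the index returned, compared with an exact search) and tail latency. A minimal stdlib Python sketch of both metrics follows; the helper names are hypothetical, and the nearest-rank percentile is only one of several common percentile definitions.

```python
import math
import time


def recall_at_k(approx_ids: list[str], exact_ids: list[str], k: int) -> float:
    """Share of the true top-k neighbors that the approximate search also returned."""
    if k <= 0:
        raise ValueError("k must be positive")
    truth = set(exact_ids[:k])
    if not truth:
        raise ValueError("exact result list is empty")
    return len(truth & set(approx_ids[:k])) / len(truth)


def mean_recall(runs: list[tuple[list[str], list[str]]], k: int) -> float:
    """Average recall@k over (approx_ids, exact_ids) pairs, one pair per test query."""
    return sum(recall_at_k(approx, exact, k) for approx, exact in runs) / len(runs)


def timed(fn, *args):
    """Run fn(*args) and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile, e.g. pct=95 for p95 latency."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]
```

Latency samples would come from wrapping each client query in `timed`; recall needs ground truth from an exact search, such as a brute-force scan over the same vectors.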

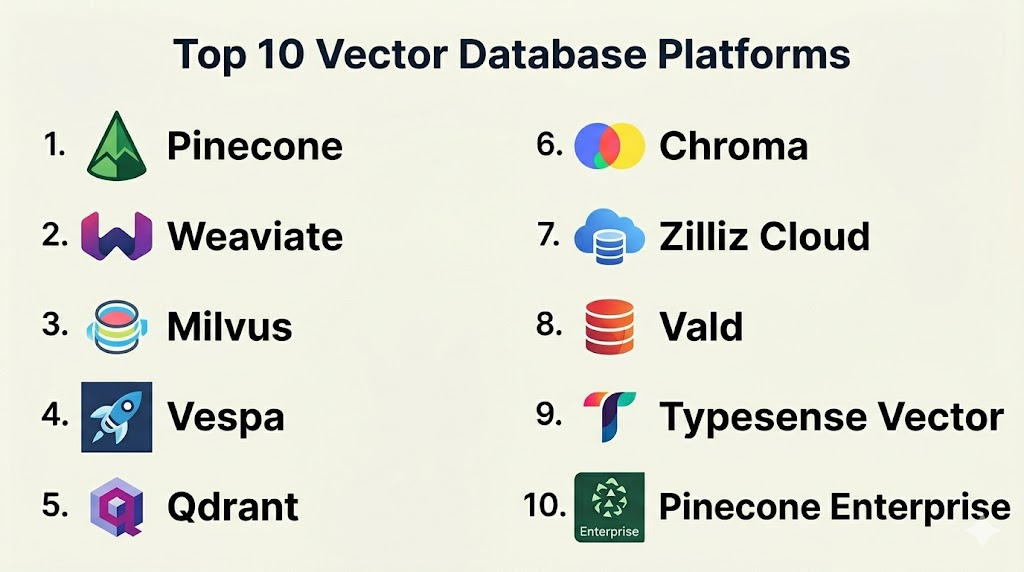

Top 10 Vector Database Platforms

#1 — Pinecone

Short description: Pinecone is a fully managed vector database optimized for high-performance similarity search and embedding storage. Suitable for AI-powered search, recommendation engines, and real-time analytics.

Key Features

- Real-time vector similarity search

- Automatic indexing and sharding

- Horizontal scaling and high availability

- Integration with AI/ML frameworks

- Cloud-native managed service

- REST and gRPC APIs

Pros

- Fully managed, low operational overhead

- High query performance and scalability

Cons

- Managed cloud service only; no self-hosted production deployment

- Advanced customization may be limited

Platforms / Deployment

- Cloud

- Web API

Security & Compliance

- TLS encryption, API key-based access

- SOC 2, GDPR

Integrations & Ecosystem

- TensorFlow, PyTorch, Hugging Face

- Python SDK, REST API

- Cloud analytics and monitoring tools

Support & Community

Enterprise support, documentation, active user community

#2 — Weaviate

Short description: Weaviate is an open-source vector search engine with integrated ML models, supporting semantic search, knowledge graphs, and AI applications.

Key Features

- Graph and vector data models

- Semantic search using embeddings

- Multi-tenancy and replication

- RESTful API and GraphQL

- Hybrid search combining keyword (BM25) and vector search, with structured filters
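Hybrid search merges a keyword ranking (for example BM25) with a vector ranking. One common approach, sketched here in plain Python, normalizes each score set to [0, 1] and blends them with a weight `alpha`, where 1.0 means pure vector search and 0.0 pure keyword search. Weaviate exposes a similar `alpha` setting, but this sketch is not its implementation, and its fusion algorithms and defaults may differ.

```python
def min_max_normalize(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to [0, 1] so keyword and vector scores become comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (s - lo) / (hi - lo) for doc_id, s in scores.items()}


def hybrid_fuse(keyword_scores: dict[str, float], vector_scores: dict[str, float],
                alpha: float = 0.5) -> list[tuple[str, float]]:
    """Blend normalized scores: alpha=1.0 is vector only, alpha=0.0 is keyword only."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    kw = min_max_normalize(keyword_scores)
    vec = min_max_normalize(vector_scores)
    fused = {}
    for doc_id in kw.keys() | vec.keys():
        # A document missing from one result list contributes 0 for that signal.
        fused[doc_id] = alpha * vec.get(doc_id, 0.0) + (1 - alpha) * kw.get(doc_id, 0.0)
    # Highest score first; ties broken alphabetically for deterministic output.
    return sorted(fused.items(), key=lambda item: (-item[1], item[0]))


bm25 = {"a": 12.0, "b": 3.0, "c": 7.5}       # hypothetical keyword scores
vector = {"b": 0.91, "c": 0.80, "d": 0.40}   # hypothetical similarity scores
print([doc_id for doc_id, _ in hybrid_fuse(bm25, vector, alpha=0.5)])  # ['c', 'a', 'b', 'd']
```

Document "c" wins at `alpha=0.5` because it scores reasonably on both signals, while "a" and "b" each excel on only one.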

Pros

- Open-source flexibility

- Integrated AI models for embeddings

Cons

- Self-hosted deployments require operational expertise

- Scaling clusters may require tuning

Platforms / Deployment

- Linux / macOS / Cloud

- Cloud / Self-hosted / Hybrid

Security & Compliance

- TLS, RBAC, authentication

- Not publicly stated

Integrations & Ecosystem

- Hugging Face, TensorFlow, PyTorch

- Cloud platforms and container orchestration

- REST and GraphQL APIs

Support & Community

Open-source community, optional enterprise subscription

#3 — Milvus

Short description: Milvus is an open-source vector database optimized for similarity search at scale. It is used for AI-driven image, video, and text retrieval applications.

Key Features

- High-dimensional vector indexing

- GPU acceleration for query performance

- Multi-cloud deployment support

- Horizontal scaling and sharding

- AI/ML framework integration
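Inverted-file (IVF) indexes, one of the index families Milvus offers (for example IVF_FLAT), cluster the vectors around centroids and, at query time, scan only the `nprobe` clusters closest to the query. The pure-Python sketch below illustrates the idea with a few rounds of k-means; the class is hypothetical and omits everything a production index needs, such as persistence, quantization, and GPU kernels.

```python
import random


def sq_dist(a: list[float], b: list[float]) -> float:
    """Squared Euclidean distance (same ranking as Euclidean distance)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


class IVFFlatIndex:
    """Toy IVF-flat index: k-means centroids plus one inverted list of raw vectors each."""

    def __init__(self, nlist: int, seed: int = 0):
        self.nlist = nlist
        self.rng = random.Random(seed)
        self.centroids: list[list[float]] = []
        self.lists: list[list[tuple[str, list[float]]]] = []

    def _nearest_centroids(self, vector: list[float], n: int) -> list[int]:
        order = sorted(range(len(self.centroids)), key=lambda i: sq_dist(vector, self.centroids[i]))
        return order[:n]

    def train(self, vectors: list[list[float]], iterations: int = 10) -> None:
        # Lloyd's k-means, seeded with randomly sampled training vectors.
        self.centroids = [list(v) for v in self.rng.sample(vectors, self.nlist)]
        for _ in range(iterations):
            buckets: list[list[list[float]]] = [[] for _ in range(self.nlist)]
            for v in vectors:
                buckets[self._nearest_centroids(v, 1)[0]].append(v)
            for i, bucket in enumerate(buckets):
                if bucket:  # an empty cluster keeps its previous centroid
                    self.centroids[i] = [sum(col) / len(bucket) for col in zip(*bucket)]
        self.lists = [[] for _ in range(self.nlist)]

    def add(self, doc_id: str, vector: list[float]) -> None:
        self.lists[self._nearest_centroids(vector, 1)[0]].append((doc_id, vector))

    def search(self, query: list[float], k: int, nprobe: int = 1) -> list[str]:
        # Only the nprobe closest inverted lists are scanned exhaustively.
        candidates = []
        for i in self._nearest_centroids(query, nprobe):
            candidates.extend(self.lists[i])
        candidates.sort(key=lambda item: sq_dist(query, item[1]))
        return [doc_id for doc_id, _ in candidates[:k]]


points = {f"a{i}": [i * 0.01, 0.0] for i in range(10)}
points.update({f"b{i}": [10.0 + i * 0.01, 10.0] for i in range(10)})
index = IVFFlatIndex(nlist=2, seed=1)
index.train(list(points.values()))
for doc_id, vec in points.items():
    index.add(doc_id, vec)
print(index.search([0.0, 0.0], k=3, nprobe=1))  # ['a0', 'a1', 'a2']
```

Raising `nprobe` scans more clusters, trading latency for recall; with `nprobe` equal to `nlist` the search degenerates into an exact scan.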

Pros

- Extremely fast vector search

- Scales for large embedding workloads

Cons

- Setup for distributed GPU clusters can be complex

- Documentation can be hard for beginners to navigate

Platforms / Deployment

- Linux / Cloud

- Cloud / Self-hosted / Hybrid

Security & Compliance

- TLS, RBAC

- Not publicly stated

Integrations & Ecosystem

- TensorFlow, PyTorch, Hugging Face

- Kubernetes, Docker

- Python SDK and REST API

Support & Community

Open-source community, enterprise support optional

#4 — Vespa

Short description: Vespa is a big data serving engine with vector search capabilities, supporting real-time search, recommendation, and personalization for large-scale applications.

Key Features

- Vector and textual search in one engine

- Real-time streaming updates

- Horizontal scaling for high throughput

- REST API and Java SDK

- ML model integration
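When one engine runs both a text query and a vector query, the two result lists have to be merged. Reciprocal rank fusion (RRF) is a simple, widely used way to do this: each document earns 1 / (k + rank) from every list it appears in. The sketch below is a generic Python illustration, not Vespa's ranking-expression syntax, and k = 60 is a conventional default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Merge ranked lists: each doc earns 1 / (k + rank) from every list containing it."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):  # ranks start at 1
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


text_hits = ["d1", "d2", "d3"]    # hypothetical results of a keyword query
vector_hits = ["d3", "d1", "d4"]  # hypothetical results of a nearest-neighbor query
print(reciprocal_rank_fusion([text_hits, vector_hits]))  # order: d1, d3, d2, d4
```

Because RRF uses only ranks, it needs no score normalization, which makes it robust when text relevance scores and vector similarities live on very different scales.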

Pros

- High throughput for production workloads

- Flexible search combining structured and vector data

Cons

- Complex deployment

- Self-hosted scaling requires expertise

Platforms / Deployment

- Linux / Cloud

- Cloud / Self-hosted / Hybrid

Security & Compliance

- TLS, RBAC

- Not publicly stated

Integrations & Ecosystem

- ML frameworks for embeddings

- Monitoring and analytics pipelines

- REST API and Java SDK

Support & Community

Enterprise support, documentation, open-source community

#5 — Qdrant

Short description: Qdrant is a vector search engine designed for AI applications, with real-time search, filtering, and high-dimensional vector support.

Key Features

- Real-time similarity search

- Filters on metadata for hybrid queries

- High-dimensional vector indexing

- Horizontal scalability

- REST API and gRPC support
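Metadata filters restrict a similarity query to points whose attributes match (Qdrant calls this metadata the "payload"). The order of filtering and ranking matters: filtering first returns up to k matching results, while ranking first and filtering afterwards can silently return fewer. The Python sketch below shows only these semantics; the functions are hypothetical, and engines such as Qdrant integrate filtering into the index itself rather than scanning like this.

```python
import heapq


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def matches(payload: dict, conditions: dict) -> bool:
    """Exact-match filter: every condition key must be present with an equal value."""
    return all(key in payload and payload[key] == value for key, value in conditions.items())


def pre_filtered_search(points: list[dict], query: list[float], conditions: dict, k: int) -> list[int]:
    """Filter on metadata first, then rank the survivors: up to k matching points."""
    eligible = [p for p in points if matches(p["payload"], conditions)]
    top = heapq.nlargest(k, eligible, key=lambda p: dot(query, p["vector"]))
    return [p["id"] for p in top]


def post_filtered_search(points: list[dict], query: list[float], conditions: dict, k: int) -> list[int]:
    """Rank first, then filter the top k: simpler, but can return fewer than k results."""
    top = heapq.nlargest(k, points, key=lambda p: dot(query, p["vector"]))
    return [p["id"] for p in top if matches(p["payload"], conditions)]


points = [
    {"id": 1, "vector": [0.9, 0.1], "payload": {"lang": "en"}},
    {"id": 2, "vector": [0.8, 0.2], "payload": {"lang": "de"}},
    {"id": 3, "vector": [0.7, 0.3], "payload": {"lang": "de"}},
    {"id": 4, "vector": [0.1, 0.9], "payload": {"lang": "en"}},
]
print(pre_filtered_search(points, [1.0, 0.0], {"lang": "en"}, k=2))   # [1, 4]
print(post_filtered_search(points, [1.0, 0.0], {"lang": "en"}, k=2))  # [1]
```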

Pros

- Easy to deploy and manage

- Efficient for embedding search

Cons

- Cloud-managed offering is limited

- Enterprise features require subscription

Platforms / Deployment

- Linux / Cloud

- Cloud / Self-hosted / Hybrid

Security & Compliance

- TLS, authentication

- Not publicly stated

Integrations & Ecosystem

- Python SDK, REST API

- TensorFlow, PyTorch integration

- Cloud monitoring tools

Support & Community

Open-source community, enterprise subscription

#6 — Chroma

Short description: Chroma is an open-source vector database for embeddings storage and similarity search, optimized for LLM and AI applications.

Key Features

- Embeddings storage and retrieval

- Real-time similarity search

- Integration with LLM frameworks

- Cloud and self-hosted deployments

- Python SDK

Pros

- Flexible, open-source

- Optimized for AI/LLM workloads

Cons

- Limited enterprise support

- Scaling requires configuration

Platforms / Deployment

- Linux / Cloud

- Cloud / Self-hosted / Hybrid

Security & Compliance

- TLS, RBAC

- Not publicly stated

Integrations & Ecosystem

- LangChain, Hugging Face, PyTorch

- REST API and SDK

- Cloud provider integration

Support & Community

Open-source community, basic commercial support

#7 — Zilliz Cloud

Short description: Zilliz Cloud offers managed Milvus services for vector search, enabling large-scale AI search and recommendation without managing infrastructure.

Key Features

- Fully managed Milvus

- Auto-scaling and sharding

- GPU acceleration

- High availability and backups

- ML framework integration

Pros

- Fully managed, low operational overhead

- Scalable for enterprise AI workloads

Cons

- Limited cloud provider options

- Vendor lock-in risk

Platforms / Deployment

- Cloud

- Managed service

Security & Compliance

- TLS, RBAC

- SOC 2, GDPR

Integrations & Ecosystem

- Python SDK, REST API

- TensorFlow, PyTorch, Hugging Face

- Cloud observability tools

Support & Community

Enterprise support, documentation, community forums

#8 — Vald

Short description: Vald is an open-source vector database built on Kubernetes, designed for cloud-native AI search with high-dimensional vectors.

Key Features

- Kubernetes-native deployment

- Auto-scaling and clustering

- High-dimensional vector indexing

- Integration with ML frameworks

- Real-time similarity search
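Graph-based ANN indexes, such as the NGT library that Vald is built on and the HNSW indexes used by Weaviate and Qdrant, link each vector to nearby vectors and answer queries by walking the graph greedily toward the query. The single-layer Python sketch below shows that search loop; the brute-force graph construction and all names are illustrative assumptions, and real indexes build the graph incrementally and add hierarchy or edge pruning.

```python
import heapq


def sq_dist(a: list[float], b: list[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def build_knn_graph(vectors: dict[str, list[float]], degree: int) -> dict[str, list[str]]:
    """Link each node to its `degree` nearest neighbors (brute force, fine for a demo)."""
    graph = {}
    for node, vec in vectors.items():
        others = [o for o in vectors if o != node]
        graph[node] = heapq.nsmallest(degree, others, key=lambda o: sq_dist(vec, vectors[o]))
    return graph


def greedy_search(graph, vectors, query, entry, k, ef=8):
    """Best-first graph walk keeping the ef closest nodes seen so far."""
    visited = {entry}
    start = sq_dist(query, vectors[entry])
    candidates = [(start, entry)]  # min-heap: closest unexplored node first
    results = [(-start, entry)]    # max-heap via negation: farthest kept node first
    while candidates:
        dist, node = heapq.heappop(candidates)
        if len(results) >= ef and dist > -results[0][0]:
            break  # the best unexplored node is worse than everything we keep
        for neighbor in graph[node]:
            if neighbor in visited:
                continue
            visited.add(neighbor)
            d = sq_dist(query, vectors[neighbor])
            if len(results) < ef or d < -results[0][0]:
                heapq.heappush(candidates, (d, neighbor))
                heapq.heappush(results, (-d, neighbor))
                if len(results) > ef:
                    heapq.heappop(results)  # drop the farthest kept node
    return [node for _, node in sorted((-neg_d, n) for neg_d, n in results)][:k]


line = {f"p{i}": [float(i)] for i in range(20)}  # toy 1-D points 0..19
graph = build_knn_graph(line, degree=2)
print(greedy_search(graph, line, [13.2], entry="p0", k=3, ef=4))  # ['p13', 'p14', 'p12']
```

The walk starts far away at `p0` but only ever expands promising nodes, so it reaches the query's neighborhood without scoring the whole collection; larger `ef` values explore more and improve recall at some latency cost.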

Pros

- Cloud-native and scalable

- Kubernetes integration simplifies operations

Cons

- Requires Kubernetes expertise

- Self-hosted configuration can be complex

Platforms / Deployment

- Linux / Cloud

- Cloud / Self-hosted / Hybrid

Security & Compliance

- TLS, authentication

- Not publicly stated

Integrations & Ecosystem

- TensorFlow, PyTorch

- REST API and SDK

- Kubernetes monitoring tools

Support & Community

Open-source community, documentation

#9 — Typesense Vector

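Short description: Typesense Vector refers to the vector search capability built into Typesense, an open-source search engine. It supports fast vector similarity search alongside keyword and structured search, making it suitable for AI-powered search applications.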

Key Features

- Vector indexing alongside traditional search

- Hybrid queries combining metadata filters

- Real-time search performance

- Cloud and self-hosted deployment

- REST API support

Pros

- Fast hybrid search

- Simple deployment

Cons

- Smaller ecosystem

- Limited enterprise features

Platforms / Deployment

- Linux / Cloud

- Cloud / Self-hosted / Hybrid

Security & Compliance

- TLS, authentication

- Not publicly stated

Integrations & Ecosystem

- Python SDK, REST API

- ML embedding frameworks

- Cloud services

Support & Community

Open-source community, commercial support available

#10 — Pinecone Enterprise

Short description: Pinecone Enterprise is the enterprise plan of Pinecone's managed vector database, adding advanced enterprise features for large-scale AI search and recommendation.

Key Features

- Fully managed enterprise-grade service

- Low-latency vector search

- High availability and clustering

- Secure and compliant

- Integration with embeddings pipelines and LLMs

Pros

- Enterprise-ready

- High performance and reliability

Cons

- Cloud-only

- Advanced enterprise features require subscription

Platforms / Deployment

- Cloud

- Managed service

Security & Compliance

- TLS, RBAC, audit logging

- SOC 2, ISO 27001, GDPR, HIPAA

Integrations & Ecosystem

- Python SDK, REST API

- TensorFlow, PyTorch, Hugging Face

- Cloud observability and logging

Support & Community

Enterprise support, documentation, forums

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Pinecone | Managed vector search | Cloud | Cloud | Fully managed, low-latency | N/A |
| Weaviate | Semantic search & knowledge graphs | Linux, macOS, Cloud | Cloud / Self-hosted / Hybrid | Integrated ML models | N/A |
| Milvus | Large-scale embeddings | Linux, Cloud | Cloud / Self-hosted / Hybrid | GPU-accelerated search | N/A |
| Vespa | Big data AI search | Linux, Cloud | Cloud / Self-hosted / Hybrid | Combined vector & textual search | N/A |
| Qdrant | Real-time vector search | Linux, Cloud | Cloud / Self-hosted / Hybrid | Metadata filters | N/A |
| Chroma | AI/LLM embeddings | Linux, Cloud | Cloud / Self-hosted / Hybrid | LLM-focused vector DB | N/A |
| Zilliz Cloud | Managed Milvus | Cloud | Cloud | Fully managed GPU search | N/A |
| Vald | Cloud-native AI search | Linux, Cloud | Cloud / Self-hosted / Hybrid | Kubernetes-native deployment | N/A |
| Typesense Vector | Hybrid search | Linux, Cloud | Cloud / Self-hosted / Hybrid | Vector + structured search | N/A |
| Pinecone Enterprise | Enterprise AI | Cloud | Cloud | Enterprise-grade vector search | N/A |

Evaluation & Scoring of Vector Database Platforms

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Pinecone | 9 | 8 | 9 | 9 | 9 | 8 | 7 | 8.5 |
| Weaviate | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.6 |
| Milvus | 9 | 7 | 8 | 8 | 9 | 7 | 7 | 8.0 |
| Vespa | 8 | 7 | 7 | 8 | 8 | 7 | 6 | 7.3 |
| Qdrant | 8 | 8 | 7 | 8 | 8 | 7 | 7 | 7.6 |
| Chroma | 8 | 8 | 7 | 7 | 8 | 7 | 7 | 7.5 |
| Zilliz Cloud | 9 | 8 | 8 | 9 | 9 | 8 | 7 | 8.3 |
| Vald | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7.5 |
| Typesense Vector | 7 | 8 | 7 | 7 | 7 | 7 | 7 | 7.2 |
| Pinecone Enterprise | 9 | 8 | 8 | 9 | 9 | 8 | 7 | 8.3 |

Interpretation: Higher scores indicate stronger overall capability for enterprise AI and vector search workloads. Lower scores may still serve smaller-scale or specialized deployments.
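The Weighted Total column is the weighted average of the seven criterion scores, using the percentages in the column headers. This short Python sketch recomputes it so each row can be checked; the dictionary keys are shorthand introduced here, and half-up rounding to one decimal is an assumption about how the totals are meant to be rounded.

```python
# Criterion weights (percent) taken from the scoring table header; they sum to 100.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores: dict[str, int]) -> float:
    """Weighted average of 0-10 criterion scores, rounded half-up to one decimal."""
    if sum(WEIGHTS.values()) != 100:
        raise ValueError("weights must sum to 100 percent")
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise KeyError(f"missing scores for: {sorted(missing)}")
    # Integer arithmetic: the sum is in hundredths of a point (845 means 8.45).
    hundredths = sum(scores[name] * weight for name, weight in WEIGHTS.items())
    tenths = (hundredths + 5) // 10  # round half up without float artifacts
    return tenths / 10


pinecone = {"core": 9, "ease": 8, "integrations": 9, "security": 9,
            "performance": 9, "support": 8, "value": 7}
print(weighted_total(pinecone))  # 8.5
```

For Pinecone's row this is (9×25 + 8×15 + 9×15 + 9×10 + 9×10 + 8×10 + 7×15) / 100 = 845 / 100 = 8.45, which rounds to 8.5.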

Which Vector Database Platform Is Right for You?

Solo / Freelancer

- Chroma, Qdrant, or Weaviate are ideal for small AI/ML projects with manageable data scale.

SMB

- Pinecone, Milvus, and Typesense Vector provide a balance between operational simplicity and performance.

Mid-Market

- Zilliz Cloud, Vespa, and Milvus are suitable for production-scale AI search with high-dimensional embeddings.

Enterprise

- Pinecone Enterprise, Zilliz Cloud, and Vespa deliver multi-region, high-availability, and secure deployments for critical AI applications.

Budget vs Premium

- Open-source (free to self-host): Milvus, Weaviate, Qdrant, Chroma, Vald, Vespa, Typesense

- Premium managed services: Pinecone Enterprise, Zilliz Cloud, Vespa Cloud

Feature Depth vs Ease of Use

- Milvus, Vespa, and Pinecone Enterprise offer deep functionality; Milvus and Vespa also require operational expertise to run, while Pinecone Enterprise is fully managed

- Qdrant, Chroma, and Typesense Vector are easier to deploy

Integrations & Scalability

- Cloud-native services integrate with AI/ML pipelines, DevOps, and monitoring platforms

- Kubernetes-ready solutions (Vald, Milvus) offer horizontal scalability

Security & Compliance Needs

- Enterprise editions provide robust encryption, access control, and regulatory compliance

- Open-source versions may require additional security configuration

Frequently Asked Questions (FAQs)

1. What is a vector database?

A vector database stores and indexes embeddings, which are numeric representations of unstructured data, enabling semantic search and similarity search for AI applications.

2. How does it differ from NoSQL?

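General-purpose NoSQL databases handle semi-structured data such as documents and key-value pairs, but they are not primarily designed to index high-dimensional embeddings or answer similarity queries. Vector databases are purpose-built for these AI/ML workloads, although some NoSQL products now add vector search features.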

3. Are vector databases cloud-native?

Many, including Pinecone, Zilliz Cloud, and Typesense Vector, offer cloud-managed services, while open-source alternatives can be deployed on-premises or in Kubernetes.

4. Can vector databases support LLMs?

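Yes. In retrieval-augmented generation (RAG), a vector database stores embeddings of documents, typically produced by a dedicated embedding model, and retrieves the passages most similar to a user's question so they can be passed to the LLM as context. The same retrieval also powers semantic search and similarity matching.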
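The retrieval step of RAG can be sketched in a few lines of Python: embed the question, fetch the most similar chunks, and assemble them into a prompt. The sketch assumes a hypothetical `toy_embed` function (a hashed bag of words) in place of a real embedding model, uses a plain list instead of a vector database, and stops before the LLM call, since that depends on the provider.

```python
import math
import re
import zlib


def toy_embed(text: str, dims: int = 256) -> list[float]:
    """HYPOTHETICAL stand-in for an embedding model: hashed bag of words, L2-normalized."""
    vec = [0.0] * dims
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        vec[zlib.crc32(token.encode("utf-8")) % dims] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks whose embeddings are most similar to the question's."""
    q = toy_embed(question)

    def score(chunk: str) -> float:
        return sum(a * b for a, b in zip(q, toy_embed(chunk)))

    return sorted(chunks, key=score, reverse=True)[:k]


def build_prompt(question: str, context: list[str]) -> str:
    """Assemble the text that would be sent to an LLM (the LLM call itself is omitted)."""
    bullet_list = "\n".join(f"- {chunk}" for chunk in context)
    return f"Answer using only this context:\n{bullet_list}\n\nQuestion: {question}"


chunks = [
    "Milvus supports GPU-accelerated indexes.",
    "Qdrant stores metadata alongside vectors as a payload.",
    "Paris is the capital of France.",
]
question = "Which database stores metadata as a payload?"
print(build_prompt(question, retrieve(question, chunks, k=1)))
```

In a real pipeline, the chunk embeddings would be computed once and stored in the vector database, so only the question is embedded at query time.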

5. Are these databases secure?

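Security features vary by product and tier. Enterprise-grade offerings such as Pinecone Enterprise list TLS encryption, RBAC, audit logging, and compliance with standards such as SOC 2, ISO 27001, and GDPR, while many open-source projects do not publicly state certifications and leave security configuration to the operator.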

6. Which workloads are best suited?

Semantic search, recommendation engines, anomaly detection, real-time AI analytics, and high-dimensional data querying.

7. Do they support GPU acceleration?

Some platforms, like Milvus and Zilliz Cloud, leverage GPUs for fast high-dimensional similarity search.

8. How scalable are vector databases?

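Distributed platforms such as Milvus, Vespa, and Zilliz Cloud scale horizontally through sharding and replication and are designed for collections of millions to billions of embeddings. Practical limits depend on hardware, index type, and vector dimensionality.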

9. Are open-source vector databases reliable?

Yes, when deployed with proper monitoring, replication, and cloud or Kubernetes orchestration.

10. How do I choose the right vector database?

Evaluate data scale, AI use case, embedding types, latency requirements, cloud adoption, and operational expertise. Pilot testing is recommended.

Conclusion

Vector Database Platforms are crucial for modern AI and machine learning applications, enabling semantic search, similarity matching, recommendation engines, and high-dimensional analytics. Open-source platforms like Milvus, Weaviate, Chroma, and Vald suit flexible and experimental AI workloads. Cloud-managed solutions such as Pinecone (including its Enterprise plan) and Zilliz Cloud simplify operations, scale horizontally, and support enterprise-grade AI workloads. When choosing a vector database, organizations should consider data volume, embedding types, latency, integrations, and security requirements. Shortlisting two or three platforms, testing embedding workflows, and validating performance and scalability with your own data is the most reliable way to make the right choice for your AI applications.
