Introduction

LLM orchestration frameworks are libraries and platforms that manage, coordinate, and optimize calls to large language models across applications. They simplify workflows involving multiple LLMs, chain tasks automatically, and connect to APIs, data sources, and operational pipelines. This helps organizations use LLMs efficiently while keeping control over outputs, performance, and compliance.

LLM orchestration has become critical as businesses increasingly adopt generative AI solutions for customer support, content generation, decision-making, and automation. With multiple LLMs often deployed simultaneously, managing their interactions, scaling workloads, and monitoring performance can be complex. These frameworks reduce operational overhead, accelerate deployment, and improve model reliability.

Real-world use cases include:

- Automating customer service chat flows across multiple languages and domains

- Generating personalized marketing content at scale

- Coordinating multi-step data analysis and summarization tasks

- Powering AI-driven coding assistants and knowledge retrieval

- Orchestrating multimodal AI tasks combining text, image, and structured data

Key criteria for evaluating LLM orchestration frameworks:

- Ease of integration with multiple LLMs and APIs

- Workflow orchestration and chaining capabilities

- Performance monitoring and logging

- Security and compliance features

- Scalability and high availability

- Model versioning and governance

- Extensibility and community support

- Pricing and total cost of ownership

Best for: AI teams, developers, enterprises deploying generative AI workflows, organizations with multiple LLMs or multimodal AI needs

Not ideal for: Companies with single-model use cases, small-scale experiments, or minimal orchestration requirements

Key Trends in LLM Orchestration Frameworks

- Growing adoption of multi-LLM orchestration for complex workflows

- Integration of generative AI with RPA (Robotic Process Automation) tools

- Advanced monitoring and drift detection for LLM outputs

- Hybrid deployment options combining cloud and on-premises AI

- Open-source orchestration frameworks gaining traction

- AI-driven optimization of prompt selection and model routing

- Compliance and audit-ready logging for enterprise use

- Standardization of APIs for seamless LLM interoperability

- Increasing focus on cost-efficiency and usage optimization

- AI orchestration frameworks expanding to multimodal AI

How We Selected These Tools (Methodology)

- Evaluated market adoption and community engagement

- Assessed feature completeness including workflow automation and LLM integration

- Reviewed reliability and performance signals from user reports and benchmarks

- Examined security features and compliance capabilities

- Considered integration options with other AI tools, APIs, and data sources

- Analyzed customer fit across enterprises, SMBs, and developer-focused users

- Evaluated scalability and support for high-volume LLM orchestration

- Reviewed extensibility, including custom module and plugin support

- Prioritized frameworks with active development and robust documentation

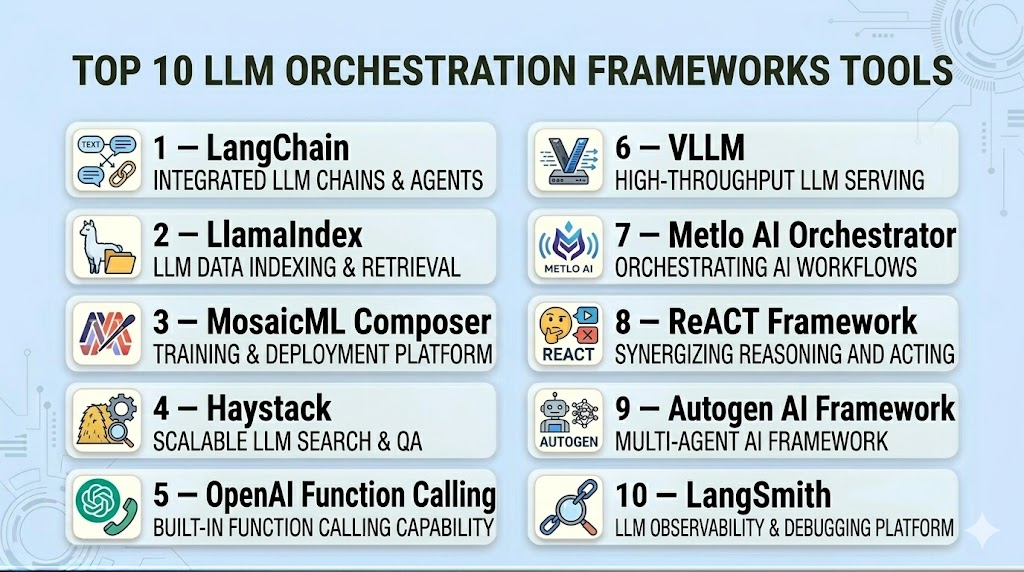

Top 10 LLM Orchestration Frameworks

#1 — LangChain

Short description: LangChain enables developers to build applications using LLMs with modular components. It supports chaining, memory management, and integration with APIs, databases, and external tools, making it ideal for complex AI workflows.
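To make the idea of chaining concrete, here is a minimal sketch in plain Python. It does not use LangChain's API; `fake_llm`, `make_step`, and `run_chain` are hypothetical names introduced only for illustration, and the model call is a stub.

```python
from typing import Callable, List

def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return f"[model output for: {prompt}]"

def make_step(template: str, llm: Callable[[str], str]) -> Callable[[str], str]:
    """Build one chain step: fill the prompt template, then call the model."""
    def step(text: str) -> str:
        return llm(template.format(input=text))
    return step

def run_chain(steps: List[Callable[[str], str]], text: str) -> str:
    """Feed each step's output into the next step."""
    for step in steps:
        text = step(text)
    return text

summarize = make_step("Summarize: {input}", fake_llm)
translate = make_step("Translate to French: {input}", fake_llm)
result = run_chain([summarize, translate], "Quarterly sales rose 4%.")
```

A framework adds value on top of this pattern with reusable templates, conversation memory, and connectors, but the core control flow is the same: each step's output becomes the next step's input.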

Key Features

- Multi-LLM orchestration and chaining

- API and database integrations

- Memory management for conversational AI

- Prompt templates and reusable workflows

- Modular architecture with extensibility

- Logging and monitoring of model outputs

Pros

- Flexible and developer-friendly

- Strong community support

- Extensive integration capabilities

Cons

- Requires programming knowledge

- Can be complex for simple use cases

Platforms / Deployment

- Web / Windows / macOS / Linux

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Supports integrations with:

- OpenAI API

- Azure OpenAI

- Pinecone, Weaviate

- SQL and NoSQL databases

Support & Community

- Comprehensive documentation and tutorials

- Active community forums and GitHub support

#2 — LlamaIndex

Short description: LlamaIndex specializes in structuring and connecting data for LLM applications. It allows orchestration of knowledge retrieval and prompt engineering to deliver contextual AI outputs efficiently.
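The retrieval step behind this kind of contextual output can be sketched as follows. This is a toy illustration, not LlamaIndex code: the word-overlap scorer, the `DOCS` list, and the function names are assumptions, and a real system would typically use embeddings and a vector store.

```python
from typing import List

# Hypothetical knowledge base
DOCS = [
    "Refunds are processed within 5 business days.",
    "Support is available by email and chat.",
    "Premium plans include priority support.",
]

def tokenize(text: str) -> set:
    """Lowercase words with surrounding punctuation stripped."""
    return {w.strip(".,?!").lower() for w in text.split()}

def retrieve(query: str, docs: List[str], k: int = 2) -> List[str]:
    """Rank documents by word overlap with the query (toy scorer)."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, context: List[str]) -> str:
    """Place the retrieved passages ahead of the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"

top = retrieve("How long do refunds take?", DOCS, k=1)
prompt = build_prompt("How long do refunds take?", top)
```

The resulting `prompt` would then be sent to a model, so the answer is grounded in the retrieved passage instead of the model's general knowledge.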

Key Features

- Knowledge graph and index building

- Multi-source data ingestion

- Retrieval-augmented generation (RAG)

- Integration with LangChain and LLMs

- Query optimization and prompt management

Pros

- Strong data orchestration capabilities

- Supports RAG workflows efficiently

Cons

- Focused primarily on data-rich applications

- Limited UI for non-technical users

Platforms / Deployment

- Web / Linux / macOS

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Integrates with:

- LangChain

- OpenAI

- Pinecone

- ChromaDB

Support & Community

- Developer-focused documentation

- GitHub community and sample projects

#3 — MosaicML Composer

Short description: Composer, MosaicML's open-source training library built on PyTorch, focuses on scaling and speeding up model training. It suits teams that train or fine-tune multiple models before moving them into production environments.

Key Features

- Model orchestration for training and inference

- Scalable deployment pipelines

- Integration with popular ML frameworks

- Logging and performance monitoring

- Customizable training loops

Pros

- High scalability

- Performance monitoring included

Cons

- Geared toward technical users

- Learning curve for orchestration features

Platforms / Deployment

- Linux / macOS

- Cloud / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Integrates with:

- PyTorch

- Hugging Face Transformers

- Kubernetes

- Cloud ML platforms

Support & Community

- Extensive docs for developers

- Community support on GitHub

#4 — Haystack

Short description: Haystack enables orchestration of LLMs for question-answering, retrieval, and summarization workflows. It is ideal for knowledge-heavy applications requiring robust data connectivity.

Key Features

- RAG pipelines for LLMs

- Document and vector store integrations

- Multi-LLM orchestration

- API endpoints and REST interface

- Logging and analytics

Pros

- Optimized for search and QA tasks

- Flexible integration with data sources

Cons

- Requires configuration for production deployment

- Limited support for non-text workflows

Platforms / Deployment

- Web / Linux / macOS

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Supports:

- Elasticsearch

- Weaviate

- Pinecone

- OpenAI

Support & Community

- Active developer forums

- Tutorials and example repositories

#5 — OpenAI Function Calling

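Short description: OpenAI function calling is an API feature rather than a standalone framework. The model returns a structured request to call a developer-defined function, and the application runs it and passes the result back. This connects LLM responses to external functions and APIs and enables automated multi-step workflows.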
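The application-side half of this flow can be sketched without any network call. The example below assumes the model's tool call has already been reduced to a function name plus a JSON-encoded arguments string; `get_order_status`, `TOOLS`, and `dispatch` are hypothetical names, and the model output is mocked.

```python
import json
from typing import Any, Callable, Dict

def get_order_status(order_id: str) -> Dict[str, Any]:
    """Hypothetical business function the model is allowed to call."""
    return {"order_id": order_id, "status": "shipped"}

# Registry mapping tool names to Python callables
TOOLS: Dict[str, Callable[..., Any]] = {"get_order_status": get_order_status}

def dispatch(tool_call: Dict[str, str]) -> str:
    """Run the requested function and return a JSON string for the model."""
    name = tool_call["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    args = json.loads(tool_call["arguments"])  # arguments arrive as a JSON string
    return json.dumps(TOOLS[name](**args))

# Mocked model output; a real response would come from the API
mock_call = {"name": "get_order_status", "arguments": '{"order_id": "A123"}'}
reply = dispatch(mock_call)
```

In a real integration, `reply` would be sent back to the model as the tool result so it can compose the final answer. Checking the name against a registry keeps the model from triggering functions the application never exposed.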

Key Features

- Structured output management

- API integration and workflow automation

- Multi-turn conversation orchestration

- Prompt engineering support

Pros

- Seamless LLM API orchestration

- Simplifies automation of complex tasks

Cons

- Dependent on OpenAI API availability

- Limited to function-centric workflows

Platforms / Deployment

- Web / Cloud API

- Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Direct API integration

- Webhooks

- Supports custom serverless functions

Support & Community

- Extensive API documentation

- Community examples and SDKs

#6 — vLLM

Short description: vLLM is an open-source engine for high-throughput LLM inference and serving. It focuses on efficient GPU memory management and request batching rather than workflow orchestration, so it typically sits underneath an orchestration layer.

Key Features

- Low-latency LLM orchestration

- Memory-efficient scheduling

- Multi-tenant inference

- Integration with AI frameworks

Pros

- Optimized for large LLMs

- Efficient resource usage

Cons

- Technical setup required

- Limited to inference workloads

Platforms / Deployment

- Linux / Cloud

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- PyTorch

- Hugging Face models

- Kubernetes

Support & Community

- Developer-focused documentation

- GitHub community support

#7 — Metlo AI Orchestrator

Short description: Metlo AI Orchestrator specializes in connecting LLMs to enterprise systems for workflow automation and knowledge management. Suitable for multi-step AI workflows.

Key Features

- Enterprise workflow orchestration

- Multi-model management

- Data source integration

- Logging and analytics

Pros

- Enterprise-ready

- Scales to multiple AI workflows

Cons

- More expensive for small teams

- Limited open-source tooling

Platforms / Deployment

- Web / Linux / Windows

- Cloud / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- REST APIs

- CRM and ERP systems

- Python SDK

Support & Community

- Enterprise support plans

- Technical documentation

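#8 — ReAct Framework

Short description: ReAct (Reasoning and Acting) is an agent pattern in which the model alternates between reasoning steps and tool calls. Frameworks built around it orchestrate reasoning chains, action execution, and tool integration, which suits autonomous AI agent workflows.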
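A reasoning-and-acting loop of this kind can be sketched in a few lines. This is an illustration under stated assumptions, not any particular framework's implementation: `scripted_policy` is a hypothetical stand-in for the LLM, and `calculator` is a toy tool.

```python
from typing import Callable, Dict, List, Tuple

def calculator(expression: str) -> str:
    """Toy tool: adds two integers written as 'a+b'."""
    a, b = expression.split("+")
    return str(int(a) + int(b))

TOOLS: Dict[str, Callable[[str], str]] = {"calculator": calculator}

def scripted_policy(history: List[str]) -> Tuple[str, str, str]:
    """Stand-in for the LLM: returns (thought, action, action_input)."""
    observations = [h for h in history if h.startswith("Observation: ")]
    if not observations:
        return ("I need to add the numbers.", "calculator", "2+3")
    return ("I have the result.", "finish", observations[-1][len("Observation: "):])

def react(question: str, max_steps: int = 5) -> str:
    """Alternate thought, action, and observation until the policy finishes."""
    history = [f"Question: {question}"]
    for _ in range(max_steps):
        thought, action, arg = scripted_policy(history)
        history.append(f"Thought: {thought}")
        if action == "finish":
            return arg
        observation = TOOLS[action](arg)
        history.append(f"Action: {action}[{arg}]")
        history.append(f"Observation: {observation}")
    return "no answer within step limit"

answer = react("What is 2 + 3?")
```

The `max_steps` cap is a deliberate design choice: an agent that never emits a finish action would otherwise loop and keep spending tokens.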

Key Features

- LLM reasoning chains

- Tool and API integration

- Action planning and execution

- Prompt orchestration

Pros

- Powerful agent orchestration

- Supports autonomous workflows

Cons

- Limited UI

- Developer-centric setup

Platforms / Deployment

- Linux / macOS

- Self-hosted / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python SDK

- API integration

- External tool connectors

Support & Community

- Developer documentation

- GitHub examples

#9 — AutoGen AI Framework

Short description: AutoGen orchestrates multi-agent LLM workflows with memory management and tool integration, and is designed for agent-driven AI applications.

Key Features

- Multi-agent orchestration

- Tool integration

- Memory and state tracking

- Workflow templates

Pros

- Facilitates complex AI workflows

- Supports agent collaboration

Cons

- Limited enterprise support

- Learning curve for setup

Platforms / Deployment

- Linux / macOS

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- OpenAI

- APIs for external tools

- Python SDK

Support & Community

- Developer documentation

- Community examples

#10 — LangSmith

Short description: LangSmith, from the team behind LangChain, provides tracing, monitoring, and evaluation for LLM applications, with a focus on building, tracking, and testing multi-step workflows for enterprise use.
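Step-level tracking of a multi-step workflow can be illustrated with a small decorator. This sketch does not use LangSmith's SDK; `traced` and the in-memory `TRACE` list are hypothetical, and both steps are placeholders for model calls.

```python
import functools
import time
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []  # in-memory log; a real system would export this

def traced(name: str) -> Callable:
    """Decorator that records inputs, output, and latency for one step."""
    def wrap(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def inner(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            result = fn(*args, **kwargs)
            TRACE.append({
                "step": name,
                "inputs": {"args": args, "kwargs": kwargs},
                "output": result,
                "seconds": time.perf_counter() - start,
            })
            return result
        return inner
    return wrap

@traced("draft")
def draft(topic: str) -> str:
    return f"Draft about {topic}"  # placeholder for a model call

@traced("review")
def review(text: str) -> str:
    return text.upper()  # placeholder for a second model call

final = review(draft("pricing"))
```

After the run, `TRACE` holds one record per step in execution order, which is the raw material for latency dashboards, regression tests, and audit logs.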

Key Features

- Workflow orchestration

- LLM performance monitoring

- Multi-model support

- Logging and analytics

Pros

- Enterprise-grade monitoring

- Multi-model orchestration

Cons

- Primarily enterprise-focused

- Higher cost for small teams

Platforms / Deployment

- Web / Linux / macOS

- Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- APIs and SDK

- LLM integrations

- External data connectors

Support & Community

- Enterprise support

- Documentation and tutorials

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LangChain | Developer workflows | Web, Windows, macOS, Linux | Cloud, Self-hosted | Multi-LLM orchestration | N/A |
| LlamaIndex | Knowledge-driven apps | Web, Linux, macOS | Cloud, Self-hosted | Data indexing & RAG | N/A |
| MosaicML Composer | Model training orchestration | Linux, macOS | Cloud, Hybrid | Scalable training & inference | N/A |
| Haystack | QA & search | Web, Linux, macOS | Cloud, Self-hosted | RAG pipelines | N/A |
| OpenAI Function Calling | API-driven workflows | Web | Cloud | Structured output automation | N/A |
| vLLM | High-performance inference | Linux | Cloud, Self-hosted | Low-latency orchestration | N/A |
| Metlo AI Orchestrator | Enterprise AI workflows | Web, Linux, Windows | Cloud, Hybrid | Enterprise workflow orchestration | N/A |
| ReAct Framework | Autonomous AI agents | Linux, macOS | Self-hosted, Cloud | LLM reasoning & action chaining | N/A |
| AutoGen AI Framework | Multi-agent orchestration | Linux, macOS | Cloud, Self-hosted | Multi-step agent workflows | N/A |
| LangSmith | Enterprise monitoring | Web, Linux, macOS | Cloud | LLM workflow tracking | N/A |

Evaluation & Scoring of LLM Orchestration Frameworks

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 8 | 9 | 7 | 8 | 8 | 9 | 8.45 |
| LlamaIndex | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.65 |
| MosaicML Composer | 9 | 7 | 8 | 7 | 9 | 7 | 8 | 8.00 |
| Haystack | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.65 |
| OpenAI Function Calling | 7 | 8 | 7 | 7 | 7 | 7 | 8 | 7.30 |
| vLLM | 8 | 7 | 7 | 7 | 9 | 7 | 8 | 7.60 |
| Metlo AI Orchestrator | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7.45 |
| ReAct Framework | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| AutoGen AI Framework | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| LangSmith | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7.45 |

Scoring is comparative across frameworks. Each criterion is scored from 0 to 10, and the weighted total is the sum of each score multiplied by the weight shown in its column header.
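The weighting scheme in the column headers can be expressed as a short calculation. The sketch below uses the stated weights; the `example` scores belong to a hypothetical tool and are not a row from the table.

```python
# Weights taken from the scoring table's column headers
WEIGHTS = {
    "core": 0.25, "ease": 0.15, "integrations": 0.15, "security": 0.10,
    "performance": 0.10, "support": 0.10, "value": 0.15,
}

def weighted_total(scores: dict) -> float:
    """Sum of score times weight over all criteria, rounded to two decimals."""
    if abs(sum(WEIGHTS.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return round(sum(scores[name] * w for name, w in WEIGHTS.items()), 2)

# Hypothetical tool used only to illustrate the arithmetic
example = {"core": 8, "ease": 6, "integrations": 7, "security": 9,
           "performance": 8, "support": 6, "value": 7}
total = weighted_total(example)
```

Teams with different priorities can change the values in `WEIGHTS` (keeping the sum at 1) and recompute the ranking for their own shortlist.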

Which LLM Orchestration Framework Is Right for You?

Solo / Freelancer

- Focus on lightweight, open-source orchestration frameworks like LangChain or LlamaIndex. Ideal for experimental workflows and small-scale multi-model projects.

SMB

- Tools like OpenAI Function Calling or the ReAct Framework provide manageable orchestration with API integrations and moderate scalability.

Mid-Market

- MosaicML Composer or vLLM offers scaling, workflow automation, and multi-model orchestration suitable for mid-size teams handling multiple AI workflows.

Enterprise

- Metlo AI Orchestrator and LangSmith provide enterprise-grade orchestration, monitoring, and compliance features, supporting large-scale, mission-critical LLM deployments.

Budget vs Premium

- Open-source frameworks and API-driven solutions are cost-effective for small teams. Enterprise frameworks offer comprehensive orchestration, monitoring, and support at a higher price point.

Feature Depth vs Ease of Use

- Developers needing deep orchestration features may prefer LangChain, MosaicML Composer, or ReAct. Teams seeking ease of setup may lean toward OpenAI Function Calling or LangSmith.

Integrations & Scalability

- Evaluate frameworks supporting your existing data, APIs, and model workflows. Enterprise frameworks offer robust scaling, while open-source solutions may require additional engineering for large deployments.

Security & Compliance Needs

- Enterprises handling sensitive data should prioritize frameworks with compliance features and audit logging. Open-source frameworks may need additional security configurations.

Frequently Asked Questions (FAQs)

1. What are LLM orchestration frameworks used for?

They manage workflows, chain multiple LLMs, and automate tasks, enabling scalable and reliable AI operations.

2. Can these frameworks integrate multiple LLM providers?

Yes, most support integration with multiple APIs like OpenAI, Hugging Face, and custom models.

3. Are these frameworks suitable for small teams?

Yes, open-source and lightweight frameworks like LangChain are ideal for freelancers and SMBs.

4. Do they offer monitoring features?

Enterprise frameworks like LangSmith provide monitoring, logging, and analytics for performance tracking.

5. How do they handle multimodal AI?

Frameworks such as LangChain and MosaicML Composer can orchestrate workflows combining text, image, and structured data.

6. Are open-source frameworks secure?

Security depends on deployment configuration; enterprise frameworks offer more built-in compliance and audit features.

7. Can they automate customer-facing AI tasks?

Yes, frameworks can automate chatbots, content generation, and multi-step AI workflows.

8. How steep is the learning curve?

Open-source frameworks require programming knowledge; enterprise solutions may offer simplified UIs.

9. Can frameworks reduce LLM usage costs?

Yes. Orchestration frameworks can reduce cost by caching repeated responses, avoiding redundant model calls, and routing simpler requests to cheaper models.
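Caching and routing can be sketched together in plain Python. This is a toy illustration: the two model functions are stubs, the word-count routing rule is an assumption chosen for simplicity, and `CALLS` exists only to show how many model calls were made.

```python
from typing import Callable, Dict

CALLS = {"small": 0, "large": 0}  # counts calls per model, for illustration

def small_model(prompt: str) -> str:
    """Stub for a cheaper model."""
    CALLS["small"] += 1
    return f"small:{prompt}"

def large_model(prompt: str) -> str:
    """Stub for a more capable, more expensive model."""
    CALLS["large"] += 1
    return f"large:{prompt}"

_cache: Dict[str, str] = {}

def route(prompt: str, max_small_words: int = 20) -> Callable[[str], str]:
    """Toy rule: short prompts go to the cheaper model."""
    return small_model if len(prompt.split()) <= max_small_words else large_model

def answer(prompt: str) -> str:
    """Return a cached response when available, otherwise route and store."""
    if prompt in _cache:  # cache hit: no model call is made
        return _cache[prompt]
    result = route(prompt)(prompt)
    _cache[prompt] = result
    return result

answer("What are your opening hours?")
answer("What are your opening hours?")  # served from the cache
answer(" ".join(["word"] * 30))         # long prompt routed to the large model
```

Three requests result in two model calls here. Production routers usually rely on richer signals than prompt length, such as task type or a classifier's confidence, but the structure is the same.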

10. What alternatives exist?

For simple tasks, direct API usage without orchestration is an alternative. For multi-step workflows, orchestration frameworks are recommended.

Conclusion

LLM orchestration frameworks are critical for managing complex, multi-LLM workflows efficiently. Choosing the right framework depends on your team size, workflow complexity, integration requirements, and security needs. Open-source tools provide flexibility and cost-efficiency, while enterprise-grade platforms offer scalability, monitoring, and compliance features. Evaluating features, integrations, ease of use, and support will help organizations select the framework that optimizes their AI operations and accelerates deployment. Start by shortlisting tools, running pilots, and validating integrations and security before full-scale implementation.
