Introduction

Natural Language Processing (NLP) toolkits are software libraries and platforms that let developers and data scientists process, analyze, and understand human language. By combining computational linguistics with machine learning, these toolkits provide capabilities such as text tokenization, entity recognition, sentiment analysis, language modeling, and semantic understanding. NLP has become a cornerstone of AI-driven applications in areas like customer service automation, document processing, content moderation, and AI chatbots.

Use cases include automated customer support with chatbots, sentiment and emotion analysis from social media, information extraction from documents and legal contracts, real-time language translation, and question-answering systems in enterprise knowledge management. Buyers evaluating NLP toolkits should consider model accuracy, language and dialect support, scalability, deployment options, integration with AI/ML pipelines, real-time versus batch processing, API availability, community support, pricing, and compliance with privacy regulations.

Best for: Data scientists, NLP engineers, AI researchers, enterprise development teams, and organizations building AI-driven language applications.

Not ideal for: Teams with minimal text-processing needs, or teams that require only basic keyword extraction without deep NLP capabilities.

Key Trends in NLP Toolkits

- Integration of transformer-based models for higher accuracy

- Support for multiple languages, dialects, and domain-specific vocabularies

- Pretrained models for tasks such as summarization, sentiment analysis, and translation

- Hybrid deployment: cloud, on-premises, and edge processing

- Automated model optimization and tuning

- Support for large-scale text analytics and streaming data

- Interoperability with MLOps pipelines and AI platforms

- Subscription and open-source licensing models

- Enhanced privacy-preserving NLP for sensitive data

- Customization for domain-specific NLP applications

How We Selected These Tools (Methodology)

- Market adoption and recognition in NLP and AI communities

- Completeness and range of NLP features

- Reliability and performance under enterprise workloads

- Security and compliance posture

- Integration with ML pipelines, analytics platforms, and APIs

- Suitability across solo developers, SMBs, mid-market, and enterprise users

- Multi-language and domain adaptability

- Ease of use and onboarding

- Community support and training resources

- Overall cost-effectiveness and licensing flexibility

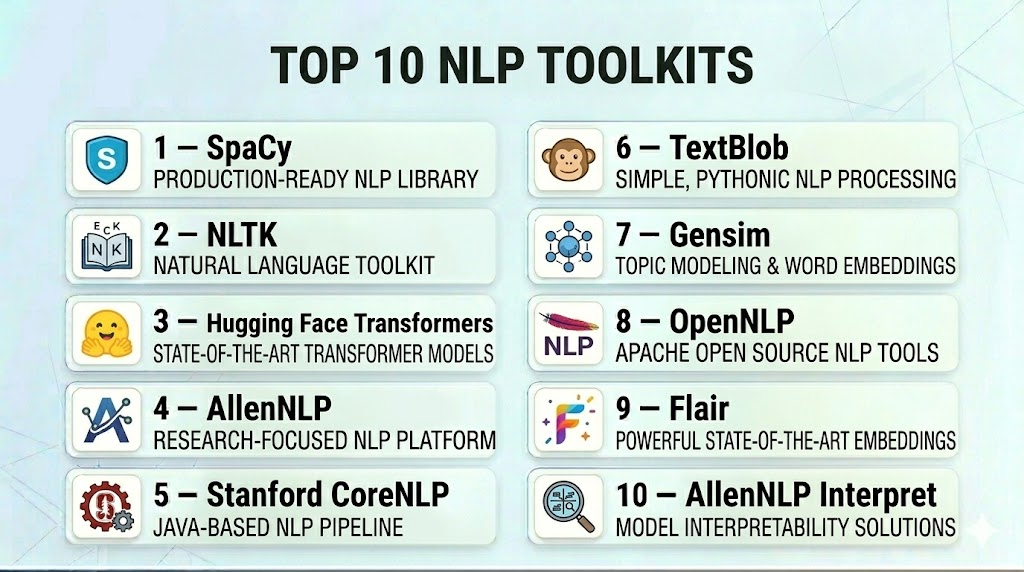

Top 10 NLP Toolkits

#1 — SpaCy

Short description: spaCy is a popular open-source NLP library designed for high-performance text processing. It suits developers and data scientists building production-grade NLP applications.

Key Features

- Tokenization and part-of-speech tagging

- Named entity recognition and dependency parsing

- Pre-trained transformer-based models

- Text classification and similarity analysis

- Language modeling and vector embeddings

- Integration with machine learning frameworks

Pros

- High-speed processing for large datasets

- Well-documented and developer-friendly

- Extensive pre-trained models

Cons

- Primarily Python-based

- Limited support for low-resource languages

- Advanced customization requires ML expertise

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted / Cloud (via integration)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Works with TensorFlow and PyTorch

- API and Python SDK

- Extensible via custom pipelines

Support & Community

Active community, documentation, and tutorials.
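As a rough illustration of the tokenization, entity recognition, and custom-pipeline points above, the following sketch assumes spaCy v3 is installed. It uses a blank English pipeline so that no pre-trained model has to be downloaded, and a rule-based `entity_ruler` component with a made-up `Acme Corp` pattern stands in for a trained NER model.

```python
import spacy

# Blank English pipeline: tokenizer only, no model download required.
nlp = spacy.blank("en")

# Add a rule-based entity component; the pattern below is a hypothetical example.
ruler = nlp.add_pipe("entity_ruler")
ruler.add_patterns([{"label": "ORG", "pattern": "Acme Corp"}])

doc = nlp("Acme Corp opened an office in Berlin.")

# Collect token texts and the entities found by the ruler.
tokens = [token.text for token in doc]
entities = [(ent.text, ent.label_) for ent in doc.ents]

print(tokens)
print(entities)
```

Loading a pre-trained pipeline such as `en_core_web_sm` with `spacy.load` would add statistical tagging, parsing, and NER, at the cost of a separate model download.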

#2 — NLTK

Short description: The Natural Language Toolkit (NLTK) is a comprehensive Python library for text processing and NLP research, ideal for education, prototyping, and lightweight applications.

Key Features

- Tokenization, stemming, and lemmatization

- Text classification and tagging

- Support for corpora and datasets

- Regular expression parsing

- Language modeling and feature extraction

Pros

- Extensive NLP resources for research

- Open-source and free

- Rich tutorials and educational support

Cons

- Slower performance on large datasets

- Limited modern deep learning models

- Less suitable for production-grade applications

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python integration

- Supports custom ML pipelines

Support & Community

Extensive academic community, documentation, and forums.
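A minimal sketch of the tokenization and stemming features listed above, assuming NLTK is installed. It deliberately sticks to `wordpunct_tokenize` and `PorterStemmer`, which work without downloading any NLTK corpora; the sample sentence is made up for illustration.

```python
from nltk.stem import PorterStemmer
from nltk.tokenize import wordpunct_tokenize

text = "The runners were running quickly."

# Regex-based tokenizer: splits on word characters and punctuation runs.
tokens = wordpunct_tokenize(text)

# Porter stemming reduces inflected forms to a common stem.
stemmer = PorterStemmer()
stems = [stemmer.stem(token) for token in tokens]

for token, stem in zip(tokens, stems):
    print(f"{token} -> {stem}")
```

Lemmatization and the statistical taggers need additional data packages fetched through `nltk.download`, which is one reason NLTK is more common in teaching and prototyping than in packaged production services.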

#3 — Hugging Face Transformers

Short description: Hugging Face Transformers is a leading library for state-of-the-art transformer models. It is best suited to developers building advanced NLP applications with pre-trained models like BERT, GPT, and RoBERTa.

Key Features

- Pre-trained transformer models

- Fine-tuning for custom NLP tasks

- Tokenization and embeddings

- Multi-language support

- API for real-time inference

- Integration with deep learning frameworks

Pros

- Cutting-edge model support

- Easy fine-tuning and deployment

- Active research and community updates

Cons

- Requires GPU for optimal performance

- Steeper learning curve for beginners

- Large-scale deployments often need dedicated GPU infrastructure, commonly cloud-hosted

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- TensorFlow and PyTorch integration

- Python SDK and API

- Extensible for custom ML pipelines

Support & Community

Active developer community, tutorials, and model hub.
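To make the "pre-trained models" point concrete, here is a hedged sketch of sentiment classification with the library's `pipeline` helper. It assumes `transformers` and a backend such as PyTorch are installed; the model name is one publicly available checkpoint chosen for illustration, and `get_classifier` and `classify_sentiment` are hypothetical helper names, not part of the library.

```python
from functools import lru_cache

from transformers import pipeline

# Assumed checkpoint for illustration; any sentiment model from the hub could be used.
MODEL_NAME = "distilbert-base-uncased-finetuned-sst-2-english"


@lru_cache(maxsize=1)
def get_classifier():
    """Build the pipeline once; the first call downloads the model weights."""
    return pipeline("sentiment-analysis", model=MODEL_NAME)


def classify_sentiment(texts):
    """Return one {'label': ..., 'score': ...} dict per input text."""
    return get_classifier()(list(texts))
```

Calling `classify_sentiment(["The onboarding was painless."])` returns a list with one dict per input, each carrying a `label` and a `score`. Building the pipeline lazily keeps import time low, which matters when the function sits behind a request handler.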

#4 — AllenNLP

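Short description: AllenNLP is an open-source NLP research library designed for building deep learning models for text understanding and semantic analysis. Its maintainers placed the project in maintenance mode in 2022, so it is best evaluated for existing codebases and research reproduction rather than new long-lived projects.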

Key Features

- Pre-built modules for text classification

- Named entity recognition and semantic role labeling

- Integration with PyTorch

- Model interpretability tools

- Extensible experiment pipelines

Pros

- Research-focused and flexible

- Deep learning integration

- Modular and extensible

Cons

- Requires PyTorch knowledge

- Limited pre-trained production-ready models

- Steeper learning curve

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- PyTorch integration

- Python API and SDK

- Custom pipelines and experiments

Support & Community

Documentation, research community, and tutorials.

#5 — Stanford CoreNLP

Short description: CoreNLP is a Java-based NLP toolkit providing robust syntactic, semantic, and entity analysis, ideal for enterprise and research applications.

Key Features

- POS tagging, parsing, and lemmatization

- Named entity recognition and coreference resolution

- Sentiment analysis

- Multi-language support

- REST API for integration

Pros

- Enterprise-grade robustness

- Multi-language NLP capabilities

- Widely adopted in research

Cons

- Java dependency

- Higher memory usage

- Limited deep learning integration

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- REST API

- Python wrapper (Stanza, formerly StanfordNLP)

- Integration with ML pipelines

Support & Community

Active research community and academic support.

#6 — TextBlob

Short description: TextBlob is a Python library for simple NLP tasks, including sentiment analysis, part-of-speech tagging, and translation.

Key Features

- Tokenization and POS tagging

- Sentiment analysis

- Noun phrase extraction

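- Language translation (relied on an external service; deprecated and removed in recent releases, so check the version you install)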

- Simple API for rapid prototyping

Pros

- Easy to use and beginner-friendly

- Quick integration for simple NLP tasks

- Lightweight

Cons

- Limited performance for large datasets

- Fewer advanced NLP features

- Not ideal for production-grade deep learning models

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python API

- Compatible with NLTK and other libraries

Support & Community

Documentation and community support.

#7 — Gensim

Short description: Gensim is a Python library specialized in topic modeling and document similarity using vector space representations.

Key Features

- Topic modeling (LDA, LSI)

- Word embeddings (Word2Vec, FastText)

- Document similarity analysis

- Streaming and scalable processing

- Integration with ML workflows

Pros

- Scalable for large text corpora

- Efficient topic modeling

- Well-documented

Cons

- Focused on unsupervised models

- Limited deep NLP features

- Requires Python knowledge

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python API

- Integration with ML and analytics pipelines

Support & Community

Documentation and active community.

#8 — OpenNLP

Short description: Apache OpenNLP is a Java-based NLP library offering tokenization, sentence detection, part-of-speech tagging, and entity extraction.

Key Features

- Tokenization, POS tagging, and parsing

- Named entity recognition

- Document categorization

- Language detection

- Model training and evaluation

Pros

- Open-source and free

- Java ecosystem integration

- Modular and extensible

Cons

- Java dependency

- Less active development

- Limited deep learning support

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Java API

- Integration with analytics pipelines

Support & Community

Documentation and community forums.

#9 — Flair

Short description: Flair is a Python-based NLP library focused on sequence labeling, named entity recognition, and embeddings.

Key Features

- State-of-the-art embeddings (BERT, ELMo)

- Named entity recognition

- Text classification

- Multi-language support

- Sequence tagging

Pros

- Supports modern embeddings

- Flexible and modular

- Good for deep NLP tasks

Cons

- Requires deep learning knowledge

- GPU recommended for large datasets

- Limited on low-resource languages

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python API

- Integration with PyTorch and ML pipelines

Support & Community

Documentation and community support.

#10 — AllenNLP Interpret

Short description: AllenNLP Interpret is an extension of AllenNLP focusing on interpretability and explainability of NLP models.

Key Features

- Saliency mapping for models

- Token-level attribution

- Supports classification and sequence tasks

- Integration with AllenNLP models

- Visualization tools

Pros

- Enhances model transparency

- Supports debugging and evaluation

- Research-oriented

Cons

- Requires AllenNLP knowledge

- Limited standalone NLP capabilities

- Primarily for interpretability, not production NLP

Platforms / Deployment

- Web / Linux / Windows / macOS

- Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python API

- Integration with AllenNLP and PyTorch pipelines

Support & Community

Documentation, tutorials, and research community.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| spaCy | Production-grade NLP | Web/Linux/Windows/macOS | Self-hosted/Cloud | Speed and transformer models | N/A |
| NLTK | Education & prototyping | Web/Linux/Windows/macOS | Self-hosted | Comprehensive NLP resources | N/A |
| Hugging Face Transformers | Advanced NLP research | Web/Linux/Windows/macOS | Self-hosted/Cloud | Pre-trained transformer models | N/A |
| AllenNLP | Deep NLP modeling | Web/Linux/Windows/macOS | Self-hosted | Modular research framework | N/A |
| Stanford CoreNLP | Enterprise & research | Web/Linux/Windows/macOS | Self-hosted | Multi-language NLP | N/A |
| TextBlob | Quick NLP tasks | Web/Linux/Windows/macOS | Self-hosted | Simple API | N/A |
| Gensim | Topic modeling & similarity | Web/Linux/Windows/macOS | Self-hosted | Scalable vector space modeling | N/A |
| OpenNLP | Java NLP integration | Web/Linux/Windows/macOS | Self-hosted | Java ecosystem compatibility | N/A |
| Flair | Sequence labeling & embeddings | Web/Linux/Windows/macOS | Self-hosted | Modern embeddings support | N/A |
| AllenNLP Interpret | Model interpretability | Web/Linux/Windows/macOS | Self-hosted | Explainable NLP models | N/A |

Evaluation & Scoring of NLP Toolkits

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| spaCy | 9 | 8 | 8 | 7 | 9 | 8 | 8 | 8.25 |
| NLTK | 8 | 7 | 7 | 7 | 7 | 7 | 8 | 7.40 |
| Hugging Face Transformers | 9 | 8 | 8 | 7 | 9 | 8 | 8 | 8.25 |
| AllenNLP | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| Stanford CoreNLP | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| TextBlob | 7 | 8 | 7 | 7 | 7 | 7 | 7 | 7.15 |
| Gensim | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.35 |
| OpenNLP | 7 | 7 | 7 | 7 | 7 | 7 | 7 | 7.00 |
| Flair | 9 | 7 | 8 | 7 | 8 | 7 | 7 | 7.75 |
| AllenNLP Interpret | 7 | 7 | 7 | 7 | 7 | 7 | 7 | 7.00 |

Scores are comparative across core NLP capabilities, ease of use, integrations, security, performance, support, and value. The weighted total is the sum of each score multiplied by its column weight.
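For readers who want to check the totals or apply their own weights, the calculation can be sketched as follows. The weights are the percentages from the table header; the function name `weighted_total` and the dictionary keys are illustrative choices, not part of any toolkit.

```python
# Column weights from the scoring table header, in percent.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores):
    """Sum of score * weight over all criteria, with weights given in percent."""
    if sum(WEIGHTS.values()) != 100:
        raise ValueError("weights must sum to 100 percent")
    return sum(scores[name] * weight for name, weight in WEIGHTS.items()) / 100


# Rows copied from the table above.
spacy_scores = {"core": 9, "ease": 8, "integrations": 8, "security": 7,
                "performance": 9, "support": 8, "value": 8}
opennlp_scores = {name: 7 for name in WEIGHTS}

print(weighted_total(spacy_scores))
print(weighted_total(opennlp_scores))
```

Keeping the weights as integer percentages and dividing once at the end avoids accumulating floating-point error. A team that cares more about security than ease of use can change the two weights and re-rank the tools.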

Which NLP Toolkit Is Right for You?

Solo / Freelancer

TextBlob or Gensim for lightweight and fast prototyping.

SMB

spaCy or Hugging Face Transformers for scalable NLP with modern models.

Mid-Market

AllenNLP, Flair, or Stanford CoreNLP for larger NLP projects that need customization.

Enterprise

spaCy, Hugging Face Transformers, and AllenNLP Interpret for deep NLP, multi-language support, and model interpretability.

Budget vs Premium

Open-source NLP toolkits are cost-effective, with most of the cost falling on compute and engineering time. Commercial or managed offerings built around them trade licence or usage fees for support and scalability.

Feature Depth vs Ease of Use

TextBlob and NLTK are beginner-friendly, while Hugging Face Transformers and spaCy offer extensive features for complex NLP applications.

Integrations & Scalability

Cloud-native or self-hosted toolkits integrate with ML pipelines, analytics platforms, and AI applications.

Security & Compliance Needs

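None of the libraries listed here publicly states a compliance posture. For self-hosted deployments, encryption, access control, and regulatory compliance depend on the environment the toolkit runs in.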

Frequently Asked Questions (FAQs)

1. What pricing models exist?

Open-source tools are free; enterprise solutions often have subscription-based or usage-based pricing.

2. How fast is onboarding?

Open-source libraries can be installed and used immediately; enterprise options may require setup and integration.

3. Do these toolkits support multiple languages?

Many toolkits support multiple languages, which is crucial for global applications. Coverage varies by toolkit and is usually weaker for low-resource languages.

4. Can they integrate with ML pipelines?

Yes, APIs, SDKs, and Python libraries facilitate integration with analytics and ML workflows.

5. Are real-time NLP operations possible?

Yes, spaCy, Hugging Face Transformers, and other modern toolkits can handle streaming and real-time processing, although latency depends on model size and hardware.

6. Do these toolkits support pre-trained models?

Most include pre-trained models for sentiment, classification, and entity recognition.

7. Can NLP outputs be exported?

Structured outputs can be integrated with BI, CRM, or data storage systems.

8. Are these toolkits suitable for research?

NLTK, AllenNLP, and Hugging Face are widely used in NLP research and academic projects.

9. Are GPU resources required?

Not always. Transformer-based models, such as those served through Hugging Face Transformers, benefit from GPU acceleration, while lighter libraries such as NLTK and TextBlob run well on CPU.

10. Can these tools be customized?

Yes, spaCy, Hugging Face Transformers, and AllenNLP allow custom training and fine-tuning for domain-specific tasks.

Conclusion

Natural Language Processing toolkits are essential for organizations seeking to leverage textual data for AI, analytics, and automation. Libraries like NLTK, TextBlob, and Gensim offer flexibility for research and prototyping, while spaCy and Hugging Face Transformers provide production-oriented capabilities with modern transformer-based models. Choosing the right toolkit depends on team size, deployment needs, data complexity, and integration requirements. Pilot projects on representative data help confirm that a toolkit fits before committing to it.
