Introduction

Batch processing frameworks are specialized platforms designed to process large volumes of data in scheduled or grouped operations, rather than in real-time streams. These frameworks allow organizations to handle repetitive, high-volume tasks efficiently, ensuring data consistency and reliability across systems. They are critical in scenarios where large datasets need aggregation, transformation, or scheduled reporting, rather than immediate action.

Real-world use cases include financial reconciliation, payroll processing, ETL jobs for data warehouses, large-scale report generation, and scheduled updates to CRM or ERP systems. Buyers evaluating batch processing frameworks should consider scalability, fault tolerance, ease of deployment, integration capabilities, monitoring and alerting, job orchestration, security and compliance, resource optimization, and total cost of ownership.

Best for: Data engineers, IT operations teams, analytics departments, and organizations handling predictable, large-scale data workloads across SMBs, mid-market, and enterprises.

Not ideal for: Businesses requiring low-latency or real-time processing. Stream processing frameworks or real-time analytics platforms may be better suited.

Key Trends in Batch Processing Frameworks

- Integration with hybrid and multi-cloud platforms for flexible deployment

- AI and ML-driven optimization of batch jobs and resource allocation

- Improved automation for job scheduling and orchestration

- Containerized and Kubernetes-based deployment models

- Enhanced observability, monitoring, and alerting features

- Serverless batch execution for reduced operational overhead

- Integration with modern data lakes, warehouses, and ETL tools

- Compliance-ready frameworks supporting GDPR, SOC 2, and HIPAA

- Self-service batch processing for business users

- Pay-per-use or consumption-based pricing models

How We Selected These Tools (Methodology)

- Evaluated market adoption and overall mindshare

- Assessed feature completeness and batch capabilities

- Verified reliability and performance through benchmarks

- Reviewed security posture including encryption, RBAC, and compliance

- Examined integrations with BI, ETL, and storage systems

- Checked compatibility with various programming languages

- Evaluated ease of deployment and operational management

- Considered monitoring, observability, and logging support

- Tested suitability across SMB, mid-market, and enterprise environments

- Compared total cost of ownership versus capabilities

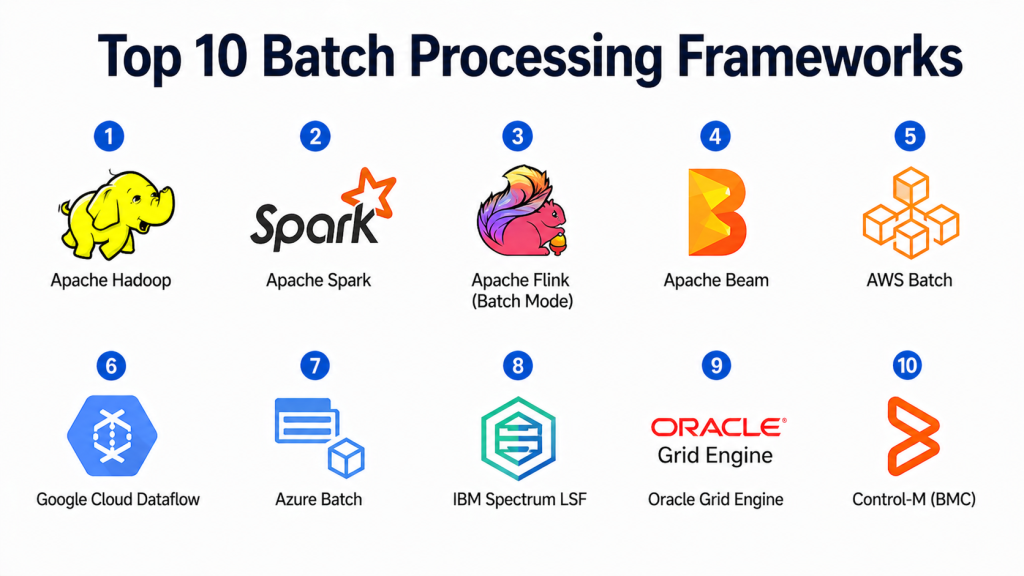

Top 10 Batch Processing Frameworks

#1 — Apache Hadoop

Short description: Apache Hadoop is an open-source distributed framework for storing and processing large-scale datasets. It is designed for reliable batch processing across clusters of commodity hardware, commonly used in data warehouses, analytics pipelines, and large-scale ETL operations.

Key Features

- HDFS for distributed storage

- MapReduce batch processing

- Fault-tolerant and highly available

- Integration with Hive, Pig, Spark

- Scalability across thousands of nodes

- Java APIs, plus other languages (such as Python) via Hadoop Streaming

- Resource management via YARN
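
The MapReduce model listed above splits a job into three steps. A map phase emits key/value pairs, a shuffle groups the values by key, and a reduce phase aggregates each group. Below is a minimal single-process Python sketch of that data flow. It does not use Hadoop's API. On a real cluster, each phase runs in parallel across nodes and the shuffle moves data over the network.

```python
from collections import defaultdict
from typing import Iterable, Iterator


def map_phase(lines: Iterable[str]) -> Iterator[tuple[str, int]]:
    # Map: emit (word, 1) for every word in every input record.
    for line in lines:
        for word in line.lower().split():
            yield word, 1


def shuffle_phase(pairs: Iterable[tuple[str, int]]) -> dict[str, list[int]]:
    # Shuffle: group all emitted values by key, as the framework does between phases.
    groups: dict[str, list[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(groups)


def reduce_phase(groups: dict[str, list[int]]) -> dict[str, int]:
    # Reduce: aggregate each key's values independently (parallelizable per key).
    return {key: sum(values) for key, values in groups.items()}


def word_count(lines: Iterable[str]) -> dict[str, int]:
    return reduce_phase(shuffle_phase(map_phase(lines)))


if __name__ == "__main__":
    print(word_count(["the quick brown fox", "the lazy dog", "The end"]))
```

Each key is reduced independently of the others. That independence is what allows Hadoop to spread the reduce work across many machines.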

Pros

- Handles very large datasets efficiently

- Mature ecosystem with strong community support

Cons

- Complex setup and cluster management

- Slower than in-memory alternatives

Platforms / Deployment

- Linux / macOS / Windows

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Kerberos authentication

- Not publicly stated

Integrations & Ecosystem

- Hive, Pig, Spark, HBase

- S3, cloud storage, Hadoop connectors

- APIs for custom batch workflows

Support & Community

- Strong open-source community and enterprise support options

#2 — Apache Spark

Short description: Apache Spark is an open-source unified analytics engine that supports high-speed batch processing using in-memory computing. It is used for ETL pipelines, analytics workflows, and machine learning applications, providing a unified framework for batch and streaming workloads.

Key Features

- In-memory batch processing for speed

- Supports SQL, Python, Scala, and R

- Integration with Spark MLlib for machine learning

- Fault-tolerant and scalable across clusters

- Hadoop ecosystem integration

- Rich APIs for complex transformations

- DAG-based scheduling of stages and tasks within an application
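
Spark's speed advantage is largest for iterative jobs that reuse the same dataset. Spark can keep that dataset in memory, whereas chained MapReduce jobs typically write intermediate results to storage and read them back on each pass. The pure-Python sketch below models only that difference, by counting reads. `Storage` and `load_dataset` are hypothetical stand-ins for a storage scan. In PySpark, the comparable step is calling `cache()` or `persist()` on a DataFrame or RDD.

```python
from typing import Optional


class Storage:
    """Hypothetical storage layer that counts how many times the dataset is scanned."""

    def __init__(self, data: list[float]) -> None:
        self._data = data
        self.reads = 0

    def load_dataset(self) -> list[float]:
        # Each call models a full scan from disk or object storage.
        self.reads += 1
        return list(self._data)


def iterate(storage: Storage, steps: int, cached: bool) -> float:
    """Toy iterative job: move an estimate halfway toward the data mean on each pass."""
    cache: Optional[list[float]] = storage.load_dataset() if cached else None
    estimate = 0.0
    for _ in range(steps):
        # Reuse the in-memory copy when cached; otherwise rescan storage every pass.
        data = cache if cache is not None else storage.load_dataset()
        mean = sum(data) / len(data)
        estimate += 0.5 * (mean - estimate)
    return estimate


if __name__ == "__main__":
    for cached in (False, True):
        store = Storage([1.0, 2.0, 3.0])
        result = iterate(store, steps=10, cached=cached)
        print(f"cached={cached}: estimate={result:.4f}, storage reads={store.reads}")
```

Both runs compute the same answer. The only difference is the number of storage scans: one per iteration without caching, and one in total with caching.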

Pros

- Often much faster than MapReduce, especially for iterative and multi-stage jobs that reuse data held in memory

- Unified framework for batch and analytics

Cons

- Resource-intensive

- Requires performance tuning for optimal throughput

Platforms / Deployment

- Linux / macOS / Windows

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Kerberos, SSL/TLS support

- Not publicly stated

Integrations & Ecosystem

- Hadoop, Hive, Kafka, HBase

- APIs and SDKs for custom batch tasks

Support & Community

- Strong open-source community with enterprise support options

#3 — Apache Flink (Batch Mode)

Short description: Apache Flink is a stream-processing framework that also supports batch processing with a unified API. Its batch mode enables high-throughput, parallel data processing across distributed systems.

Key Features

- Unified batch and stream APIs

- Fault tolerance via checkpointing in streaming mode and task re-execution in batch mode

- Advanced windowing and data transformations

- Integration with Hadoop and cloud storage

- High throughput and low latency

- Event-time processing for accuracy
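
Event-time windowing groups records by the timestamp embedded in each record rather than by arrival time. A record that arrives late or out of order therefore still lands in the correct window. Below is a minimal Python sketch of a tumbling (fixed-size, non-overlapping) event-time window count over a bounded input, which is the batch case. It does not use Flink's API. It also omits watermarks, which Flink uses on unbounded streams to decide when a window is complete.

```python
from collections import Counter
from typing import Iterable


def tumbling_window_counts(
    events: Iterable[tuple[int, str]], window_size: int
) -> dict[tuple[int, str], int]:
    """Count events per (window_start, key) using each event's own timestamp.

    events: (event_time_seconds, key) pairs, in any arrival order.
    window_size: window length in seconds.
    """
    if window_size <= 0:
        raise ValueError("window_size must be positive")
    counts: Counter = Counter()
    for event_time, key in events:
        # Align the timestamp down to the start of its window.
        window_start = event_time - (event_time % window_size)
        counts[(window_start, key)] += 1
    return dict(counts)


if __name__ == "__main__":
    # The event at t=59 arrives after t=12 but is still counted in window [0, 60).
    events = [(5, "click"), (61, "click"), (12, "view"), (59, "click")]
    print(tumbling_window_counts(events, window_size=60))
```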

Pros

- Flexible for batch and streaming use cases

- Strong state management and fault recovery

Cons

- Steep learning curve

- Cluster configuration can be complex

Platforms / Deployment

- Linux / macOS / Windows

- Cloud / Self-hosted / Hybrid

Security & Compliance

- SSL/TLS encryption, Kerberos authentication

- Not publicly stated

Integrations & Ecosystem

- Kafka, HDFS, cloud storage

- APIs for custom batch and streaming jobs

Support & Community

- Active open-source community with documentation

#4 — Apache Beam

Short description: Apache Beam is a unified programming model for batch and stream processing. It allows users to write portable pipelines that run across multiple execution engines, including Spark, Flink, and Google Cloud Dataflow.

Key Features

- Multi-runner support for batch and streaming

- Windowing and trigger-based data processing

- SDKs for Java, Python, Go

- Portable and flexible execution

- Integration with cloud and on-prem resources
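
Beam's portability comes from separating the pipeline definition from the runner that executes it. The sketch below models that idea in plain Python. A pipeline is a list of element-wise transforms, similar in spirit to Beam's `Map`. Two hypothetical runners, one sequential and one using a thread pool, execute the same definition and produce the same result. This illustrates the concept only. Real pipelines use the `apache_beam` SDK, and runners such as Spark, Flink, or Dataflow add distribution, fault tolerance, and optimization.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import partial
from typing import Any, Callable, Sequence

Transform = Callable[[Any], Any]


def apply_all(transforms: Sequence[Transform], element: Any) -> Any:
    # Apply each transform in order to one element.
    for transform in transforms:
        element = transform(element)
    return element


def sequential_runner(transforms: Sequence[Transform], data: Sequence[Any]) -> list:
    # Hypothetical runner 1: process elements one after another.
    return [apply_all(transforms, x) for x in data]


def threaded_runner(transforms: Sequence[Transform], data: Sequence[Any], workers: int = 4) -> list:
    # Hypothetical runner 2: process elements concurrently; map() preserves input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(partial(apply_all, transforms), data))


# One pipeline definition, independent of how it is executed.
pipeline: list = [str.strip, str.upper, len]

if __name__ == "__main__":
    data = ["  a ", "bb", " ccc"]
    print(sequential_runner(pipeline, data), threaded_runner(pipeline, data))
```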

Pros

- Highly portable pipelines

- Supports multiple runners and environments

Cons

- Dependent on runner for performance

- Learning curve for complex workflows

Platforms / Deployment

- Linux / macOS / Windows

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Varies by runner

- Not publicly stated

Integrations & Ecosystem

- Hadoop, Spark, Flink

- Cloud storage and message queues

- SDKs for custom batch operations

Support & Community

- Active Apache community with documentation

#5 — AWS Batch

Short description: AWS Batch is a fully managed service that automates batch processing on Amazon Web Services. It provisions and scales compute resources automatically to efficiently run batch workloads.

Key Features

- Serverless execution on AWS Fargate, or managed Amazon EC2 compute environments

- Dynamic resource provisioning

- Integration with S3, RDS, DynamoDB

- Job queues, dependencies, and scheduling

- Autoscaling and cost optimization
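
AWS Batch lets a job declare dependencies on other jobs through the `dependsOn` parameter of the SubmitJob API. A dependent job starts only after the jobs it depends on succeed. The sketch below shows the ordering those dependencies imply, using Python's standard `graphlib` module (Python 3.9+). The job names are hypothetical, and this is a local model, not a call to AWS.

```python
from graphlib import TopologicalSorter

# Hypothetical nightly pipeline: each job maps to the set of jobs it depends on.
jobs: dict = {
    "extract_orders": set(),
    "extract_customers": set(),
    "transform_sales": {"extract_orders", "extract_customers"},
    "load_warehouse": {"transform_sales"},
    "build_report": {"load_warehouse"},
}


def execution_waves(graph: dict) -> list:
    """Group jobs into waves; jobs in the same wave have no unmet dependencies
    and could run in parallel. Raises graphlib.CycleError on circular dependencies."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for deterministic output
        waves.append(ready)
        sorter.done(*ready)  # assume every job in the wave succeeded
    return waves


if __name__ == "__main__":
    for i, wave in enumerate(execution_waves(jobs), start=1):
        print(f"wave {i}: {wave}")
```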

Pros

- Fully managed, reduces operational overhead

- Scales automatically based on workload

Cons

- Tied to AWS ecosystem

- Less control compared to open-source frameworks

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- IAM, encryption, audit logs

- SOC 2, ISO 27001, GDPR

Integrations & Ecosystem

- S3, RDS, Lambda

- API for job orchestration

Support & Community

- AWS support tiers, active forums

#6 — Google Cloud Dataflow

Short description: Dataflow is a serverless batch and stream processing service using Apache Beam. It offers autoscaling, high reliability, and integration with Google Cloud storage and analytics tools.

Key Features

- Unified batch and streaming API

- Serverless autoscaling

- Event-time processing and windowing

- Integration with BigQuery, Pub/Sub, Cloud Storage

- Built-in monitoring and logging
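
Autoscaling services size the worker pool to match the pending work. Dataflow's actual autoscaling algorithm is internal to the service. The sketch below is only a simplified, hypothetical heuristic that shows the basic trade-off. It estimates how many workers are needed to clear the backlog within a target time, then clamps that number to a floor and a cap (Dataflow lets you cap the worker count, for example). All parameter names are illustrative.

```python
import math


def target_workers(
    backlog_records: int,
    records_per_worker_per_sec: float,
    target_seconds: float,
    min_workers: int = 1,
    max_workers: int = 100,
) -> int:
    """Hypothetical autoscaling heuristic, not Dataflow's real algorithm."""
    if records_per_worker_per_sec <= 0 or target_seconds <= 0:
        raise ValueError("throughput and target time must be positive")
    # Smallest worker count such that backlog / (workers * throughput) <= target_seconds.
    needed = math.ceil(backlog_records / (records_per_worker_per_sec * target_seconds))
    # Never scale below the floor or above the cap (the cap also bounds cost).
    return max(min_workers, min(max_workers, needed))


if __name__ == "__main__":
    for backlog in (0, 1_200_000, 10_000_000):
        print(backlog, target_workers(backlog, records_per_worker_per_sec=1000, target_seconds=60))
```

The cap explains the "costs scale with usage" caveat. Without a cap, a large backlog translates directly into more workers and a larger bill.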

Pros

- Fully managed serverless service

- Easy integration with Google Cloud ecosystem

Cons

- Dependent on Beam SDK

- Costs rise with worker usage, so large or inefficient jobs can become expensive

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- IAM, audit logging, encryption

- SOC 2, GDPR

Integrations & Ecosystem

- BigQuery, Pub/Sub, Cloud Storage

- APIs and SDKs

Support & Community

- Google Cloud support and developer forums

#7 — Azure Batch

Short description: Azure Batch is a managed batch service designed for high-performance computing and large-scale job execution. It automatically provisions compute resources and manages workload distribution.

Key Features

- Job scheduling and orchestration

- Autoscaling compute nodes

- Integration with Azure storage and databases

- Containerized job support

- Monitoring and logging
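
Azure Batch distributes a job by splitting it into many independent tasks and scheduling them across the compute nodes of a pool. The sketch below models that fan-out/fan-in pattern locally. A hypothetical list of text chunks stands in for input files, and a thread pool stands in for the pool's nodes. Results are gathered as tasks finish. It does not use the Azure Batch SDK.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def process_chunk(task_id: int, text: str) -> tuple:
    # Hypothetical task body: a real task might download a file, run a tool, and upload output.
    return task_id, len(text.split())


def run_job(chunks: list, nodes: int = 3) -> dict:
    results = {}
    # Fan out: one task per input chunk, scheduled onto a fixed number of "nodes".
    with ThreadPoolExecutor(max_workers=nodes) as pool:
        futures = [pool.submit(process_chunk, i, text) for i, text in enumerate(chunks)]
        # Fan in: collect results as tasks complete (completion order is not guaranteed).
        for future in as_completed(futures):
            task_id, words = future.result()  # re-raises if the task failed
            results[task_id] = words
    return results


if __name__ == "__main__":
    per_task = run_job(["a b", "c", "d e f"])
    print(per_task, "total:", sum(per_task.values()))
```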

Pros

- Fully managed and scalable

- Suitable for HPC and large datasets

Cons

- Azure-specific ecosystem

- Less flexible than open-source options

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- Microsoft Entra ID (formerly Azure AD), RBAC, encryption

- ISO 27001, SOC 2, GDPR

Integrations & Ecosystem

- Blob Storage, SQL, Data Lake

- REST API and SDKs

Support & Community

- Microsoft support and documentation

#8 — IBM Spectrum LSF

Short description: IBM Spectrum LSF is an enterprise-grade batch workload management system. It supports high-performance computing clusters and hybrid deployments for complex batch processing workflows.

Key Features

- Job scheduling and queuing

- Resource allocation and optimization

- Integration with HPC and cloud resources

- Runs jobs written in any language (any executable or script)

- Monitoring and reporting
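
In a workload manager such as LSF, resource allocation means matching each job's resource requirements, for example cores and memory, against hosts with free capacity. The sketch below is a deliberately simplified first-fit placement in Python. The hosts, jobs, and fields are hypothetical. LSF's real scheduler also weighs queues, priorities, fairshare policies, and host load.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Host:
    name: str
    free_cores: int
    free_mem_gb: int


@dataclass
class Job:
    name: str
    cores: int
    mem_gb: int


def first_fit(jobs: list, hosts: list) -> dict:
    """Place each job on the first host with enough free cores and memory.

    Returns job name -> host name, or None if the job must stay pending.
    Host capacities are decremented in place as jobs are placed.
    """
    placement: dict = {}
    for job in jobs:
        chosen: Optional[str] = None
        for host in hosts:
            if host.free_cores >= job.cores and host.free_mem_gb >= job.mem_gb:
                host.free_cores -= job.cores
                host.free_mem_gb -= job.mem_gb
                chosen = host.name
                break
        placement[job.name] = chosen
    return placement


if __name__ == "__main__":
    hosts = [Host("h1", 8, 32), Host("h2", 16, 64)]
    jobs = [Job("a", 4, 16), Job("b", 8, 16), Job("c", 4, 16), Job("d", 32, 8)]
    print(first_fit(jobs, hosts))
```

First-fit is simple but can fragment capacity. Production schedulers therefore use richer placement and ordering policies.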

Pros

- Enterprise-ready and stable

- Optimized for HPC workloads

Cons

- Licensing cost is high

- Setup and configuration complexity

Platforms / Deployment

- Linux / Windows

- Cloud / Self-hosted / Hybrid

Security & Compliance

- LDAP, RBAC

- Not publicly stated

Integrations & Ecosystem

- HPC clusters, cloud storage

- APIs for automation

Support & Community

- IBM enterprise support

#9 — Oracle Grid Engine

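Short description: Oracle Grid Engine (originally Sun Grid Engine) is a distributed batch scheduling platform that handles large-scale workload distribution with priority-based scheduling and monitoring. Oracle has since stopped developing it. The code base continues commercially as Altair Grid Engine (formerly Univa Grid Engine) and in community forks such as Son of Grid Engine.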

Key Features

- Job submission and queuing

- Priority-based scheduling

- Resource allocation and monitoring

- Runs jobs written in any language (any executable or script)

- Integration with Oracle DB
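
Priority-based scheduling dispatches the highest-priority pending job first. Among jobs of equal priority, it dispatches the one submitted earliest. A binary heap is a common way to implement that ordering. The Python sketch below uses `heapq` with a submission counter as a tie-breaker. It is a conceptual model with hypothetical job names. Grid Engine's actual scheduler combines several policies into a job's final priority.

```python
import heapq
import itertools


class PriorityJobQueue:
    """Pending jobs ordered by priority (higher first), then submission order (FIFO)."""

    def __init__(self) -> None:
        self._heap: list = []
        self._counter = itertools.count()  # monotonically increasing submission number

    def submit(self, job: str, priority: int = 0) -> None:
        # heapq is a min-heap, so negate priority to pop the highest priority first.
        heapq.heappush(self._heap, (-priority, next(self._counter), job))

    def dispatch(self) -> str:
        # Remove and return the next job to run; raises IndexError if the queue is empty.
        _, _, job = heapq.heappop(self._heap)
        return job

    def __len__(self) -> int:
        return len(self._heap)


if __name__ == "__main__":
    q = PriorityJobQueue()
    for name, prio in [("nightly_etl", 0), ("urgent_fix", 10), ("report", 0), ("hotfix_2", 10)]:
        q.submit(name, prio)
    print([q.dispatch() for _ in range(len(q))])
```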

Pros

- Stable, enterprise-grade

- Flexible scheduling policies

Cons

- Limited cloud-native features

- Steep learning curve

Platforms / Deployment

- Linux / Windows

- Self-hosted / Hybrid

Security & Compliance

- LDAP authentication

- Not publicly stated

Integrations & Ecosystem

- Oracle DB, HPC clusters, file systems

- API for job management

Support & Community

- Oracle no longer develops the product; commercial support is available for its successor, Altair Grid Engine

#10 — Control-M (BMC)

Short description: Control-M is a comprehensive workload automation platform that orchestrates batch jobs and complex workflows across hybrid environments.

Key Features

- Workflow orchestration

- SLA management and alerts

- Integration with on-prem and cloud systems

- High-availability architecture

- Monitoring and reporting
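
At its core, SLA management compares when a job is expected to finish against its deadline and raises an alert early if the job will miss it. The Python sketch below shows that check for a single hypothetical job. It projects the completion time from the start time and an expected duration, such as an average of past runs. It then classifies the job as on track, at risk, met, or breached. Control-M configures its SLA features within the product; this only illustrates the underlying logic. The `warn_margin` parameter is hypothetical.

```python
from datetime import datetime, timedelta
from typing import Optional


def sla_status(
    start: datetime,
    expected_duration: timedelta,
    deadline: datetime,
    now: datetime,
    finished_at: Optional[datetime] = None,
    warn_margin: timedelta = timedelta(minutes=15),
) -> str:
    """Return 'met', 'breached', 'at_risk', or 'on_track' for one job."""
    if finished_at is not None:
        return "met" if finished_at <= deadline else "breached"
    if now > deadline:
        return "breached"  # still running past the deadline
    # If the job already overran its expected duration, the earliest it can finish is now.
    projected_end = max(start + expected_duration, now)
    if projected_end > deadline - warn_margin:
        return "at_risk"  # projected to finish within warn_margin of the deadline, or after it
    return "on_track"


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 1, 0)
    deadline = datetime(2024, 1, 1, 6, 0)
    print(sla_status(start, timedelta(hours=2), deadline, now=datetime(2024, 1, 1, 2, 0)))
```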

Pros

- Reduces operational overhead

- Enterprise-grade workflow automation

Cons

- High licensing cost

- Complex setup for small teams

Platforms / Deployment

- Windows / Linux / macOS

- Cloud / Self-hosted / Hybrid

Security & Compliance

- RBAC, LDAP, encryption

- SOC 2, GDPR

Integrations & Ecosystem

- ERP systems, databases, cloud storage

- APIs for automation

Support & Community

- BMC support, documentation, and forums

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Apache Hadoop | Massive batch processing | Linux, macOS, Windows | Cloud / Self-hosted / Hybrid | MapReduce | N/A |
| Apache Spark | Unified batch & analytics | Linux, macOS, Windows | Cloud / Self-hosted / Hybrid | In-memory processing | N/A |
| Apache Flink | Batch & stream | Linux, macOS, Windows | Cloud / Self-hosted / Hybrid | Unified batch & stream API | N/A |
| Apache Beam | Portable pipelines | Linux, macOS, Windows | Cloud / Self-hosted / Hybrid | Multi-runner support | N/A |
| AWS Batch | Cloud batch jobs | Web | Cloud | Serverless execution | N/A |
| Google Dataflow | Serverless pipelines | Web | Cloud | Autoscaling | N/A |
| Azure Batch | HPC & cloud batch | Web | Cloud | Autoscaling compute nodes | N/A |
| IBM Spectrum LSF | HPC workloads | Linux / Windows | Cloud / Self-hosted / Hybrid | Resource optimization | N/A |
| Oracle Grid Engine | Enterprise scheduling | Linux / Windows | Self-hosted / Hybrid | Priority scheduling | N/A |
| Control-M | Workflow automation | Windows / Linux / macOS | Cloud / Self-hosted / Hybrid | SLA management | N/A |

Evaluation & Scoring of Batch Processing Frameworks

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Apache Hadoop | 9 | 7 | 8 | 7 | 8 | 7 | 8 | 7.90 |
| Apache Spark | 9 | 7 | 8 | 7 | 8 | 7 | 8 | 7.90 |
| Apache Flink | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.65 |
| Apache Beam | 8 | 7 | 8 | 7 | 7 | 7 | 8 | 7.55 |
| AWS Batch | 8 | 8 | 7 | 8 | 7 | 7 | 7 | 7.50 |
| Google Dataflow | 8 | 8 | 7 | 8 | 8 | 7 | 7 | 7.60 |
| Azure Batch | 7 | 8 | 7 | 8 | 7 | 7 | 7 | 7.25 |
| IBM Spectrum LSF | 7 | 7 | 7 | 7 | 7 | 7 | 7 | 7.00 |
| Oracle Grid Engine | 7 | 7 | 7 | 7 | 7 | 7 | 7 | 7.00 |
| Control-M | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.60 |

Scores are comparative, indicating strengths relative to other frameworks. Higher total scores indicate better overall suitability for enterprise batch processing workloads.
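
Each weighted total is the sum of the criterion scores multiplied by their weights. The short Python check below recomputes the totals from the scores and weights listed in the table. The weights are kept as integer percentages so the arithmetic stays exact until a single final division.

```python
# Criterion weights in percent, in table column order:
# Core, Ease, Integrations, Security, Performance, Support, Value.
WEIGHTS = (25, 15, 15, 10, 10, 10, 15)

SCORES = {
    "Apache Hadoop": (9, 7, 8, 7, 8, 7, 8),
    "Apache Spark": (9, 7, 8, 7, 8, 7, 8),
    "Apache Flink": (8, 7, 8, 7, 8, 7, 8),
    "Apache Beam": (8, 7, 8, 7, 7, 7, 8),
    "AWS Batch": (8, 8, 7, 8, 7, 7, 7),
    "Google Dataflow": (8, 8, 7, 8, 8, 7, 7),
    "Azure Batch": (7, 8, 7, 8, 7, 7, 7),
    "IBM Spectrum LSF": (7, 7, 7, 7, 7, 7, 7),
    "Oracle Grid Engine": (7, 7, 7, 7, 7, 7, 7),
    "Control-M": (8, 7, 8, 8, 8, 7, 7),
}


def weighted_total(scores: tuple) -> float:
    if sum(WEIGHTS) != 100 or len(scores) != len(WEIGHTS):
        raise ValueError("weights must sum to 100 and match the number of scores")
    # Integer dot product first, then one division, to avoid floating-point drift.
    return sum(s * w for s, w in zip(scores, WEIGHTS)) / 100


if __name__ == "__main__":
    for tool, scores in SCORES.items():
        print(f"{tool:<20} {weighted_total(scores):.2f}")
```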

Which Batch Processing Framework Is Right for You?

Solo / Freelancer

Cloud-managed services like AWS Batch or Google Dataflow simplify setup without cluster management.

SMB

Apache Spark or Flink are suitable for ETL, analytics, and moderate-scale batch workloads.

Mid-Market

Hadoop and Beam provide scalable, reliable batch processing and integration with BI pipelines.

Enterprise

Control-M, IBM Spectrum LSF, and Oracle Grid Engine deliver enterprise-grade workflow orchestration, SLA compliance, and resource optimization.

Budget vs Premium

Open-source frameworks reduce licensing costs; managed cloud services offer operational ease at higher costs.

Feature Depth vs Ease of Use

Hadoop and Spark offer rich functionality but require technical expertise; AWS Batch and Azure Batch prioritize ease of deployment.

Integrations & Scalability

Ensure integration with ETL, BI tools, data lakes, and cloud platforms for efficient batch workflows.

Security & Compliance Needs

Choose frameworks with RBAC, encryption, SSO/SAML, and compliance certifications as required.

Frequently Asked Questions (FAQs)

1. What are Batch Processing Frameworks?

Platforms designed for scheduled or grouped data processing, ideal for predictable, high-volume workloads.

2. Can small businesses use these frameworks?

Yes, cloud-managed services allow small teams to process large datasets without complex infrastructure.

3. Are they suitable for real-time analytics?

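Generally no, because they are optimized for batch operations. Real-time analytics calls for a stream processing engine. Several tools in this list, including Apache Flink, Apache Spark (Structured Streaming), Apache Beam, and Google Cloud Dataflow, support both batch and streaming workloads.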

4. How expensive are these frameworks?

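Open-source options carry no license fees but still incur infrastructure and operations costs. Cloud-managed services charge based on compute and storage consumption. Commercial schedulers such as Control-M and IBM Spectrum LSF require paid licenses.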

5. What is the learning curve?

Open-source frameworks require cluster setup and tuning; cloud services reduce complexity.

6. Do they integrate with BI and ETL tools?

Yes, they commonly integrate with ETL pipelines, BI dashboards, and data warehouses.

7. Are these frameworks scalable?

Yes, they support horizontal scaling across clusters or cloud instances.

8. Can they support AI/ML workloads?

Yes, frameworks like Spark integrate with ML libraries for batch-based machine learning.

9. Are these frameworks secure?

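Most support encryption and access control, and many also provide audit logging. SSO/SAML integration is more common in managed cloud services and commercial tools than in self-hosted open-source frameworks, so confirm the specific controls each tool offers.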

10. Which framework should I choose?

Depends on organizational scale, technical expertise, workload patterns, and cloud/on-prem preferences.

Conclusion

Batch processing frameworks remain vital for organizations handling large-scale, scheduled data operations. Open-source frameworks such as Apache Hadoop and Spark are feature-rich and support complex analytics on large workloads, but they require technical expertise. Managed services such as AWS Batch, Google Cloud Dataflow, and Azure Batch simplify operations and add autoscaling, which suits cloud-centric teams. Enterprise-grade tools such as Control-M, IBM Spectrum LSF, and Oracle Grid Engine provide workflow orchestration, SLA management, and resource optimization. The right choice depends on scale, deployment preference, integration needs, and compliance requirements. Before committing, pilot candidate frameworks on representative workloads, validate their security and compliance controls, and confirm that they integrate with your analytics pipelines.
