Introduction

MLOps platforms streamline the operational side of machine learning, combining model deployment, monitoring, and governance into a single, scalable workflow. They help organizations bridge the gap between data science and IT operations, enabling continuous integration, testing, and deployment of ML models. These platforms are becoming critical as AI adoption accelerates and businesses demand repeatable, secure, and auditable ML workflows.

Use cases are abundant: deploying recommendation engines in real time, monitoring predictive models for fraud detection, automating anomaly detection in manufacturing, scaling NLP applications across cloud services, and managing versioning for large AI models. Buyers evaluating MLOps platforms should consider:

- Pipeline orchestration and workflow automation

- Continuous integration/continuous deployment (CI/CD) for ML

- Model monitoring, drift detection, and retraining

- Scalability and distributed compute support

- Integration with cloud services, data lakes, and ML frameworks

- Governance, reproducibility, and compliance features

- Ease of use and collaboration features

- Security, role-based access, and encryption

- Cost and pricing flexibility

- Support for experimentation, version control, and deployment tracking

Best for: Data science teams, ML engineers, IT operations, and enterprises seeking to operationalize machine learning at scale.

Not ideal for: Small-scale projects or teams using only simple ML workflows; AutoML platforms or notebook-based solutions may suffice for low-complexity tasks.

Key Trends in MLOps Platforms

- Increasing adoption of end-to-end MLOps pipelines, combining CI/CD, monitoring, and retraining

- Automated monitoring and model drift detection using AI

- Cloud-native deployments with GPU/TPU support and hybrid integration

- Enhanced model explainability and auditability for regulatory compliance

- Integration with AutoML, feature stores, and experiment tracking tools

- Collaboration tools for distributed teams and remote data science workflows

- Low-code interfaces for operational teams to manage ML deployments

- Subscription and pay-as-you-go pricing models for flexible scaling

- Greater focus on reproducibility, versioning, and artifact management

- Edge deployment support for real-time ML inference
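To make the drift-detection trend concrete, here is a minimal sketch of one common statistic, the Population Stability Index (PSI), which compares a live feature distribution against a training-time baseline. The function name `psi`, the equal-width binning, and the 0.2 cutoff are illustrative assumptions, not the method of any platform covered in this article; production systems usually offer several drift tests and per-feature thresholds.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width when all values are equal

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]        # spread evenly over [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]   # concentrated in the upper half

DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff; tune per model and feature
needs_retraining = psi(baseline, shifted) > DRIFT_THRESHOLD
```

A monitoring job could run a check like this on a schedule and trigger a retraining pipeline when the flag is set.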

How We Selected These Tools (Methodology)

- Evaluated market adoption, global mindshare, and enterprise usage

- Assessed feature completeness for deployment, monitoring, and retraining

- Considered performance and reliability signals in production workloads

- Reviewed security posture, compliance certifications, and audit capabilities

- Examined integrations with cloud providers, ML frameworks, and orchestration tools

- Evaluated customer fit across solo developers, SMBs, mid-market, and enterprises

- Reviewed collaboration, reproducibility, and experiment tracking capabilities

- Prioritized active development and vendor support

- Considered scalability across GPU/TPU clusters and distributed workloads

- Balanced open-source flexibility with enterprise readiness and usability

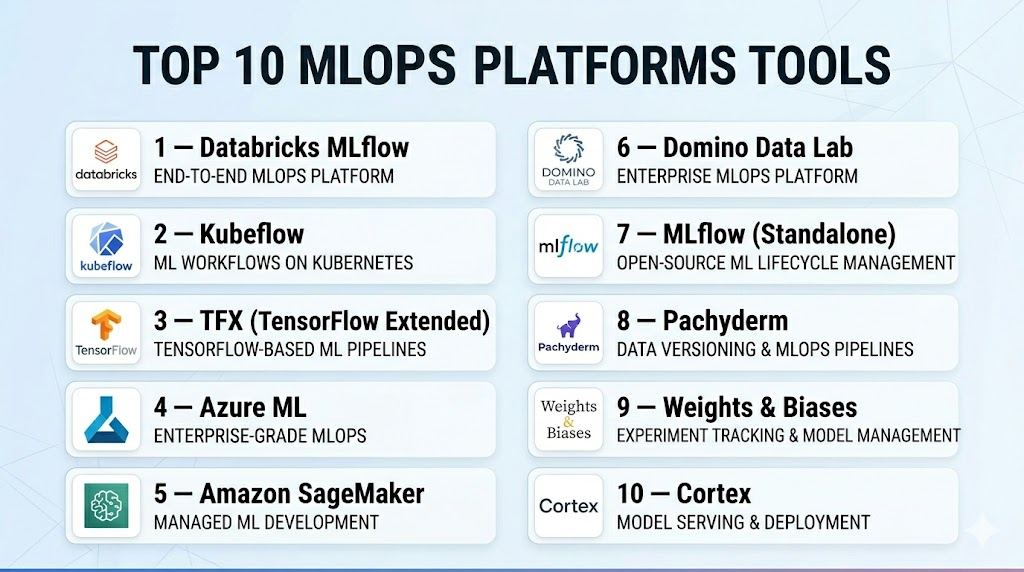

Top 10 MLOps Platforms

#1 — Databricks MLflow

Short description: MLflow is an open-source platform for managing the ML lifecycle, including experimentation, reproducibility, and deployment. Ideal for data science teams aiming for robust, collaborative workflows.

Key Features

- Experiment tracking and model versioning

- Reproducible ML pipelines

- Deployment across cloud or on-premise

- Integration with Spark and Python ML frameworks

- Model registry for governance

- REST APIs for production deployment

- Scalable for distributed workloads

Pros

- Simplifies ML lifecycle management

- Strong community and open-source flexibility

- Scales for enterprise workloads

Cons

- Requires setup and configuration for production

- Limited low-code UI features

- Learning curve for newcomers

Platforms / Deployment

- Windows / macOS / Linux / Web

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python, R, Java APIs

- Spark, TensorFlow, PyTorch

- REST APIs for deployment

Support & Community

Active open-source community; enterprise support via Databricks.
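As a rough illustration of what experiment tracking means in practice, the sketch below records parameters and metrics per run as JSON files and then picks the best run. `RunLog` and `best_run` are hypothetical names written for this article using only the Python standard library; they are not the MLflow API, which adds a tracking server, artifact storage, and a model registry on top of the same basic idea.

```python
import json
import tempfile
import time
import uuid
from pathlib import Path


class RunLog:
    """Hypothetical minimal run tracker (illustration only, not the MLflow API)."""

    def __init__(self, root: str, experiment: str) -> None:
        self.run_id = uuid.uuid4().hex
        self.path = Path(root) / experiment / f"{self.run_id}.json"
        self.record = {"run_id": self.run_id, "started": time.time(),
                       "params": {}, "metrics": {}}

    def log_param(self, key: str, value) -> None:
        self.record["params"][key] = value

    def log_metric(self, key: str, value: float) -> None:
        # Keep every value so the history of a metric across steps is preserved.
        self.record["metrics"].setdefault(key, []).append(value)

    def save(self) -> Path:
        self.path.parent.mkdir(parents=True, exist_ok=True)
        self.path.write_text(json.dumps(self.record, indent=2))
        return self.path


def best_run(root: str, experiment: str, metric: str) -> dict:
    """Return the saved run whose last logged value of `metric` is highest."""
    runs = [json.loads(p.read_text()) for p in (Path(root) / experiment).glob("*.json")]
    return max(runs, key=lambda r: r["metrics"][metric][-1])


# Usage: two runs with different learning rates, stored in a temporary directory.
root = tempfile.mkdtemp()
for lr, acc in [(0.1, 0.81), (0.01, 0.88)]:
    run = RunLog(root, "demo")
    run.log_param("learning_rate", lr)
    run.log_metric("accuracy", acc)
    run.save()

winner = best_run(root, "demo", "accuracy")
```

Because each run is a self-describing record, any teammate can later reproduce the winning configuration from its logged parameters.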

#2 — Kubeflow

Short description: Kubeflow is an open-source MLOps platform designed for Kubernetes environments. It orchestrates ML pipelines, automates deployment, and scales model training.

Key Features

- Kubernetes-native pipeline orchestration

- Model training and serving

- Experiment tracking and versioning

- GPU and distributed training support

- Integration with cloud services

- Pipelines for CI/CD automation

Pros

- Cloud-native and scalable

- Supports containerized deployments

- Flexible for complex ML workflows

Cons

- Kubernetes knowledge required

- Setup can be complex

- Smaller enterprise support community

Platforms / Deployment

- Linux / Web

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- TensorFlow, PyTorch

- Docker, Kubernetes

- REST APIs

Support & Community

Open-source community; documentation available; community forums.

#3 — TFX (TensorFlow Extended)

Short description: TensorFlow Extended is an end-to-end MLOps framework for TensorFlow models. It automates model deployment, validation, and monitoring, suitable for production-grade ML pipelines.

Key Features

- Pipeline orchestration for TensorFlow models

- Data validation and preprocessing

- Model analysis and monitoring

- Deployment and serving tools

- Cloud integration with GCP

- Experiment tracking

Pros

- Production-ready for TensorFlow models

- Scales for large datasets

- Strong Google Cloud integration

Cons

- TensorFlow-dependent

- Cloud-centric

- Requires pipeline expertise

Platforms / Deployment

- Linux / Web

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- TensorFlow

- GCP services

- Python SDK

Support & Community

Google Cloud enterprise support; tutorials and community documentation.

#4 — Azure ML

Short description: Azure Machine Learning is a full-featured MLOps platform integrating model building, deployment, and monitoring, designed for enterprises in the Microsoft ecosystem.

Key Features

- CI/CD for ML models

- Automated pipelines

- Model versioning and governance

- Cloud and hybrid deployment

- Experiment tracking

- Python and R SDKs

Pros

- Deep integration with Microsoft services

- Enterprise-grade MLOps capabilities

- Scalable and secure

Cons

- Azure ecosystem required

- Learning curve for advanced workflows

- Premium features may be costly

Platforms / Deployment

- Windows / Web

- Cloud / Hybrid

Security & Compliance

- SOC 2, ISO 27001, HIPAA, GDPR

- Encryption and RBAC

Integrations & Ecosystem

- Azure Data Lake, SQL Database

- TensorFlow, PyTorch integration

- REST APIs

Support & Community

Microsoft enterprise support; strong documentation and forums.

#5 — Amazon SageMaker

Short description: SageMaker is AWS’s MLOps platform providing end-to-end support from training to deployment and monitoring of ML models. Ideal for enterprises heavily invested in AWS.

Key Features

- Model training, deployment, and monitoring

- Auto-scaling of compute resources

- MLOps pipelines for CI/CD

- Experiment tracking and reproducibility

- Integration with AWS data services

Pros

- Scalable cloud-native solution

- Enterprise-grade monitoring and retraining

- Pre-built model templates

Cons

- AWS subscription required

- Cloud-only deployment

- Cost scales with usage

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- SOC 2, ISO 27001, HIPAA, GDPR

- Encryption and access control

Integrations & Ecosystem

- S3, Redshift, Lambda

- TensorFlow, PyTorch

- Python SDK and REST APIs

Support & Community

AWS enterprise support; community forums; documentation.

#6 — Domino Data Lab

Short description: Domino Data Lab is an MLOps platform focusing on reproducibility, collaboration, and model deployment. It is ideal for enterprise data science teams.

Key Features

- Experiment tracking and versioning

- Deployment pipelines

- Collaboration for distributed teams

- GPU/CPU resource management

- Model monitoring and retraining

Pros

- Enterprise collaboration features

- Scalable compute resource management

- Supports multiple ML frameworks

Cons

- Premium pricing

- Setup complexity

- Cloud and hybrid deployment only

Platforms / Deployment

- Web / Linux

- Cloud / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python, R, and TensorFlow integration

- Cloud storage connectors

- REST APIs

Support & Community

Enterprise support tiers; active documentation and tutorials.

#7 — MLflow (Standalone)

Short description: MLflow manages the ML lifecycle from experiment tracking to deployment. It is framework-agnostic and popular with data science teams building reproducible ML pipelines.

Key Features

- Experiment tracking and model versioning

- Deployment management

- Python, R, Java SDK

- Model registry and reproducibility

- Scalable for cloud or on-premise

Pros

- Open-source and flexible

- Framework-agnostic

- Scalable for enterprise workloads

Cons

- Requires infrastructure setup

- Less visual UI compared to enterprise platforms

- Enterprise-grade features require integration

Platforms / Deployment

- Windows / macOS / Linux / Web

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python, R, Java APIs

- Spark, TensorFlow, PyTorch

- REST APIs

Support & Community

Active open-source community; enterprise support via Databricks.

#8 — Pachyderm

Short description: Pachyderm is a data-centric MLOps platform automating data versioning, pipelines, and deployment. It is ideal for teams prioritizing reproducibility and data lineage.

Key Features

- Data versioning for ML pipelines

- Automated CI/CD pipelines

- Docker and Kubernetes integration

- Scalable distributed compute

- Python and Go SDKs

Pros

- Data lineage tracking

- Supports reproducible pipelines

- Kubernetes-native deployment

Cons

- Kubernetes knowledge required

- Learning curve for setup

- Smaller community

Platforms / Deployment

- Linux / Web

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Docker and Kubernetes

- Python SDK

- REST APIs

Support & Community

Open-source community; enterprise support optional.
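The general idea behind data versioning can be sketched with content hashing: a dataset's version ID is derived from its bytes, so any change to the data yields a new ID that a pipeline run can record. The helper `dataset_version` below is a hypothetical illustration of that concept and makes no claim about how Pachyderm implements it internally.

```python
import hashlib
import tempfile
from pathlib import Path


def dataset_version(root: str) -> str:
    """Derive a deterministic version ID from every file under `root`."""
    digest = hashlib.sha256()
    for path in sorted(Path(root).rglob("*")):
        if path.is_file():
            # Hash the relative path too, so renames also change the version.
            digest.update(path.relative_to(root).as_posix().encode())
            digest.update(b"\0")
            digest.update(path.read_bytes())
            digest.update(b"\0")
    return digest.hexdigest()[:12]


# Usage: editing a single value in the data produces a different version ID.
root = tempfile.mkdtemp()
data_file = Path(root) / "train.csv"

data_file.write_text("x,y\n1,2\n")
v1 = dataset_version(root)

data_file.write_text("x,y\n1,3\n")
v2 = dataset_version(root)
```

Storing the version ID alongside each trained model gives a simple form of lineage: a given model can always be traced back to the exact data snapshot that produced it.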

#9 — Weights & Biases

Short description: Weights & Biases is a lightweight MLOps platform focused on experiment tracking, collaboration, and visualization for teams running ML at scale.

Key Features

- Experiment tracking and visualization

- Model versioning

- Team collaboration and dashboards

- Integration with Python ML libraries

- Cloud and hybrid deployment

Pros

- Rapid setup and ease of use

- Excellent visualization and collaboration

- Integrates with existing ML workflows

Cons

- Requires integration for full CI/CD

- Cloud subscription may be required

- Limited low-code deployment

Platforms / Deployment

- Windows / macOS / Linux / Web

- Cloud / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python ML libraries

- TensorFlow, PyTorch

- REST APIs

Support & Community

Active user community; enterprise support options.

#10 — Cortex

Short description: Cortex is a cloud-native MLOps platform for deploying and scaling ML models as APIs. Ideal for engineering teams needing production-grade inference pipelines.

Key Features

- Model deployment as APIs

- Auto-scaling and versioning

- Cloud-native infrastructure

- Integration with CI/CD pipelines

- Python SDK

Pros

- Focused on production deployments

- Scalable and resilient

- API-first approach

Cons

- Limited experiment tracking

- Cloud-only

- Learning curve for advanced features

Platforms / Deployment

- Linux / Web

- Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Python SDK

- CI/CD integration

- REST APIs

Support & Community

Documentation and enterprise support; small but active community.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Databricks MLflow | Experiment tracking | Windows / macOS / Linux / Web | Cloud / Self-hosted / Hybrid | Full ML lifecycle management | N/A |
| Kubeflow | Kubernetes-native pipelines | Linux / Web | Cloud / Self-hosted / Hybrid | Pipeline orchestration | N/A |
| TFX | TensorFlow model ops | Linux / Web | Cloud / Self-hosted | Production pipelines for TF | N/A |
| Azure ML | Microsoft ecosystem | Windows / Web | Cloud / Hybrid | CI/CD integration for ML | N/A |
| SageMaker | AWS users | Web | Cloud | End-to-end ML workflow | N/A |
| Domino Data Lab | Team collaboration | Web / Linux | Cloud / Hybrid | Reproducibility & collaboration | N/A |
| MLflow | Framework-agnostic lifecycle | Windows / macOS / Linux / Web | Cloud / Self-hosted | Experiment tracking & deployment | N/A |
| Pachyderm | Data lineage and reproducibility | Linux / Web | Cloud / Self-hosted / Hybrid | Data versioning pipelines | N/A |
| Weights & Biases | Experiment visualization | Windows / macOS / Linux / Web | Cloud / Hybrid | Visual dashboards and team tracking | N/A |
| Cortex | Production inference | Linux / Web | Cloud | API-first deployment of ML models | N/A |

Evaluation & Scoring of MLOps Platforms

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| Databricks MLflow | 9 | 7 | 9 | 7 | 9 | 8 | 8 | 8.3 |
| Kubeflow | 8 | 6 | 8 | 6 | 8 | 7 | 7 | 7.3 |
| TFX | 8 | 7 | 7 | 6 | 8 | 7 | 7 | 7.3 |
| Azure ML | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7.5 |
| SageMaker | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.6 |
| Domino Data Lab | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.4 |
| MLflow | 8 | 7 | 7 | 6 | 8 | 7 | 7 | 7.3 |
| Pachyderm | 7 | 6 | 7 | 6 | 7 | 6 | 7 | 6.7 |
| Weights & Biases | 7 | 8 | 7 | 6 | 7 | 7 | 7 | 7.1 |
| Cortex | 7 | 7 | 6 | 6 | 7 | 6 | 7 | 6.7 |

These scores are comparative, helping teams weigh functionality, ease of use, integrations, performance, and enterprise value.
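For readers who want to reweight the criteria for their own priorities, the weighted total can be reproduced with a few lines of Python. This is a sketch that assumes the total is the sum of each criterion score multiplied by the weight shown in the table header; the dictionary keys are shorthand chosen here, and the two example rows are copied from the table.

```python
# Weights in percent, copied from the scoring table header.
WEIGHTS = {"core": 25, "ease": 15, "integrations": 15, "security": 10,
           "performance": 10, "support": 10, "value": 15}


def weighted_total(scores: dict[str, int]) -> float:
    """Combine 0-10 criterion scores into a 0-10 weighted total."""
    assert sum(WEIGHTS.values()) == 100, "weights must sum to 100%"
    # Integer arithmetic first, so the only floating-point step is the final division.
    return sum(scores[name] * weight for name, weight in WEIGHTS.items()) / 100


databricks_mlflow = {"core": 9, "ease": 7, "integrations": 9, "security": 7,
                     "performance": 9, "support": 8, "value": 8}
kubeflow = {"core": 8, "ease": 6, "integrations": 8, "security": 6,
            "performance": 8, "support": 7, "value": 7}

print(weighted_total(databricks_mlflow))  # 8.25
print(weighted_total(kubeflow))           # 7.25
```

The table reports these values to one decimal place as 8.3 and 7.3. A team that cares more about security than value could change the entries in `WEIGHTS`, keeping the sum at 100, and rerun the calculation for every row.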

Which MLOps Platform Is Right for You?

Solo / Freelancer

Weights & Biases, MLflow, or Pachyderm provide lightweight, accessible MLOps for experimentation and learning.

SMB

Domino Data Lab, Databricks MLflow, or Azure ML offer team collaboration and scalable pipelines without extensive infrastructure.

Mid-Market

SageMaker, Kubeflow, or Google's Vertex AI (not profiled in this list) provide cloud scalability, CI/CD automation, and model monitoring for growing teams.

Enterprise

Databricks MLflow, Azure ML, SageMaker, and Domino Data Lab deliver enterprise-grade MLOps, governance, and distributed workloads.

Budget vs Premium

Open-source tools like MLflow or Pachyderm are cost-effective; premium platforms provide additional automation, scalability, and support.

Feature Depth vs Ease of Use

Databricks MLflow, SageMaker, and Azure ML offer extensive features; Weights & Biases prioritizes ease of experimentation, while Pachyderm trades some ease of setup for data lineage.

Integrations & Scalability

Cloud-native platforms integrate with data lakes, BI tools, and MLOps pipelines for enterprise-grade deployment.

Security & Compliance Needs

Enterprise deployments provide encryption, access control, audit logs, and regulatory compliance; open-source options require additional configuration.

Frequently Asked Questions (FAQs)

1. What is the pricing model for MLOps platforms?

Open-source platforms are free to license, though infrastructure and operating costs still apply; enterprise versions typically use subscription or pay-as-you-go pricing.

2. How quickly can teams onboard?

Low-code tools like Domino Data Lab allow rapid onboarding; platforms like Kubeflow require infrastructure expertise.

3. Can multiple users collaborate on MLOps workflows?

Yes, Databricks, Azure ML, and Domino Data Lab support multi-user collaboration with role-based access.

4. Are these platforms secure for sensitive data?

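Among the tools profiled here, Azure ML and SageMaker list SOC 2, ISO 27001, HIPAA, and GDPR compliance; the others do not publicly state their certifications, and open-source setups require custom security configuration.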

5. How scalable are these platforms?

Cloud-native platforms like SageMaker, Azure ML, and Databricks MLflow scale for distributed GPU workloads; TPU support depends on running in Google Cloud.

6. Can I deploy models to production easily?

Yes, most MLOps platforms provide CI/CD pipelines, REST APIs, or Kubernetes-based deployment.

7. Which ML frameworks are supported?

Common frameworks include TensorFlow, PyTorch, Scikit-learn, and XGBoost; some platforms are framework-agnostic.

8. Do platforms support reproducibility?

Yes, they provide experiment tracking, model versioning, and pipeline management for reproducible results.

9. Can I switch platforms if needed?

Exporting models via ONNX or standard formats allows portability, though some platform-specific features may need adaptation.

10. Are there alternatives to MLOps platforms?

Yes, notebook environments, AutoML tools, or custom CI/CD pipelines can substitute for smaller or less complex workflows.

Conclusion

MLOps platforms are transforming how organizations operationalize machine learning, making it easier to deploy, monitor, and scale AI models. Open-source tools like MLflow and Pachyderm provide flexibility and cost-efficiency, while enterprise platforms such as Databricks MLflow, SageMaker, and Azure ML deliver governance, scalability, and production readiness. Choosing the right platform depends on team size, deployment strategy, workflow complexity, and regulatory requirements. Running pilot projects or trials is highly recommended to validate fit, assess integrations, and ensure performance before full-scale adoption.
