Introduction

AI Safety & Evaluation Tools are specialized platforms for monitoring and assessing AI systems so that they can be deployed responsibly. They provide capabilities to test AI models for alignment with ethical standards, detect biases, evaluate robustness, and validate safety under a variety of conditions. These tools are critical for organizations adopting AI at scale, helping mitigate operational, ethical, and regulatory risks while improving transparency and trust in AI-powered applications.

The importance of AI safety and evaluation has grown as AI systems are deployed in sensitive domains such as healthcare, finance, autonomous vehicles, and customer-facing applications. Companies increasingly require tools to ensure compliance, identify vulnerabilities, and maintain control over AI behavior.

Real-world use cases include:

- Testing AI models for bias and fairness

- Evaluating model robustness under adversarial inputs

- Monitoring AI behavior to prevent unsafe outcomes

- Validating compliance with regulations and internal policies

- Supporting governance and audit frameworks for AI
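To make the first use case concrete, here is a minimal sketch of one common fairness check, the demographic parity difference: the gap between the highest and lowest rate of positive predictions across groups. The function name, the sample predictions, and the group labels are illustrative assumptions, not the API of any tool covered in this article.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence, Tuple


def demographic_parity_difference(
    predictions: Sequence[int], groups: Sequence[Hashable]
) -> Tuple[float, Dict[Hashable, float]]:
    """Return (gap, per-group positive rate) for binary 0/1 predictions."""
    if len(predictions) != len(groups):
        raise ValueError("predictions and groups must have the same length")
    if len(predictions) == 0:
        raise ValueError("at least one prediction is required")

    positives = defaultdict(int)  # count of positive predictions per group
    totals = defaultdict(int)     # count of all predictions per group
    for pred, group in zip(predictions, groups):
        positives[group] += pred
        totals[group] += 1

    rates = {g: positives[g] / totals[g] for g in totals}
    return max(rates.values()) - min(rates.values()), rates


# Hypothetical model outputs for two groups, "a" and "b".
preds = [1, 1, 1, 0, 1, 0, 0, 0]
grps = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap, rates = demographic_parity_difference(preds, grps)
print(rates)  # positive-prediction rate for each group
print(gap)    # difference between the highest and lowest rate
```

A gap of 0 means every group receives positive predictions at the same rate. What gap counts as acceptable is a policy decision; the metric only reports the disparity.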

Key evaluation criteria when selecting these tools:

- Model evaluation and testing features

- Bias and fairness detection

- Adversarial robustness testing

- Explainability and interpretability

- Compliance and audit readiness

- Integration with AI pipelines

- Collaboration and workflow support

- Performance monitoring

- Deployment flexibility

- Security and access control

Best for: Enterprises, AI teams, compliance officers, regulators, and organizations with critical AI deployments

Not ideal for: Small teams with minimal AI operations or low-risk AI use cases

Key Trends in AI Safety & Evaluation Tools

- Increased adoption of automated model auditing and safety testing

- Integration of explainable AI and interpretability dashboards

- Enhanced bias detection, fairness metrics, and mitigation strategies

- Adversarial testing and robustness evaluation becoming standard practice

- Centralized dashboards for multi-model oversight

- Cloud-native platforms with hybrid deployment options

- Standardized AI risk assessment frameworks

- Collaboration tools for cross-functional AI governance teams

- APIs and plug-ins for pipeline integration

- Subscription or usage-based pricing models
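As a rough illustration of what the adversarial testing and robustness evaluation trend above can mean in practice, the sketch below checks a toy linear classifier. For a linear score, the largest change an attacker can cause by moving each feature by at most `eps` is `eps` times the sum of the absolute weights, so a prediction is stable whenever its margin exceeds that amount. The model, weights, and inputs are hypothetical, and real tools typically test far more complex models with search-based attacks.

```python
from typing import Sequence


def linear_score(weights: Sequence[float], bias: float, x: Sequence[float]) -> float:
    """Score of a toy linear classifier; the predicted class is the sign of the score."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def is_robust(weights: Sequence[float], bias: float, x: Sequence[float], eps: float) -> bool:
    """True if no perturbation of at most eps per feature can flip the prediction."""
    margin = abs(linear_score(weights, bias, x))
    worst_case_shift = eps * sum(abs(w) for w in weights)
    return margin > worst_case_shift


def robust_fraction(
    weights: Sequence[float], bias: float, inputs: Sequence[Sequence[float]], eps: float
) -> float:
    """Share of inputs whose prediction is stable under the eps perturbation budget."""
    if len(inputs) == 0:
        raise ValueError("at least one input is required")
    stable = sum(1 for x in inputs if is_robust(weights, bias, x, eps))
    return stable / len(inputs)


# Hypothetical model and evaluation inputs.
w = [1.0, -2.0]
b = 0.0
samples = [[3.0, 0.0], [0.5, 0.0], [0.0, 1.0], [1.0, 0.5]]
print(robust_fraction(w, b, samples, eps=0.5))
```

Tracking this fraction across model versions gives a simple regression signal: a drop suggests the new model sits closer to its decision boundary on the evaluation set.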

How We Selected These Tools (Methodology)

- Market adoption and recognition in the AI safety community

- Feature completeness across evaluation, testing, and bias mitigation

- Reliability and performance signals from production environments

- Security posture and access control mechanisms

- Integrations with AI/ML platforms and enterprise systems

- Customer fit across different organization sizes and industries

- Workflow and collaboration support

- Documentation, training, and community engagement

- Ongoing development and future-proofing of tools

- Alignment with AI ethics, governance, and regulatory standards

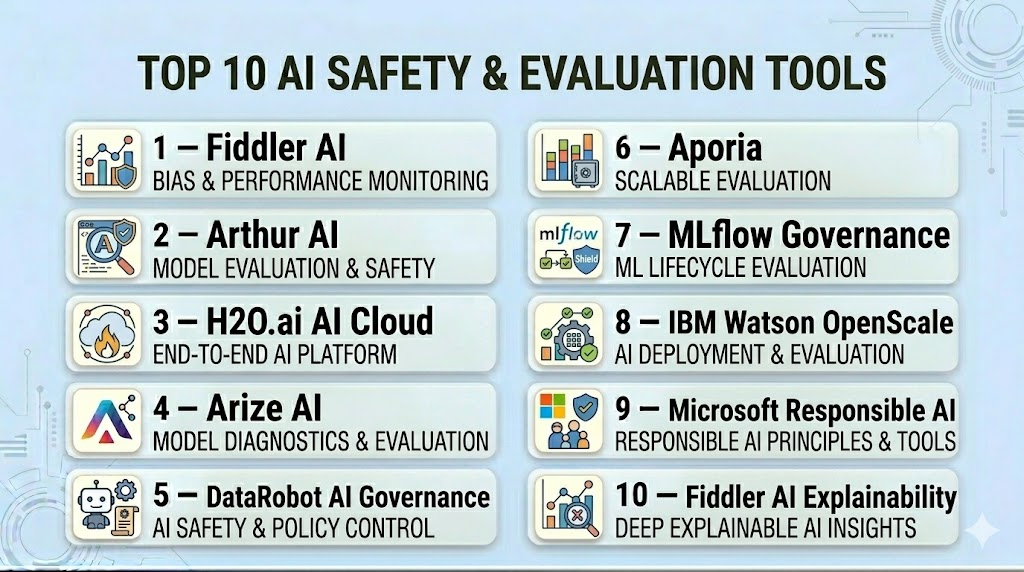

Top 10 AI Safety & Evaluation Tools

#1 — Fiddler AI

Short description: Fiddler AI offers enterprise AI observability: it monitors model performance and provides bias detection and explainability dashboards. It helps AI teams maintain governance, compliance, and trust across deployed models.

Key Features

- Model monitoring and drift detection

- Bias and fairness evaluation

- Explainable AI insights

- Compliance reporting

- Multi-model integration

- Alerts and workflow automation

Pros

- Real-time monitoring and insights

- Supports collaboration across teams

Cons

- Enterprise-oriented pricing

- Steep learning curve for advanced features

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Connectors for ML frameworks

- API for integration with AI pipelines

- Workflow integration

Support & Community

- Dedicated enterprise support

- Documentation and onboarding guides

#2 — Arthur AI

Short description: Arthur AI focuses on model observability, bias detection, and AI risk management. It enables AI teams to identify and mitigate safety risks proactively across multiple models.

Key Features

- Bias detection and fairness analytics

- Drift and performance monitoring

- Explainability dashboards

- Alerting and notifications

- Multi-model support

Pros

- Proactive risk management

- Scales for enterprise deployments

Cons

- Pricing may be high for smaller teams

- May require technical expertise

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Supports popular ML frameworks

- API and SDK integration

- Workflow connectors

Support & Community

- Documentation and onboarding

- Customer support tiers

#3 — H2O.ai AI Cloud

Short description: H2O.ai AI Cloud provides governance, model monitoring, explainability, and bias detection within a scalable AI platform for enterprise users.

Key Features

- AI model lifecycle management

- Bias and fairness evaluation

- Explainable AI dashboards

- Compliance and reporting

- Multi-cloud and hybrid deployment

Pros

- Scalable and enterprise-ready

- Comprehensive governance capabilities

Cons

- Complexity in implementation

- Requires training for advanced usage

Platforms / Deployment

- Web / Cloud / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- ML frameworks support

- Enterprise workflow connectors

- APIs for data pipelines

Support & Community

- Enterprise support options

- Extensive documentation

#4 — Arize AI

Short description: Arize AI offers model monitoring, bias detection, and safety evaluation for AI applications. It is suited for organizations needing observability and governance across AI deployments.

Key Features

- Model drift and performance monitoring

- Fairness assessment and bias detection

- Multi-model management

- Alerting and notifications

- Explainable AI dashboards

Pros

- Real-time observability

- Scalable for large deployments

Cons

- Enterprise-focused pricing

- Requires technical expertise

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- ML frameworks, APIs, workflow tools

Support & Community

- Documentation and onboarding

- Customer support

#5 — DataRobot AI Governance

Short description: DataRobot integrates governance features into its AI platform, providing explainability, bias detection, and regulatory compliance capabilities for enterprise AI models.

Key Features

- Explainable AI dashboards

- Compliance reporting and audit-ready tools

- Bias detection and mitigation

- Model lifecycle management

- Collaboration features

Pros

- Enterprise-grade governance

- Integration with existing AI pipelines

Cons

- Costly for smaller teams

- Complex deployment

Platforms / Deployment

- Web / Cloud / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- ML frameworks, APIs, enterprise workflows

Support & Community

- Enterprise support tiers

- Documentation

#6 — Aporia

Short description: Aporia focuses on AI model monitoring and observability to ensure governance and policy compliance across deployments.

Key Features

- Model performance monitoring

- Bias detection and fairness analytics

- Real-time alerts

- Multi-model support

- Collaboration dashboards

Pros

- Proactive model monitoring

- Alerts and automated reporting

Cons

- Enterprise-oriented

- Requires technical knowledge

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- ML frameworks, APIs, workflow connectors

Support & Community

- Documentation

- Customer support

#7 — MLflow Governance

Short description: MLflow offers model versioning, experiment tracking, and reproducibility features with governance capabilities integrated into ML pipelines.

Key Features

- Model versioning and lifecycle management

- Experiment tracking and reproducibility

- Integration with ML pipelines

- Open-source extensibility

- Audit trails

Pros

- Open-source flexibility

- Strong lifecycle management

Cons

- Governance features less comprehensive

- Requires customization

Platforms / Deployment

- Web / Linux / macOS

- Self-hosted / Cloud

Security & Compliance

- Varies / N/A

Integrations & Ecosystem

- Python, ML frameworks, APIs

Support & Community

- Community support

- Documentation

#8 — IBM Watson OpenScale

Short description: IBM Watson OpenScale provides enterprise AI governance, bias detection, explainability, and monitoring for deployed models.

Key Features

- Bias detection and mitigation

- Explainable AI insights

- Audit trails and compliance

- Multi-model support

- Monitoring dashboards

Pros

- Enterprise-grade compliance

- Scalable and robust

Cons

- Complexity in setup

- Costly for small teams

Platforms / Deployment

- Web / Cloud / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- IBM ecosystem, APIs, ML frameworks

Support & Community

- Enterprise support

- Documentation

#9 — Microsoft Responsible AI

Short description: Microsoft Responsible AI delivers governance, bias monitoring, and compliance tools integrated with Azure AI.

Key Features

- AI monitoring and auditing

- Fairness and bias assessment

- Compliance reporting

- Multi-model support

- Explainable AI features

Pros

- Integrates with Azure ecosystem

- Enterprise-ready governance

Cons

- Primarily for Azure users

- Complexity for small teams

Platforms / Deployment

- Web / Cloud / Hybrid

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- Azure ML, APIs, workflow tools

Support & Community

- Enterprise support

- Documentation

#10 — Fiddler AI Explainability

Short description: Fiddler AI Explainability offers advanced AI explainability and governance tools for auditing, bias detection, and model transparency.

Key Features

- Explainable AI dashboards

- Bias and fairness monitoring

- Audit-ready reporting

- Integration with enterprise ML pipelines

- Multi-model support

Pros

- Strong explainability features

- Supports compliance and audits

Cons

- Enterprise-focused

- Requires advanced knowledge

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- ML frameworks, APIs, workflow connectors

Support & Community

- Documentation

- Enterprise support

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Fiddler AI | Enterprise AI observability | Web | Cloud | Explainability & monitoring | N/A |
| Arthur AI | AI risk & performance | Web | Cloud | Bias detection | N/A |
| H2O.ai AI Cloud | Enterprise governance | Web | Cloud/Hybrid | Model lifecycle | N/A |
| Arize AI | Model drift & fairness | Web | Cloud | Real-time alerts | N/A |
| DataRobot AI Governance | Enterprise teams | Web | Cloud/Hybrid | Compliance & reporting | N/A |
| Aporia | Monitoring & observability | Web | Cloud | Alerts & fairness | N/A |
| MLflow Governance | ML pipelines | Web/Linux/macOS | Self-hosted/Cloud | Experiment tracking | N/A |
| IBM Watson OpenScale | Enterprise AI governance | Web | Cloud/Hybrid | Bias detection | N/A |
| Microsoft Responsible AI | Azure governance | Web | Cloud/Hybrid | Fairness & compliance | N/A |
| Fiddler AI Explainability | Explainable AI | Web | Cloud | Audit-ready dashboards | N/A |

Evaluation & Scoring of AI Safety & Evaluation Tools

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| Fiddler AI | 9 | 8 | 8 | 8 | 8 | 8 | 8 | 8.3 |
| Arthur AI | 8 | 7 | 8 | 8 | 8 | 7 | 8 | 7.8 |
| H2O.ai AI Cloud | 9 | 7 | 8 | 8 | 8 | 8 | 8 | 8.1 |
| Arize AI | 8 | 7 | 8 | 8 | 8 | 7 | 8 | 7.8 |
| DataRobot AI Governance | 9 | 7 | 8 | 8 | 8 | 8 | 8 | 8.1 |
| Aporia | 8 | 7 | 8 | 8 | 8 | 7 | 8 | 7.8 |
| MLflow Governance | 7 | 7 | 7 | 7 | 7 | 7 | 7 | 7.0 |
| IBM Watson OpenScale | 9 | 7 | 8 | 8 | 8 | 8 | 8 | 8.1 |
| Microsoft Responsible AI | 8 | 7 | 8 | 8 | 8 | 8 | 8 | 7.9 |
| Fiddler AI Explainability | 8 | 7 | 7 | 8 | 7 | 7 | 7 | 7.4 |
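The weighted total in the last column can be reproduced from the column weights in the table header. The sketch below assumes each total is the weighted sum of the seven scores, rounded half up to one decimal place; the rounding rule and the function name are assumptions, since the article does not state them.

```python
from typing import Sequence

# Weights in percent, in the column order of the scoring table:
# Core, Ease, Integrations, Security, Performance, Support, Value.
WEIGHTS_PCT = (25, 15, 15, 10, 10, 10, 15)


def weighted_total(scores: Sequence[int]) -> float:
    """Weighted 0-10 total for one row, rounded half up to one decimal (assumed rule)."""
    if len(scores) != len(WEIGHTS_PCT):
        raise ValueError("expected one score per weighted column")
    # Integer arithmetic avoids floating-point surprises at the .x5 boundary.
    hundredths = sum(s * w for s, w in zip(scores, WEIGHTS_PCT))  # 825 means 8.25
    tenths = (hundredths + 5) // 10  # round half up to one decimal place
    return tenths / 10


print(weighted_total([9, 8, 8, 8, 8, 8, 8]))  # Fiddler AI row: 8.3
print(weighted_total([7, 7, 7, 7, 7, 7, 7]))  # MLflow Governance row: 7.0
```

Because the weights sum to 100%, a tool that scores the same value in every column receives that value as its total, which is a quick sanity check on any row.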

Which AI Safety & Evaluation Tool Is Right for You?

Solo / Freelancer

- Open-source or lightweight tools like MLflow are suitable for experimentation and learning AI safety practices.

SMB

- Aporia, Arize AI, and Arthur AI provide safety evaluation, monitoring, and fairness features suitable for small teams.

Mid-Market

- H2O.ai AI Cloud and DataRobot offer advanced governance, reporting, and multi-model management for mid-sized organizations.

Enterprise

- Fiddler AI, IBM Watson OpenScale, and Microsoft Responsible AI deliver enterprise-grade safety, compliance, and audit-ready capabilities.

Budget vs Premium

- Open-source frameworks reduce costs but require technical setup; premium platforms provide robust enterprise compliance and monitoring.

Feature Depth vs Ease of Use

- Developer-focused tools provide deeper control; enterprise tools prioritize usability and cross-team collaboration.

Integrations & Scalability

- Enterprise platforms scale across multiple models and integrate with ML pipelines, data systems, and workflow tools.

Security & Compliance Needs

- High-risk or regulated industries require platforms with strong access control, audit logs, and compliance reporting.

Frequently Asked Questions (FAQs)

1. What are AI safety tools?

AI safety tools monitor, evaluate, and mitigate risks in AI models to ensure ethical, robust, and compliant deployments.

2. Do these tools detect bias in AI models?

Yes, bias detection and fairness evaluation are core functionalities of most AI safety platforms.

3. Can small teams use these tools?

Open-source and lightweight tools like MLflow can be suitable for small teams or solo practitioners.

4. How do these tools support compliance?

They provide reporting, audit trails, and monitoring features aligned with industry regulations and internal policies.

5. Can these tools monitor multiple AI models simultaneously?

Yes, multi-model monitoring is common in enterprise-focused AI safety platforms.

6. Are cloud and on-prem deployments supported?

Many platforms offer cloud, hybrid, or self-hosted deployment options.

7. Do these tools integrate with ML frameworks?

Yes, integration with frameworks such as TensorFlow and PyTorch, and with enterprise ML pipelines, is standard.

8. Can AI safety tools reduce operational risk?

Yes, by monitoring model performance, bias, and compliance, they mitigate AI-related risks.
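As one illustration of the monitoring mentioned in this answer, a drift check compares the distribution of a model input or score in production against a baseline. The sketch below uses the population stability index (PSI), a widely used drift statistic; the bin edges, the sample values, and the function names are assumptions for illustration and do not reflect how any specific platform computes drift.

```python
import bisect
import math
from typing import List, Sequence


def bin_fractions(
    values: Sequence[float], edges: Sequence[float], floor: float = 1e-6
) -> List[float]:
    """Fraction of values in each bin defined by sorted edges; empty bins get a small floor."""
    if len(values) == 0:
        raise ValueError("at least one value is required")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1
    # The floor keeps the logarithm in the PSI formula defined for empty bins.
    return [max(c / len(values), floor) for c in counts]


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], edges: Sequence[float]
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_fractions(baseline, edges)
    actual = bin_fractions(current, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


# Hypothetical model scores at training time and in production.
edges = [0.25, 0.5, 0.75]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
production_scores = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.7, 0.95]

print(population_stability_index(baseline_scores, baseline_scores, edges))
print(population_stability_index(baseline_scores, production_scores, edges))
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift, but the right alert threshold depends on the model and should be tuned per deployment.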

9. How steep is the learning curve?

Developer-oriented tools require technical expertise, while enterprise platforms offer easier-to-use interfaces.

10. What alternatives exist?

Manual audits and internal governance processes are alternatives, though less scalable and efficient.

Conclusion

AI Safety & Evaluation Tools are crucial for organizations deploying AI responsibly. They provide real-time monitoring, bias detection, explainability, and compliance reporting, enabling safe and ethical AI adoption. Selecting the right tool depends on the scale of AI usage, regulatory requirements, and organizational priorities. Small teams may benefit from open-source tools, while enterprises require comprehensive platforms for audit-ready governance and cross-team collaboration. A careful evaluation and pilot implementation can help organizations mitigate risk, ensure fairness, and maintain trust in AI-driven decision-making.
