Introduction

RAG (Retrieval-Augmented Generation) tooling refers to platforms and frameworks that combine traditional language model generation with external knowledge retrieval. These tools enable AI systems to provide accurate, contextually relevant outputs by fetching information from documents, databases, or APIs before generating responses. RAG bridges the gap between static model knowledge and dynamic, up-to-date information, making it essential for enterprise AI, customer support, and research applications.
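
The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not how any particular tool implements it: the `DOCS` knowledge base, the token-overlap scorer, and the prompt wording are all hypothetical stand-ins, and the final call to a language model is left out.

```python
import re
from collections import Counter

# Hypothetical mini knowledge base; in practice this would be documents,
# a database, or an API.
DOCS = {
    "refunds": "Refunds are issued within 14 days of a return request.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "All devices carry a two year warranty.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query, docs, k=1):
    """Rank documents by how many query tokens they share (toy scorer)."""
    query_counts = Counter(tokenize(query))
    scored = []
    for doc_id, text in docs.items():
        overlap = sum((query_counts & Counter(tokenize(text))).values())
        scored.append((overlap, doc_id))
    # Highest overlap first; ties broken by document id for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for overlap, doc_id in scored[:k] if overlap > 0]


def build_prompt(query, docs, doc_ids):
    """Place the retrieved passages, tagged with source ids, ahead of the question."""
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the context below and cite the source id.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


query = "How long does shipping take?"
hits = retrieve(query, DOCS)
prompt = build_prompt(query, DOCS, hits)
print(prompt)
```

The prompt, rather than the bare question, is what would be sent to the model. Keeping the source ids in the context is what makes the answer traceable back to a document.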

The relevance of RAG tooling has grown as businesses increasingly rely on AI to deliver precise answers in real-time contexts. These tools are critical for reducing hallucinations in LLMs, enhancing answer quality, and providing traceable outputs for compliance and verification purposes.

Real-world use cases include:

- Enterprise knowledge management and internal documentation queries

- Customer support automation with accurate and context-aware responses

- Research assistance in academia or corporate R&D

- Generating data-driven content for business intelligence and reports

- Question-answering over large document corpora

Key evaluation criteria for buyers:

- Retrieval capabilities (vector search, embeddings)

- Generation quality and LLM integration

- Scalability and performance

- Integration with internal knowledge bases

- Security and compliance

- Explainability and traceability

- API and workflow support

- Ease of deployment

- Monitoring and logging

- Cost and pricing flexibility

Best for: Enterprises, research teams, AI product developers, knowledge workers

Not ideal for: Teams with simple or single-source AI applications that do not require retrieval-augmented outputs

Key Trends in RAG Tooling

- Growing adoption of vector databases for efficient retrieval

- Enhanced model-agnostic RAG frameworks supporting multiple LLMs

- Real-time retrieval for dynamic and up-to-date content generation

- Explainable retrieval logs and output traceability

- Integration with enterprise knowledge management systems

- Deployment flexibility: cloud, on-prem, hybrid

- Automated evaluation and safety checks to reduce hallucinations

- API-first approaches for seamless integration into existing pipelines

- Multi-modal RAG combining text, images, and structured data

- Subscription and usage-based pricing models

How We Selected These Tools (Methodology)

- Market adoption and recognition among AI teams and enterprises

- Feature completeness across retrieval, generation, and monitoring

- Reliability and performance in production-scale deployments

- Security posture and compliance with data privacy standards

- Ecosystem integrations with vector databases, LLMs, and workflows

- Customer fit across different organization sizes and domains

- Ease of deployment and operational overhead

- Documentation, onboarding, and community support

- Continuous development and innovation in retrieval and generation

- Alignment with responsible AI and audit-ready outputs

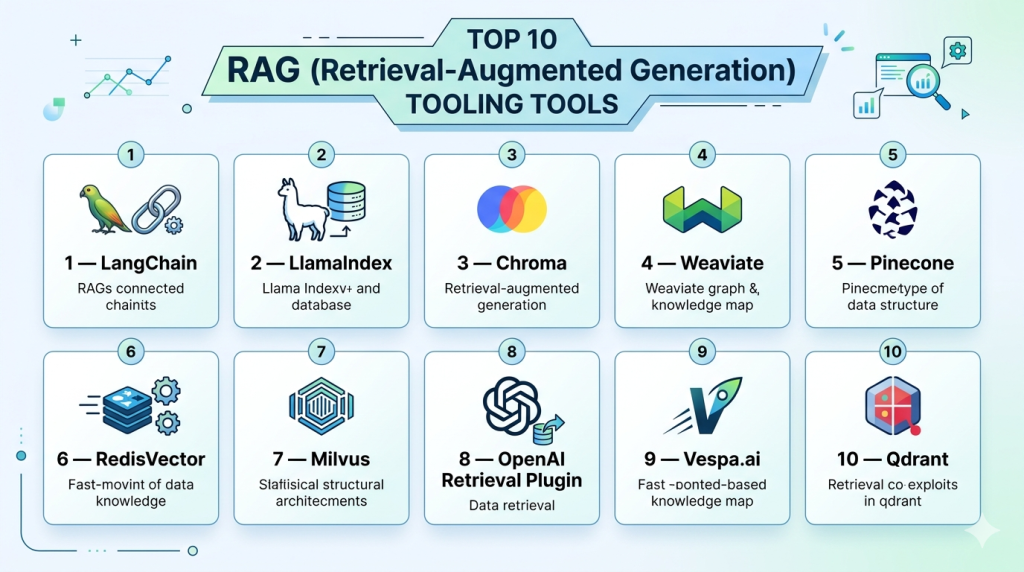

Top 10 RAG (Retrieval-Augmented Generation) Tools

#1 — LangChain

Short description: LangChain is a framework for building applications powered by language models with retrieval-augmented capabilities. It is widely used for integrating LLMs with external knowledge sources.

Key Features

- Modular framework for LLM integration

- Support for multiple vector databases

- Prompt management and chaining

- Retrieval-augmented pipelines

- Streaming and asynchronous operations

- Extensive examples and templates

Pros

- Highly flexible and customizable

- Strong developer community

Cons

- Requires coding expertise

- Can be complex for beginners

Platforms / Deployment

- Web / Linux / macOS

- Self-hosted / Cloud

Security & Compliance

- Varies / N/A

Integrations & Ecosystem

- OpenAI, Hugging Face, Pinecone, Weaviate

- APIs for custom retrieval

- Connector support for document sources

Support & Community

- Active open-source community

- Extensive documentation and tutorials

#2 — LlamaIndex

Short description: LlamaIndex simplifies the construction of RAG pipelines, enabling developers to query and index diverse data sources with LLMs efficiently.

Key Features

- Indexing and retrieval over documents

- LLM-agnostic integration

- Query interface for structured and unstructured data

- Storage connectors for cloud and local data

- Extensible with custom logic

Pros

- Fast setup for RAG pipelines

- Supports multiple data types

Cons

- Advanced customization requires programming

- Limited pre-built enterprise connectors

Platforms / Deployment

- Web / Linux / macOS

- Self-hosted / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- OpenAI, Cohere, Hugging Face

- Vector stores: Pinecone, Weaviate, FAISS

Support & Community

- Active GitHub community

- Tutorials and documentation available
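
Indexing usually starts by splitting documents into overlapping chunks so that each retrievable unit fits in a prompt. The sketch below shows one simple way to do that with word-based windows. It is library-independent and is not LlamaIndex's API; the function name `chunk_words` and the default sizes are assumptions chosen for illustration.

```python
def chunk_words(text, size=50, overlap=10):
    """Split text into word windows of `size`, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        # Stop once the current window has reached the end of the text.
        if start + size >= len(words):
            break
    return chunks


sample = " ".join(str(i) for i in range(10))
chunks = chunk_words(sample, size=4, overlap=1)
print(chunks)
```

The overlap keeps a sentence that straddles a boundary visible in both neighbouring chunks, at the cost of storing some text twice.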

#3 — Chroma

Short description: Chroma is a vector database and RAG platform optimized for LLM retrieval, allowing fast semantic search across large datasets.

Key Features

- High-performance vector search

- LLM integration for generation

- Multi-source document retrieval

- Real-time indexing and querying

- Scalable architecture

Pros

- Extremely fast retrieval

- Easy integration with RAG frameworks

Cons

- Limited built-in generation features

- Enterprise-grade support varies

Platforms / Deployment

- Linux / macOS

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- LangChain, LlamaIndex, OpenAI APIs

- Pinecone and Weaviate connectors

Support & Community

- Active open-source community

- Documentation available
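
At its core, vector search ranks stored embeddings by their similarity to a query embedding. The following sketch does this by brute force with cosine similarity over a tiny in-memory index. It is not Chroma's API: the `INDEX` contents and three-dimensional vectors are made up, and production vector databases typically rely on approximate nearest-neighbour indexes rather than scanning every vector.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query_vec, index, k=2):
    """Return the ids of the k stored vectors most similar to the query."""
    ranked = sorted(
        index.items(),
        key=lambda item: cosine(query_vec, item[1]),
        reverse=True,
    )
    return [doc_id for doc_id, _ in ranked[:k]]


# Hypothetical embeddings; real ones come from an embedding model and
# have hundreds or thousands of dimensions.
INDEX = {
    "doc_a": [1.0, 0.0, 0.0],
    "doc_b": [0.8, 0.6, 0.0],
    "doc_c": [0.0, 0.0, 1.0],
}

result = top_k([1.0, 0.1, 0.0], INDEX, k=2)
print(result)
```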

#4 — Weaviate

Short description: Weaviate is an open-source vector database with built-in RAG support, providing semantic search and retrieval for AI applications.

Key Features

- Semantic vector search engine

- RAG-ready pipelines

- Multi-modal embeddings

- Cloud-native and scalable

- API-first design

Pros

- Supports large-scale datasets

- Multi-modal data handling

Cons

- Initial setup can be complex

- Self-hosting requires expertise

Platforms / Deployment

- Web / Linux / macOS

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- LangChain, LlamaIndex, Hugging Face

- RESTful and GraphQL APIs

Support & Community

- Documentation and community support

- Active open-source community

#5 — Pinecone

Short description: Pinecone is a managed vector database that simplifies retrieval for RAG applications, focusing on performance and scalability.

Key Features

- Cloud-native vector indexing

- Real-time search and retrieval

- LLM integration for generation

- High availability and scaling

- Multi-language support

Pros

- Fully managed service

- Excellent retrieval performance

Cons

- Cloud-only deployment

- Cost scales with usage

Platforms / Deployment

- Web / Cloud

Security & Compliance

- SOC 2, GDPR; other certifications not publicly stated

Integrations & Ecosystem

- LangChain, LlamaIndex

- APIs and SDKs for Python and Node.js

Support & Community

- Enterprise-grade support

- Documentation and examples

#6 — RedisVector

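Short description: RedisVector refers here to the vector search capability of Redis (available through the RediSearch module in Redis Stack), which stores and queries vector embeddings for RAG tasks, enabling fast retrieval and semantic search.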

Key Features

- Vector similarity search

- In-memory speed for large datasets

- Real-time updates

- LLM integration

- Multi-database support

Pros

- High-speed retrieval

- Reliable performance

Cons

- Requires Redis knowledge

- Limited built-in orchestration

Platforms / Deployment

- Linux / Windows / macOS

- Self-hosted / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- LangChain, LlamaIndex

- Redis modules for pipelines

Support & Community

- Redis community and documentation

#7 — Milvus

Short description: Milvus is an open-source vector database that provides scalable RAG capabilities for semantic search and retrieval in AI applications.

Key Features

- High-performance vector search

- Multi-modal embeddings

- LLM integrations

- Scalable architecture

- Cloud-native and self-hosted options

Pros

- Handles massive datasets

- Open-source flexibility

Cons

- Requires technical expertise

- Monitoring and maintenance overhead

Platforms / Deployment

- Linux / macOS

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- LangChain, LlamaIndex

- Python SDKs and REST API

Support & Community

- Active open-source community

- Documentation available

#8 — OpenAI Retrieval Plugin

Short description: OpenAI provides retrieval plugins that connect LLMs to knowledge bases, enabling RAG-based generation for real-time and accurate responses.

Key Features

- LLM-native retrieval

- Pre-built connectors to external knowledge

- API-driven integration

- Fine-tuning and caching

- Retrieval-augmented generation

Pros

- Seamless with OpenAI models

- Easy deployment

Cons

- Limited to OpenAI ecosystem

- Enterprise support varies

Platforms / Deployment

- Web / Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- LangChain, LlamaIndex

- Knowledge base connectors

Support & Community

- OpenAI support

- Community forums

#9 — Vespa.ai

Short description: Vespa.ai is a search engine and vector platform optimized for RAG workflows, providing real-time retrieval and generation integration.

Key Features

- Semantic search and ranking

- Vector and keyword search

- Real-time updates

- LLM integration

- Scalable architecture

Pros

- Real-time retrieval

- Handles hybrid search use cases

Cons

- Deployment requires expertise

- Limited built-in LLM orchestration

Platforms / Deployment

- Linux / macOS

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- LangChain, LlamaIndex

- RESTful API

Support & Community

- Documentation

- Community support
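
Hybrid search needs some way to merge a keyword ranking with a vector ranking. One common, engine-independent technique is reciprocal rank fusion (RRF), sketched below. This is not Vespa's ranking API; the document ids and the two input rankings are hypothetical, and `k=60` is simply the constant commonly used with RRF.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists: each list adds 1 / (k + rank) to a document's score."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by document id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a keyword engine and a vector index.
keyword_ranking = ["doc_b", "doc_a", "doc_d"]
vector_ranking = ["doc_a", "doc_c", "doc_b"]

fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(fused)
```

Because RRF uses only ranks, it avoids having to normalise keyword scores and vector similarities onto a common scale.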

#10 — Qdrant

Short description: Qdrant is a vector search engine designed for RAG applications, providing high-performance semantic retrieval and embedding management.

Key Features

- Vector similarity search

- Embedding storage and management

- Real-time updates

- LLM integration

- Scalable deployment

Pros

- Fast and efficient retrieval

- Open-source flexibility

Cons

- Self-hosting requires expertise

- Limited built-in generation

Platforms / Deployment

- Linux / macOS

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

- LangChain, LlamaIndex

- Python SDK and REST API

Support & Community

- Open-source community

- Documentation available

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| LangChain | LLM integration | Web/Linux/macOS | Self-hosted/Cloud | Modular pipelines | N/A |
| LlamaIndex | Data indexing & querying | Web/Linux/macOS | Self-hosted/Cloud | Multi-source query | N/A |
| Chroma | High-speed vector search | Linux/macOS | Cloud/Self-hosted | Fast retrieval | N/A |
| Weaviate | Semantic search | Web/Linux/macOS | Cloud/Self-hosted | Multi-modal embeddings | N/A |
| Pinecone | Managed vector DB | Web | Cloud | Scalable vector search | N/A |
| RedisVector | In-memory vector DB | Linux/Windows/macOS | Cloud/Self-hosted | High-speed retrieval | N/A |
| Milvus | Large-scale vector DB | Linux/macOS | Cloud/Self-hosted | Scalable architecture | N/A |
| OpenAI Retrieval Plugin | OpenAI model retrieval | Web | Cloud | Seamless OpenAI integration | N/A |
| Vespa.ai | Real-time retrieval & ranking | Linux/macOS | Cloud/Self-hosted | Hybrid search | N/A |
| Qdrant | Vector search & embeddings | Linux/macOS | Cloud/Self-hosted | Fast semantic search | N/A |

Evaluation & Scoring of RAG Tooling

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 7 | 9 | 8 | 8 | 7 | 8 | 8.15 |
| LlamaIndex | 8 | 7 | 8 | 8 | 8 | 7 | 8 | 7.75 |
| Chroma | 8 | 7 | 8 | 8 | 9 | 7 | 8 | 7.85 |
| Weaviate | 8 | 7 | 8 | 8 | 8 | 7 | 8 | 7.75 |
| Pinecone | 9 | 8 | 8 | 8 | 9 | 8 | 8 | 8.35 |
| RedisVector | 8 | 7 | 7 | 8 | 9 | 7 | 8 | 7.70 |
| Milvus | 8 | 7 | 8 | 8 | 9 | 7 | 8 | 7.85 |
| OpenAI Retrieval Plugin | 8 | 8 | 7 | 8 | 8 | 7 | 8 | 7.75 |
| Vespa.ai | 8 | 7 | 7 | 8 | 9 | 7 | 8 | 7.70 |
| Qdrant | 8 | 7 | 7 | 8 | 9 | 7 | 8 | 7.70 |

Interpretation: Scores reflect a comparative evaluation across core features, integrations, performance, and usability. Higher totals indicate stronger overall capabilities, but the ideal choice depends on specific use cases and deployment preferences.
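
For readers who want to re-weight the criteria for their own priorities, the weighted total is just the sum of each score multiplied by its column weight. A small sketch, using the percentages from the table header and the LangChain row as input (the dictionary keys are illustrative names, not part of any tool):

```python
# Column weights in percent, taken from the scoring table header.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}


def weighted_total(scores, weights=WEIGHTS):
    """Combine per-criterion scores (0-10) into a single 0-10 weighted total."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100 percent")
    # Work in integer percent and divide once to limit floating-point drift.
    return sum(scores[name] * pct for name, pct in weights.items()) / 100


langchain = {
    "core": 9,
    "ease": 7,
    "integrations": 9,
    "security": 8,
    "performance": 8,
    "support": 7,
    "value": 8,
}

total = weighted_total(langchain)
print(total)  # 8.15
```

Changing the values in `WEIGHTS` (while keeping the sum at 100) shows how sensitive the ranking is to the chosen priorities, since most tools score within a point of one another.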

Which RAG Tool Is Right for You?

Solo / Freelancer

- Lightweight frameworks like LlamaIndex or LangChain are suitable for experimentation and personal projects.

SMB

- Tools like Chroma, Weaviate, and Pinecone offer managed or hosted options that scale and integrate easily for small teams.

Mid-Market

- Milvus, RedisVector, and Qdrant support larger datasets and multi-modal RAG applications, making them suitable for mid-sized enterprises.

Enterprise

- LangChain combined with Pinecone or Weaviate provides enterprise-grade retrieval, monitoring, and multi-source integration.

Budget vs Premium

- Open-source tools reduce costs but require setup; premium managed services provide faster deployment and SLA guarantees.

Feature Depth vs Ease of Use

- Open-source tools offer deep customization, while cloud-native platforms prioritize ease of integration and speed.

Integrations & Scalability

- Select platforms that can connect to existing LLMs, vector stores, and enterprise workflows.

Security & Compliance Needs

- Enterprises should prioritize tools with secure access controls, logging, and cloud security certifications.

Frequently Asked Questions (FAQs)

1. What is RAG tooling?

RAG tooling integrates retrieval mechanisms with LLMs to enhance AI responses with relevant external data.

2. Can these tools handle multiple LLMs?

Yes, most frameworks are LLM-agnostic and support OpenAI, Hugging Face, or custom models.

3. Are open-source tools suitable for production?

Yes, but they may require more setup and monitoring compared to managed services.

4. How do these tools reduce AI hallucinations?

By retrieving relevant documents before generation, RAG tools reduce reliance on model memory alone.

5. Do they integrate with knowledge bases?

Yes, connectors exist for vector databases, SQL, NoSQL, and document stores.

6. Is real-time retrieval possible?

Most managed platforms support real-time or near-real-time retrieval for interactive applications.

7. How steep is the learning curve?

Open-source frameworks require coding skills; managed services are more user-friendly.

8. Can these tools scale to enterprise datasets?

Yes, vector databases like Pinecone, Milvus, and Weaviate handle millions of embeddings efficiently.

9. Are these tools secure?

Security varies; cloud-managed services typically provide enterprise-grade protections.

10. What alternatives exist?

Alternatives include using an LLM without retrieval or embedding static knowledge into the model itself, though both tend to be less accurate when answers depend on current or proprietary data.

Conclusion

RAG tooling is essential for organizations looking to combine LLMs with real-world data sources, enhancing accuracy, context, and traceability. Selecting the right tool depends on scale, integration requirements, and technical expertise. Open-source options suit developers and small teams, while managed services provide enterprise-grade performance, scalability, and support. By piloting and integrating RAG frameworks into existing AI workflows, organizations can significantly improve knowledge retrieval, reduce hallucinations, and maintain compliance and audit readiness.
