Introduction

Data quality tools are specialized software solutions designed to identify, understand, and correct flaws in datasets. These tools ensure that information is accurate, complete, consistent, and reliable for business operations. In the modern data-driven landscape, high-quality data is the foundational element required for successful artificial intelligence (AI) implementations, machine learning models, and complex analytics. Without robust data quality, organizations face “garbage in, garbage out” scenarios where strategic decisions are based on flawed insights.

As organizations scale their digital infrastructure, data is often scattered across hybrid-cloud environments, legacy systems, and real-time streams. Data quality software automates the process of profiling, cleansing, and monitoring this information at scale. It moves beyond simple error-checking to encompass data observability, identifying anomalies before they impact downstream applications.
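To make "profiling" concrete, here is a minimal sketch of what a profiler computes for a single column: row count, missing values, distinct values, and the most frequent value. The function name `profile_column` and the sample records are hypothetical and chosen for illustration; commercial tools compute far richer statistics over much larger datasets.

```python
from collections import Counter

def profile_column(rows, column):
    """Summarize one column: row count, missing values, distinct values, top value."""
    values = [row.get(column) for row in rows]
    # Treat both absent keys (None) and empty strings as missing.
    present = [v for v in values if v not in (None, "")]
    counts = Counter(present)
    return {
        "rows": len(values),
        "missing": len(values) - len(present),
        "distinct": len(counts),
        "most_common": counts.most_common(1)[0][0] if counts else None,
    }

# Hypothetical sample records, for illustration only.
customers = [
    {"id": 1, "country": "US"},
    {"id": 2, "country": "US"},
    {"id": 3, "country": ""},
    {"id": 4, "country": "DE"},
    {"id": 5},
]

country_profile = profile_column(customers, "country")
print(country_profile)
```

A summary like this is typically the starting point for deciding which cleansing rules and monitors a dataset needs.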

Real-world use cases:

- Customer 360 Initiatives: Consolidating customer data from CRM, support, and billing systems to create a single, accurate view.

- Compliance & Governance: Ensuring data meets regulatory standards like GDPR or CCPA by identifying sensitive information and maintaining accuracy.

- AI/ML Pipeline Readiness: Cleaning and labeling training data to ensure machine learning models produce unbiased and precise results.

- Supply Chain Optimization: Rectifying inventory and logistics data to prevent shipping delays and inventory stockouts.

- Financial Reporting: Validating transaction data to ensure financial statements are error-free and audit-ready.

Evaluation criteria for buyers:

- Profiling Depth: The ability to automatically scan data to discover patterns, distributions, and anomalies.

- Cleansing Automation: Features for standardized formatting, deduplication, and error correction.

- Real-time Monitoring: The capacity to alert teams to quality drops as data flows through pipelines.

- Ease of Integration: Support for modern data stacks including Snowflake, Databricks, and various SQL/NoSQL databases.

- User Experience: Accessibility for “citizen data scientists” and business analysts via low-code or no-code interfaces.

- Rule Management: Flexibility in defining and enforcing custom business rules for data validation.

- Data Observability: Advanced features that track data lineage and health over time.

- Scalability: Performance levels when processing multi-terabyte or petabyte-scale datasets.

- Automation via AI: Use of machine learning to suggest fixes or predict quality issues.

- Deployment Flexibility: Support for on-premises, cloud-native, or multi-cloud architectures.

Best for: Enterprise data teams, Chief Data Officers (CDOs), and analytics departments managing high-volume data pipelines that feed critical business decisions.

Not ideal for: Small businesses with static, low-volume spreadsheets that can be managed manually, or teams looking for basic data entry validation without broader integration.

Key Trends in Data Quality Software

- Proactive Data Observability: Moving from “find and fix” to “predict and prevent,” modern tools use AI to monitor data health and alert teams to schema changes or volume anomalies before they break reports.

- Shift-Left Data Quality: Organizations are increasingly integrating quality checks earlier in the data lifecycle (during the ingestion phase) rather than waiting for errors to surface in downstream dashboards.

- AI-Augmented Data Profiling: Generative AI is being utilized to automatically suggest data quality rules and documentation, significantly reducing manual setup time.

- Data Contracts: The adoption of formal agreements between data producers and consumers, enforced by quality tools to ensure data arrives in the expected format.

- Self-Healing Data Pipelines: Systems are beginning to automate the correction of common errors (like formatting or missing values) without human intervention.

- Governance-Integrated Quality: Deep integration between data catalogs and quality tools, allowing users to see “quality scores” directly within their search for data assets.

- Real-time Stream Quality: As businesses move toward event-driven architectures, tools are evolving to validate data as it moves through Kafka or Spark streams.

- Focus on Data Ethics: Quality tools are incorporating checks for bias and representation to ensure AI models are ethical and fair.
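The data contract and shift-left trends above can be sketched together as a check that runs at ingestion time. This is an illustrative example only: the contract format (field name mapped to a Python type), the `ORDER_CONTRACT` fields, and the function names are assumptions, not the format of any particular product.

```python
# Hypothetical contract: field name -> required Python type.
ORDER_CONTRACT = {"order_id": int, "amount": float, "currency": str}

def contract_violations(record, contract):
    """Return a list of human-readable violations for one record."""
    problems = []
    for field, expected_type in contract.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    for field in record:
        if field not in contract:
            problems.append(f"unexpected field: {field}")
    return problems

def ingest(records, contract):
    """Split a batch into accepted records and rejected (record, problems) pairs."""
    accepted, rejected = [], []
    for record in records:
        problems = contract_violations(record, contract)
        if problems:
            rejected.append((record, problems))
        else:
            accepted.append(record)
    return accepted, rejected

batch = [
    {"order_id": 1, "amount": 19.99, "currency": "USD"},
    {"order_id": "2", "amount": 5.0, "currency": "EUR"},  # wrong type for order_id
    {"order_id": 3, "amount": 7.5},                        # currency is missing
]
accepted, rejected = ingest(batch, ORDER_CONTRACT)
for record, problems in rejected:
    print(record, problems)
```

Rejecting records at the boundary keeps malformed data out of the warehouse, which is the core idea behind checking quality "left" of the dashboard.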

How We Selected These Tools (Methodology)

To determine the most effective data quality solutions, we applied a standardized evaluation methodology focused on enterprise performance and future-readiness:

- Market Adoption: We prioritized tools widely used by Fortune 500 companies and leaders in the data space.

- Breadth of Capabilities: Each selected tool had to cover the core pillars of profiling, cleansing, and monitoring.

- Modern Infrastructure Support: We evaluated how well each tool handles cloud-native environments and modern data warehouses.

- Security & Compliance Frameworks: We weighted selection toward tools offering robust administrative controls and data privacy protections.

- Technical Innovation: We favored tools that have integrated AI and machine learning into their core quality engines.

- Community and Ecosystem: We analyzed the strength of documentation, third-party integrations, and user community size.

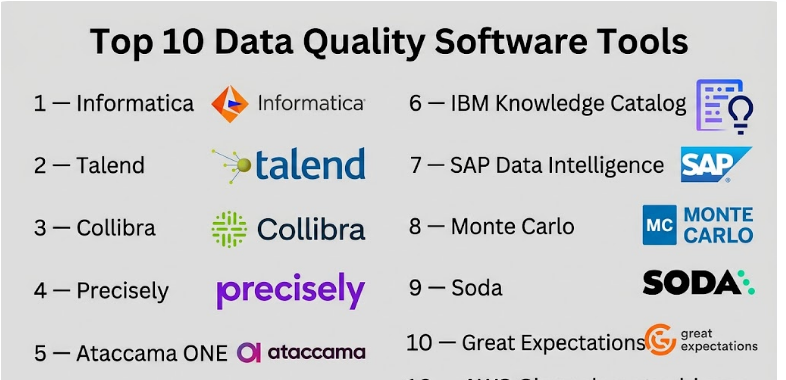

Top 10 Data Quality Software Tools

#1 — Informatica Data Quality

Short description: A flagship component of the Informatica Intelligent Data Management Cloud, this tool offers enterprise-grade profiling, cleansing, and monitoring for complex global organizations.

Key Features

- CLAIRE AI Engine: Uses metadata-driven AI to automate data discovery and suggest quality rules.

- Universal Connectivity: Broad support for cloud data warehouses, traditional databases, and mainframes.

- Rules Engine: A powerful interface for creating reusable quality rules that can be applied across the entire organization.

- Address Validation: Specialized tools for verifying and standardizing global address data.

- Scorecards and Dashboards: Visual representations of data health for business stakeholders.

- Exception Management: Automated workflows to route data errors to the correct owners for manual review.

Pros

- Deeply integrated with a broader data management ecosystem (MDM, Catalog, Governance).

- Highly scalable, capable of handling the most demanding enterprise workloads.

Cons

- High cost of ownership, often requiring specialized consultants for setup.

- Complex interface that may be overwhelming for smaller teams.

Platforms / Deployment

- Web / Windows

- Cloud / Hybrid

Security & Compliance

- SSO/SAML, MFA, RBAC.

- SOC 2, ISO 27001, HIPAA (Varies by region/tier).

Integrations & Ecosystem

Informatica is designed to be the central pillar of an enterprise data strategy.

- Snowflake / Databricks

- AWS / Azure / Google Cloud

- Salesforce

- SAP

Support & Community

Comprehensive enterprise support, a global network of certified partners, and an extensive “Informatica University” training portal.

#2 — Talend Data Fabric

Short description: Now part of Qlik, Talend Data Fabric provides an integrated suite for data integration and quality, focusing on “Data Trust” scores to help users gauge information reliability.

Key Features

- Talend Trust Score: Automatically assesses data for validity, completeness, and popularity.

- Self-Service Data Preparation: A browser-based interface allowing business users to clean data without writing code.

- Machine Learning Deduplication: Advanced algorithms to find and merge duplicate records.

- Standardization Components: Extensive libraries for transforming and masking data.

- Real-time Quality Checks: Ability to run quality gates during the ETL/ELT process.

- Collaborative Curation: Tools for teams to work together on resolving data conflicts.

Pros

- Excellent balance between developer-centric power and business-user accessibility.

- Unified platform reduces the need for multiple disjointed tools.

Cons

- Integration with Qlik’s ecosystem is ongoing, which may lead to roadmap shifts.

- Resource-intensive for on-premises deployments.

Platforms / Deployment

- Web / Windows / macOS / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

- RBAC, Encryption at rest and in transit.

- SOC 2, GDPR compliance features.

Integrations & Ecosystem

Broad connectivity through its open-source roots and modern cloud connectors.

- Snowflake

- Amazon S3 / Redshift

- Microsoft Azure Data Lake

- Kafka

Support & Community

Strong community support via Talend Forge and a robust set of professional services now enhanced by Qlik’s global reach.

#3 — Collibra Data Quality & Observability

Short description: A modern, proactive data quality solution that leverages predictive algorithms to detect anomalies and ensure data health across the enterprise.

Key Features

- Predictive Quality Rules: Uses machine learning to auto-generate rules based on historical data patterns.

- Data Observability: Monitors for pipeline breaks, schema changes, and volume fluctuations.

- No-code Rule Builder: Allows business users to define quality checks through a visual interface.

- Root Cause Analysis: Quickly identifies where in the pipeline a data quality issue originated.

- Data Lineage Integration: Visually maps how quality issues impact downstream reports.

- Enterprise Scale: Built to handle high-frequency data streams and massive datasets.

Pros

- Extremely strong focus on the “Observability” aspect of data quality.

- Deeply integrated with Collibra’s leading Data Governance platform.

Cons

- Primarily targeted at large enterprises; price may be high for mid-market users.

- Requires Collibra Data Intelligence Platform for maximum value.

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- SSO, SAML 2.0, MFA, RBAC.

- SOC 2 Type II, ISO 27001.

Integrations & Ecosystem

Strongly aligned with the modern cloud data stack.

- Snowflake

- Databricks

- Google BigQuery

- Tableau / Power BI

Support & Community

High-touch enterprise support, Collibra University, and a specialized community for data governance professionals.

#4 — Precisely Spectrum Quality

Short description: Precisely focuses on “Data Integrity,” offering deep capabilities in data profiling, standardization, and enrichment, particularly for location-based data.

Key Features

- Advanced Profiling: Deep technical and semantic discovery of data assets.

- Global Address Validation: Industry-leading tools for hyper-accurate location data.

- Data Enrichment: Ability to append third-party data (demographics, weather, points of interest).

- Entity Resolution: Sophisticated matching to create a “Golden Record” for customers or products.

- Browser-Based Stewardship: Dedicated portal for data stewards to manage quality tasks.

- High-Volume Processing: Optimized for large batch jobs and real-time transaction processing.
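As a rough illustration of the "Golden Record" idea, the sketch below standardizes records, groups them by a normalized email address, and keeps the first non-empty value for each field. It is a deliberately simplified, exact-match example with hypothetical function names and sample data; it does not represent Precisely's matching logic, and production entity resolution usually relies on fuzzy or probabilistic matching.

```python
from collections import defaultdict

def standardize(record):
    """Trim whitespace on every field and lowercase the email."""
    cleaned = {field: value.strip() for field, value in record.items()}
    cleaned["email"] = cleaned.get("email", "").lower()
    return cleaned

def golden_records(records):
    """Group records by email and keep the first non-empty value per field."""
    groups = defaultdict(list)
    for record in map(standardize, records):
        groups[record["email"]].append(record)
    merged = []
    for group in groups.values():
        golden = {}
        for record in group:
            for field, value in record.items():
                # Only fill a field that has no value yet.
                if value and not golden.get(field):
                    golden[field] = value
        merged.append(golden)
    return merged

# Hypothetical customer records; all values are strings.
crm_rows = [
    {"name": "Ana Lopez", "email": "Ana.Lopez@Example.com ", "phone": ""},
    {"name": "A. Lopez", "email": "ana.lopez@example.com", "phone": "555-0100"},
    {"name": "Bo Chen", "email": "bo@example.com", "phone": "555-0199"},
]

golden = golden_records(crm_rows)
print(golden)
```

The first two rows collapse into one record that combines the name from the first source with the phone number from the second.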

Pros

- The best-in-class option for organizations heavily reliant on location and geospatial data.

- Very strong entity resolution capabilities for master data management.

Cons

- Traditional interface can feel dated compared to newer cloud-native competitors.

- Modular pricing can become complex as more features are added.

Platforms / Deployment

- Windows / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

- MFA, RBAC.

- Not publicly stated.

Integrations & Ecosystem

Strong heritage in mainframe and RDBMS systems with growing cloud support.

- IBM Mainframe / iSeries

- SAP

- Oracle

- AWS / Azure

Support & Community

Excellent professional services and a long history of supporting complex enterprise IT environments.

#5 — Ataccama ONE

Short description: Ataccama ONE is an AI-powered data management platform that unifies data quality, governance, and master data management into a single, automated fabric.

Key Features

- Automated Data Profiling: Instant discovery of data types, masks, and quality issues.

- AI Data Quality Rules: Suggests and creates rules based on data behavior analysis.

- Data Catalog Integration: Quality scores are automatically visible within the data catalog.

- Self-Healing Capabilities: Automated workflows for basic data cleansing and enrichment.

- Edge Computing Support: Can run quality checks locally where the data resides to save on egress fees.

- Master Data Management: Built-in features for creating and managing golden records.

Pros

- High degree of automation significantly reduces manual workload for data teams.

- Excellent user interface that is modern and intuitive for both IT and business users.

Cons

- Full platform deployment can be a significant undertaking.

- Smaller community and third-party plugin ecosystem compared to Informatica.

Platforms / Deployment

- Web

- Cloud / Self-hosted / Hybrid

Security & Compliance

- SSO, MFA, Encryption.

- SOC 2, GDPR ready.

Integrations & Ecosystem

Focused on being a flexible “data fabric” that connects to diverse sources.

- Snowflake

- Microsoft Azure Data Lake

- Hadoop / Spark

- Tableau

Support & Community

Responsive technical support and a growing set of online documentation and training modules.

#6 — IBM Knowledge Catalog

Short description: Part of the IBM Cloud Pak for Data, this tool provides integrated data quality, governance, and cataloging features powered by IBM Watson.

Key Features

- Automated Metadata Discovery: Watson-powered discovery of data assets and their quality.

- Data Quality Scorecards: High-level views of data health across different departments.

- Intelligent Data Mapping: Automatically maps source data to a centralized business glossary.

- Privacy and Protection: Automated masking of sensitive data during quality checks.

- Data Lineage: Tracks the flow of data to identify where quality issues are introduced.

- Collaboration Tools: Integrated workflows for data stewards and analysts.

Pros

- Ideal for organizations already committed to the IBM Cloud or Cloud Pak for Data ecosystem.

- Strong AI capabilities through the integration of IBM Watson.

Cons

- Can be difficult to navigate if not using the broader IBM software suite.

- Licensing and packaging can be confusing for new customers.

Platforms / Deployment

- Web

- Cloud / Hybrid

Security & Compliance

- Enterprise-grade IAM, SSO, RBAC.

- SOC 2, ISO 27001, HIPAA, GDPR.

Integrations & Ecosystem

Deeply integrated with IBM’s hardware and software portfolio.

- IBM Db2

- Netezza

- Hadoop

- AWS / Azure / Google Cloud

Support & Community

World-class enterprise support and a massive global network of IBM-certified consultants.

#7 — SAP Data Intelligence

Short description: SAP’s premier tool for data integration and quality, specifically optimized for organizations running on SAP S/4HANA and other SAP business applications.

Key Features

- Integrated Quality Engines: Built-in profiling and cleansing for SAP and non-SAP data.

- Metadata Management: Centralized repository for all business and technical metadata.

- Machine Learning Integration: Build and deploy ML models that use high-quality data.

- Data Pipeline Orchestration: Combines quality checks with complex data movement tasks.

- Business Glossary: Links technical data fields to business terminology for clarity.

- Mass Data Processing: Optimized for high-throughput data processing in SAP environments.

Pros

- Unbeatable integration for companies that rely on SAP as their primary ERP system.

- Strong support for handling both structured and unstructured data.

Cons

- High cost and complexity for organizations not already using SAP.

- Steep learning curve for developers unfamiliar with the SAP ecosystem.

Platforms / Deployment

- Web

- Cloud / Hybrid

Security & Compliance

- SAP Cloud Platform security, SSO, MFA.

- Varies by product and region (standard SAP compliance certifications apply).

Integrations & Ecosystem

Optimized for the SAP world but supports external connections.

- SAP S/4HANA / SAP BW

- Google BigQuery

- Amazon Redshift

- Azure Data Lake

Support & Community

Vast corporate support network and the SAP Community (formerly SCN) provide extensive knowledge.

#8 — Monte Carlo

Short description: A pioneer in “Data Observability,” Monte Carlo focuses on “Data Downtime” by providing end-to-end monitoring and alerting for data quality issues.

Key Features

- Automated Monitoring: Instantly monitors pipelines for schema changes, volume issues, and distribution shifts.

- Incident Management: Integrated workflows for acknowledging and resolving data issues.

- Data Lineage: Automatically maps how data flows from source to dashboard to pinpoint breaks.

- Anomalous Value Detection: Uses machine learning to find outliers in datasets without manual rules.

- Field-Level Lineage: Tracks individual data fields across the entire pipeline.

- Post-Mortems: Tools for analyzing why a data break occurred to prevent future issues.
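To show what a volume check can look like in principle, here is a minimal sketch that flags a daily row count when it deviates strongly from recent history. The z-score approach, the threshold of 3.0, and the sample counts are assumptions made for illustration; this is not Monte Carlo's algorithm, which the vendor does not describe in this article.

```python
from statistics import mean, stdev

def is_volume_anomaly(history, today, threshold=3.0):
    """Flag today's row count if it is more than `threshold` standard
    deviations away from the mean of the historical counts."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # No variation in history: any different count is suspicious.
        return today != mu
    return abs(today - mu) / sigma > threshold

# Hypothetical row counts from the last seven daily loads.
daily_row_counts = [10_120, 9_980, 10_050, 10_200, 9_900, 10_075, 10_010]

normal_day = is_volume_anomaly(daily_row_counts, 10_100)
broken_day = is_volume_anomaly(daily_row_counts, 4_200)
print(normal_day, broken_day)
```

A check like this needs no hand-written business rule, which is why observability tools can start producing alerts soon after they are connected to a warehouse.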

Pros

- Fast setup for organizations that want immediate visibility into data health.

- Does not require manual rule writing to start providing value.

Cons

- Focuses more on observability/monitoring than on “cleansing” or “standardization.”

- Pricing scales based on data volume, which can become expensive for large enterprises.

Platforms / Deployment

- Web

- Cloud

Security & Compliance

- SSO/SAML, SOC 2 Type II compliance.

- GDPR / HIPAA ready.

Integrations & Ecosystem

Purpose-built for the modern cloud data stack.

- Snowflake

- Databricks

- Looker / Tableau

- dbt (Data Build Tool)

Support & Community

Highly responsive support, a strong focus on “Data Engineering” communities, and extensive technical blogs.

#9 — Soda

Short description: An open-source and enterprise data quality platform that allows teams to define, monitor, and resolve data quality issues using a developer-friendly language.

Key Features

- Soda Checks Language (SodaCL): A human-readable, YAML-based language for defining quality checks.

- Soda Cloud: A centralized platform for managing alerts, incidents, and reporting.

- Automated Profiling: Automatically analyzes data structures to suggest initial checks.

- Git-Integrated Workflow: Allows teams to manage data quality rules as code.

- Anomaly Detection: Integrated machine learning to find “silent” data failures.

- Slack/Teams Integration: Real-time alerting for data engineers.

Pros

- Highly developer-friendly; integrates perfectly into modern CI/CD pipelines.

- Flexible pricing with a strong open-source core for smaller projects.

Cons

- Requires some technical knowledge to configure compared to pure “no-code” tools.

- Enterprise reporting features are locked behind the paid Cloud tier.

Platforms / Deployment

- Linux / Windows / macOS (CLI) / Web

- Cloud / Self-hosted / Hybrid

Security & Compliance

- SSO, Encryption.

- Not publicly stated.

Integrations & Ecosystem

Integrates with nearly every tool in the modern data engineering toolkit.

- dbt

- Airflow / Prefect

- Snowflake / BigQuery

- PostgreSQL

Support & Community

Vibrant open-source community, active Slack channel, and professional support for Cloud customers.

#10 — Great Expectations

Short description: A leading open-source tool for validating, documenting, and profiling your data to maintain “Data Integrity” throughout the pipeline.

Key Features

- Expectations: Declarative statements about what your data should look like (e.g., “expect column value to be between 0 and 100”).

- Data Docs: Automatically generates clean, readable documentation about your data quality.

- Automated Profiling: Inspects your data to generate a suite of “expectations” automatically.

- Extensible Library: Hundreds of built-in tests for common data quality scenarios.

- Pipeline Agnostic: Can be integrated into Airflow, Dagster, or custom Python scripts.

- Checkpointing: Allows for quality gates to be placed at any point in a data workflow.
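The idea of a declarative expectation plus a checkpoint can be sketched in plain Python. The functions below are hypothetical stand-ins written for this article and are not the Great Expectations API; the library ships its own built-in expectation for this case, `expect_column_values_to_be_between`, with its own configuration and result format.

```python
def expect_values_between(rows, column, min_value, max_value):
    """Check that every non-null value in `column` lies in [min_value, max_value]."""
    unexpected = [
        row[column]
        for row in rows
        if row.get(column) is not None
        and not (min_value <= row[column] <= max_value)
    ]
    return {"success": not unexpected, "unexpected_values": unexpected}

def checkpoint(results):
    """Quality gate: raise if any expectation failed, so the pipeline stops here."""
    failed = [result for result in results if not result["success"]]
    if failed:
        raise ValueError(f"{len(failed)} expectation(s) failed")

# Hypothetical rows; None stands for a missing value and is ignored by the check.
exam_rows = [{"score": 87}, {"score": 100}, {"score": 140}, {"score": None}]

result = expect_values_between(exam_rows, "score", 0, 100)
print(result)

try:
    checkpoint([result])
except ValueError as error:
    print("pipeline halted:", error)
```

Placing the gate between two pipeline steps means downstream jobs never see the batch that contains the out-of-range value.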

Pros

- The “standard” for data quality in the Python data engineering world.

- Extremely flexible and can be customized for nearly any data source or test.

Cons

- High technical overhead; requires Python proficiency for advanced use cases.

- Enterprise features (GX Cloud) are still maturing compared to established vendors.

Platforms / Deployment

- Linux / macOS / Windows / Web (Cloud)

- Cloud / Self-hosted

Security & Compliance

- Not publicly stated (Open source); GX Cloud provides SSO and standard security.

Integrations & Ecosystem

Broad support across the data science and engineering landscape.

- Spark / Pandas

- SQLAlchemy (Postgres, MySQL, etc.)

- Airflow

- Databricks

Support & Community

A massive community of data engineers, extensive GitHub documentation, and a dedicated Slack community.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| Informatica | Global Enterprises | Web, Windows | Hybrid | CLAIRE AI Engine | 4.4/5 |
| Talend | Integrated ETL/DQ | Web, Windows, Linux | Hybrid | Talend Trust Score | 4.3/5 |
| Collibra | Data Governance | Web | Cloud | Predictive DQ Rules | 4.5/5 |
| Precisely | Location Accuracy | Windows, Linux | Hybrid | Global Address Validation | 4.2/5 |
| Ataccama ONE | Automated Fabric | Web | Hybrid | AI-Powered Self-Healing | 4.6/5 |
| IBM Knowledge Catalog | Watson-driven DQ | Web | Hybrid | Automated Masking | 4.2/5 |
| SAP Data Intelligence | SAP Ecosystems | Web | Hybrid | Native SAP Connectivity | 4.0/5 |
| Monte Carlo | Observability | Web | Cloud | End-to-end Lineage | 4.8/5 |
| Soda | Dev-first Teams | CLI, Web | Hybrid | SodaCL Check Language | N/A |
| Great Expectations | Python Data Pipelines | CLI, Web | Self-hosted | Automated Data Docs | 4.7/5 |

Evaluation & Scoring of Data Quality Tools

This scoring model assesses each tool based on its utility for a modern enterprise data team.

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Informatica | 10 | 4 | 10 | 9 | 10 | 9 | 5 | 8.15 |
| Talend | 9 | 7 | 9 | 8 | 8 | 8 | 7 | 8.10 |
| Collibra | 9 | 6 | 9 | 9 | 8 | 9 | 6 | 8.00 |
| Precisely | 8 | 5 | 8 | 7 | 10 | 8 | 7 | 7.50 |
| Ataccama ONE | 9 | 8 | 8 | 8 | 9 | 7 | 8 | 8.25 |
| IBM Knowledge Catalog | 8 | 6 | 9 | 10 | 8 | 9 | 6 | 7.85 |
| SAP Data Intelligence | 8 | 5 | 8 | 9 | 9 | 8 | 6 | 7.45 |
| Monte Carlo | 7 | 9 | 10 | 9 | 8 | 9 | 7 | 8.25 |
| Soda | 8 | 7 | 9 | 7 | 9 | 7 | 9 | 8.05 |
| Great Expectations | 9 | 5 | 9 | 6 | 9 | 7 | 10 | 8.05 |

Scoring Interpretation:

- Performance & Reliability: High scores (Precisely, Informatica) reflect the ability to handle massive batches without failure.

- Ease of Use: Tools like Monte Carlo score higher here due to their “plug-and-play” observability approach.

- Weighted Total: A score above 8.0 indicates a tool that offers exceptional capabilities for modern data challenges.
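For readers who want to reproduce or re-weight the totals, the calculation is a plain weighted average of the seven criterion scores. The sketch below uses the weights from the table header and the published scores for three of the tools; the dictionary keys and the function name are assumptions made for this example.

```python
# Weights in percent, taken from the column headers of the scoring table.
WEIGHTS = {
    "core": 25,
    "ease": 15,
    "integrations": 15,
    "security": 10,
    "performance": 10,
    "support": 10,
    "value": 15,
}

def weighted_total(scores):
    """Weighted average of 0-10 criterion scores, using percentage weights."""
    assert sum(WEIGHTS.values()) == 100
    return sum(scores[name] * weight for name, weight in WEIGHTS.items()) / 100

# Scores copied from the table rows for Talend, Ataccama ONE, and Soda.
talend = {"core": 9, "ease": 7, "integrations": 9, "security": 8,
          "performance": 8, "support": 8, "value": 7}
ataccama = {"core": 9, "ease": 8, "integrations": 8, "security": 8,
            "performance": 9, "support": 7, "value": 8}
soda = {"core": 8, "ease": 7, "integrations": 9, "security": 7,
        "performance": 9, "support": 7, "value": 9}

for name, scores in [("Talend", talend), ("Ataccama ONE", ataccama), ("Soda", soda)]:
    print(f"{name}: {weighted_total(scores):.2f}")
```

Changing the weights to match your own priorities (for example, raising Security for a regulated industry) can reorder the ranking, so the totals are best read as one reasonable weighting rather than a fixed verdict.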

Which Data Quality Software Tool Is Right for You?

Solo / Freelancer

Individual consultants working with smaller datasets should prioritize Great Expectations or the open-source version of Soda. These tools provide professional-grade validation without the high cost of enterprise licenses.

SMB

Small and mid-sized businesses should look at Talend (for integrated pipelines) or Monte Carlo (if they already have a cloud warehouse and just need observability). These offer a good balance of power and manageable overhead.

Mid-Market

For growing companies with dedicated data teams, Ataccama ONE provides a modern, automated platform that grows with the organization’s needs without requiring an army of consultants.

Enterprise

Large-scale global organizations should choose between Informatica for deep, all-in-one data management or Collibra if their focus is heavily on data governance and compliance.

Budget vs Premium

- Budget: Great Expectations (Open Source), Soda (Free Tier).

- Premium: Informatica, IBM Knowledge Catalog (IBM Cloud Pak for Data), SAP Data Intelligence.

Feature Depth vs Ease of Use

- Technical Depth: Informatica, Great Expectations.

- Ease of Use: Monte Carlo, Ataccama ONE.

Integrations & Scalability

- Highest Scalability: Precisely, Informatica.

- Best Cloud Integrations: Monte Carlo, Soda.

Security & Compliance Needs

Organizations in highly regulated sectors (Finance, Healthcare) should prioritize IBM Knowledge Catalog or Informatica, as they provide the most robust, audit-ready compliance frameworks.

Frequently Asked Questions (FAQs)

- What is the difference between Data Quality and Data Observability?

Data quality traditionally focuses on the “health” of the data itself (accuracy, completeness). Data observability focuses on the health of the entire pipeline, including schema changes and volume anomalies.

- How much does enterprise data quality software typically cost?

Enterprise solutions like Informatica can cost $50,000 to $250,000+ per year. Open-source or mid-market tools may range from free to $20,000 per year depending on volume.

- Can I use these tools with a small data team?

Yes. Tools like Ataccama and Monte Carlo are designed with high automation so a small team can monitor large amounts of data without manual rule creation.

- Is AI actually effective for data quality?

AI is highly effective at “anomaly detection” and suggesting rules, but human expertise is still required to define what “quality” specifically means for a particular business context.

- Do these tools help with GDPR compliance?

Yes, most top-tier tools include PII (Personally Identifiable Information) discovery and masking features to help organizations comply with privacy regulations.

- How do these tools integrate with BI tools like Tableau?

Quality tools often provide “trust signals” or metadata that can be pulled into BI tools to show dashboard users how reliable the underlying data is.

- What is “Self-Healing” data?

This refers to the ability of a tool to automatically correct common errors, such as fixing state abbreviations or filling in missing zip codes from a reference database.

- Can these tools handle unstructured data?

Traditional DQ tools focus on structured data, but modern platforms like SAP Data Intelligence and IBM Knowledge Catalog are increasingly supporting unstructured formats.

- How difficult is it to migrate between data quality tools?

It can be difficult because rules are often defined in vendor-specific languages. Choosing tools that use standard formats like SQL or YAML (like Soda) makes future migrations easier.

- Do I need a data quality tool if I use a modern warehouse like Snowflake?

Snowflake provides basic constraints, but a dedicated DQ tool is still necessary for cross-system validation, complex profiling, and business-level monitoring.
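The “self-healing” behavior described in the FAQ above (fixing state abbreviations and filling in missing zip codes from reference data) can be sketched as a small correction function. The lookup tables here are tiny, hypothetical samples and the function name `heal` is an assumption; a real tool would draw on a maintained reference database and log every automated change for audit.

```python
# Partial, illustrative mapping of state names to postal abbreviations.
STATE_ABBREVIATIONS = {"california": "CA", "new york": "NY", "texas": "TX"}

# Hypothetical reference data: (lowercase city, state abbreviation) -> ZIP code.
ZIP_REFERENCE = {("austin", "TX"): "78701", ("albany", "NY"): "12207"}

def heal(record):
    """Return a corrected copy of the record and the list of fields that changed."""
    fixed = dict(record)
    changes = []

    # Step 1: replace a full state name with its abbreviation when it is known.
    state = fixed.get("state")
    if state:
        normalized = STATE_ABBREVIATIONS.get(state.strip().lower(), state.strip())
        if normalized != state:
            fixed["state"] = normalized
            changes.append("state")

    # Step 2: fill a missing ZIP code from the reference table when possible.
    if not fixed.get("zip"):
        key = (fixed.get("city", "").strip().lower(), fixed.get("state"))
        if key in ZIP_REFERENCE:
            fixed["zip"] = ZIP_REFERENCE[key]
            changes.append("zip")

    return fixed, changes

healed, changed_fields = heal({"city": "Austin", "state": "Texas", "zip": ""})
untouched, no_changes = heal({"city": "Albany", "state": "NY", "zip": "12207"})
print(healed, changed_fields)
print(untouched, no_changes)
```

Returning the list of changed fields alongside the corrected record keeps automated fixes reviewable, which matters when corrections feed compliance or financial reporting.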

Conclusion

Data quality is the silent engine behind successful modern businesses. As we move into an era dominated by AI and automated decision-making, the tools we use to validate our information become as critical as the databases that store it. Whether you choose the deep enterprise capabilities of Informatica, the automated fabric of Ataccama, or the developer-first approach of Great Expectations, the goal remains the same: transforming raw data into a reliable asset.

The most successful teams start by identifying their biggest “data pain points” (broken pipelines, inaccurate customer reports, or compliance risks) and selecting a tool that solves those specific issues while offering a path toward broader data maturity.
