Introduction

Stream processing frameworks are specialized software architectures designed to process continuous flows of data in near real-time. Unlike traditional batch processing, which waits for a complete set of data to be collected before executing a job, stream processing operates on data elements individually or in small “micro-batches” as they arrive. This allows for immediate transformation, aggregation, and analysis, enabling systems to react to events within milliseconds or microseconds. In technical terms, these frameworks manage the complexity of “data-in-motion,” handling issues like out-of-order data, system failures, and state management across distributed clusters.

In the technological landscape, stream processing has transitioned from a specialized requirement for high-frequency trading to a core component of the modern enterprise stack. As organizations move toward event-driven architectures, the ability to process telemetry from billions of IoT devices, monitor live cybersecurity threats, and provide instantaneous customer experiences has become a primary differentiator. Stream processing frameworks provide the “logical plumbing” that allows developers to build complex, low-latency applications that are resilient and scalable.

Real-world use cases:

- Real-time Fraud Prevention: Analyzing credit card swipes against historical patterns to approve or block transactions in under 50ms.

- Predictive Maintenance: Processing sensor data from jet engines or industrial turbines to detect vibration anomalies before hardware failure occurs.

- Dynamic Pricing Engines: Adjusting ride-share or e-commerce prices in real-time based on live demand and inventory levels.

- Ad-Tech Bidding: Executing millions of auctions per second for digital advertising slots as web pages load for users.

- Personalized Content Streams: Updating user recommendations on social media or streaming platforms based on what they clicked three seconds ago.

Evaluation criteria for buyers:

- State Management: The framework’s ability to “remember” information across a stream for complex calculations like “total sales over the last hour.”

- Delivery Guarantees: Support for “exactly-once” semantics to ensure data is neither lost nor processed twice.

- Windowing Capabilities: The sophistication of handling time-based data (tumbling, sliding, or session windows).

- Scalability and Elasticity: How easily the framework can add or remove nodes to handle spikes in data volume.

- Language Support: Availability of APIs for Java, Scala, Python, or SQL.

- Fault Tolerance: The speed and reliability of recovering from node failures without losing the current “state” of the calculation.

- Latency vs. Throughput: The trade-off between how fast a single record is processed versus the total volume handled per second.

- Connector Ecosystem: Ease of connecting to sources like Kafka, Pulsar, and S3.

- Deployment Flexibility: Support for Kubernetes, YARN, or managed cloud environments.

- Backpressure Handling: How the system manages situations where the data arrival rate exceeds the processing capacity.
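To make the state management and windowing criteria concrete, here is a minimal sketch in plain Python, not tied to any framework's API. The function names and the `(event_time_seconds, amount)` record shape are assumptions chosen for illustration. It shows how a tumbling window assigns each event to exactly one bucket, and how a sliding window can place one event in several overlapping buckets.

```python
from collections import defaultdict


def tumbling_window_sums(events, window_size_s):
    """Sum amounts per tumbling window.

    `events` is an iterable of (event_time_seconds, amount) pairs.
    Returns {window_start_seconds: total}; each window covers
    [window_start, window_start + window_size_s).
    """
    totals = defaultdict(float)  # the "state" a framework must remember
    for event_time, amount in events:
        window_start = (event_time // window_size_s) * window_size_s
        totals[window_start] += amount
    return dict(totals)


def sliding_window_starts(event_time, window_size_s, slide_s):
    """Return the start times of every sliding window containing event_time.

    Windows that would start before time 0 are ignored for simplicity.
    """
    start = (event_time // slide_s) * slide_s  # latest window containing the event
    starts = []
    while start > event_time - window_size_s and start >= 0:
        starts.append(start)
        start -= slide_s
    return sorted(starts)
```

A question like "total sales over the last hour" corresponds to `window_size_s=3600`. Real frameworks also have to decide when a window is complete, which is where watermarks and fault-tolerant state come in.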

Best for: Software engineers and architects building high-scale, event-driven applications, real-time data pipelines, and low-latency monitoring systems.

Not ideal for: Simple, infrequent data reports, heavy historical data mining where time-to-insight isn’t critical, or organizations without a dedicated engineering team to manage distributed systems.

Key Trends in Stream Processing Frameworks for 2026 and Beyond

- Streaming SQL Dominance: The barrier to entry is dropping as frameworks prioritize SQL as a first-class citizen, allowing data analysts to write streaming logic without deep Java knowledge.

- Serverless Stream Processing: Frameworks are moving toward “zero-management” models where the underlying infrastructure scales automatically based on the incoming event rate.

- WebAssembly (Wasm) Integration: The rise of Wasm allows developers to write processing logic in any language (Rust, Go, C++) and run it securely and efficiently within the streaming engine.

- AI-Native Pipelines: Modern frameworks are embedding machine learning inference directly into the stream, allowing models to score data points with microsecond latency.

- Unified Batch and Stream: The industry is moving away from “Lambda Architectures” toward unified models where the same code processes both live and historical data.

- Exactly-Once as the Standard: Once a complex configuration task, exactly-once processing is becoming the default setting across all major frameworks.

- Edge-to-Cloud Orchestration: Frameworks are increasingly capable of running lightweight processing on edge devices while seamlessly offloading heavy stateful work to the cloud.

- Self-Healing State: Advanced frameworks are utilizing distributed snapshots and AI-driven recovery to restore state after failures in seconds rather than minutes.

How We Selected These Tools (Methodology)

Our selection of the top 10 stream processing frameworks is based on a rigorous analysis of technical capability and industry adoption. We prioritized:

- Production Provenance: We chose frameworks that have been successfully deployed at “web-scale” by global technology leaders.

- Architectural Modernity: Focus was placed on tools that handle stateful processing and out-of-order data efficiently.

- Developer Productivity: We evaluated the quality of the API, documentation, and local development experience.

- Resilience Signals: Preference was given to frameworks with robust checkpointing and fault-tolerance mechanisms.

- Ecosystem Compatibility: We selected tools that integrate with standard message brokers like Apache Kafka and Apache Pulsar.

- Community Vitality: We analyzed the frequency of updates and the size of the contributor base to ensure long-term viability.

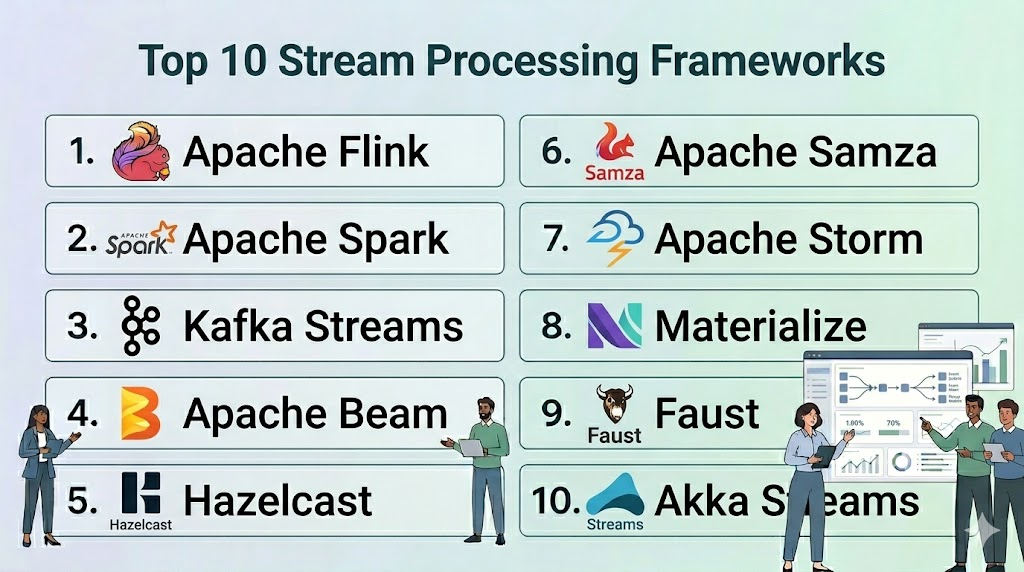

Top 10 Stream Processing Frameworks

#1 — Apache Flink

Short description: A powerful, open-source stream processing framework designed for stateful computations over data streams. It is widely regarded as the most advanced engine for low-latency, high-throughput processing.

Key Features

- Stateful Functions: Built-in support for maintaining complex application state with high consistency.

- Exactly-Once Semantics: Ensures that each event is reflected in the final results exactly once, even during failures.

- Advanced Windowing: Support for event-time processing, watermarks, and sophisticated window types (tumbling, sliding, session).

- Flink SQL: A robust SQL interface for defining streaming jobs without writing traditional code.

- Savepoints: Allows users to stop a job, update the code, and resume from exactly where they left off.

- Native Iteration: Unique ability to handle iterative algorithms directly within the stream.
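The event-time and watermark ideas in the list above can be sketched without Flink itself. The following is a simplified simulation in plain Python, not Flink's API or its exact watermark arithmetic. The function name, the "bounded out-of-orderness" rule (watermark = highest timestamp seen minus a fixed delay), and the policy of dropping late events are all assumptions made for illustration.

```python
from collections import defaultdict


def run_event_time_windows(events, window_size, max_out_of_orderness):
    """Count events per tumbling event-time window, firing on watermarks.

    `events` is a list of event timestamps in ARRIVAL order, which may be
    out of order. Returns (fired, late): `fired` is a list of
    (window_start, count) in firing order, and `late` holds timestamps that
    arrived after their window had already been finalized.
    """
    open_windows = defaultdict(int)
    fired, late = [], []
    watermark = float("-inf")

    for ts in events:
        start = (ts // window_size) * window_size
        if start + window_size <= watermark:
            late.append(ts)  # the window already closed: drop the event
        else:
            open_windows[start] += 1

        # The watermark trails the highest timestamp seen by a fixed delay.
        watermark = max(watermark, ts - max_out_of_orderness)

        # Fire every open window whose end the watermark has passed.
        for w in sorted(open_windows):
            if w + window_size <= watermark:
                fired.append((w, open_windows.pop(w)))
    return fired, late
```

With a window size of 10 and a tolerance of 3, the event with timestamp 9 that arrives after timestamp 12 is still counted in the first window, while an event with timestamp 2 that arrives after timestamp 15 is treated as late.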

Pros

- Unequaled performance for low-latency, stateful applications.

- Comprehensive unified model for both batch and stream processing.

Cons

- High operational complexity; requires significant expertise to manage clusters.

- Steep learning curve for its core Java/Scala APIs.

Platforms / Deployment

- Windows / macOS / Linux

- Kubernetes / YARN / Standalone / Cloud

Security & Compliance

- Kerberos authentication, TLS/SSL for data in transit, RBAC via integrated managers.

- Not publicly stated.

Integrations & Ecosystem

Flink has perhaps the widest connector library in the streaming world.

- Apache Kafka / Confluent

- Amazon S3 / Google Cloud Storage

- Elasticsearch / MongoDB

- Apache Pulsar

Support & Community

Massive open-source community and professional enterprise support available through vendors like Ververica and Cloudera.

#2 — Apache Spark (Structured Streaming)

Short description: An extension of the Apache Spark ecosystem that enables scalable, fault-tolerant, and high-throughput stream processing based on a micro-batch architecture.

Key Features

- Micro-Batch Engine: Processes data in small, high-speed batches to provide high throughput.

- Unified API: Use the same DataFrame and Dataset APIs for both batch and streaming code.

- Event-Time Processing: Robust support for handling data that arrives out of order using watermarks.

- Continuous Processing Mode: An experimental mode designed to achieve end-to-end latencies of around one millisecond, with at-least-once rather than exactly-once guarantees.

- Checkpointing: Automatically saves the state of a stream to HDFS or S3 for fault recovery.

- Deep ML Integration: Seamlessly use Spark MLlib to apply machine learning models to live streams.
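As a rough illustration of the micro-batch idea, the sketch below groups an incoming iterator into small lists and runs one aggregate per batch. It is plain Python rather than Spark code, and it batches by record count for determinism, whereas a real micro-batch engine typically triggers on a time interval. The function names are hypothetical.

```python
from itertools import islice


def micro_batches(stream, batch_size):
    """Yield successive lists of up to `batch_size` records from `stream`."""
    iterator = iter(stream)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return  # the source is exhausted
        yield batch


def process_stream(stream, batch_size):
    """Run one aggregate (a sum) per micro-batch and return the results."""
    return [sum(batch) for batch in micro_batches(stream, batch_size)]
```

The trade-off is visible even here: a record waits until its batch is full before being processed, which raises per-record latency but lets each batch be handled with efficient bulk operations.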

Pros

- Leverages the existing Spark ecosystem and skill sets found in most data teams.

- Exceptional throughput for large-scale data ingestion and transformation.

Cons

- Default micro-batching leads to slightly higher latency compared to Flink’s “one-at-a-time” model.

- State management can be more restrictive than dedicated stream-first engines.

Platforms / Deployment

- Windows / macOS / Linux

- Kubernetes / YARN / Mesos (deprecated in recent Spark releases) / Databricks

Security & Compliance

- Integrated with Hadoop security (Kerberos), SSL/TLS, and RBAC via Databricks.

- SOC 2, ISO 27001 (via cloud providers).

Integrations & Ecosystem

Integrates perfectly with the “Lakehouse” architecture.

- Delta Lake

- Apache Kafka

- HDFS / S3

- Tableau / Power BI

Support & Community

Huge community support and enterprise-grade backing from Databricks and major cloud vendors.

#3 — Apache Kafka Streams

Short description: A lightweight client library for building applications and microservices, where the input and output data are stored in Kafka clusters.

Key Features

- Library-First Design: It is a library, not a cluster; you run it as part of your standard application code.

- Exactly-Once Processing: Integrated tightly with Kafka’s transactional API for high consistency.

- Interactive Queries: Allows your application to query the state of the stream directly without an external database.

- KTable and KStream: Distinct abstractions for handling “changelogs” versus “event streams.”

- No Cluster Required: Simplifies deployment by removing the need for a separate processing cluster (like Flink or Spark).

- Elasticity: Scales naturally by adding or removing instances of your application.
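The distinction between an event stream and a changelog-backed table can be shown in a few lines. This is a conceptual sketch in plain Python, not the Kafka Streams API (which is a Java library). The function name and the `(key, value)` record shape are assumptions for illustration. As with Kafka's tombstone convention, a value of `None` is treated as a delete.

```python
def stream_to_table(event_stream):
    """Fold an event stream of (key, value) records into a table.

    Returns (table, changelog): `table` holds the latest value per key,
    and `changelog` lists every (key, old_value, new_value) update in order.
    A value of None is treated as a delete (a "tombstone").
    """
    table = {}
    changelog = []
    for key, value in event_stream:
        old = table.get(key)
        if value is None:
            table.pop(key, None)  # tombstone: remove the key
        else:
            table[key] = value    # upsert: the newest value wins
        changelog.append((key, old, value))
    return table, changelog
```

The stream view keeps every record, while the table view answers "what is the current value for this key?" Replaying the changelog from the start rebuilds the same table.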

Pros

- The simplest deployment model for organizations already using Apache Kafka.

- No additional infrastructure to manage; fits into existing CI/CD and DevOps pipelines.

Cons

- Strictly tied to Kafka; you cannot easily use other message brokers.

- Not intended for heavy-duty batch processing or non-Kafka data sources.

Platforms / Deployment

- Windows / macOS / Linux / Docker

- Self-hosted / Kubernetes / Cloud

Security & Compliance

- Inherits Kafka’s security: SASL/Kerberos, TLS, ACLs.

- Not publicly stated.

Integrations & Ecosystem

Highly focused on the Kafka and Confluent ecosystem.

- Apache Kafka / Confluent Cloud

- ksqlDB

- Connectors for S3, JDBC, etc.

- Schema Registry

Support & Community

Excellent documentation and strong community support, particularly from the Confluent team.

#4 — Apache Beam

Short description: A unified programming model that allows developers to write a single pipeline that can run on various processing “runners” like Flink, Spark, or Google Cloud Dataflow.

Key Features

- Runner Agnostic: Write code once and choose your execution engine (Flink, Spark, Dataflow, etc.) later.

- Unified Model: Handles both batch and streaming data using the same set of abstractions (PCollections).

- Rich SDKs: Official SDKs for Java, Python, and Go, with Scala support available through the third-party Scio library.

- Advanced Windowing: Industry-leading implementation of the Dataflow Model for handling time and completeness.

- Side Inputs: Allows for joining a main data stream with a static or slowly changing dataset.

- Beam SQL: Allows for expressing Beam pipelines using SQL syntax.

Pros

- Prevents “vendor lock-in” by allowing you to switch processing engines without rewriting code.

- The most sophisticated model for handling complex, time-based data logic.

Cons

- Debugging can be difficult as you are insulated from the underlying runner’s behavior.

- Not all features of a runner are always available through the Beam abstraction layer.

Platforms / Deployment

- Windows / macOS / Linux

- Google Cloud Dataflow / Flink / Spark / Samza

Security & Compliance

- Dependent on the underlying runner and environment.

- Varies / N/A.

Integrations & Ecosystem

Has a massive library of I/O connectors for virtually every data source.

- BigQuery / Bigtable

- Apache Kafka

- Pub/Sub

- JDBC / MongoDB

Support & Community

Backed by Google and the Apache Foundation, with a large and active developer community.

#5 — Hazelcast

Short description: An in-memory computing platform that provides a unified engine for stream processing and low-latency data storage.

Key Features

- In-Memory Store: Combines stream processing with an in-memory data grid (IMDG) for extreme speed.

- Fast Aggregations: Performs stateful operations directly in RAM, reducing latency to microseconds.

- Distributed SQL: Allows for querying both live streams and stored data using SQL.

- Jet Engine: A high-performance DAG (Directed Acyclic Graph) engine for processing data.

- Lightweight Footprint: Can be embedded directly into a Java application or run as a separate cluster.

- Cloud-Native: Optimized for Kubernetes with native operators and auto-scaling.

Pros

- Incredible speed due to the elimination of disk I/O during the processing phase.

- Simplified architecture by combining the database and the processing engine into one.

Cons

- High infrastructure costs due to heavy reliance on RAM.

- The processing engine (Jet) is newer and has a smaller community than Flink or Spark.

Platforms / Deployment

- Windows / macOS / Linux

- Kubernetes / Docker / Cloud / On-prem

Security & Compliance

- TLS/SSL, JAAS, Encryption at rest (in-memory), RBAC.

- SOC 2, ISO 27001 (Hazelcast Cloud).

Integrations & Ecosystem

Growing library of connectors for modern data stacks.

- Apache Kafka

- Hadoop / HDFS

- Redis

- Oracle / Postgres / MySQL

Support & Community

Strong professional support from Hazelcast Inc. and a dedicated community of Java developers.

#6 — Apache Samza

Short description: A distributed stream processing framework developed at LinkedIn, designed to handle massive scale with high reliability and performance.

Key Features

- Stateful Processing: Uses a local key-value store (RocksDB) to manage massive state without remote calls.

- YARN Integration: Originally built to run as a first-class citizen on Hadoop YARN.

- Samza Fluent API: A modern API for defining complex, multi-stage data pipelines.

- High Fault Tolerance: Recovers quickly from failures by utilizing Kafka as a changelog for its state.

- Multi-Tenancy: Excellent resource isolation for running multiple independent jobs on one cluster.

- Samza SQL: Enables SQL-based streaming applications.
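The combination of local state and a changelog described above can be sketched as follows. This is a toy model in plain Python, not Samza's API: a dictionary stands in for the local RocksDB store, and a list stands in for a durable, replayable changelog topic. The class and method names are hypothetical.

```python
class ChangelogBackedStore:
    """A local key-value store that logs every write to a changelog.

    The list stands in for a durable, replayable log such as a Kafka topic.
    """

    def __init__(self, changelog=None):
        self.changelog = changelog if changelog is not None else []
        self.state = {}

    def put(self, key, value):
        self.changelog.append((key, value))  # log the write, then apply it
        self.state[key] = value

    def get(self, key, default=None):
        return self.state.get(key, default)  # local read, no remote call

    @classmethod
    def restore(cls, changelog):
        """Rebuild local state on a fresh node by replaying the changelog."""
        store = cls(changelog)
        for key, value in changelog:
            store.state[key] = value
        return store
```

Reads stay local and fast, while recovery after a node failure amounts to replaying the log. In practice, recovery time grows with the size of the changelog, which is why real systems compact it.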

Pros

- Proven at the extreme scale of LinkedIn (trillions of events per day).

- Exceptional at handling very large “state” (e.g., hundreds of GBs per node).

Cons

- Historically tied to the Hadoop ecosystem, though it now supports standalone deployment.

- Smaller community and slower innovation cycle compared to Flink.

Platforms / Deployment

- Linux

- YARN / Kubernetes / Standalone

Security & Compliance

- Kerberos, SSL/TLS, ACLs via Kafka.

- Not publicly stated.

Integrations & Ecosystem

Optimized for Kafka-centric pipelines.

- Apache Kafka

- Hadoop HDFS

- Elasticsearch

- Azure Event Hubs

Support & Community

Active development at LinkedIn and the Apache Foundation, but fewer third-party learning resources.

#7 — Apache Storm

Short description: The pioneer of open-source stream processing. Storm is designed for real-time processing of unbounded streams of data with a “one-at-a-time” model.

Key Features

- Trident API: A high-level abstraction that adds micro-batching and state management to core Storm.

- Low Latency: Optimized for sub-millisecond processing of individual tuples.

- Language Agnostic: Use any programming language to define “Bolts” and “Spouts.”

- Guaranteed Data Processing: Ensures that every message is processed at least once.

- Simple Topologies: Uses a straightforward DAG model (Spouts for sources, Bolts for processing).

- Scalable: Horizontal scaling by adding more worker processes.
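To see what an at-least-once guarantee means in practice, the sketch below simulates redelivery after a lost acknowledgement and compares a naive consumer with an idempotent one. It is plain Python and does not use Storm's spout, bolt, or acker APIs. The function names and the `(msg_id, payload)` message shape are assumptions for illustration.

```python
def deliver_at_least_once(messages, ack_lost_for):
    """Simulate at-least-once delivery.

    Each message is (msg_id, payload). If a message's id is in
    `ack_lost_for`, its acknowledgement is lost after processing, so the
    source replays it once. Returns every delivery the consumer sees.
    """
    deliveries = []
    for msg_id, payload in messages:
        deliveries.append((msg_id, payload))
        if msg_id in ack_lost_for:
            deliveries.append((msg_id, payload))  # replay after the lost ack
    return deliveries


def sum_naive(deliveries):
    """Add up every delivery, counting replays twice."""
    return sum(payload for _, payload in deliveries)


def sum_idempotent(deliveries):
    """Add up each message id once, skipping replays."""
    seen = set()
    total = 0
    for msg_id, payload in deliveries:
        if msg_id in seen:
            continue
        seen.add(msg_id)
        total += payload
    return total
```

No message is lost in either case, but only the deduplicating consumer produces the correct total. Frameworks that advertise exactly-once results have to solve this problem inside the engine, usually by coordinating state snapshots with source offsets.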

Pros

- Simple conceptual model that is easy for developers to understand.

- Mature and battle-tested in older production environments.

Cons

- Lacks the advanced state management and exactly-once features of Flink.

- Development activity has significantly slowed as teams move to Spark or Flink.

Platforms / Deployment

- Linux

- Standalone / Mesos / Kubernetes

Security & Compliance

- Kerberos and ACLs for cluster management.

- Not publicly stated.

Integrations & Ecosystem

Connects to classic big data sources.

- Apache Kafka

- HBase

- HDFS

- Redis

Support & Community

Large amount of legacy documentation and forum posts, but a shrinking active community.

#8 — Materialize

Short description: A streaming database built on Timely Dataflow that allows users to write standard SQL and get real-time, incrementally updated results.

Key Features

- Standard SQL: No proprietary DSL; if you know SQL, you can use Materialize.

- Incremental Updates: Only processes the changes in data, making it extremely efficient.

- Timely Dataflow: Built on a sophisticated research-backed engine for high-performance streaming.

- Postgres Compatibility: Applications can connect to Materialize as if it were a standard Postgres database.

- Zero-ETL: Connect directly to Kafka or Postgres and get live views instantly.

- Horizontal Scalability: Cloud-native architecture that scales compute and storage independently.
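The idea of incremental updates can be illustrated with a tiny view that maintains a grouped sum and count from a stream of inserts and retractions, instead of rescanning all rows on every query. This is a conceptual sketch in plain Python and does not reflect Materialize's internals. The class name and the `+1`/`-1` diff convention are assumptions for illustration.

```python
from collections import defaultdict


class IncrementalSumView:
    """Maintains the equivalent of
    SELECT key, SUM(amount), COUNT(*) FROM t GROUP BY key
    by applying one change at a time."""

    def __init__(self):
        self.sums = defaultdict(int)
        self.counts = defaultdict(int)

    def apply(self, key, amount, diff):
        """Apply one change: diff=+1 inserts a row, diff=-1 retracts it."""
        self.sums[key] += diff * amount
        self.counts[key] += diff
        if self.counts[key] == 0:  # the group is now empty: drop it
            del self.sums[key]
            del self.counts[key]

    def snapshot(self):
        """Return the current view as {key: (sum, count)}."""
        return {k: (self.sums[k], self.counts[k]) for k in self.counts}
```

Each change costs a constant amount of work regardless of how many rows the underlying table holds, which is the efficiency the feature list refers to.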

Pros

- The easiest way for a traditional SQL developer to build a streaming application.

- Eliminates the need for a separate “processing” layer and “database” layer.

Cons

- Newer tool with a smaller feature set for complex non-SQL procedural logic.

- Cloud-first focus; self-hosting can be complex for enterprise production.

Platforms / Deployment

- Linux / macOS

- Cloud / Hybrid

Security & Compliance

- TLS/SSL, RBAC, SSO/SAML integration.

- SOC 2 Type II.

Integrations & Ecosystem

Focused on the “Modern Data Stack.”

- Apache Kafka / Confluent / Redpanda

- PostgreSQL (via CDC)

- dbt (data build tool)

- S3

Support & Community

Excellent technical documentation and a highly responsive Discord community.

#9 — Faust

Short description: A Python-based stream processing library, originally developed by Robinhood, that brings Kafka Streams concepts to the Python ecosystem.

Key Features

- Pure Python: No need for a JVM; write your streaming logic in idiomatic Python using asyncio.

- Highly Scalable: Can scale to millions of events per second across multiple worker nodes.

- Stateful: Supports local state stores (RocksDB) for building stateful applications.

- Kafka-Native: Designed specifically to work with Kafka as the source and sink.

- Easy Integration: Fits perfectly into Python-based machine learning and data science stacks.

- Automatic Partitioning: Handles Kafka partitions and rebalancing out of the box.

Pros

- The best choice for Python developers and data scientists who want to avoid Java/Scala.

- Low overhead and very fast development time for simple to medium complexity jobs.

Cons

- Performance is lower than JVM-based engines for extremely heavy throughput.

- Community maintenance has fluctuated; check for the latest “community-fork” versions.

Platforms / Deployment

- Windows / macOS / Linux

- Kubernetes / Docker / Standalone

Security & Compliance

- SASL/SSL support for Kafka connections.

- Not publicly stated.

Integrations & Ecosystem

Integrates well with the Python and web world.

- Apache Kafka

- Redis

- Celery

- Django / Flask

Support & Community

Community-driven support via GitHub and Slack; popular among Python data engineers.

#10 — Akka Streams

Short description: A library for building highly concurrent, distributed, and resilient message-driven applications on the JVM using the Reactive Streams standard.

Key Features

- Reactive Streams: Built-in backpressure handling to ensure producers don’t overwhelm consumers.

- Type Safe: Leverage the power of the Scala type system (or Java) for robust code.

- Graph DSL: Allows for building complex, multi-input, multi-output processing graphs.

- Akka Actor System: Benefits from the proven resilience and supervision models of Akka.

- Low Footprint: Highly efficient resource usage, making it ideal for microservices.

- Alpakka: A massive library of connectors for everything from AWS S3 to Google Pub/Sub.
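Backpressure can be approximated in a few lines with a bounded queue: when the buffer is full, the producer is suspended until the consumer catches up. The sketch below uses Python's standard `asyncio.Queue` as an analogue. It is not the Akka Streams API or the Reactive Streams demand protocol, and the function name and sentinel convention are assumptions for illustration.

```python
import asyncio


async def run_pipeline(n_items, buffer_size):
    """Run a fast producer against a slower consumer through a bounded queue.

    Returns (consumed_items, max_queue_depth). The producer's `await put()`
    suspends whenever the queue is full, which acts as the backpressure signal.
    """
    queue = asyncio.Queue(maxsize=buffer_size)
    consumed = []
    max_depth = 0

    async def producer():
        nonlocal max_depth
        for i in range(n_items):
            await queue.put(i)  # suspends while the buffer is full
            max_depth = max(max_depth, queue.qsize())
        await queue.put(None)   # sentinel: no more items

    async def consumer():
        while True:
            item = await queue.get()
            if item is None:
                return
            await asyncio.sleep(0)  # yield control to simulate slower work
            consumed.append(item)

    await asyncio.gather(producer(), consumer())
    return consumed, max_depth
```

Calling `asyncio.run(run_pipeline(20, 4))` delivers all 20 items in order while the queue never holds more than 4, so memory use stays bounded no matter how fast the producer is.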

Pros

- Exceptional for building complex “reactive” microservices rather than just “data pipelines.”

- Native backpressure is the best in the industry.

Cons

- Requires a deep understanding of the Actor model and reactive programming.

- Licensing for Akka (the Business Source License, BSL) may be a barrier for some commercial organizations.

Platforms / Deployment

- Windows / macOS / Linux

- Kubernetes / Docker / Standalone

Security & Compliance

- Standard JVM security, TLS/SSL for remoting.

- Not publicly stated.

Integrations & Ecosystem

Massive connector ecosystem via the Alpakka project.

- Apache Kafka

- AWS (S3, Kinesis, DynamoDB)

- Google Cloud (Pub/Sub, Storage)

- MQTT / AMQP

Support & Community

Professional support through Lightbend (the company behind Akka, since renamed Akka) and a dedicated community of functional programmers.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Apache Flink | Complex Stateful Apps | Windows, macOS, Linux | Hybrid | Savepoints & Exactly-Once | 4.8/5 |
| Apache Spark | High Throughput / Batch | Windows, macOS, Linux | Hybrid | Unified Batch/Stream API | 4.7/5 |
| Kafka Streams | Kafka Microservices | Windows, macOS, Linux | Self-hosted | Library (No Cluster) | 4.6/5 |
| Apache Beam | Pipeline Portability | Windows, macOS, Linux | Hybrid | Runner Independence | 4.5/5 |
| Hazelcast | Ultra-Low Latency | Windows, macOS, Linux | Hybrid | In-Memory Data Grid | 4.4/5 |
| Apache Samza | Large-Scale State | Linux | Hybrid | Local State Management | 4.2/5 |
| Apache Storm | Simple Real-time | Linux | Hybrid | Low Latency per Tuple | 4.0/5 |
| Materialize | Streaming SQL | Linux, macOS | Cloud | Incremental SQL Views | 4.7/5 |
| Faust | Python Pipelines | Windows, macOS, Linux | Self-hosted | Pythonic Asyncio | 4.1/5 |
| Akka Streams | Reactive Microservices | Windows, macOS, Linux | Self-hosted | Native Backpressure | 4.3/5 |

Evaluation & Scoring of Stream Processing Frameworks

We have scored each framework based on its technical maturity and business utility in 2026.

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Apache Flink | 10 | 4 | 10 | 8 | 10 | 9 | 8 | 8.60 |
| Apache Spark | 9 | 7 | 10 | 9 | 9 | 9 | 8 | 8.70 |
| Kafka Streams | 8 | 9 | 8 | 8 | 9 | 9 | 9 | 8.55 |
| Apache Beam | 9 | 5 | 10 | 7 | 8 | 8 | 8 | 7.90 |
| Hazelcast | 8 | 7 | 7 | 9 | 10 | 8 | 6 | 7.60 |
| Apache Samza | 8 | 5 | 8 | 7 | 9 | 7 | 7 | 7.25 |
| Apache Storm | 6 | 6 | 7 | 7 | 9 | 6 | 7 | 6.75 |
| Materialize | 8 | 10 | 8 | 8 | 9 | 8 | 7 | 8.35 |
| Faust | 7 | 8 | 7 | 6 | 7 | 6 | 9 | 7.15 |
| Akka Streams | 9 | 5 | 9 | 8 | 9 | 8 | 7 | 7.85 |

How to Interpret These Scores:

- Weighted Total: A score over 8.5 signifies a “Tier 1” framework capable of handling the most demanding enterprise workloads.

- Performance vs. Ease: Notice that Flink and Akka score very high on performance/core features but lower on ease of use, reflecting the “technical tax” paid for higher power.

- Value: Reflects the total cost of ownership, including infrastructure and the availability of talent (Kafka Streams and Faust score highest here).
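For readers who want to apply their own weights, the weighted total can be sketched as a plain weighted sum of the column scores. The weights below are copied from the table header, and the dictionary keys and function name are hypothetical. Totals recomputed this way may differ slightly from some published rows, which could reflect rounding or adjustments not described here. The example uses the Apache Spark row.

```python
# Column weights from the scoring table header (they must sum to 1.0).
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores, weights=WEIGHTS):
    """Return the weighted sum of 0-10 scores, rounded to two decimals."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return round(sum(scores[name] * w for name, w in weights.items()), 2)


# Scores taken from the Apache Spark row of the table.
spark = {
    "core": 9, "ease": 7, "integrations": 10, "security": 9,
    "performance": 9, "support": 9, "value": 8,
}
spark_total = weighted_total(spark)
```

Changing the weights to match your own priorities, for example raising "ease" for a small team, is a quick way to see how the ranking would shift for your situation.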

Which Stream Processing Framework Is Right for You?

Solo / Freelancer

For a single developer, Kafka Streams or Materialize are the best choices. They offer the shortest path from “idea to production” without requiring you to become a distributed systems administrator.

SMB

Small businesses with Python-heavy teams should look at Faust, while those with a data engineering focus will find Apache Spark (Structured Streaming) easiest to adopt due to its familiar SQL and DataFrame APIs.

Mid-Market

Companies that need to balance extreme performance with developer productivity should choose Apache Flink. It is the most future-proof engine and is increasingly available as a managed service on all major cloud platforms.

Enterprise

Enterprises building massive, mission-critical reactive systems should evaluate Akka Streams for microservices or Apache Beam to maintain flexibility across multiple cloud vendors and processing engines.

Budget vs Premium

- Budget: Apache Kafka Streams (library-only) is the budget winner, as it has no extra cluster cost.

- Premium: Databricks (Spark) or Ververica (Flink) managed services.

Feature Depth vs Ease of Use

- Feature Depth: Apache Flink, Apache Beam.

- Easy to Use: Materialize, Apache Spark.

Integrations & Scalability

- Top Integrations: Apache Spark, Akka Streams.

- Top Scalability: Apache Flink, Apache Samza.

Security & Compliance Needs

Organizations with high security requirements should prioritize managed versions of Spark and Flink provided by cloud vendors (AWS, Google, Azure), as they come with pre-configured compliance certifications.

Frequently Asked Questions (FAQs)

- What is the main difference between stream processing and batch processing?

Batch processing handles data in large, static groups at specific times, while stream processing handles data continuously as it is generated, resulting in much lower latency.

- What are “Exactly-Once” semantics?

Exactly-once is a guarantee that each data point affects the results and the stored state exactly one time, even if a failure forces the system to recover and replay data, so results are accurate and not duplicated.

- Do I need a message broker like Kafka to use these frameworks?

In almost all cases, yes. Stream processors need a “buffer” to store incoming data before it is processed, and Kafka or Pulsar are the most common choices.

- Which framework has the lowest latency?

For “true” streaming, Apache Flink and Hazelcast typically offer the lowest latency. Spark Structured Streaming is slightly higher due to its micro-batch architecture.

- Can I use SQL for stream processing?

Yes, Flink SQL, Spark SQL, and Materialize all allow you to write standard SQL queries that run continuously against live data.

- What is a “Window” in stream processing?

A window is a way to group data by time (e.g., “last 5 minutes”) so you can perform aggregations like averages or sums on a moving stream of data.

- Is stream processing more expensive than batch?

Generally, yes. Stream processing requires infrastructure to be running 24/7, whereas batch jobs can be spun up and down as needed.

- How do these frameworks handle out-of-order data?

Advanced frameworks use “Watermarks,” special timestamps that tell the system how long to wait for late-arriving data before finalizing a result.

- Can I run these frameworks on Kubernetes?

Yes, most modern frameworks (Flink, Spark, Hazelcast) have native Kubernetes operators that make deployment and scaling much easier.

- Do I need to know Java to build streaming apps?

While Java/Scala were historically required, you can now use Python (Faust, PySpark, PyFlink) or SQL (Materialize, Flink SQL) to build high-quality streaming pipelines.

Conclusion

The evolution of stream processing frameworks has reached a point where real-time capabilities are accessible to teams of all sizes. While Apache Flink continues to be the gold standard for technical excellence and state management, the rise of Materialize and Spark Structured Streaming has made it easier than ever for SQL-savvy teams to enter the arena.

Your choice of framework should be dictated by your existing infrastructure and the complexity of your data logic. If you are already in the Kafka ecosystem, Kafka Streams is the logical first step. If you need to process trillions of events with complex state, Flink is your destination. Regardless of the choice, the move toward real-time processing is the single most important step in building a modern, responsive data architecture.
