Introduction

Batch processing is the execution of a series of jobs in a computer program without manual intervention. In this model, data is collected, entered, processed, and then the results are produced in bulk. Unlike real-time processing, which handles data as it arrives, batch processing is designed to handle high-volume, repetitive tasks where “latency” is traded for “throughput.” It is the digital equivalent of a factory assembly line: you gather all the raw materials first and then run the machines at full capacity to produce the final product.

In the modern data ecosystem, batch processing remains the backbone of enterprise operations. While the “real-time” buzz often dominates conversations, batch processing is where the heavy lifting happens for deep historical analysis, large-scale machine learning model training, and complex financial reconciliations. It is significantly more cost-effective for processing petabytes of data because it allows for optimized resource allocation and the use of “spot” or “preemptible” cloud instances that are much cheaper than the high-availability resources required for streaming.

Real-world use cases:

- Financial Services: Processing millions of end-of-day transactions, payroll execution, and generating monthly bank statements.

- Data Warehousing & ETL: Moving massive datasets from operational databases into a central “Single Source of Truth” for business intelligence.

- Machine Learning: Training large-scale language models or recommendation engines on years of historical user data.

- Scientific Research: Processing genomic sequences or satellite imagery where the sheer volume of data makes instantaneous processing impossible.

- Supply Chain: Running inventory optimization algorithms across thousands of retail locations every night.

Evaluation criteria for buyers:

- Scalability: The ability to handle a sudden jump from gigabytes to petabytes without rewriting the code.

- Resource Management: How efficiently the framework utilizes CPU, memory, and storage during peak loads.

- Fault Tolerance: The ability to restart a failed job from the last checkpoint rather than starting from zero.

- Language Support: Availability of APIs for Java, Python, Scala, and SQL to suit the existing engineering team.

- Cost Efficiency: Support for serverless or on-demand scaling to minimize idle infrastructure costs.

- Scheduling & Orchestration: Built-in or external tools for managing complex job dependencies.

- Data Connector Ecosystem: Ease of connecting to modern data lakes, warehouses, and NoSQL stores.

- Security & Governance: Robustness of identity management (SSO/IAM) and data encryption.

- Ease of Deployment: Support for containerization (Docker/Kubernetes) or fully managed cloud environments.

- Community & Documentation: Availability of third-party libraries and expert talent in the job market.
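The fault-tolerance criterion is easier to judge once you see what checkpointing buys you. Below is a minimal, framework-agnostic sketch in Python, assuming the job processes records in a fixed order and that a small JSON file on durable storage is an acceptable checkpoint. `process_batch` and its `fail_at` crash hook are hypothetical names used only for illustration, not any framework's API.

```python
import json
import os
import tempfile


def process_batch(records, checkpoint_path, handle, fail_at=None):
    """Process records in order, resuming after the last checkpointed record.

    `fail_at` is a hypothetical hook that simulates a crash at a given index.
    Returns the number of records handled during this run.
    """
    start = 0
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            start = json.load(f)["next_index"]

    for i in range(start, len(records)):
        if fail_at is not None and i == fail_at:
            raise RuntimeError(f"simulated failure at record {i}")
        handle(records[i])
        # Persist progress atomically: write a temp file, then rename over the old one.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump({"next_index": i + 1}, f)
        os.replace(tmp_path, checkpoint_path)

    return len(records) - start


if __name__ == "__main__":
    handled = []
    with tempfile.TemporaryDirectory() as workdir:
        ckpt = os.path.join(workdir, "job.ckpt")
        data = list(range(10))
        try:
            process_batch(data, ckpt, handled.append, fail_at=6)  # crashes at record 6
        except RuntimeError as err:
            print("first run:", err)
        resumed = process_batch(data, ckpt, handled.append)  # restarts at record 6
        print("second run handled", resumed, "records")
```

Because the checkpoint is written after each record is handled, a crash between those two steps means that one record is processed again on restart. That is at-least-once behavior; production frameworks pair checkpoints with idempotent or transactional outputs to get closer to exactly-once.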

Best for: Large enterprises, data engineers, and researchers who need to process massive datasets reliably and cost-effectively where results are needed in minutes or hours rather than milliseconds.

Not ideal for: Systems requiring immediate feedback (e.g., credit card fraud triggers or live chat), very small datasets where simple scripts suffice, or low-latency interactive applications.

Key Trends in Batch Processing Frameworks

- Serverless Batch Dominance: Organizations are moving away from managing fixed clusters in favor of serverless engines that spin up only for the duration of the job, significantly reducing FinOps overhead.

- AI-Driven Optimization: Frameworks are beginning to use machine learning to predict resource needs, automatically adjusting memory and CPU allocation to prevent job failures and optimize costs.

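- Unified Batch and Stream: The line between “batch” and “stream” is blurring, with modern frameworks using the same codebase to handle both paradigms. This is closely related to the “Kappa Architecture,” which replaces separate batch and speed layers with a single stream-processing pipeline.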

- Carbon-Aware Processing: New scheduling trends focus on running heavy batch jobs when renewable energy availability is high or grid carbon intensity is low.

- Zero-ETL Integrations: A push toward processing data in-place without the need to move it between storage and compute layers, reducing data gravity issues.

- Vector-Enabled Batching: Enhancements to process high-dimensional vector data in bulk to support the retrieval-augmented generation (RAG) needs of modern AI.

- Data Contract Enforcement: Batch jobs are increasingly incorporating automated schema validation to ensure that a “garbage-in” scenario doesn’t corrupt the downstream data lake.

- Kubernetes-Native Orchestration: The shift toward using Kubernetes operators to manage the lifecycle of batch jobs across hybrid-cloud environments.
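As a rough illustration of carbon-aware scheduling, the sketch below picks the start hour for a fixed-length batch job that minimizes total forecast grid carbon intensity while still finishing before a deadline. The hourly forecast values and the `best_start_hour` helper are hypothetical; a real scheduler would pull forecasts from a grid-data provider and also account for queueing and cluster capacity.

```python
from collections.abc import Sequence


def best_start_hour(forecast: Sequence[float], duration: int, deadline: int) -> int:
    """Return the start hour whose `duration`-hour window has the lowest total
    forecast carbon intensity while finishing by `deadline` (an exclusive end hour).
    """
    if duration <= 0 or duration > deadline or deadline > len(forecast):
        raise ValueError("duration and deadline must fit inside the forecast")

    window = sum(forecast[:duration])  # total for the window starting at hour 0
    best_start, best_total = 0, window
    # Slide the window one hour at a time, updating the running total in O(1).
    for start in range(1, deadline - duration + 1):
        window += forecast[start + duration - 1] - forecast[start - 1]
        if window < best_total:
            best_start, best_total = start, window
    return best_start


# Hypothetical hourly forecast in gCO2/kWh, starting at midnight.
forecast = [420, 410, 380, 300, 210, 190, 250, 400]
print(best_start_hour(forecast, duration=3, deadline=8))
```

For this hypothetical forecast, a 3-hour job due by hour 8 is best started at hour 4. The sliding window keeps the search linear in the length of the forecast.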

How We Selected These Tools (Methodology)

To determine the top 10 frameworks for this guide, we analyzed the current landscape of high-volume data engineering. Our selection was based on the following methodology:

- Market Share & Reliability: We prioritized tools that are used in production by the world’s largest data-driven companies.

- Technical Resilience: We evaluated how each framework handles job failure, data skew, and resource contention.

- Future-Proofing: We looked for frameworks that are actively integrating AI capabilities and modern security standards.

- Functional Versatility: The tools selected cover a range from core processing engines to orchestration-heavy batch managers.

- Developer Experience: We assessed the quality of the developer workflow, from local testing to production deployment.

- Security Posture: We evaluated the strength of administrative controls and compliance readiness.

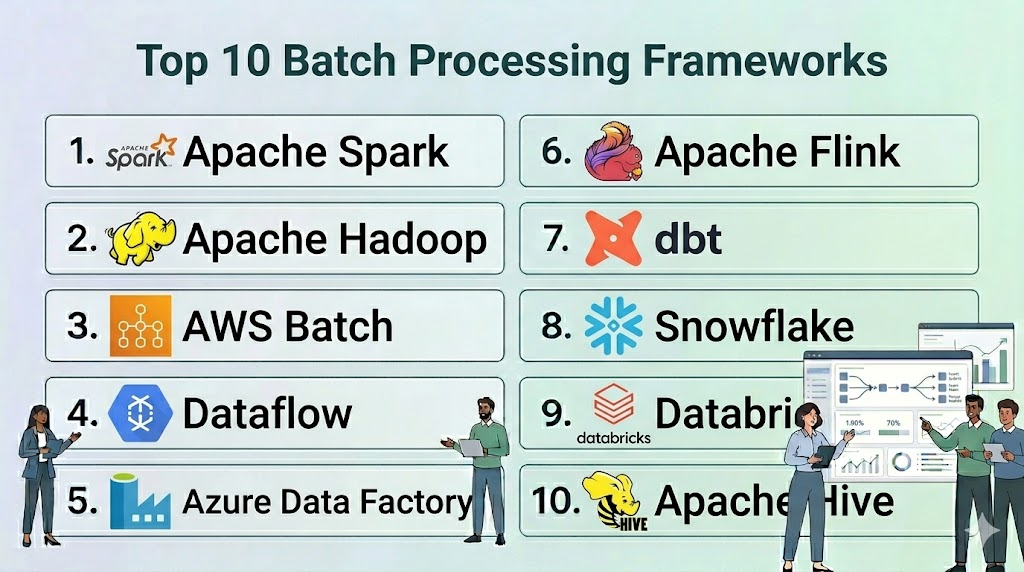

Top 10 Batch Processing Frameworks

#1 — Apache Spark

Short description: The industry standard for distributed data processing. It provides a unified engine for large-scale data processing that is significantly faster than disk-based MapReduce for many workloads.

Key Features

- In-Memory Processing: Utilizes RAM for intermediate calculations, making it up to 100x faster than MapReduce for certain tasks.

- Unified Engine: Supports batch, streaming, SQL analytics, and machine learning (MLlib) in a single framework.

- Advanced DAG Scheduler: Optimizes the execution plan to reduce data shuffling across the network.

- Language Versatility: APIs for Python (PySpark), Scala, Java, and R (SparkR, which is deprecated as of Spark 4.0).

- Spark SQL: Allows users to query structured data inside Spark programs using familiar SQL syntax.

- Adaptive Query Execution: Dynamically re-optimizes query plans based on runtime statistics.

Pros

- Massive community and ecosystem; it is easy to find experienced talent and third-party libraries.

- Extremely versatile; can handle everything from simple ETL to complex graph processing.

Cons

- High memory consumption can lead to expensive infrastructure if not managed carefully.

- Configuration can be complex, especially tuning parameters for large-scale clusters.

Platforms / Deployment

- Windows / macOS / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

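- Kerberos authentication, TLS/SSL, and RBAC (RBAC typically via managed platforms such as Databricks or Amazon EMR).

- Compliance certifications: Not publicly stated (depends on the distribution or managed service).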

Integrations & Ecosystem

Spark is the center of the modern data world, connecting to nearly everything.

- Apache Kafka

- Amazon S3 / Azure Data Lake / Google Cloud Storage

- Snowflake / Redshift

- Hadoop HDFS

Support & Community

Unparalleled community support, extensive documentation, and premium enterprise support through vendors like Databricks.

#2 — Apache Hadoop (MapReduce)

Short description: The pioneer of big data processing. While older than Spark, it remains a robust and reliable choice for massive, disk-heavy batch operations.

Key Features

- MapReduce Model: A programming model for processing large datasets with a parallel, distributed algorithm on a cluster.

- HDFS Integration: Designed to work natively with the Hadoop Distributed File System for massive data storage.

- High Fault Tolerance: Naturally resilient to hardware failure; if a node fails, its tasks are automatically re-executed on other nodes.

- YARN Resource Management: Allows for efficient sharing of cluster resources across multiple applications.

- Disk-Based Processing: Unlike Spark, it is less reliant on RAM, making it suitable for jobs where data far exceeds available memory.

- Scalability: Proven to run on clusters with thousands of nodes.
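The MapReduce model described above boils down to three phases: map each record to key/value pairs, shuffle so that all values for a key end up together, then reduce each group. The single-process Python sketch below walks through the classic word count to show that data flow; it is not the Hadoop Java API, and real Hadoop runs many mappers and reducers in parallel across nodes and writes intermediate output to disk between phases.

```python
from collections import defaultdict
from itertools import chain


def map_phase(line):
    """Mapper: emit a (word, 1) pair for every word in one input record."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle_phase(pairs):
    """Shuffle/sort: group every value by key, as the framework does between phases."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(sorted(groups.items()))


def reduce_phase(key, values):
    """Reducer: combine all values that share one key."""
    return key, sum(values)


def word_count(lines):
    mapped = chain.from_iterable(map_phase(line) for line in lines)
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


print(word_count(["the cat sat", "The dog sat"]))
```

The rigid map/shuffle/reduce structure is also the source of the “boilerplate” noted in the cons below: even a simple aggregation must be expressed as these separate phases.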

Pros

- Rock-solid stability for very long-running, non-interactive batch jobs.

- Excellent for cost-sensitive projects where high-speed RAM is not a priority.

Cons

- Significantly slower than Spark for iterative algorithms due to frequent disk I/O.

- The programming model is more rigid and requires more “boilerplate” code.

Platforms / Deployment

- Linux

- Self-hosted / Cloud / Hybrid

Security & Compliance

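- Kerberos authentication, Apache Ranger for access control, and Apache Atlas for governance.

- Compliance certifications: Varies by distribution; not applicable to the open-source project itself.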

Integrations & Ecosystem

Integrates deeply with the legacy and modern Hadoop stack.

- Apache Hive / Apache Pig

- Apache HBase

- Amazon EMR

- Cloudera (which merged with Hortonworks in 2019)

Support & Community

Mature community with nearly two decades of documentation. Enterprise support is available through Cloudera.

#3 — AWS Batch

Short description: A fully managed service that enables developers and engineers to easily and efficiently run hundreds of thousands of batch computing jobs on AWS.

Key Features

- Fully Managed Infrastructure: Automatically provisions the right amount and type of compute resources (e.g., CPU or memory-optimized).

- Fargate Support: Allows for a completely serverless batch experience; no servers to manage.

- Priority-Based Scheduling: Manage multiple queues with different priority levels for your business-critical jobs.

- Integration with Amazon EC2 Spot Instances: Automatically uses spare capacity for a significant cost reduction (up to 90% compared with On-Demand pricing).

- Container Native: Uses Docker containers to package your code, ensuring consistent execution across environments.

- Workflow Orchestration: Deep integration with AWS Step Functions for complex, multi-step workflows.
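Priority-based scheduling across several queues can be pictured as a single priority queue keyed on (queue priority, submission order). The Python sketch below models only that ordering rule; `PriorityDispatcher` and the queue names are hypothetical, it assumes higher numbers mean higher priority, and the real AWS Batch scheduler also weighs compute-environment capacity and scheduling policies that this toy ignores.

```python
import heapq
from itertools import count


class PriorityDispatcher:
    """Hypothetical dispatcher: jobs from higher-priority queues are released first,
    and jobs within the same queue keep their submission (FIFO) order."""

    def __init__(self, queue_priorities):
        self.queue_priorities = dict(queue_priorities)  # queue name -> priority number
        self._heap = []
        self._sequence = count()  # tie-breaker that preserves submission order

    def submit(self, queue, job):
        priority = self.queue_priorities[queue]
        # heapq is a min-heap, so negate the priority to pop the highest value first.
        heapq.heappush(self._heap, (-priority, next(self._sequence), job))

    def next_job(self):
        """Return the next job to run, or None when nothing is waiting."""
        if not self._heap:
            return None
        _, _, job = heapq.heappop(self._heap)
        return job


dispatcher = PriorityDispatcher({"critical": 10, "reports": 1})
dispatcher.submit("reports", "monthly-statements")
dispatcher.submit("critical", "payroll")
dispatcher.submit("critical", "reconciliation")
print([dispatcher.next_job() for _ in range(3)])
```

Here the two business-critical jobs run before the report even though the report was submitted first.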

Pros

- Eliminates the operational overhead of managing a cluster.

- Incredible cost optimization through native Spot Instance integration.

Cons

- Strongly locked into the AWS ecosystem; not portable to other clouds.

- Requires a solid understanding of AWS IAM and networking for setup.

Platforms / Deployment

- AWS

- Cloud

Security & Compliance

- IAM roles, VPC isolation, KMS encryption, CloudTrail auditing.

- SOC 1/2/3, ISO 27001, HIPAA, PCI DSS.

Integrations & Ecosystem

Designed to be the glue for AWS data pipelines.

- Amazon S3

- AWS Lambda / AWS Step Functions

- Amazon EMR

- Amazon Redshift

Support & Community

Enterprise-grade support from AWS and a vast library of technical guides.

#4 — Google Cloud Dataflow

Short description: A serverless, fully managed service for unified batch and stream data processing based on the Apache Beam model.

Key Features

- Unified Programming Model: Use the same Apache Beam code for both batch and streaming jobs.

- Horizontal Autoscaling: Automatically adds or removes worker nodes based on the current workload.

- Dynamic Work Rebalancing: Moves work from “lagging” workers to those that have finished their tasks.

- Dataflow Prime: An optional serverless tier that adds features such as vertical autoscaling and right fitting to optimize resource allocation, plus diagnostic tools that simplify troubleshooting.

- Flex Templates: Allows for packaging Dataflow pipelines as reusable components.

- Exactly-Once Processing: Guarantees that data is processed correctly even in the event of failures.
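Dataflow performs its splitting automatically inside the managed service. The sketch below only illustrates the general idea behind dynamic work rebalancing, assuming each worker owns a contiguous range of record offsets and that handing an idle worker the back half of the largest remaining range is a reasonable policy. `WorkRange` and `rebalance` are hypothetical names, not Dataflow or Apache Beam APIs.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class WorkRange:
    """Half-open range of record offsets [position, end) still owned by one worker."""
    worker: str
    position: int
    end: int

    @property
    def remaining(self) -> int:
        return self.end - self.position


def rebalance(idle_worker: str, ranges: list[WorkRange]) -> WorkRange | None:
    """Hand an idle worker the back half of the largest unprocessed range.

    Returns the idle worker's new range, or None if nothing is worth splitting.
    """
    straggler = max(ranges, key=lambda r: r.remaining, default=None)
    if straggler is None or straggler.remaining < 2:
        return None
    split = straggler.position + straggler.remaining // 2
    # The straggler keeps [position, split); the idle worker takes [split, end).
    stolen = WorkRange(idle_worker, split, straggler.end)
    straggler.end = split
    return stolen


ranges = [WorkRange("w1", 990, 1000), WorkRange("w2", 100, 1000)]
print(rebalance("w3", ranges))
```

Splitting the unprocessed remainder, rather than the original assignment, is what lets a lagging worker shed load mid-job without redoing any records it has already finished.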

Pros

- Truly serverless; no need to think about machine types or cluster sizes.

- Exceptional at handling “late” data and complex windowing logic.

Cons

- The Apache Beam learning curve can be steep for those used to simple SQL.

- Can be more expensive than manual Spark tuning if not monitored.

Platforms / Deployment

- Google Cloud

- Cloud

Security & Compliance

- VPC Service Controls, CMEK (Customer Managed Encryption Keys), IAM.

- SOC 1/2/3, ISO 27001, HIPAA.

Integrations & Ecosystem

Optimized for the Google Cloud data stack.

- BigQuery

- Google Cloud Storage

- Pub/Sub

- Vertex AI

Support & Community

Premium support from Google and a growing community around the Apache Beam project.

#5 — Azure Data Factory

Short description: A cloud-based data integration service that allows you to create data-driven workflows for orchestrating and automating data movement and data transformation.

Key Features

- Visual Authoring: A drag-and-drop interface for building complex ETL and batch pipelines without code.

- Mapping Data Flows: Visually design data transformation logic that scales out on Spark without writing code.

- Integration Runtime: Allows for secure connection to on-premises data sources behind firewalls.

- CI/CD Integration: Native support for Git and Azure DevOps for version control and deployment.

- Hybrid Cloud Support: Easily move data between on-premises, Azure, and other clouds.

- Pipeline Monitoring: Comprehensive dashboards for tracking job success, failure, and performance.

Pros

- Excellent for organizations that prefer a “low-code” approach to data engineering.

- Seamless integration for companies already using the Microsoft 365 or Azure ecosystem.

Cons

- The visual interface can become cluttered for extremely complex logic.

- Debugging custom code inside the visual pipeline can be challenging.

Platforms / Deployment

- Azure

- Cloud / Hybrid

Security & Compliance

- Microsoft Entra ID (formerly Azure Active Directory) for SSO, Managed Identities, VNET support.

- SOC 1/2/3, ISO 27001, HIPAA, GDPR.

Integrations & Ecosystem

Central to the Azure data landscape.

- Azure SQL Database / Azure Synapse

- Azure Blob Storage / ADLS Gen2

- Microsoft Power BI

- Azure Databricks

Support & Community

Deep enterprise support from Microsoft and a vast library of “solution templates.”

#6 — Apache Flink

Short description: While famous for streaming, Flink is a powerful batch processing framework that treats batch as a “special case” of streaming, leading to exceptional performance.

Key Features

- High-Performance Batch Engine: Uses specialized algorithms for sorting and joining large datasets.

- Unified Stack: One set of code for both batch and stream, reducing maintenance overhead.

- Memory Management: Its own managed memory system reduces Java garbage-collection overhead.

- Batch APIs: Batch jobs can be written with the Table API/SQL or with the DataStream API in BATCH execution mode; the older, batch-specific DataSet API is deprecated and was removed in Flink 2.0.

- Query Optimization: A cost-based optimizer that chooses the best execution plan for your batch jobs.

- Fault Tolerance: Efficient checkpointing ensures jobs can recover quickly from failures.
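The idea that batch is a special case of streaming can be shown without Flink at all: a batch is simply a stream that ends. The plain-Python sketch below (the function name `running_even_total` is hypothetical) runs one processing definition over a bounded list and over an unbounded generator. In Flink itself, the same idea appears as the ability to run a DataStream program in BATCH execution mode, where the engine can apply batch-specific optimizations because it knows the input is bounded.

```python
from itertools import count, islice
from typing import Iterable, Iterator


def running_even_total(events: Iterable[int]) -> Iterator[int]:
    """A single processing definition: keep even values and emit a running total."""
    total = 0
    for value in events:
        if value % 2 == 0:
            total += value
            yield total


# Batch: a bounded input, so the pipeline finishes when the input is exhausted.
batch_result = list(running_even_total([1, 2, 3, 4, 5, 6]))

# Stream: an unbounded input (1, 2, 3, ...), so we take results as they arrive.
stream_result = list(islice(running_even_total(count(1)), 3))

print(batch_result, stream_result)
```

Both runs produce the same results from the same code, which is the maintenance benefit the “Unified Stack” feature refers to.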

Pros

- Offers better performance than Spark for certain complex, stateful batch operations.

- Perfect for teams that want to standardize on a single framework for all data needs.

Cons

- Smaller community compared to Spark, making it harder to find niche libraries.

- Historically, the batch API was less mature than the streaming API (though this is changing).

Platforms / Deployment

- Linux / macOS (Windows via WSL or Cygwin)

- Cloud / Self-hosted / Hybrid

Security & Compliance

- Kerberos, SSL/TLS, RBAC.

- Compliance certifications: Not publicly stated.

Integrations & Ecosystem

Broad connectivity across the big data landscape.

- Apache Kafka

- Elasticsearch

- Hadoop HDFS

- AWS S3

Support & Community

Growing community and enterprise support through Ververica and Cloudera.

#7 — dbt (data build tool)

Short description: A development framework that combines modular SQL with software engineering best practices like testing and version control to transform data that has already been loaded into a warehouse.

Key Features

- SQL-First: Allows analysts to build batch transformation pipelines using only SQL SELECT statements.

- Automated Documentation: Generates a lineage graph and documentation for your data models automatically.

- Data Testing: Built-in testing for data quality (e.g., checking for null values or unique constraints).

- Version Control: Designed to work with Git, enabling code reviews and collaboration.

- Modular Design: Encourages the reuse of code through “macros” and “packages.”

- ELT Approach: Transforms data after it has been loaded into the warehouse, utilizing the warehouse’s own power.
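To show what ELT with built-in tests looks like, here is a sketch that uses Python's standard `sqlite3` module as a stand-in warehouse. The model is a plain `SELECT` materialized as a table, and each data test is a query that returns failing rows, mirroring how dbt's `not_null` and `unique` tests pass when their query returns zero rows. This is not dbt's own API: in a real project the `SELECT` would live in a `.sql` model file and the tests would be declared in YAML. Table and column names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# "Load" step: raw data lands in the warehouse untransformed.
conn.execute("CREATE TABLE raw_orders (id INTEGER, customer TEXT, amount_cents INTEGER)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [(1, "alice", 1250), (2, "bob", 800), (3, None, 400), (4, "alice", 300)],
)

# "Transform" step: a model is just a SELECT, materialized inside the warehouse.
MODEL_SQL = """
    SELECT customer, SUM(amount_cents) / 100.0 AS revenue
    FROM raw_orders
    WHERE customer IS NOT NULL
    GROUP BY customer
"""
conn.execute(f"CREATE TABLE customer_revenue AS {MODEL_SQL}")

# Data tests: each query selects failing rows; an empty result means the test passes.
TESTS = {
    "not_null_customer": "SELECT * FROM customer_revenue WHERE customer IS NULL",
    "unique_customer": (
        "SELECT customer FROM customer_revenue GROUP BY customer HAVING COUNT(*) > 1"
    ),
}
failures = {name: conn.execute(sql).fetchall() for name, sql in TESTS.items()}
print({name: "pass" if not rows else f"fail ({len(rows)} rows)" for name, rows in failures.items()})
```

Because both the transformation and the tests run as SQL inside the warehouse, the heavy lifting uses the warehouse's own compute, which is the core of the ELT approach.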

Pros

- Empowers data analysts to do the work traditionally reserved for data engineers.

- Significantly improves data quality through rigorous testing and documentation.

Cons

- Only handles the “Transformation” part of ETL; requires other tools for data movement.

- Limited to what the underlying data warehouse (e.g., Snowflake) can support in SQL.

Platforms / Deployment

- Web / Windows / macOS / Linux

- Cloud / Self-hosted

Security & Compliance

- SSO, RBAC, Secret management.

- SOC 2 Type II (dbt Cloud).

Integrations & Ecosystem

Integrates with nearly all modern cloud data warehouses.

- Snowflake

- Google BigQuery

- Amazon Redshift

- Databricks

Support & Community

One of the most vibrant communities in modern data (dbt Slack), with extensive documentation.

#8 — Snowflake

Short description: A cloud-native data platform that provides a massive, scalable engine for running SQL-based batch transformations and analytics.

Key Features

- Multi-Cluster Shared Data: Separates compute from storage, allowing you to scale up for heavy batch jobs instantly.

- Zero-Copy Cloning: Create instant copies of your data for testing batch jobs without duplicating storage; extra storage is consumed only as the clone's data diverges from the original.

- Snowpark: Allows developers to write batch processing code in Python, Java, and Scala directly inside Snowflake.

- Automatic Query Optimization: No need to manage indexes or manual tuning; the engine handles it.

- Tasks & Streams: Built-in scheduling and change-tracking for building batch pipelines.

- Time Travel: Query data as it existed at earlier points in time (up to 90 days on Enterprise Edition and above; 1 day on Standard Edition).
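Conceptually, zero-copy cloning is copy-on-write over immutable storage: a clone starts as a new set of references to the same data blocks, and only blocks that are later modified get new storage. The toy sketch below models that idea in Python, with tuples standing in for immutable partitions. It illustrates the technique, not Snowflake's internal implementation, and `Table` and `shared_partitions` are hypothetical names. In Snowflake itself the operation is a single statement such as `CREATE TABLE orders_dev CLONE orders;` (table names hypothetical).

```python
class Table:
    """Toy table stored as a list of references to immutable partitions."""

    def __init__(self, partitions):
        # Copy the list of references only; the partition data itself is shared.
        self.partitions = list(partitions)

    def clone(self):
        # "Zero-copy": the clone points at exactly the same partition objects.
        return Table(self.partitions)

    def update_partition(self, index, rows):
        # Copy-on-write: swap in a new partition for this table only.
        self.partitions[index] = tuple(rows)


def shared_partitions(a, b):
    """Count partitions two tables still share (same object, so no extra storage)."""
    return sum(1 for p, q in zip(a.partitions, b.partitions) if p is q)


prod = Table([(("a", 1),), (("b", 2),), (("c", 3),)])
dev = prod.clone()
dev.update_partition(1, [("b", 20)])
print(shared_partitions(prod, dev))
```

After one update, the test copy still shares two of its three partitions with production, and production's data is untouched.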

Pros

- Zero maintenance; no servers to manage or software to update.

- Performance stays consistent as data volumes grow, provided virtual warehouses are sized appropriately.

Cons

- Can become very expensive if batch jobs are not optimized for compute usage.

- Proprietary platform; moving away from Snowflake can be complex.

Platforms / Deployment

- AWS / Azure / Google Cloud

- Cloud

Security & Compliance

- End-to-end encryption, MFA, SSO, Private Link.

- SOC 1/2, ISO 27001, HIPAA, PCI DSS, FedRAMP.

Integrations & Ecosystem

Huge marketplace of third-party connectors and native data sharing.

- dbt

- Fivetran / Airbyte

- Tableau / Power BI

- Informatica

Support & Community

Excellent 24/7 corporate support and a large community program of “Data Superheroes.”

#9 — Databricks

Short description: A unified Data and AI platform built on top of Apache Spark that provides a collaborative environment for batch engineering and machine learning.

Key Features

- Delta Lake: An open-source storage layer that brings ACID transactions to your batch jobs.

- Collaborative Notebooks: Share code, visualizations, and results across the team in real-time.

- Photon Engine: A next-generation vectorized query engine that, according to Databricks, can make some batch jobs up to 20x faster.

- Workflows: A fully managed orchestrator for running multi-step batch jobs and ML pipelines.

- Serverless SQL: Run SQL batch transformations without managing any underlying Spark clusters.

- Unity Catalog: A unified governance and security layer for all your data assets.
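Delta Lake gets ACID commits from an ordered transaction log: each commit is a numbered JSON file in the table's `_delta_log` directory, and a writer succeeds only if no one else has already written that version number. The sketch below imitates that optimistic-concurrency idea on a local file system using a hard link, which fails if the target already exists. It is a simplified illustration, not the Delta Lake protocol or API; the action records are reduced to bare `add`/`remove` entries, and `commit`, `latest_version`, and `CommitConflict` are hypothetical names.

```python
import json
import os
import tempfile


class CommitConflict(Exception):
    """Raised when another writer already committed the version we tried to claim."""


def commit(log_dir, version, actions):
    """Atomically publish `actions` as log entry `version`, or raise CommitConflict."""
    path = os.path.join(log_dir, f"{version:020d}.json")
    # Write the full entry to a private temp file first so readers never see a partial commit.
    fd, tmp_path = tempfile.mkstemp(dir=log_dir, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        for action in actions:
            f.write(json.dumps(action) + "\n")
    try:
        # os.link fails if `path` already exists, so at most one writer claims each version.
        os.link(tmp_path, path)
    except FileExistsError:
        raise CommitConflict(f"version {version} already committed") from None
    finally:
        os.remove(tmp_path)


def latest_version(log_dir):
    """Highest committed version, or -1 for an empty log."""
    versions = [int(name[:-5]) for name in os.listdir(log_dir) if name.endswith(".json")]
    return max(versions, default=-1)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as log:
        commit(log, 0, [{"add": {"path": "part-0.parquet"}}])
        try:
            commit(log, 0, [{"add": {"path": "part-1.parquet"}}])  # a racing writer
        except CommitConflict as err:
            print("retry needed:", err)
        print("latest version:", latest_version(log))
```

A writer that loses the race rereads the log, checks whether its changes still apply, and retries with the next version number; that retry loop is what keeps concurrent batch jobs from silently overwriting each other.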

Pros

- Provides one of the highest-performing Spark runtimes available.

- Excellent for teams where data engineers and data scientists need to work together.

Cons

- The premium features come with a significant price tag.

- Can be “overkill” for simple batch tasks that don’t require Spark’s power.

Platforms / Deployment

- AWS / Azure / Google Cloud

- Cloud

Security & Compliance

- SSO, MFA, RBAC.

- SOC 2, ISO 27001, HIPAA, GDPR.

Integrations & Ecosystem

Deeply integrated with the open-source Spark and Delta Lake ecosystems.

- dbt

- Apache Kafka

- Tableau

- Power BI

Support & Community

World-class enterprise support and a large community of Spark experts.

#10 — Apache Hive

Short description: A data warehouse software project built on top of Apache Hadoop for providing data query and analysis using a SQL-like interface.

Key Features

- HiveQL: A SQL-like language that allows users familiar with SQL to run MapReduce or Spark jobs.

- Metastore: A central repository for metadata, used by many other tools (including Spark) to understand data structure.

- LLAP (Live Long and Process): Persistent daemons with in-memory caching that can enable sub-second interactive queries over batch-loaded data.

- Partitioning: Extremely robust tools for organizing massive datasets to speed up batch reads.

- ACID Transactions: Supports insert, update, and delete operations on HDFS.

- Scalability: Like Hadoop, it is designed to scale to thousands of nodes and exabytes of data.
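Partitioning speeds up batch reads because the engine can skip whole directories. Hive stores each partition of a table in its own `key=value` directory (for example `dt=2024-01-01`), so a query filtered on the partition column only has to open the matching directories. The sketch below imitates that pruning step in Python with a hypothetical in-memory layout and made-up sales figures; in HiveQL the equivalent would be a `WHERE dt BETWEEN ...` filter on a table declared with `PARTITIONED BY (dt STRING)`.

```python
from datetime import date

# Hypothetical layout: one "directory" per partition value, each holding (region, amount) rows.
PARTITIONS = {
    "dt=2024-01-01": [("north", 120), ("south", 80)],
    "dt=2024-01-02": [("north", 95)],
    "dt=2024-01-03": [("south", 60), ("east", 40)],
}


def partition_date(name):
    """Parse the partition value out of a "key=value" directory name."""
    _, value = name.split("=", 1)
    return date.fromisoformat(value)


def total_sales(start, end):
    """Sum sales for start <= dt <= end, reading only the matching partitions."""
    scanned = [p for p in PARTITIONS if start <= partition_date(p) <= end]
    total = sum(amount for p in scanned for _, amount in PARTITIONS[p])
    return total, scanned


print(total_sales(date(2024, 1, 2), date(2024, 1, 3)))
```

The filter is evaluated against directory names alone, so the rows in `dt=2024-01-01` are never read; on a real table with years of daily partitions, that is the difference between scanning a few files and scanning everything.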

Pros

- The “standard” for metadata management in the Hadoop ecosystem.

- Great for moving legacy SQL workloads to a distributed batch environment.

Cons

- Can be slow for interactive tasks without LLAP.

- The architecture is more complex to maintain than modern cloud-native warehouses.

Platforms / Deployment

- Linux

- Self-hosted / Cloud / Hybrid

Security & Compliance

- Kerberos, Apache Ranger integration.

- Compliance certifications: Varies by distribution.

Integrations & Ecosystem

Deeply embedded in the Hadoop and big data ecosystem.

- Apache Spark

- Hadoop HDFS

- Presto / Trino

- Cloudera

Support & Community

Mature community with massive amounts of legacy knowledge and documentation.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| Apache Spark | General Batch/ML | Multi-Platform | Hybrid | In-Memory Processing | 4.8/5 |
| Apache Hadoop (MapReduce) | Massive Disk-Heavy Batch | Linux | Hybrid | HDFS Integration | 4.2/5 |
| AWS Batch | AWS Ecosystem | AWS | Cloud | Spot Instance Pricing | 4.5/5 |
| Google Cloud Dataflow | Serverless Unified Pipelines | Google Cloud | Cloud | Dynamic Work Rebalancing | 4.6/5 |
| Azure Data Factory | Low-Code Orchestration | Azure | Hybrid | Visual Authoring | 4.4/5 |
| Apache Flink | High-Performance Unified Pipelines | Multi-Platform | Hybrid | Managed Memory | 4.5/5 |
| dbt (data build tool) | SQL-Based Transformations | Multi-Platform | Hybrid | Data Testing | 4.9/5 |
| Snowflake | Zero-Maintenance SQL | AWS, Azure, GCP | Cloud | Zero-Copy Cloning | 4.7/5 |
| Databricks | Collaborative Data/AI | AWS, Azure, GCP | Cloud | Delta Lake / Photon | 4.8/5 |
| Apache Hive | Legacy SQL on Hadoop | Linux | Hybrid | Hive Metastore | 4.0/5 |

Evaluation & Scoring of Batch Processing Frameworks

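The following scoring is a weighted average of critical business and technical factors: each factor is scored out of 10, multiplied by the weight shown in its column header, and summed. The scores reflect our assessment of each tool's standing in the current enterprise market.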

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Apache Spark | 10 | 5 | 10 | 8 | 10 | 9 | 8 | 8.65 |
| Apache Hadoop | 8 | 3 | 9 | 8 | 6 | 8 | 9 | 7.35 |
| AWS Batch | 8 | 8 | 9 | 10 | 8 | 9 | 9 | 8.60 |
| Dataflow | 9 | 9 | 9 | 10 | 9 | 8 | 8 | 8.85 |
| Azure Data Factory | 7 | 10 | 10 | 10 | 7 | 9 | 8 | 8.55 |
| Apache Flink | 10 | 4 | 8 | 8 | 10 | 8 | 8 | 8.10 |
| dbt | 8 | 9 | 10 | 9 | 8 | 10 | 9 | 8.90 |
| Snowflake | 9 | 10 | 10 | 10 | 9 | 9 | 7 | 9.10 |
| Databricks | 10 | 7 | 10 | 9 | 10 | 9 | 7 | 8.90 |
| Apache Hive | 7 | 4 | 9 | 8 | 6 | 8 | 8 | 7.10 |
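The weighted total is a dot product of each row's scores with the column weights. The short Python sketch below reproduces that arithmetic so any row can be re-checked; the dictionary keys are our own shorthand for the column names, and the example applies the header weights to Apache Spark's row.

```python
import math

# Column weights from the scoring table header; they must sum to 100%.
WEIGHTS = {
    "core": 0.25, "ease": 0.15, "integrations": 0.15, "security": 0.10,
    "performance": 0.10, "support": 0.10, "value": 0.15,
}
assert math.isclose(sum(WEIGHTS.values()), 1.0)


def weighted_total(scores: dict) -> float:
    """Multiply each score (out of 10) by its column weight and sum, rounded to 2 places."""
    return round(sum(weight * scores[name] for name, weight in WEIGHTS.items()), 2)


spark = dict(core=10, ease=5, integrations=10, security=8,
             performance=10, support=9, value=8)
print(weighted_total(spark))
```

Running the same function over every row is a quick way to confirm that a published total agrees with its component scores.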

How to interpret these scores:

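- dbt scores 9 on both “Value” and “Ease” and a perfect 10 on “Support,” largely because it lets SQL analysts do work once reserved for engineers.

- Google Cloud Dataflow earns a perfect 10 on “Security” and strong 9s on “Core,” “Ease,” and “Performance,” reflecting its serverless, hands-off approach.

- Apache Spark, together with Flink and Databricks, scores a perfect 10 on both “Performance” and “Core,” keeping it a gold standard for complex logic.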

Which Batch Processing Framework Tool Is Right for You?

Solo / Freelancer

If you are a solo consultant, Snowflake or dbt Cloud are your best friends. They allow you to build enterprise-grade batch pipelines with almost zero infrastructure management, allowing you to focus on the data logic rather than the plumbing.

SMB

For small to medium businesses, Azure Data Factory or AWS Batch offer the best balance of cost and ease. You can leverage the power of the cloud without needing a dedicated team of five data engineers just to keep the cluster running.

Mid-Market

If your team is growing and you have a mix of SQL analysts and Python developers, Databricks is the ideal choice. It provides the collaboration features needed for a growing team while handling the underlying Spark complexity for you.

Enterprise

For large-scale enterprises with massive legacy data, a hybrid approach using Apache Spark for core processing and Apache Hive for metadata management is common. For new projects, Google Cloud Dataflow offers the best future-proof, serverless path.

Budget vs Premium

- Budget: Apache Spark (Open Source), dbt Core (Open Source), Apache Hadoop.

- Premium: Databricks, Snowflake, Azure Data Factory.

Feature Depth vs Ease of Use

- Technical Depth: Apache Flink, Apache Spark, Apache Hadoop.

- Extreme Ease: Azure Data Factory, dbt.

Integrations & Scalability

- Top Scalability: Apache Spark, Google Cloud Dataflow, Snowflake.

- Top Integrations: Azure Data Factory, Snowflake.

Security & Compliance Needs

Organizations in banking or healthcare should prioritize Snowflake, AWS Batch, or Google Cloud Dataflow, as these platforms provide the most “out-of-the-box” compliance certifications and audit controls.

Frequently Asked Questions (FAQs)

- What is the difference between batch and streaming processing?

Batch processing handles large amounts of data at once at scheduled times, while streaming processing handles data continuously as it is generated. Batch is usually more cost-effective for deep historical analysis.

- How do I choose between Spark and Hadoop?

Choose Spark if you need high-speed, iterative processing and have plenty of RAM. Choose Hadoop MapReduce if your datasets are far larger than your cluster's memory and you can tolerate slower results.

- What does “Serverless Batch” actually mean?

It means you provide the code and the data, and the cloud provider handles all the servers, scaling, and maintenance. You typically pay only for the compute your job actually consumes, often billed in per-second increments.

- Is SQL powerful enough for complex batch processing?

With modern frameworks like Snowflake and dbt, SQL is extremely powerful. Most common business transformations and even some machine learning tasks can now be done entirely in SQL.

- How do these frameworks handle job failures?

Most frameworks use “checkpointing” or “lineage.” If a job fails, the system knows exactly which part was finished and can restart from that point instead of starting the whole multi-hour job over.
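Lineage-based recovery is the approach Spark's RDDs are known for: instead of saving every intermediate result, the engine remembers how each partition was derived and recomputes only what was lost. The toy sketch below captures that idea in Python. `Dataset`, `map`, `partition`, and `lose_partition` are hypothetical names that only loosely echo Spark's API, and real engines also handle shuffles, persistence levels, and periodic checkpoints to cap recomputation cost.

```python
class Dataset:
    """Toy partitioned dataset that records lineage instead of saving intermediate results."""

    def __init__(self, source_partitions, transforms=()):
        self.source = source_partitions      # durable input, e.g. files on shared storage
        self.transforms = tuple(transforms)  # lineage: the ordered functions to re-apply
        self.cache = {}                      # computed partitions held "in memory"

    def map(self, fn):
        # Transformations are lazy: they only extend the lineage.
        return Dataset(self.source, self.transforms + (fn,))

    def partition(self, i):
        # Compute a partition on demand by replaying its lineage from the source.
        if i not in self.cache:
            rows = self.source[i]
            for fn in self.transforms:
                rows = [fn(r) for r in rows]
            self.cache[i] = rows
        return self.cache[i]

    def lose_partition(self, i):
        # Simulate a worker failure that wipes out one computed partition.
        self.cache.pop(i, None)


ds = Dataset([[1, 2], [3, 4]]).map(lambda x: x * 10).map(lambda x: x + 1)
print([ds.partition(i) for i in range(2)])
ds.lose_partition(1)
print(ds.partition(1))  # rebuilt from the source using the recorded lineage
```

Only partition 1 is recomputed after the simulated failure; partition 0 stays cached and the rest of the job does not restart.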

- Can I run these frameworks on my own local servers?

Yes, open-source frameworks like Spark, Hadoop, and Flink can be run on-premises. However, cloud-managed services (AWS Batch, Dataflow) are strictly cloud-based.

- How does “FinOps” relate to batch processing?

FinOps is about managing cloud costs. Batch processing is a key FinOps strategy because it allows you to use “Spot Instances” (cheap, spare capacity) and run jobs when energy costs are lower.

- What is a “Data Contract”?

A data contract is a formal agreement between the data producer and the batch process. It defines the schema and quality standards the data must meet before it is processed.
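A data contract becomes useful once it is checked automatically at the start of a batch run. The sketch below expresses a hypothetical contract for an `orders` feed as a tuple of field specs and quarantines any record that violates it before processing begins. The field names and the `FieldSpec`, `violations`, and `split_batch` helpers are illustrative assumptions; production setups often use a schema library or the platform's built-in constraints instead.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    name: str
    kind: type
    nullable: bool = False


# Hypothetical contract agreed with the team that produces the "orders" feed.
ORDERS_CONTRACT = (
    FieldSpec("order_id", int),
    FieldSpec("amount", float),
    FieldSpec("coupon", str, nullable=True),
)


def violations(record: dict, contract=ORDERS_CONTRACT) -> list[str]:
    """List every way a record breaks the contract (an empty list means it complies)."""
    problems = []
    for spec in contract:
        if spec.name not in record:
            problems.append(f"missing field {spec.name!r}")
            continue
        value = record[spec.name]
        if value is None:
            if not spec.nullable:
                problems.append(f"{spec.name!r} must not be null")
        elif not isinstance(value, spec.kind):
            problems.append(
                f"{spec.name!r} expected {spec.kind.__name__}, got {type(value).__name__}"
            )
    return problems


def split_batch(records):
    """Route compliant records onward and quarantine the rest before processing."""
    valid, quarantined = [], []
    for record in records:
        (quarantined if violations(record) else valid).append(record)
    return valid, quarantined


batch = [
    {"order_id": 1, "amount": 9.99, "coupon": None},
    {"order_id": "2", "amount": 5.0},
]
valid, quarantined = split_batch(batch)
print(len(valid), len(quarantined), violations(batch[1]))
```

Quarantining bad records, rather than failing the whole run, keeps the “garbage-in” data out of the downstream lake while still giving the producer a precise list of what broke the contract.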

- Why is dbt so popular compared to traditional ETL?

dbt is popular because it uses “ELT” (Extract, Load, Transform). It moves the transformation into the data warehouse where it’s faster and allows people to use SQL instead of complex coding languages.

- Do I need to be a developer to use these tools?

For tools like Apache Spark, yes. For tools like Azure Data Factory or dbt, you primarily need to know SQL or be comfortable with visual interfaces, making them accessible to data analysts.

Conclusion

The era of manual, fragile batch scripts is over. Modern batch processing frameworks like Apache Spark and Google Cloud Dataflow have made it possible to process exabytes of data with high reliability and efficiency. Whether you choose the “code-first” power of Spark or the “SQL-first” simplicity of dbt, the key is to build a pipeline that is modular, testable, and cost-aware.

As we move deeper into the age of AI, the ability to process high-quality historical data at scale will be the primary competitive advantage. Your choice of framework should not just solve today’s ETL problem, but provide the foundation for the massive data demands of tomorrow’s machine learning models.
