Introduction

Machine Learning (ML) platforms are integrated environments that provide the infrastructure, tools, and workflows necessary to build, train, deploy, and manage machine learning models at scale. These platforms have evolved beyond simple model-building environments into “LLMOps” and “Agentic” hubs. They act as the foundational layer for enterprise artificial intelligence, abstracting the complexity of underlying compute resources (GPUs/TPUs) while providing a unified interface for data scientists, ML engineers, and developers.

The shift toward generative AI and large-scale foundation models has transformed the value proposition of these platforms. They are no longer just for predictive analytics; they are the engines powering autonomous agents, real-time recommendation systems, and sophisticated natural language interfaces. For organizations, choosing the right platform is a strategic decision that impacts speed-to-market, operational costs, and the ability to maintain “Responsible AI” standards.

Real-World Use Cases:

- Predictive Maintenance: Analyzing sensor data from industrial assets to predict failure before it occurs.

- Dynamic Personalization: Real-time adjustment of e-commerce storefronts based on user behavior and intent.

- Medical Imaging: Automating the detection of anomalies in X-rays or MRIs with high precision.

- Algorithmic Trading: Processing millions of market signals per second to execute high-frequency trades.

- Customer Support Agents: Powering sophisticated LLM-based agents that can resolve complex support tickets autonomously.

Evaluation Criteria for Buyers:

- Compute Orchestration: Efficiency in managing expensive GPU clusters and serverless scaling.

- MLOps Capabilities: Robustness of versioning, monitoring, and automated retraining pipelines.

- Foundation Model Support: Native integration with LLMs and the ability to perform fine-tuning or RAG (Retrieval-Augmented Generation).

- Data Labeling & Preparation: Built-in tools for data cleaning and high-quality labeling.

- Security & Governance: Controls for model bias detection, explainability, and data privacy.

- Interoperability: Support for open-source frameworks like PyTorch, TensorFlow, and Scikit-learn.

- Deployment Flexibility: Ability to deploy at the edge, on-premises, or across multiple clouds.

- Cost Management: Granular visibility into training and inference spending.

- Ease of Use: Balance between low-code interfaces and deep technical control for researchers.

- Ecosystem Strength: Availability of pre-trained models and a marketplace for specialized algorithms.

Best for: Data science teams, AI engineers, and enterprises looking to centralize their AI lifecycle and move models from experimental notebooks to high-availability production environments.

Not ideal for: Small businesses with simple, static reporting needs or developers who only require a single API call for an existing model without needing to customize or manage the underlying infrastructure.

Key Trends in Machine Learning Platforms

- LLMOps Integration: Platforms are now prioritizing the lifecycle management of Large Language Models, including prompt engineering, fine-tuning, and vector database orchestration.

- Agentic Frameworks: The rise of autonomous AI agents has forced platforms to incorporate orchestration layers that manage multi-step, goal-oriented AI behaviors.

- Sustainable AI (Green ML): New features that optimize training jobs to reduce carbon footprints and energy consumption on massive GPU clusters.

- Edge AI Convergence: Seamless “one-click” deployment from cloud-trained models to specialized AI chips in mobile devices and industrial hardware.

- Automated Responsible AI: Built-in, real-time guardrails that detect hallucinations, bias, and toxic outputs before they reach the end-user.

- Serverless Model Inference: Moving away from persistent clusters toward highly elastic, pay-per-request inference models that significantly lower operational costs.

- Low-Code/No-Code AI Expansion: The democratization of AI through drag-and-drop interfaces that allow business analysts to build complex predictive models.

- GPU Sharing & Fractionalization: Advanced orchestration that allows multiple smaller training jobs to share a single high-end GPU, maximizing resource utilization.
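To make the GPU sharing trend concrete, here is a minimal sketch that packs several small training jobs onto as few GPUs as possible with a first-fit-decreasing heuristic. It is an illustration only, not how any particular platform schedules work: the job names, memory figures, and the 80 GB capacity are hypothetical, and real schedulers also weigh compute share, isolation, and priorities.

```python
def pack_jobs(jobs, gpu_memory_gb=80):
    """Assign jobs to GPUs using first-fit decreasing on memory demand.

    jobs: dict mapping a (hypothetical) job name to its memory need in GB.
    Returns a list of GPUs, each represented as a list of job names.
    """
    gpus = []  # each entry: {"free": remaining GB, "jobs": [names]}
    # Place the largest jobs first; this usually wastes less capacity.
    for name, need in sorted(jobs.items(), key=lambda kv: kv[1], reverse=True):
        if need > gpu_memory_gb:
            raise ValueError(f"{name} needs more memory than one GPU offers")
        for gpu in gpus:
            if gpu["free"] >= need:
                gpu["free"] -= need
                gpu["jobs"].append(name)
                break
        else:
            # No existing GPU has room, so allocate a new one.
            gpus.append({"free": gpu_memory_gb - need, "jobs": [name]})
    return [gpu["jobs"] for gpu in gpus]


# Hypothetical workload: five small fine-tuning jobs (memory in GB).
jobs = {"job_a": 40, "job_b": 30, "job_c": 20, "job_d": 35, "job_e": 10}
placement = pack_jobs(jobs)
print(placement)  # [['job_a', 'job_d'], ['job_b', 'job_c', 'job_e']]
```

In this toy workload, five jobs that would otherwise each occupy a dedicated GPU fit on two.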

How We Selected These Tools (Methodology)

To identify the premier machine learning platforms for this guide, we applied a rigorous selection methodology designed to highlight the most credible and technologically advanced solutions.

- Production Viability: We prioritized platforms that have a proven track record of handling high-traffic production workloads.

- Technological Innovation: We evaluated the speed at which platforms have integrated modern generative AI and LLMOps features.

- Administrative Robustness: Preference was given to tools with enterprise-grade security, identity management, and compliance frameworks.

- Framework Support: The tools selected support a broad range of open-source frameworks, ensuring no vendor lock-in at the code level.

- Market Share & Credibility: We analyzed adoption rates among Fortune 500 companies and tech-forward startups.

- Operational Efficiency: We looked for platforms that provide strong automation features for the “boring” parts of ML, such as infrastructure provisioning and model monitoring.

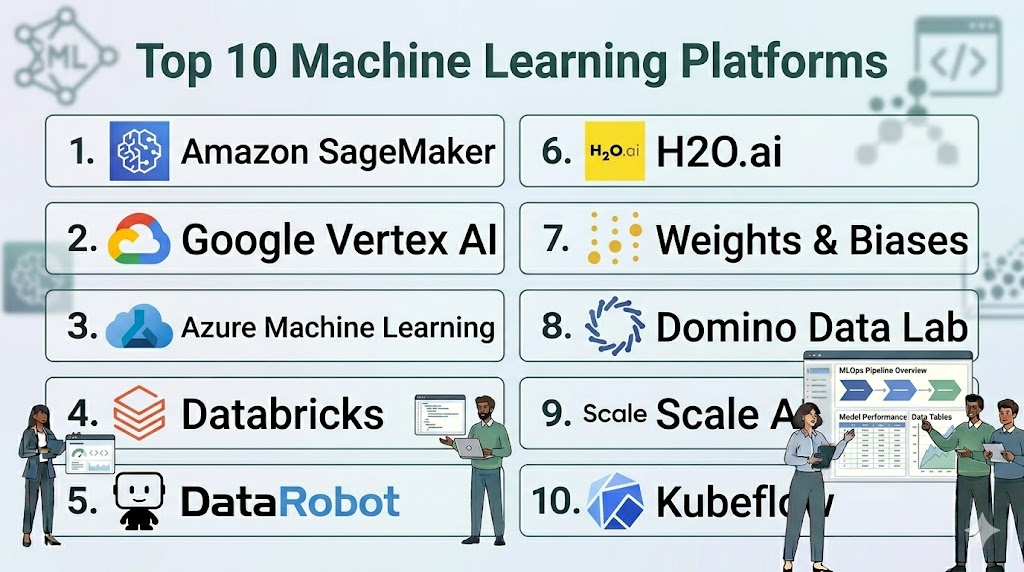

Top 10 Machine Learning Platforms

#1 — Amazon SageMaker

Short description: A fully managed service that provides every developer and data scientist with the ability to build, train, and deploy machine learning models quickly.

Key Features

- SageMaker Studio: A unified web-based IDE for the entire ML lifecycle.

- Autopilot: Automatically builds, trains, and tunes the best ML models based on your data.

- Model Monitor: Detects quality deviations in your models and triggers alerts for retraining.

- JumpStart: A hub for pre-trained models and solution templates, including popular LLMs.

- Clarify: Provides bias detection and model explainability throughout the ML workflow.

- Serverless Inference: Allows for model deployment without managing any underlying infrastructure.

Pros

- Deepest integration with the vast AWS ecosystem of data and compute services.

- Highly scalable, capable of handling the largest training jobs in the world.

Cons

- The sheer number of features can lead to a cluttered and complex user experience.

- Costs can become opaque without rigorous tagging and budget management.

Platforms / Deployment

- AWS

- Cloud / Hybrid (via AWS Outposts)

Security & Compliance

- VPC isolation, IAM roles, KMS encryption, SSO.

- SOC 1/2/3, ISO 27001, HIPAA, FedRAMP High.

Integrations & Ecosystem

SageMaker is the center of the AWS AI stack, connecting to everything from S3 to Redshift.

- AWS Glue / Lake Formation

- Amazon S3 / Redshift

- Hugging Face

- Terraform

Support & Community

Massive AWS support network, extensive documentation, and a global community of certified practitioners.

#2 — Google Vertex AI

Short description: Google’s unified AI platform that brings together AutoML and AI Platform into a single environment for building and scaling AI models.

Key Features

- Model Garden: A curated collection of foundation models (Gemini, PaLM) and open-source models.

- AutoML: Enables developers with limited ML expertise to train high-quality models specific to their business needs.

- Vector Search: Industry-leading similarity search for building RAG applications at scale.

- Feature Store: A centralized repository for sharing and discovering ML features across teams.

- Pipelines: Orchestrates the ML workflow using a serverless approach.

- Workbench: A Jupyter-based environment for experimentation and development.

Pros

- Best-in-class support for Google’s specialized TPU (Tensor Processing Unit) hardware.

- Seamless integration with BigQuery for “ML-on-the-data” workflows.

Cons

- Can feel less mature for non-TensorFlow frameworks compared to competitors.

- Documentation can sometimes lag behind the rapid pace of feature releases.

Platforms / Deployment

- Google Cloud

- Cloud / Hybrid (via Anthos)

Security & Compliance

- VPC Service Controls, CMEK, IAM.

- SOC 2, ISO 27001, HIPAA, PCI DSS.

Integrations & Ecosystem

Deeply integrated with the Google Cloud data ecosystem and the open-source community.

- BigQuery / Looker

- Cloud Storage

- Kubeflow

- TensorFlow

Support & Community

Strong corporate support and a community driven by Google’s leadership in AI research.

#3 — Microsoft Azure Machine Learning

Short description: An enterprise-grade AI service for the end-to-end ML lifecycle, with a heavy emphasis on collaborative development and governance.

Key Features

- Azure AI Studio: A high-level interface specifically for building generative AI solutions.

- Designer: A drag-and-drop interface for building pipelines without writing code.

- Prompt Flow: A specialized tool for developing and monitoring LLM-based applications.

- Managed Endpoints: Simplifies model deployment for both real-time and batch inference.

- Responsible AI Dashboard: Tools for debugging models and ensuring fairness and transparency.

- Automated ML: Quickly identifies the best algorithm and hyperparameters for your data.

Pros

- Superior integration for companies already using the Microsoft 365 or Azure stacks.

- Strongest focus on enterprise “Responsible AI” and governance tools.

Cons

- The transition between different interfaces (Studio vs. Classic) can be confusing.

- Some advanced features have a steep learning curve for non-Azure users.

Platforms / Deployment

- Azure

- Cloud / Hybrid / Edge (via Azure IoT Edge)

Security & Compliance

- Microsoft Entra ID (formerly Azure Active Directory), RBAC, VNETs.

- SOC 2, ISO 27001, HIPAA, FedRAMP.

Integrations & Ecosystem

Tightly coupled with the Microsoft data and developer ecosystem.

- Azure Databricks

- Power BI / Synapse Analytics

- GitHub / Azure DevOps

- ONNX Runtime

Support & Community

Extensive enterprise support and a large community supported by Microsoft’s developer outreach.

#4 — Databricks (Mosaic AI)

Short description: A “Data Intelligence” platform that unifies data engineering, data science, and AI on a single lakehouse architecture.

Key Features

- Mosaic AI Model Training: Tools for pre-training or fine-tuning foundation models on your own data.

- MLflow: The industry-standard open-source platform for managing the ML lifecycle.

- Unity Catalog: Unified governance for data, models, and features.

- Feature Store: Integrated into the lakehouse for consistent feature engineering.

- Vector Search: Integrated vector database for building high-performance RAG apps.

- Databricks Workflows: Orchestrates complex data and ML pipelines in a single view.

Pros

- Eliminates the “data silos” between engineering and AI teams.

- Highly performant for massive-scale data processing and model training.

Cons

- Primarily focused on high-scale use cases; can be overkill for small projects.

- Infrastructure management (clusters) requires more technical oversight than “pure” serverless tools.

Platforms / Deployment

- AWS / Azure / Google Cloud

- Cloud

Security & Compliance

- Unity Catalog (RBAC), SSO, MFA, Customer-managed keys.

- SOC 2 Type II, ISO 27001, HIPAA.

Integrations & Ecosystem

Built on open-source standards, ensuring high portability.

- Apache Spark / Delta Lake

- MLflow

- dbt

- Tableau / Power BI

Support & Community

Excellent professional services and a massive community built around the Spark and MLflow ecosystems.

#5 — DataRobot

Short description: An AI platform focused on value-driven AI, offering extensive automation for building, deploying, and managing models for business impact.

Key Features

- Automated Machine Learning (AutoML): High-speed model discovery and optimization.

- Generative AI Hub: Specialized tools for evaluating and deploying LLMs safely.

- MLOps Monitoring: Real-time tracking of model performance and drift across all environments.

- AI App Builder: No-code tools for turning models into interactive business applications.

- Bias Mitigation: Proactive tools to detect and fix unfairness in models.

- Time Series Pro: Sophisticated forecasting capabilities for complex business data.

Pros

- Extremely high productivity for business analysts and small data teams.

- Strongest “business value” focus, including ROI tracking for AI projects.

Cons

- Can feel restrictive for “code-first” researchers who want total control.

- Licensing costs are high and generally target the enterprise market.

Platforms / Deployment

- AWS / Azure / GCP / On-prem

- Cloud / Self-hosted / Hybrid

Security & Compliance

- RBAC, Encryption, SSO.

- SOC 2 Type II, HIPAA, ISO 27001.

Integrations & Ecosystem

Designed to sit on top of any existing data stack.

- Snowflake / BigQuery

- Tableau / Looker

- Slack / Microsoft Teams

- Python / R SDKs

Support & Community

Highly rated customer success teams and a strong community of business AI leaders.

#6 — H2O.ai

Short description: An open-source-first ML platform known for its high-performance algorithms and powerful “Driverless AI” automation.

Key Features

- H2O Driverless AI: Advanced AutoML that performs automated feature engineering and model tuning.

- H2O-3: The core open-source distributed machine learning engine.

- Hydrogen Torch: A specialized tool for building deep learning models for vision and NLP.

- LLM Studio: A no-code interface for fine-tuning large language models.

- Wave: A framework for building real-time AI applications with Python.

- MLOps: Centrally manage, deploy, and govern models regardless of where they were trained.

Pros

- Legendary performance for structured/tabular data competitions (Kaggle-style).

- Flexible open-source core allows for deep customization and research.

Cons

- The product suite can feel fragmented with different names for various tools.

- The advanced “Driverless AI” requires a significant technical foundation to configure optimally.

Platforms / Deployment

- Windows / macOS / Linux / Cloud

- Self-hosted / Cloud / Hybrid

Security & Compliance

- LDAP/Active Directory, RBAC, Encryption.

- Not publicly stated.

Integrations & Ecosystem

Strong roots in the data science research community.

- R / Python / Scala

- Hadoop / Spark

- Snowflake

- Kubernetes

Support & Community

Excellent technical community and specialized support for enterprise customers.

#7 — Weights & Biases (W&B)

Short description: An “AI Developer Platform” that focuses on the experimentation, tracking, and collaboration needs of high-performance ML teams.

Key Features

- Experiments: Automatically track hyperparameters, code, and metrics for every run.

- Artifacts: Manage and version datasets and model weights across the team.

- Sweeps: Automated hyperparameter optimization that scales across any infrastructure.

- Reports: Collaborative dashboards for sharing ML progress with stakeholders.

- Prompts: Specifically designed to track and visualize LLM inputs and outputs.

- Tables: Interactive tool for visualizing and querying large ML datasets.

Pros

- The “gold standard” for experiment tracking; loved by developers for its simplicity.

- Infrastructure-agnostic; works with SageMaker, Vertex AI, or local GPUs.

Cons

- Not a “full-stack” platform; requires other tools for actual compute and training.

- Data privacy concerns for some enterprises regarding metadata stored on W&B servers.

Platforms / Deployment

- Web / Linux / macOS

- Cloud / Self-hosted

Security & Compliance

- SSO, MFA, Customer-managed storage for artifacts.

- SOC 2 Type II.

Integrations & Ecosystem

Integrates with nearly every popular ML library and platform.

- PyTorch / TensorFlow / Keras

- Hugging Face

- Amazon SageMaker / Google Vertex AI

- Kubeflow / Ray

Support & Community

Cult-like following among ML researchers and highly responsive developer support.

#8 — Domino Data Lab

Short description: An “Enterprise AI Platform” designed specifically for large, regulated industries to centralize and govern AI at scale.

Key Features

- Workbench: Provides self-service access to infrastructure and preferred tools (Jupyter, RStudio).

- Model API: High-availability hosting for real-time model deployment.

- Knowledge Center: A searchable library of all past experiments and code within the company.

- Governance & Compliance: Automated audit logs and approval workflows for model deployment.

- Hybrid Cloud Orchestration: Seamlessly move workloads between on-prem and cloud.

- Data Sources: Secure, centralized access to enterprise databases without local copies.

Pros

- Best-in-class for highly regulated industries (Pharma, Finance, Insurance).

- Preserves institutional knowledge by making all historical AI work searchable.

Cons

- User interface can feel a bit dated compared to modern “AI Studio” tools.

- High infrastructure overhead; requires significant resources to maintain the platform.

Platforms / Deployment

- AWS / Azure / GCP / On-prem

- Self-hosted / Cloud / Hybrid

Security & Compliance

- SSO, RBAC, Air-gapped installation support, Encryption.

- SOC 2 Type II, HIPAA.

Integrations & Ecosystem

Focused on enterprise technology and research tools.

- Jupyter / RStudio / VS Code

- SAS / MATLAB

- Snowflake / Teradata

- Kubernetes

Support & Community

High-touch professional services and a strong network of enterprise data science leaders.

#9 — Scale AI

Short description: A “Data Engine” platform that has expanded from high-quality labeling into a full suite for building and fine-tuning foundation models.

Key Features

- Scale Data Engine: The gold standard for human-in-the-loop data labeling.

- Scale Donovan: An AI-powered decision-making platform for government and defense.

- Scale GenAI Platform: Tools for RLHF (Reinforcement Learning from Human Feedback) and fine-tuning.

- Nucleus: A tool for visualizing and managing datasets to find edge cases.

- Model Evaluation: Specialized frameworks for testing the safety and performance of LLMs.

- Custom LLMs: Bespoke model development for specific enterprise domains.

Pros

- The undisputed leader in data quality and RLHF, essential for high-end LLM work.

- Unique focus on mission-critical sectors like defense and autonomous driving.

Cons

- Can be extremely expensive for high-volume data labeling tasks.

- Less focused on “standard” tabular ML compared to AWS or DataRobot.

Platforms / Deployment

- Web / Cloud

- Cloud / Hybrid

Security & Compliance

- SSO, MFA, FedRAMP, Air-gapped labeling support.

- SOC 2 Type II, ISO 27001.

Integrations & Ecosystem

Connected to the data sources that fuel model training.

- S3 / Google Cloud Storage

- PyTorch / TensorFlow

- Hugging Face

- Snowflake

Support & Community

White-glove support for enterprise clients and deep ties to the top AI research labs.

#10 — Kubeflow

Short description: An open-source, Kubernetes-native platform for making deployments of machine learning workflows simple, portable, and scalable.

Key Features

- Kubeflow Pipelines: A platform for building and deploying portable, scalable ML workflows.

- KServe (formerly KFServing): High-performance model serving on Kubernetes.

- Notebooks: Integrated Jupyter notebooks within the Kubernetes cluster.

- Katib: A framework for automated hyperparameter tuning and architecture search.

- Central Dashboard: A web-based UI for managing all components of the platform.

- Multi-tenancy: Isolated environments for different teams within the same cluster.
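Pipeline tools of this kind generally model a workflow as a directed acyclic graph of steps and run each step only after its dependencies finish. The sketch below is not the Kubeflow Pipelines SDK; it uses Python's standard-library `graphlib` to show the ordering idea, and every step name is hypothetical.

```python
from graphlib import TopologicalSorter

# Each key is a pipeline step; its value is the set of steps it depends on.
# All step names are hypothetical.
pipeline = {
    "ingest": set(),
    "validate": {"ingest"},
    "featurize": {"ingest"},
    "train": {"validate", "featurize"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
}

# static_order() yields the steps so that dependencies always come first.
order = list(TopologicalSorter(pipeline).static_order())
print(order)
```

The `validate` and `featurize` steps do not depend on each other, so an orchestrator would be free to run them in parallel.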

Pros

- Completely free and open-source, with no vendor lock-in.

- The standard for organizations that want to run ML on-premises using Kubernetes.

Cons

- Extremely difficult to install and maintain without a dedicated DevOps team.

- The user experience is less polished and cohesive than commercial alternatives.

Platforms / Deployment

- Linux / Cloud (via Kubernetes)

- Self-hosted / Hybrid

Security & Compliance

- Dependent on Kubernetes configuration (Istio, RBAC, etc.).

- Varies / N/A.

Integrations & Ecosystem

Deeply rooted in the cloud-native and Kubernetes ecosystem.

- Kubernetes / Docker

- Argo Workflows

- Istio

- TensorFlow / PyTorch / XGBoost

Support & Community

Massive open-source community with contributions from Google, IBM, and Red Hat.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| Amazon SageMaker | AWS Ecosystem | AWS | Hybrid | Autopilot & Studio | 4.6/5 |
| Google Vertex AI | TPU Power & Search | Google Cloud | Hybrid | Model Garden | 4.5/5 |
| Azure Machine Learning | Enterprise Governance | Azure | Hybrid | Prompt Flow | 4.5/5 |
| Databricks | Lakehouse / Big Data | AWS, Azure, GCP | Cloud | Unity Catalog | 4.7/5 |
| DataRobot | Business Value / ROI | Multi-Cloud | Hybrid | Automated ML | 4.4/5 |
| H2O.ai | Tabular Data / Research | Multi-Platform | Hybrid | Driverless AI | 4.3/5 |
| Weights & Biases | Experiment Tracking | Web, Linux | Hybrid | Experiment Dashboard | 4.8/5 |
| Domino Data Lab | Regulated Industries | Multi-Cloud | Hybrid | Knowledge Center | 4.2/5 |
| Scale AI | Data Quality / RLHF | Web | Cloud | RLHF Expertise | 4.7/5 |
| Kubeflow | Kubernetes Natives | Linux | Self-hosted | Pipeline Portability | 4.0/5 |

Evaluation & Scoring of Machine Learning Platforms

The following scoring model evaluates each platform based on the weights established for modern enterprise AI requirements.

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| SageMaker | 10 | 6 | 10 | 10 | 10 | 9 | 7 | 8.85 |
| Vertex AI | 9 | 7 | 9 | 9 | 10 | 8 | 8 | 8.55 |
| Azure ML | 9 | 7 | 10 | 10 | 9 | 9 | 8 | 8.80 |
| Databricks | 9 | 6 | 10 | 9 | 10 | 9 | 7 | 8.50 |
| DataRobot | 8 | 10 | 8 | 9 | 8 | 9 | 6 | 8.20 |
| H2O.ai | 9 | 6 | 8 | 7 | 10 | 7 | 8 | 7.95 |
| W&B | 7 | 9 | 10 | 8 | 8 | 9 | 9 | 8.45 |
| Domino | 8 | 5 | 8 | 10 | 8 | 9 | 6 | 7.55 |
| Scale AI | 10 | 6 | 8 | 9 | 9 | 9 | 6 | 8.20 |
| Kubeflow | 8 | 3 | 9 | 7 | 9 | 6 | 10 | 7.50 |

How to Interpret the Scores:

- Weighted Total: A score above 8.5 indicates a global leader in the space.

- Core (25%): Reflects the technical depth and breadth of the ML lifecycle features.

- Value (15%): Analyzes the ROI; open-source tools like Kubeflow score high here, while premium tools like DataRobot score lower.

- Ease (15%): Higher scores reflect automation and low-code capabilities.
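For readers who want to rerun the arithmetic or apply their own weights, the weighted total is the sum of each category score multiplied by its weight. The sketch below uses the column weights from the table and recomputes two rows as a check; the function and variable names are illustrative.

```python
# Column weights from the scoring table, in percent so the arithmetic stays exact.
WEIGHTS = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}


def weighted_total(scores):
    """Return the weighted total on the table's 0-10 scale."""
    return sum(scores[name] * weight for name, weight in WEIGHTS.items()) / 100


# Category scores copied from the Vertex AI and Domino rows.
vertex_ai = {"core": 9, "ease": 7, "integrations": 9, "security": 9,
             "performance": 10, "support": 8, "value": 8}
domino = {"core": 8, "ease": 5, "integrations": 8, "security": 10,
          "performance": 8, "support": 9, "value": 6}

print(weighted_total(vertex_ai))  # 8.55
print(weighted_total(domino))     # 7.55
```

Changing the values in `WEIGHTS` (keeping the sum at 100) lets a team re-rank the platforms against its own priorities.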

Which Machine Learning Platform Tool Is Right for You?

Solo / Freelancer

If you are an individual practitioner, Weights & Biases combined with a local machine or a basic cloud instance is the most efficient way to work. It keeps you organized without the overhead of an enterprise platform. Google Vertex AI is also a strong choice for its generous free tier and easy-to-use AutoML.

SMB

Small and medium businesses should prioritize DataRobot or Azure Machine Learning. These platforms offer the highest level of automation, allowing a small team to perform like a much larger one. They handle the infrastructure “plumbing” so your team can focus on business problems.

Mid-Market

For companies with a dedicated data science team but not an infinite budget, Databricks or H2O.ai offer the best balance. They provide deep technical control and performance while integrating into modern data architectures.

Enterprise

Large, global enterprises already committed to a cloud provider should look to Amazon SageMaker, Google Vertex AI, or Azure Machine Learning. These offer the scale, compliance, and security that massive organizations require. For those in highly regulated fields like Pharma or Finance, Domino Data Lab is often the gold standard.

Budget vs Premium

- Budget: Kubeflow (Free), H2O-3 (Open-source core).

- Premium: Scale AI, DataRobot, Domino Data Lab.

Feature Depth vs Ease of Use

- Maximum Depth: SageMaker, Vertex AI, Kubeflow.

- Highest Ease: DataRobot, H2O Driverless AI.

Integrations & Scalability

- Best Integrations: Databricks, Weights & Biases.

- Best Scalability: Amazon SageMaker, Google Vertex AI.

Security & Compliance Needs

Organizations with extreme security requirements (e.g., Department of Defense or major banks) should prioritize Azure Machine Learning, Domino Data Lab, or Scale AI, as they offer air-gapped and high-compliance deployment options.

Frequently Asked Questions (FAQs)

1. What is the difference between a Machine Learning platform and an ML library?

A library (like PyTorch or Scikit-learn) is a set of code for building models, whereas a platform (like SageMaker) is the entire environment that provides servers, storage, monitoring, and security for those models.

2. Do I need to be a coder to use these platforms?

Not necessarily. Platforms like DataRobot and Azure ML Designer offer “no-code” or “low-code” interfaces, though a basic understanding of data science concepts is still required to get good results.

3. How do these platforms handle the high cost of GPUs?

Most modern platforms combine several cost controls: discounted “spot” instances for training jobs that can tolerate interruption, serverless inference that bills per request, and cluster auto-scaling that shuts down expensive hardware as soon as your job is finished.
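Whether pay-per-request inference is actually cheaper than an always-on endpoint depends on traffic volume. The sketch below works through the break-even arithmetic; every price in it is hypothetical and chosen only to make the calculation easy to follow, so substitute your provider's real rates.

```python
HOURS_PER_MONTH = 730  # average number of hours in a month


def always_on_cost(hourly_rate):
    """Monthly cost of an endpoint that runs around the clock."""
    return hourly_rate * HOURS_PER_MONTH


def serverless_cost(requests, price_per_1k):
    """Monthly cost when billing is purely per request."""
    return requests / 1000 * price_per_1k


def break_even_requests(hourly_rate, price_per_1k):
    """Monthly request volume at which both options cost the same."""
    return always_on_cost(hourly_rate) / price_per_1k * 1000


# Hypothetical prices: $1.50 per instance-hour, $0.50 per 1,000 requests.
hourly_rate, price_per_1k = 1.5, 0.5

print(always_on_cost(hourly_rate))                     # 1095.0
print(serverless_cost(500_000, price_per_1k))          # 250.0
print(break_even_requests(hourly_rate, price_per_1k))  # 2190000.0
```

Under these assumed prices, serverless is cheaper below roughly 2.19 million requests per month, and the always-on endpoint wins above that.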

4. Can I use these platforms to build Large Language Models (LLMs)?

Yes, platforms like Google Vertex AI and Databricks now have specialized “Generative AI” hubs for fine-tuning LLMs and building RAG (Retrieval-Augmented Generation) applications.
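The retrieval step in RAG can be sketched in a few lines: find the stored text most similar to the question, then place it in the prompt. This toy version uses word counts as a stand-in for embeddings and a plain list as a stand-in for a vector database. Production systems use a learned embedding model and a managed vector search service, and the documents and question here are made up.

```python
import math
from collections import Counter


def embed(text):
    """Toy 'embedding': a bag-of-words count vector (an assumption for illustration)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


# Hypothetical knowledge base and user question.
documents = [
    "refunds are processed within five business days",
    "the warranty covers hardware defects for two years",
    "support is available by chat on weekdays",
]
query = "how long do refunds take"

context = retrieve(query, documents)
prompt = f"Answer using only this context:\n{context[0]}\n\nQuestion: {query}"
print(prompt)
```

The resulting prompt is what would be sent to the LLM, which grounds its answer in the retrieved text rather than in its training data alone.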

5. What is “Model Drift” and do these platforms fix it?

Model drift occurs when a model’s accuracy degrades because production data no longer resembles the data it was trained on. Platforms like SageMaker and DataRobot have built-in monitors that alert you when this happens so you can retrain the model.
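One common signal behind drift alerts is a shift in the distribution of an input feature. The sketch below computes the Population Stability Index (PSI) between a training-time baseline and live traffic. It is a generic illustration, not the method any named platform uses; the bin proportions are invented, and the 0.2 alert threshold is a widely quoted rule of thumb rather than a fixed standard.

```python
import math


def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions.

    expected, actual: lists of bin proportions, each summing to 1.
    """
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # guard against log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


ALERT_THRESHOLD = 0.2  # rule-of-thumb value; tune for your own data

# Hypothetical proportions of one feature across four bins.
baseline = [0.25, 0.25, 0.25, 0.25]      # training data
live_stable = [0.24, 0.26, 0.25, 0.25]   # production, little change
live_shifted = [0.10, 0.20, 0.30, 0.40]  # production, clear shift

for name, live in [("stable", live_stable), ("shifted", live_shifted)]:
    score = psi(baseline, live)
    status = "ALERT: consider retraining" if score > ALERT_THRESHOLD else "ok"
    print(f"{name}: PSI={score:.3f} -> {status}")
```

Only the shifted sample crosses the threshold here, which is the kind of event a monitoring service would turn into a retraining alert.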

6. Can I run these platforms on my own servers?

Yes, tools like Kubeflow, H2O.ai, and Domino Data Lab are designed to be installed on your own “on-premises” hardware or private clouds if you cannot use the public cloud.

7. What is MLOps and why is it included in these platforms?

MLOps is the practice of automating the deployment and maintenance of models. Platforms include it so that data scientists don’t have to spend all their time doing “IT work” manually.

8. How do I protect my data from being used to train the platform’s own AI?

Enterprise cloud providers such as AWS, Microsoft, and Google offer contractual and technical “opt-out” guarantees, so your proprietary data stays within your private VPC and isn’t shared or used to train the provider’s own models.

9. Which platform is best for the “Responsible AI” requirements?

Azure Machine Learning and DataRobot have the most advanced built-in dashboards for tracking model fairness, bias detection, and explainability for regulators.

10. How long does it take to set up an enterprise ML platform?

Cloud platforms like SageMaker can be “turned on” in minutes, but configuring the enterprise security, data connections, and team workflows typically takes 4 to 8 weeks for a medium-sized company.

Conclusion

The machine learning platform landscape has reached a point of maturity where the “standard” predictive AI and the “new” generative AI are merging into unified workstreams. While Amazon SageMaker and Google Vertex AI continue to dominate the high-scale infrastructure market, specialized players like Weights & Biases and Databricks are winning the hearts of developers through superior user experiences and open-source alignment.

Choosing the right platform is no longer just a technical checkbox; it is a cultural decision about how your organization wants to build. For companies beginning their journey, we suggest starting with a small pilot on a cloud-native tool like Azure ML or Vertex AI before committing to the deeper, more complex ecosystems of the specialized enterprise providers.
