Introduction

A Feature Store is a centralized repository designed to store, document, and serve machine learning (ML) features. In ML, a “feature” is an individual measurable property or characteristic of a phenomenon being observed. Feature store platforms act as a bridge between data engineering and data science, ensuring that the same feature logic used to train a model is exactly what is used when the model makes real-time predictions. This eliminates the “training-serving skew,” which is a common cause of model failure in production.
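To make the training-serving skew point concrete, here is a minimal sketch, assuming a hypothetical `order_features` function and made-up feature names. It is not any vendor's API. It only illustrates the idea of keeping one definition of the feature logic and calling it from both the offline (training) path and the online (serving) path.

```python
def order_features(order):
    """Single definition of the feature logic (hypothetical feature names)."""
    prices = order["item_prices"]
    total = sum(prices)
    return {
        "item_count": len(prices),
        "order_total": total,
        "avg_item_price": total / len(prices) if prices else 0.0,
    }

# Offline path: build training rows from historical orders.
historical_orders = [
    {"item_prices": [10.0, 30.0]},
    {"item_prices": [5.0, 5.0, 20.0]},
]
training_rows = [order_features(o) for o in historical_orders]

# Online path: the serving code calls the same function on a live request.
live_request = {"item_prices": [10.0, 30.0]}
serving_row = order_features(live_request)

# Identical input yields identical features in both paths.
assert serving_row == training_rows[0]
```

Skew typically creeps in when the two paths are implemented separately (for example, SQL for training and application code for serving) and drift apart over time.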

As generative AI and real-time recommendation engines dominate the market, feature stores have evolved from simple databases into complex orchestration layers. They handle high-velocity data from streaming sources like Kafka, perform point-in-time joins to prevent data leakage, and provide a searchable catalog for teams to discover and reuse existing work. By centralizing feature management, organizations can significantly reduce the time it takes to move an AI idea from research into a live environment.
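A point-in-time join can be sketched in a few lines. The example below is a simplified illustration using only the Python standard library. The `feature_log` data and the `value_as_of` helper are assumptions made for the example, and real platforms do this at scale across many entities. For each training label, it picks the latest feature value that was already available at the label's timestamp, so nothing from the future leaks in.

```python
from bisect import bisect_right

# Feature values for one entity, sorted by the time each value became available.
feature_log = [
    (100, 0.10),  # (event_time, value)
    (200, 0.25),
    (300, 0.40),
]

def value_as_of(log, label_time):
    """Return the latest value with event_time <= label_time, else None."""
    times = [t for t, _ in log]
    idx = bisect_right(times, label_time)
    return log[idx - 1][1] if idx else None

# Timestamps of the training labels we want to attach features to.
label_times = [50, 150, 300, 999]
training_values = [value_as_of(feature_log, t) for t in label_times]
```

A label at time 150 receives the value written at time 100, never the one written at time 200. A label that predates every feature value gets `None` rather than a value borrowed from the future.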

Real-world use cases:

- Real-time Fraud Detection: Retrieving a user’s transaction frequency and average spend over the last 10 minutes to score a current purchase.

- Personalized E-commerce: Serving live product recommendations based on a customer’s clickstream data from the current session.

- Credit Scoring: Accessing historical financial behavior and current debt levels instantly during a loan application process.

- Predictive Maintenance: Monitoring sensor data from factory machinery to predict part failure before it occurs.

- Dynamic Insurance Pricing: Adjusting premiums in real-time based on live telematics and historical driving records.
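The fraud detection case above depends on rolling-window features. As a rough sketch (the `SpendWindow` class and the sample timestamps are hypothetical, and timestamps are assumed to arrive in order), a transaction count and average spend over the last 10 minutes could be maintained like this:

```python
from collections import deque

class SpendWindow:
    """Rolling count and average of transaction amounts over a time window."""

    def __init__(self, window_seconds=600):
        self.window = window_seconds
        self.events = deque()  # (timestamp, amount), oldest first
        self.total = 0.0

    def add(self, ts, amount):
        self.events.append((ts, amount))
        self.total += amount

    def features(self, now):
        # Evict transactions that have aged out of the window.
        while self.events and self.events[0][0] <= now - self.window:
            _, amount = self.events.popleft()
            self.total -= amount
        count = len(self.events)
        return {"txn_count": count, "avg_spend": self.total / count if count else 0.0}

window = SpendWindow(window_seconds=600)
for ts, amount in [(0, 20.0), (120, 40.0), (500, 60.0), (700, 80.0)]:
    window.add(ts, amount)

# Features used to score the purchase arriving at t=700.
current = window.features(now=700)
```

Keeping a running total means each lookup only pays for the events it evicts, which is one reason this pattern suits low-latency scoring.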

Evaluation criteria for buyers:

- Online vs. Offline Serving: The ability to serve features at sub-second latency for live models while storing petabytes for historical training.

- Point-in-Time Correctness: Automated handling of timestamps to ensure models aren’t “seeing” the future during training (preventing leakage).

- Transformation Support: Whether the platform handles the compute (Spark/SQL/Python) or just the storage of the resulting data.

- Feature Discovery: The quality of the UI/Catalog for searching and understanding existing features across the company.

- Integration Ecosystem: Compatibility with existing data warehouses (Snowflake, BigQuery) and ML platforms (SageMaker, Vertex AI).

- Data Lineage: Tracking the origin of a feature back to its raw data source for debugging and compliance.

- Monitoring & Alerts: Detecting data drift or “staleness” in features before they impact model accuracy.

- Security & RBAC: Granular control over who can see or modify specific feature groups, especially for sensitive data.

- Scalability: Performance levels when handling billions of feature lookups per day.

- Open-Source vs. Managed: Choosing between the flexibility of open-source tools and the low overhead of fully managed SaaS platforms.
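For the monitoring criterion in the list above, the two checks can be sketched as follows. This is a simplified illustration, not a production drift detector. The function names, the 3-sigma threshold, and the sample values are all assumptions, and real platforms typically use richer statistics.

```python
from statistics import mean, stdev

def is_stale(last_update_ts, now_ts, max_age_seconds):
    """A feature is stale when its newest value is older than the allowed age."""
    return now_ts - last_update_ts > max_age_seconds

def mean_shift_drift(baseline, current, threshold=3.0):
    """Flag drift when the current mean sits more than `threshold`
    baseline standard deviations away from the baseline mean."""
    sigma = stdev(baseline)
    if sigma == 0:
        return mean(current) != mean(baseline)
    return abs(mean(current) - mean(baseline)) / sigma > threshold

baseline = [10.0, 12.0, 11.0, 9.0, 10.5]   # values seen at training time
steady = [10.2, 11.1, 9.8]                 # recent values, similar distribution
shifted = [25.0, 27.0, 26.0]               # recent values, clearly different

alerts = {
    "steady_drift": mean_shift_drift(baseline, steady),
    "shifted_drift": mean_shift_drift(baseline, shifted),
    "pipeline_stale": is_stale(last_update_ts=1000, now_ts=1500, max_age_seconds=300),
}
```

When comparing vendors, it is worth asking which of these checks run automatically and which you would have to build yourself.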

Best for: Data scientists and MLOps engineers building production-grade ML models that require consistent, high-velocity data inputs.

Not ideal for: Small teams with static datasets, one-off research projects, or organizations that do not have models running in a “live” production environment.

Key Trends in Feature Store Platforms

- GenAI & Vector Integration: Feature stores are increasingly supporting “embedding” storage, allowing them to serve vectors for Retrieval-Augmented Generation (RAG) and semantic search.

- Serverless Feature Engineering: A shift toward “declarative” features where users define logic in Python/SQL, and the platform automatically handles the underlying Spark/Flink infrastructure.

- Edge Feature Serving: Deploying lightweight feature caches closer to the user (at the edge) to reduce latency for mobile and IoT applications.

- Automated Feature Discovery: AI-driven tools that scan raw data warehouses and automatically suggest potential new features based on correlation analysis.

- Zero-Copy Feature Sharing: Technologies that allow features to be “shared” between different cloud regions or platforms without physically moving the massive underlying data.

- Real-time Aggregation Solvers: Specialized engines that can calculate complex “sliding window” aggregates (e.g., “count of logins in the last 30 seconds”) on the fly.

- Governance as Code: Integrating feature definitions directly into CI/CD pipelines so that every change is versioned, tested, and audited like software.

- Cross-Cloud Synchronization: The ability to keep online feature stores in sync across AWS, Azure, and GCP for global application availability.
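The embedding-serving trend in the list above comes down to a nearest-neighbor lookup over stored vectors. The sketch below assumes a tiny in-memory dictionary of made-up three-dimensional embeddings and a brute-force cosine similarity search. A real system would use a vector index and model-generated embeddings with hundreds of dimensions.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Hypothetical stored embeddings keyed by document id.
embeddings = {
    "refund_policy": [0.9, 0.1, 0.0],
    "shipping_times": [0.1, 0.9, 0.1],
    "gift_cards": [0.0, 0.2, 0.9],
}

def top_k(query, k=2):
    """Return the ids of the k stored vectors most similar to the query."""
    ranked = sorted(
        embeddings,
        key=lambda doc: cosine(query, embeddings[doc]),
        reverse=True,
    )
    return ranked[:k]

# Ids of the documents to pass to the language model as context.
context_ids = top_k([0.8, 0.3, 0.0])
```

In a RAG application, the returned ids would be used to fetch the matching text, which is then placed in the prompt.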

How We Selected These Tools (Methodology)

Our selection of the top 10 feature store platforms is based on a rigorous evaluation of their operational maturity and technical innovation. We prioritized:

- Production Reliability: Tools proven to handle mission-critical, low-latency workloads at scale.

- End-to-End Functionality: Platforms that cover the full lifecycle from transformation and storage to serving and monitoring.

- Market Share & Community: A mix of industry-standard managed services and popular open-source projects.

- Architectural Flexibility: Software that can integrate with modern data stacks like Snowflake, Databricks, and various streaming engines.

- Governance & Compliance: Evaluation of built-in security, lineage, and auditing capabilities necessary for enterprise use.

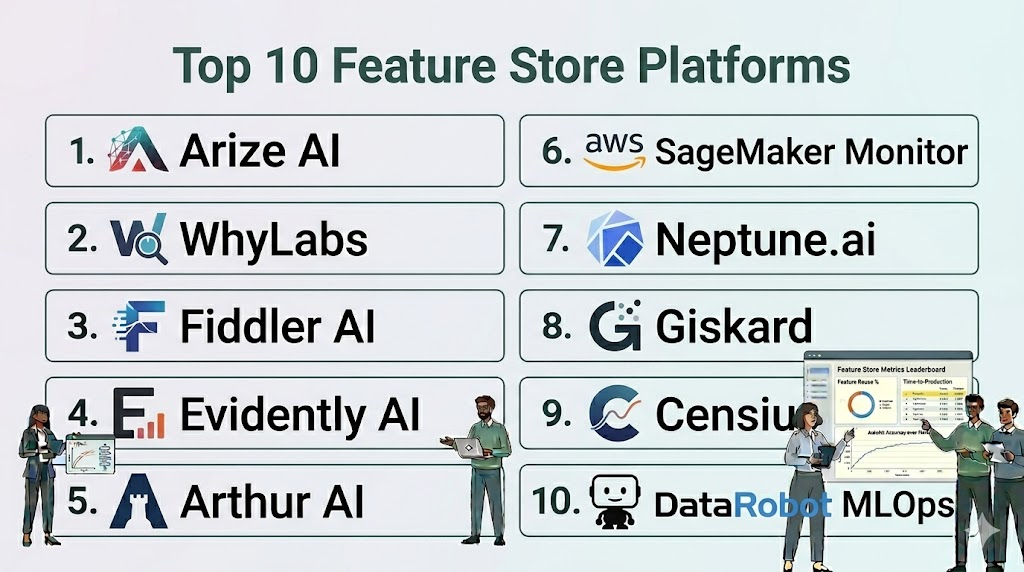

Top 10 Feature Store Platforms

#1 — Tecton

Short description: A fully managed, enterprise-grade feature store that automates the complete feature lifecycle. It is widely considered the pioneer in “feature platforms” rather than just stores.

Key Features

- Declarative Framework: Define features as code in Python, and Tecton handles the production-ready pipelines.

- Point-in-Time Joins: Automatically prevents data leakage by ensuring training data matches historical reality perfectly.

- On-Demand Transformations: Allows for compute-heavy transformations to happen at the exact moment of an inference request.

- Streaming & Batch Support: Seamlessly integrates with Kafka, Kinesis, Snowflake, and Databricks.

- Enterprise Security: Includes robust RBAC, SSO, and encryption protocols.

- Advanced Monitoring: Built-in tools for tracking feature staleness and data drift.

Pros

- Extremely high reliability for mission-critical, real-time applications.

- Eliminates the need for data scientists to manage complex data infrastructure.

Cons

- Premium pricing that may be prohibitive for startups or smaller teams.

- Tightly coupled with specific cloud data warehouses for optimal performance.

Platforms / Deployment

- AWS / Google Cloud

- Cloud (Managed)

Security & Compliance

- SOC 2 Type II, HIPAA, GDPR, ISO 27001.

- SSO/SAML, end-to-end encryption.

Integrations & Ecosystem

Tecton acts as the “intelligence layer” on top of modern data warehouses.

- Snowflake / Databricks

- AWS S3 / Redshift

- Spark / Kafka

- SageMaker / Vertex AI

Support & Community

Top-tier enterprise support with dedicated customer success managers and an active professional community via Slack.

#2 — Hopsworks

Short description: A full-stack MLOps platform that includes a powerful, open-source feature store known for its unique “Dual-Database” architecture.

Key Features

- HopsFS Integration: Uses a specialized file system to manage massive offline datasets efficiently.

- Online Feature Store: Uses RonDB (a distribution of MySQL NDB Cluster) for sub-millisecond lookups.

- Python/Spark Support: Allows users to ingest data from Pandas, Spark, or on-demand via JDBC.

- Time Travel Queries: Backed by Apache Hudi, allowing users to query historical data as it existed at any point.

- Feature Validation: Built-in Great Expectations integration for validating data quality during ingestion.

- Collaborative Registry: A central UI for searching, versioning, and discussing features.

Pros

- High flexibility with support for both cloud and on-premises deployment.

- Excellent performance for streaming data applications.

Cons

- The broader platform can feel complex if you only need the feature store component.

- Requires management of underlying infrastructure unless using the cloud version.

Platforms / Deployment

- AWS / Azure / Google Cloud / On-prem

- Cloud / Hybrid / Self-hosted

Security & Compliance

- RBAC, multi-tenancy, encryption.

- SOC 2 compliance (Managed version).

Integrations & Ecosystem

Very strong in the open-source and big data ecosystem.

- Apache Spark / Flink

- Apache Kafka

- SageMaker / Azure ML

- Databricks

Support & Community

Active open-source community and professional support from the company behind Hopsworks (formerly Logical Clocks).

#3 — Feast (Feature Store)

Short description: The leading open-source feature store, designed to be lightweight and vendor-agnostic. It is perfect for teams that want to avoid vendor lock-in.

Key Features

- Lightweight Architecture: Minimal infrastructure overhead; can be deployed via a Python library.

- Push/Pull Model: Ingest data from streaming sources (Push) or batch sources (Pull).

- Storage Independence: Works with a wide range of providers like Redis, Postgres, BigQuery, and Snowflake.

- CLI & Python SDK: Highly developer-friendly interface for managing feature definitions.

- On-Demand Transformations: Supports on-demand feature views that apply transformations at request time for real-time data processing.

- Local Testing: Allows developers to run a full feature store on their laptop for rapid iteration.

Pros

- Completely free and open-source under the Apache 2.0 license.

- Extremely portable; can run anywhere Kubernetes or Python is supported.

Cons

- Lacks the “out-of-the-box” automation for compute/transformations found in Tecton.

- Requires manual effort to set up and maintain the underlying databases and security.

Platforms / Deployment

- Any Cloud / Kubernetes

- Self-hosted

Security & Compliance

- Basic RBAC; security largely depends on the underlying storage providers used.

- N/A (Community driven).

Integrations & Ecosystem

Integrates with almost any tool in the modern data stack.

- Snowflake / BigQuery / Redshift

- Redis / DynamoDB / PostgreSQL

- Spark / Dask

- Kubeflow

Support & Community

Very large and active community on GitHub and Slack. Community-driven documentation and tutorials.

#4 — Databricks Feature Store

Short description: A feature store built directly into the Databricks Lakehouse, leveraging Delta Lake for unified batch and streaming data.

Key Features

- Unity Catalog Integration: Provides a single, centralized governance layer for all data and AI assets.

- Automatic Lineage: Automatically tracks features from source data to the final ML model.

- Consistency: Uses Delta Lake to ensure the same data is used for training and serving without drift.

- Online Store Support: Low-latency serving via integrations with DynamoDB or Azure Cosmos DB.

- Native MLflow Support: Features are automatically packaged with the model for seamless deployment.

- Searchable Registry: A built-in UI for discovering features across the organization.

Pros

- Zero additional infrastructure to manage for existing Databricks users.

- Unrivaled governance and lineage capabilities due to Unity Catalog.

Cons

- Tightly locked into the Databricks ecosystem.

- Online serving requires external database setup (though it’s automated).

Platforms / Deployment

- AWS / Azure / Google Cloud

- Cloud (Managed)

Security & Compliance

- Enterprise-grade ACLs, SSO, MFA, encryption.

- SOC 2, ISO 27001, HIPAA, GDPR.

Integrations & Ecosystem

Deeply integrated with the Databricks ecosystem and cloud providers.

- MLflow

- Delta Lake

- Apache Spark

- Azure / AWS / GCP data services

Support & Community

Standard Databricks enterprise support and a large community of Spark developers.

#5 — AWS SageMaker Feature Store

Short description: A fully managed, purpose-built repository within Amazon SageMaker to store, share, and manage features for ML models.

Key Features

- Unified Store: Provides an online store for real-time inference and an offline store for training.

- SageMaker Studio Sync: Features are easily discoverable and manageable within the SageMaker IDE.

- SQL Access: Query features using familiar SQL via Amazon Athena.

- Time Travel: Supports point-in-time queries to retrieve historical feature states.

- Glue Data Catalog: Automatically indexes feature groups for easy discovery.

- IAM Integration: Leverages standard AWS security and permissions models.

Pros

- Native integration with the entire SageMaker and AWS ecosystem.

- Pay-as-you-go pricing with no upfront commitments.

Cons

- AWS-only; not suitable for multi-cloud or hybrid-cloud strategies.

- Can be complex to configure outside of the SageMaker environment.

Platforms / Deployment

- AWS

- Cloud (Managed)

Security & Compliance

- AWS IAM, KMS encryption, VPC endpoints.

- SOC 1/2/3, ISO 27001, HIPAA, FedRAMP.

Integrations & Ecosystem

Focused strictly on the AWS universe.

- Amazon S3 / Redshift / Athena

- SageMaker Pipelines / Training / Serving

- AWS Glue / Lake Formation

Support & Community

Standard AWS support plans and a massive library of technical documentation and AWS-certified training.

#6 — Google Vertex AI Feature Store

Short description: A managed feature store on Google Cloud Platform that emphasizes high-scale serving and deep integration with BigQuery.

Key Features

- BigQuery Integration: Allows users to serve features directly from BigQuery tables for lower latency.

- Streaming Ingestion: Supports real-time updates via Pub/Sub or Dataflow.

- Managed Serving: Handles the scaling of compute resources for online feature lookups automatically.

- Search and Reuse: A central registry within the Google Cloud Console for feature discovery.

- Batch Serving: Efficiently exports massive historical datasets for model training.

- Monitoring: Integrated monitoring for feature drift and operational health.

Pros

- Best choice for organizations already utilizing BigQuery and Vertex AI.

- Scales to handle massive amounts of data with very little manual effort.

Cons

- Locked into the Google Cloud ecosystem.

- Pricing can be complex due to the combination of storage and serving costs.

Platforms / Deployment

- Google Cloud

- Cloud (Managed)

Security & Compliance

- VPC Service Controls, Cloud IAM, encryption at rest.

- SOC 2, ISO 27001, HIPAA, GDPR.

Integrations & Ecosystem

Optimized for the Google Data and AI stack.

- BigQuery / Bigtable

- Google Cloud Storage / Pub/Sub

- Vertex AI Studio / Model Registry

Support & Community

Google Cloud professional support and a growing community around Vertex AI.

#7 — Abacus.ai

Short description: An AI-powered feature store that automates feature engineering and monitoring using advanced machine learning.

Key Features

- AI-Assisted Engineering: Automatically suggests transformations and features based on raw data patterns.

- Hybrid Storage: Unified handling of real-time streaming data and historical batch data.

- SQL & Python Support: Define complex features using ANSI SQL or custom Python code.

- Feature Versioning: Immutable versioning for all feature groups and datasets.

- Streaming Sync: Deep integration with S3, Snowflake, and Salesforce for live updates.

- Automated Monitoring: Proactively detects data drift and fetching errors.

Pros

- Significantly reduces the manual labor of feature engineering through automation.

- Easy-to-use SaaS interface that is accessible to non-technical users.

Cons

- Proprietary platform; moving away can be difficult once deeply integrated.

- May offer less granular control than code-heavy platforms like Feast.

Platforms / Deployment

- AWS / GCP / Azure (via SaaS)

- Cloud (Managed)

Security & Compliance

- SSO, Encryption, RBAC.

- SOC 2 Type II compliant.

Integrations & Ecosystem

Integrates with popular SaaS and cloud data sources.

- Snowflake / Redshift / BigQuery

- AWS S3 / GCS

- Salesforce / Marketo

Support & Community

Strong customer success focus and professional support for enterprise users.

#8 — Qwak

Short description: A unified MLOps platform that offers a high-performance, real-time feature store focused on sub-second aggregations.

Key Features

- Real-time Aggregations: Specialized engine for calculating “on-the-fly” metrics like click-counts.

- Dual Storage: Columnar retrieval for offline training and row-oriented lookups for online serving.

- Declarative Definitions: Define feature logic in a central configuration file.

- Integrated MLOps: Seamlessly connects the feature store to Qwak’s model serving and monitoring tools.

- Low Latency: Optimized for high-throughput, millisecond-level feature serving.

- Automated Ingestion: Simplifies the connection between streaming brokers and the feature store.

Pros

- Excellent choice for teams looking for a single, unified platform for the entire ML lifecycle.

- Particularly strong in real-time, event-driven use cases.

Cons

- Newer entry in the market compared to giants like Tecton or SageMaker.

- Less flexibility if you only want to use the feature store part without the rest of Qwak.

Platforms / Deployment

- AWS / GCP

- Cloud (Managed)

Security & Compliance

- RBAC, SSO, encryption.

- SOC 2 compliant.

Integrations & Ecosystem

Focused on modern cloud-native ML stacks.

- AWS / GCP

- Kafka / Kinesis

- Snowflake

Support & Community

High-touch technical support and an active developer community on Slack.

#9 — Featureform

Short description: An “orchestration-centric” feature store that acts as a virtual layer over your existing data infrastructure.

Key Features

- Infrastructure Agnostic: Does not force you to move data; it orchestrates features in your existing DBs.

- Virtual Registry: A central place to define features that are then executed on Redis, Snowflake, or Postgres.

- Lineage & Versioning: Tracks exactly how a feature was created, even if the compute happens elsewhere.

- Standardized Definitions: Allows different teams to share a single source of truth for feature logic.

- Zero Migration: Uses your existing infrastructure, making it very fast to deploy.

- Support for Any Language: Primary focus is Python, but the orchestration layer is extensible.

Pros

- The most non-disruptive way to add feature store capabilities to a mature data stack.

- Avoids the cost and complexity of setting up a new, specialized feature database.

Cons

- Performance depends entirely on the underlying infrastructure you choose.

- Lacks the “all-in-one” managed experience of platforms like Tecton.

Platforms / Deployment

- Any (AWS, Azure, GCP, On-prem)

- Self-hosted / Cloud

Security & Compliance

- Dependent on your underlying infrastructure.

- N/A (Open-core model).

Integrations & Ecosystem

Works with almost any database or data warehouse.

- Snowflake / BigQuery / Redshift

- PostgreSQL / Redis / Cassandra

- Spark / Ray

Support & Community

Active open-source community and professional support via the Featureform enterprise offering.

#10 — Rasgo

Short description: A feature store optimized for teams that use SQL and dbt (data build tool) for their data engineering.

Key Features

- dbt Integration: Seamlessly syncs with dbt projects for feature engineering and documentation.

- Snowflake Optimized: Uses a “push-down” architecture to keep all compute within Snowflake.

- Low-Code UI: Allows for feature discovery and creation through an intuitive visual interface.

- Automated Backfills: Simplifies the process of creating historical features for training.

- Data Quality Profiling: Built-in checks to ensure features are accurate before use.

- Serving API: Provides an easy way to serve features to online models via a REST API.

Pros

- Best-in-class for teams that are “SQL-heavy” and already use dbt.

- Requires very little new technical knowledge for standard data engineers.

Cons

- Less focus on high-scale, real-time streaming compared to Tecton or Hopsworks.

- Primarily focused on the Snowflake ecosystem.

Platforms / Deployment

- AWS (primarily via Snowflake)

- Cloud (Managed)

Security & Compliance

- SOC 2 Type II compliant; GDPR and HIPAA ready.

- SSO support.

Integrations & Ecosystem

Deeply integrated with the modern data stack.

- Snowflake

- dbt

- Tableau / Power BI

Support & Community

High-touch customer success and a specialized community for analytics engineers.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| Tecton | Enterprise Real-time ML | AWS, Google Cloud | Managed | Point-in-time Correctness | 4.8/5 |
| Hopsworks | Large-scale Streaming | Multi-cloud, On-prem | Hybrid | Dual-Database (RonDB) | 4.7/5 |
| Feast | Open-source/Self-host | Any / Kubernetes | Self-hosted | Vendor Agnostic | 4.6/5 |
| Databricks | Existing Databricks Users | AWS, Azure, GCP | Managed | Unity Catalog Governance | 4.8/5 |
| AWS SageMaker | AWS-only Teams | AWS | Managed | IAM/Studio Integration | 4.4/5 |
| Vertex AI | GCP-only Teams | Google Cloud | Managed | BigQuery Integration | 4.5/5 |
| Abacus.ai | AI-Automated Teams | Multi-cloud | Managed | AI-Assisted Feature Eng | 4.7/5 |
| Qwak | Unified MLOps | AWS, GCP | Managed | Real-time Aggregations | 4.6/5 |
| Featureform | Existing Infra Users | Any Platform | Hybrid | Virtual Orchestration | 4.5/5 |
| Rasgo | dbt & SQL Users | Snowflake | Managed | dbt Workflow Sync | 4.6/5 |

Evaluation & Scoring of Feature Store Platforms

The scores below reflect a comparative analysis of how these platforms perform in a demanding production environment.

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Tecton | 10 | 8 | 9 | 10 | 10 | 10 | 6 | 8.95 |
| Hopsworks | 9 | 6 | 9 | 8 | 10 | 8 | 8 | 8.30 |
| Feast | 7 | 6 | 10 | 6 | 8 | 9 | 10 | 7.95 |
| Databricks | 9 | 9 | 8 | 10 | 9 | 9 | 7 | 8.65 |
| AWS SageMaker | 8 | 8 | 7 | 9 | 8 | 9 | 8 | 8.05 |
| Vertex AI | 8 | 8 | 8 | 9 | 8 | 8 | 8 | 8.10 |
| Abacus.ai | 9 | 9 | 8 | 9 | 8 | 8 | 7 | 8.35 |
| Qwak | 9 | 8 | 8 | 8 | 9 | 8 | 7 | 8.20 |
| Featureform | 8 | 7 | 10 | 7 | 8 | 7 | 9 | 8.10 |
| Rasgo | 7 | 9 | 8 | 8 | 7 | 8 | 8 | 7.80 |

How to interpret these scores:

A weighted total over 8.5 represents a “market leader” capable of handling the most complex enterprise scenarios. “Ease” scores inversely correlate with “Core” depth in some cases (e.g., Tecton is deep but has a high learning curve). “Value” is highest for open-source tools like Feast, while “Integrations” favor vendor-agnostic platforms.
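For readers who want to re-weight the criteria for their own priorities, the weighted total is simply each score multiplied by its column weight and then summed. The sketch below uses the weights from the table header and the Feast and Qwak rows as inputs. The dictionary keys and the `weighted_total` helper are naming choices made for this example.

```python
# Weights from the scoring table header, as integer percentages.
WEIGHTS = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}

def weighted_total(scores):
    """Weighted average on the same 0-10 scale as the inputs."""
    assert sum(WEIGHTS.values()) == 100
    return sum(scores[name] * pct for name, pct in WEIGHTS.items()) / 100

feast = {"core": 7, "ease": 6, "integrations": 10, "security": 6,
         "performance": 8, "support": 9, "value": 10}
qwak = {"core": 9, "ease": 8, "integrations": 8, "security": 8,
        "performance": 9, "support": 8, "value": 7}

print(weighted_total(feast))  # 7.95
print(weighted_total(qwak))   # 8.2
```

Changing the percentages in `WEIGHTS` (keeping the sum at 100) shows how the ranking shifts if, for example, value matters more to your team than core depth.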

Which Feature Store Platform Is Right for You?

Solo / Freelancer

For a single developer, Feast is the best choice. It is free, allows you to learn the core concepts of feature stores without a massive bill, and can run on your local machine.

SMB

Small businesses with limited engineering resources should look at Abacus.ai or Rasgo. These platforms prioritize ease of use and automation, allowing a small team to build production models quickly without hiring a dedicated MLOps team.

Mid-Market

Companies that have specialized data needs but aren’t yet at “Big Tech” scale should evaluate Qwak or Featureform. They offer a great balance of performance and flexibility while integrating into existing data stacks.

Enterprise

For large organizations with strict security, high-velocity data, and multiple data science teams, Tecton, Databricks, or Hopsworks are the primary options. They provide the level of governance, reliability, and support required for mission-critical AI.

Budget vs Premium

- Budget: Feast (Free), Featureform (Open-core).

- Premium: Tecton, Databricks, Abacus.ai.

Feature Depth vs Ease of Use

- Greatest Depth: Tecton, Hopsworks, Databricks.

- Easy to Use: Abacus.ai, Rasgo.

Integrations & Scalability

- Top Integrations: Feast, Featureform.

- Top Scalability: Tecton, Hopsworks.

Security & Compliance Needs

Organizations in banking, healthcare, or government should prioritize Tecton, Databricks, or AWS SageMaker, as they come with the most robust, pre-audited compliance certifications.

Frequently Asked Questions (FAQs)

- What is the difference between a database and a feature store?

A database simply stores data, while a feature store manages the logic, versioning, and dual-serving (online/offline) specifically for ML features, ensuring consistency between training and production.

- Why do I need a feature store for real-time AI?

Real-time models need access to data that changes rapidly (like “number of logins in the last hour”). A feature store automates the calculation and low-latency retrieval of these dynamic features.

- What is “Training-Serving Skew”?

This happens when the data used to train a model is different from the data it sees in production. A feature store prevents this by using a single definition for both phases.

- Do I need to change my existing data warehouse to use a feature store?

No. Most modern feature stores like Tecton or Feast sit “on top” of warehouses like Snowflake or BigQuery, acting as an orchestration and serving layer rather than a replacement.

- Is it difficult to set up a feature store?

Open-source tools like Feast require manual infrastructure management, but managed SaaS platforms like Tecton or Abacus.ai can be set up in a few hours by connecting your data sources.

- What role does a feature store play in MLOps?

It is the central source of truth for data in the MLOps lifecycle, providing the governance, monitoring, and sharing capabilities needed to scale from one model to hundreds.

- How does a feature store prevent data leakage?

By using “point-in-time” correctness, the feature store ensures that when you generate historical training data, the model only “sees” data that was actually available at that specific time in the past.

- Can I use a feature store for Generative AI?

Yes, modern feature stores are increasingly used to store and serve the “context” or “embeddings” needed for RAG (Retrieval-Augmented Generation) applications.

- Does a feature store monitor model performance?

No, but it monitors the “features” themselves. It alerts you if the data entering the model changes (drift) or if the pipelines stop updating (staleness), which are leading indicators of model failure.

- Is a feature store worth the cost for small projects?

For simple projects with static data, it might be overkill. However, if you plan to scale your AI efforts or run real-time models, the initial investment saves significant engineering time later.

Conclusion

The rise of the feature store marks a transition in the AI industry from “experimental” to “operational.” While Tecton and Databricks provide the most complete managed experiences for large-scale enterprise use, the open-source Feast remains the gold standard for flexibility and learning.

Selecting the right platform is ultimately about your “time-to-value.” If you have a complex data stack and a large team, a virtual orchestration layer like Featureform or a full platform like Hopsworks may be best. If you want to move as fast as possible with minimal engineering overhead, an automated platform like Abacus.ai is the better fit.
