Introduction

MLOps (Machine Learning Operations) platforms are integrated environments designed to manage the entire lifecycle of machine learning models, from data preparation and training to deployment and monitoring. Much like DevOps revolutionized traditional software development, MLOps brings discipline, automation, and reliability to AI. These platforms bridge the gap between data science and IT operations, ensuring that models are not just “lab experiments” but scalable, production-ready assets that provide consistent business value.

Today, the complexity of managing large-scale AI, including LLMs and generative models, requires specialized infrastructure. MLOps platforms provide the necessary “scaffolding” to handle versioning for data and models, automate retraining loops, and monitor for “model drift,” where a model’s accuracy degrades over time due to changing real-world data. By providing a unified workspace, these platforms allow teams to collaborate effectively and maintain compliance in increasingly regulated AI environments.

Real-world use cases:

- Automated Retraining: Updating recommendation engines daily based on the latest user clickstream data without manual intervention.

- Predictive Maintenance: Managing thousands of models across different industrial sensors to predict equipment failure.

- Regulatory Compliance: Maintaining a full audit trail of which data was used to train a specific credit-scoring model.

- Generative AI Orchestration: Managing the fine-tuning and deployment of large language models for specialized customer support bots.

- Real-time Fraud Detection: Deploying and monitoring high-frequency models that score financial transactions in milliseconds.

Evaluation criteria for buyers:

- Experiment Tracking: Ability to log parameters, code versions, and results for every training run.

- Model Registry: A centralized hub for managing model versions, stages (dev, staging, prod), and metadata.

- Pipeline Automation: Support for building directed acyclic graphs (DAGs) to automate data and training steps.

- Deployment Flexibility: Support for high-speed APIs, batch scoring, and edge device deployment.

- Monitoring & Observability: Tools to track model accuracy, latency, and data drift in production.

- Feature Store: A centralized repository for sharing and managing “features” (input data) across different models.

- Scalability: How well the platform handles massive datasets and distributed training across GPUs.

- Collaboration Tools: Shared notebooks, project dashboards, and RBAC (Role-Based Access Control).

- Integration Ecosystem: Connectivity with data platforms (Snowflake, Databricks) and CI/CD tools (GitHub Actions).

- Governance: Support for bias detection, explainability, and security audits.
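To make “Pipeline Automation” concrete, here is a minimal sketch of a DAG of pipeline steps executed in dependency order with Python’s standard-library `graphlib` (Python 3.9+). The step names, the shared `ctx` dictionary, and the toy “training” step are hypothetical placeholders, not any platform’s pipeline API.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline steps: each reads from and writes to a shared context dict.
def ingest(ctx):
    ctx["raw"] = [3.0, 1.0, 4.0, 1.0, 5.0]

def validate(ctx):
    # Fail fast on empty or negative data instead of training on it.
    if not ctx["raw"] or any(x < 0 for x in ctx["raw"]):
        raise ValueError("data validation failed")

def featurize(ctx):
    mean = sum(ctx["raw"]) / len(ctx["raw"])
    ctx["features"] = [x - mean for x in ctx["raw"]]

def train(ctx):
    # Stand-in for real training: the "model" is the largest centered magnitude.
    ctx["model"] = max(abs(f) for f in ctx["features"])

# Each step maps to the set of steps that must finish before it runs.
DAG = {
    "ingest": set(),
    "validate": {"ingest"},
    "featurize": {"validate"},
    "train": {"featurize"},
}
STEPS = {"ingest": ingest, "validate": validate, "featurize": featurize, "train": train}

def run_pipeline(dag, steps):
    """Run steps in a dependency-respecting order; graphlib raises CycleError on cycles."""
    ctx, order = {}, []
    for name in TopologicalSorter(dag).static_order():
        steps[name](ctx)
        order.append(name)
    return ctx, order
```

Calling `run_pipeline(DAG, STEPS)` runs the four steps in order; an orchestrator adds scheduling, retries, and caching on top of exactly this dependency ordering.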

Best for: Data scientists, ML engineers, and IT managers looking to transition from manual “notebook-based” workflows to automated, production-grade AI systems.

Not ideal for: Organizations with only one or two static models, hobbyists working on small datasets, or companies that do not yet have a stable data collection strategy.

Key Trends in MLOps Platforms

- LLMOps Integration: Modern platforms are evolving into “LLMOps” hubs, offering specialized tools for prompt engineering, vector database management, and RAG (Retrieval-Augmented Generation) monitoring.

- Governance-by-Design: As AI regulations such as the EU AI Act take effect, platforms are embedding automated bias detection and compliance reporting directly into the deployment workflow.

- Serverless ML Pipelines: A move toward “zero-infrastructure” MLOps where compute resources scale to zero when models aren’t being trained, significantly reducing costs.

- Edge MLOps: Enhanced capabilities for pushing models to local devices (IoT, mobile) while maintaining a central monitoring “heartbeat” in the cloud.

- Automated Model Compression: Built-in tools for quantization and distillation to make heavy AI models run faster on cheaper hardware.

- Collaborative Feature Engineering: Shared feature stores that allow different teams to reuse data transformations, preventing “siloed” work.

- AI-Assisted Debugging: Using LLMs to analyze model failures and suggest fixes for code or data quality issues within the MLOps interface.

- Real-time Model Observability: Moving beyond basic “uptime” metrics to deep behavioral analysis of model outputs in real-time.
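As an illustration of the compression trend (not any platform’s built-in tool), here is symmetric per-tensor int8 post-training quantization in plain Python. Each weight is approximated as `scale * q` with `q` an integer in [-127, 127]; stored as int8 instead of float32, that is a 4x smaller representation at the cost of a rounding error of at most half the scale. The weight values are made up.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: w ≈ scale * q, with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step, clamping to the int8 range used here.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map integers back to approximate float weights."""
    return [scale * v for v in q]

# Hypothetical layer weights.
weights = [0.5, -1.27, 0.0, 0.635]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Production tools add per-channel scales, calibration data, and hardware-specific kernels, but the core mapping is this one.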

How We Selected These Tools (Methodology)

To identify the premier MLOps platforms, we conducted a technical review of the current market leaders based on:

- End-to-End Capability: We prioritized platforms that cover the full lifecycle rather than a single niche (such as experiment tracking alone).

- Enterprise Adoption: We evaluated which tools are being used by Fortune 500 companies to maintain high-availability AI.

- Developer Experience: We analyzed the quality of SDKs, CLI tools, and documentation.

- Resilience and Uptime: Only tools with proven track records in production environments were considered.

- Infrastructure Agnostic vs. Native: We included a mix of cloud-native (AWS, Google Cloud, Microsoft Azure) and infrastructure-agnostic (Weights & Biases, Comet) tools.

- Security Posture: Evaluation of administrative controls, encryption, and data privacy features.

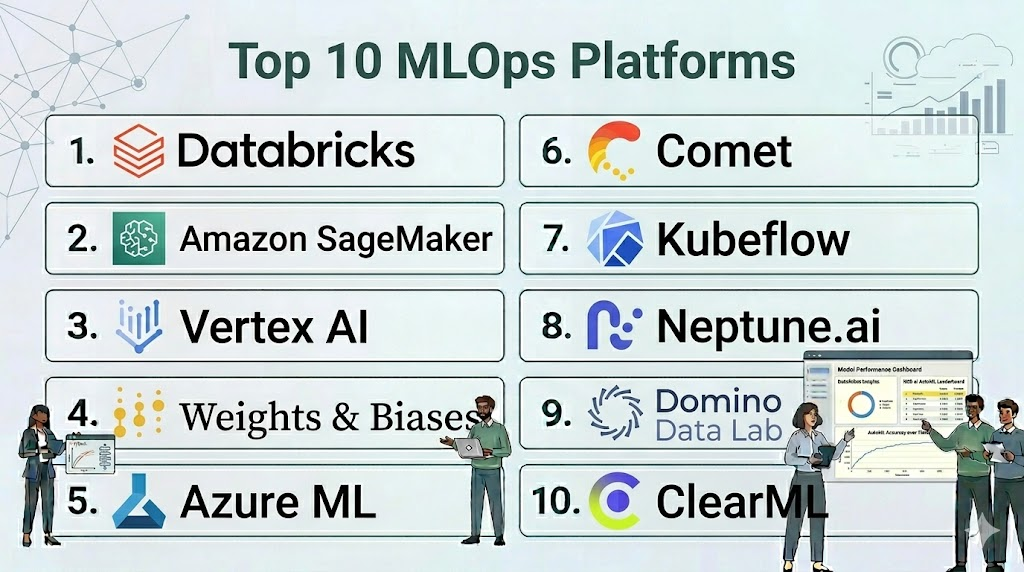

Top 10 MLOps Platforms

#1 — Databricks (Mosaic AI)

Short description: A unified Data and AI platform that offers comprehensive MLOps through its acquisition of MosaicML and its native integration with MLflow.

Key Features

- MLflow Integration: Native support for MLflow, the widely used open-source tool for experiment tracking and model management that Databricks created.

- Unity Catalog: Centralized governance for data, models, and features across the entire organization.

- Feature Store: Built-in repository for managing and serving features for both training and inference.

- Mosaic AI Model Serving: High-performance, serverless deployment for everything from small models to large LLMs.

- Delta Lake Foundations: ACID transactions and table versioning (“time travel”) keep training data consistent and let teams reproduce the exact data snapshot a model was trained on.

- AutoML: Automated toolset for quickly finding the best model for a given dataset.

Pros

- Seamlessly bridges the gap between data engineering and machine learning.

- Best-in-class support for large-scale distributed training.

Cons

- Can be expensive for smaller teams due to its consumption-based pricing model.

- Steep learning curve for users not familiar with the Spark ecosystem.

Platforms / Deployment

- AWS / Azure / Google Cloud

- Cloud

Security & Compliance

- SSO, MFA, RBAC, Encryption at rest/transit.

- SOC 2, ISO 27001, HIPAA, GDPR.

Integrations & Ecosystem

Deeply integrated with the modern data lakehouse stack.

- Apache Spark

- Delta Lake

- Hugging Face

- GitHub / GitLab

Support & Community

Extensive professional support and a massive community of MLflow and Spark users.

#2 — Amazon SageMaker

Short description: A fully managed service that provides every developer and data scientist with the ability to build, train, and deploy ML models quickly.

Key Features

- SageMaker Pipelines: A purpose-built CI/CD service for machine learning.

- Model Monitor: Automatically detects deviations in model quality and data drift in production.

- Clarify: Provides tools to detect bias in datasets and models and explain model predictions.

- JumpStart: A hub for pre-trained models and solutions for common ML use cases, including GenAI.

- Feature Store: A fully managed repository to store, update, and share features.

- Training Compiler: Optimizes ML models to train up to 50% faster by streamlining the compute graph.
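Bias-detection tools such as Clarify report group-fairness metrics. One simple example, written here in plain Python rather than with Clarify’s API, is the gap in positive-prediction rates between two groups (often called the demographic parity difference). The predictions and group labels below are made-up illustration data.

```python
def positive_rate(preds, groups, group):
    """Share of positive (1) predictions among members of `group`."""
    members = [p for p, g in zip(preds, groups) if g == group]
    if not members:
        raise ValueError(f"no samples for group {group!r}")
    return sum(members) / len(members)

def demographic_parity_difference(preds, groups, group_a, group_b):
    """Positive-rate gap between two groups; 0.0 means equal rates."""
    return positive_rate(preds, groups, group_a) - positive_rate(preds, groups, group_b)

# Hypothetical credit-approval predictions (1 = approved) and applicant groups.
preds = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap = demographic_parity_difference(preds, groups, "a", "b")
```

Here group “a” is approved 75% of the time and group “b” 25%, a gap of 0.5 that a governance workflow could flag for review before deployment.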

Pros

- Incredible breadth of features—virtually every MLOps need is covered in one console.

- Deeply integrated with the AWS ecosystem (S3, IAM, Lambda).

Cons

- The UI can be cluttered and overwhelming for new users.

- Proprietary nature can lead to AWS vendor lock-in.

Platforms / Deployment

- AWS

- Cloud / Edge (via SageMaker Edge Manager)

Security & Compliance

- VPC Endpoints, KMS Encryption, IAM roles.

- SOC 1/2/3, ISO 27001, FedRAMP, HIPAA.

Integrations & Ecosystem

Native integration with the entire AWS service catalog.

- Amazon S3 / Redshift

- AWS Glue

- Step Functions

- EKS (Kubernetes)

Support & Community

Massive documentation library and global professional services support.

#3 — Google Cloud Vertex AI

Short description: A unified AI platform that brings together Google Cloud’s ML services into a single UI and API for a streamlined MLOps experience.

Key Features

- Vertex AI Pipelines: Based on Kubeflow, it allows for building and running serverless ML workflows.

- Feature Store (Managed): A centralized repository for managing and serving ML features at scale.

- Model Registry: A single place to track all model versions and their deployment status.

- Vertex AI Search and Conversation: Specialized tools for building and deploying GenAI applications.

- Vizier: A black-box optimization service for tuning hyperparameters.

- Edge Manager: Tools to deploy and monitor models on edge devices.
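A model registry, as listed for Vertex AI above, essentially tracks numbered versions per model and which stage each version is in. The in-memory class below is a hypothetical sketch of that bookkeeping using the dev/staging/prod stages from the evaluation criteria; it is not Vertex AI’s SDK, and the model name and paths in the usage note are placeholders.

```python
from dataclasses import dataclass, field

STAGES = ("dev", "staging", "prod")

@dataclass
class ModelVersion:
    version: int
    uri: str
    stage: str = "dev"
    metadata: dict = field(default_factory=dict)

class ModelRegistry:
    """Hypothetical in-memory registry: numbered versions per model name."""

    def __init__(self):
        self._models = {}

    def register(self, name, uri, **metadata):
        versions = self._models.setdefault(name, [])
        mv = ModelVersion(version=len(versions) + 1, uri=uri, metadata=metadata)
        versions.append(mv)
        return mv

    def transition(self, name, version, stage):
        if stage not in STAGES:
            raise ValueError(f"unknown stage {stage!r}")
        versions = self._models[name]
        if not 1 <= version <= len(versions):
            raise ValueError(f"no version {version} for {name!r}")
        if stage == "prod":
            # Keep one production version: demote the current one to staging.
            for mv in versions:
                if mv.stage == "prod":
                    mv.stage = "staging"
        versions[version - 1].stage = stage
        return versions[version - 1]

    def latest(self, name, stage):
        """Highest-numbered version currently in `stage`, or None."""
        matches = [mv for mv in self._models.get(name, []) if mv.stage == stage]
        return matches[-1] if matches else None
```

For example, after registering `"fraud"` twice and promoting version 1 and then version 2 to `"prod"`, version 2 serves production and version 1 falls back to `"staging"` for a quick rollback.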

Pros

- A strong fit for teams building on Google-developed frameworks such as TensorFlow and JAX.

- High-performance infrastructure, including access to Google’s TPUs (Tensor Processing Units).

Cons

- SDK and API changes can be frequent as the platform evolves.

- Limited to the Google Cloud ecosystem.

Platforms / Deployment

- Google Cloud

- Cloud / Edge

Security & Compliance

- VPC Service Controls, CMEK support.

- SOC 2, ISO 27001, HIPAA, GDPR.

Integrations & Ecosystem

Optimized for the Google Cloud data stack.

- BigQuery

- Google Cloud Storage

- Looker

- Colab Enterprise

Support & Community

Standard Google Cloud support and a strong community around the Kubeflow open-source project.

#4 — Weights & Biases (W&B)

Short description: A developer-first MLOps platform that focuses on experiment tracking, dataset versioning, and model management with a heavy emphasis on visualization.

Key Features

- Experiments: Automatically log all your metrics, hyperparameters, and code versions.

- Artifacts: Version your datasets and models to ensure complete reproducibility.

- Sweeps: Automate hyperparameter tuning with specialized visualization tools.

- Models: A registry for managing the lifecycle of your models from development to production.

- Reports: Collaborative dashboards to share insights and results with the wider team.

- Prompts: Specialized tools for tracking and visualizing LLM inputs and outputs.
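At its core, experiment tracking records each run’s hyperparameters, code version, and metrics so runs can be compared later. The sketch below is a hypothetical stand-in that does not use the `wandb` package: it appends one JSON record per run to a local JSON Lines file and picks the best run by a chosen metric.

```python
import json
import time
import uuid
from pathlib import Path

def log_run(path, params, metrics, code_version):
    """Append one run record (JSON Lines) and return its generated id."""
    record = {
        "run_id": uuid.uuid4().hex,
        "timestamp": time.time(),
        "code_version": code_version,  # e.g. a git commit hash
        "params": params,
        "metrics": metrics,
    }
    with Path(path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record["run_id"]

def best_run(path, metric, higher_is_better=True):
    """Return the logged run with the best value of `metric`."""
    with Path(path).open(encoding="utf-8") as f:
        runs = [json.loads(line) for line in f if line.strip()]
    runs = [r for r in runs if metric in r["metrics"]]
    pick = max if higher_is_better else min
    return pick(runs, key=lambda r: r["metrics"][metric])
```

Hosted trackers add the parts this omits: a shared server, live charts, artifact storage, and access control.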

Pros

- Extremely lightweight and easy to integrate into any existing codebase.

- Industry-leading visualizations that make debugging models much easier.

Cons

- Not a full-featured deployment platform; you still need a place to “run” your models.

- Pricing for enterprise teams can be significant.

Platforms / Deployment

- Windows / macOS / Linux / Cloud

- Cloud / Self-hosted / Hybrid

Security & Compliance

- SSO/SAML, RBAC, Data encryption.

- SOC 2 Type II.

Integrations & Ecosystem

Agnostic platform that works with almost every ML framework.

- PyTorch / TensorFlow / Keras

- Hugging Face

- Kubernetes

- AWS / GCP / Azure

Support & Community

Highly active developer community and excellent technical support for enterprise customers.

#5 — Azure Machine Learning

Short description: Microsoft’s enterprise-grade service for the end-to-end ML lifecycle, with a strong focus on security and integration with the Azure ecosystem.

Key Features

- Azure ML Pipelines: Build, track, and manage multi-step ML workflows.

- Managed Online Endpoints: Simplifies the deployment and scaling of models for real-time inference.

- Designer: A drag-and-drop interface for building ML models without writing code.

- Automated ML: Quickly identifies the best algorithms and hyperparameters for your data.

- Responsible AI Dashboard: Tools for model interpretability, fairness, and error analysis.

- Data Labeling: Built-in tools for managing large-scale data labeling projects.
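Managed online endpoints run a user-supplied scoring script. Azure ML’s convention is a script that defines `init()`, called once when the deployment starts, and `run(raw_data)`, called for each request. The sketch below follows that shape using only the standard library; the linear “model” and the `{"data": [...]}` request format are hypothetical, and a real script would load a trained model file inside `init()`.

```python
import json

model = None

def init():
    """Called once at startup; a real script would load a model artifact here."""
    global model
    # Hypothetical stand-in for a trained model: weights and bias of a linear scorer.
    model = {"weights": [0.4, -0.2], "bias": 0.1}

def run(raw_data):
    """Called per request with a JSON string like {"data": [[x1, x2], ...]}."""
    rows = json.loads(raw_data)["data"]
    preds = [
        sum(w * x for w, x in zip(model["weights"], row)) + model["bias"]
        for row in rows
    ]
    return {"predictions": preds}
```

Keeping expensive work (loading the model) in `init()` and only per-request work in `run()` is what lets the endpoint scale out to many replicas with low latency.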

Pros

- The best choice for organizations already committed to the Microsoft stack.

- Exceptional security and governance features suitable for highly regulated industries.

Cons

- Can feel slower to navigate than more lightweight platforms.

- The Python SDK has undergone major version changes that may require code updates.

Platforms / Deployment

- Azure

- Cloud / Edge / Hybrid (via Azure Arc)

Security & Compliance

- Microsoft Entra ID (formerly Azure Active Directory) SSO, Private Link, MFA.

- ISO 27001, SOC 2, FedRAMP, HIPAA.

Integrations & Ecosystem

Seamlessly connected to Microsoft’s data and productivity tools.

- Azure Data Lake / SQL DB

- Power BI

- Azure DevOps

- Microsoft Teams

Support & Community

Professional Microsoft support and a broad network of Azure certified partners.

#6 — Comet

Short description: An MLOps platform that allows data scientists and teams to track, compare, explain, and optimize experiments and models.

Key Features

- Experiment Tracking: Real-time logging of code, metrics, and environment details.

- Model Registry: Centralized management of model versions and deployment status.

- Comet MPM (Model Production Monitoring): Track model performance in production to detect drift.

- SDK-first approach: Designed to be easily integrated into any Python environment.

- Artifacts: Manage and version datasets across the entire ML pipeline.

- LLM SDK: Dedicated tools for debugging and monitoring large language models.
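Production monitoring also covers operational signals such as latency, not just drift. Here is a framework-free sketch of a rolling-window monitor that flags when 95th-percentile latency exceeds a budget; the window size and the 50 ms budget are illustrative assumptions, and this is not Comet’s SDK.

```python
from collections import deque

class LatencyMonitor:
    """Rolling window of request latencies with a p95 alert threshold."""

    def __init__(self, window=100, p95_budget_ms=50.0):
        self.samples = deque(maxlen=window)  # oldest samples drop off automatically
        self.p95_budget_ms = p95_budget_ms

    def record(self, latency_ms):
        self.samples.append(latency_ms)

    def p95(self):
        """Nearest-rank 95th percentile of the current window, or None if empty."""
        if not self.samples:
            return None
        ordered = sorted(self.samples)
        rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
        return ordered[rank - 1]

    def alert(self):
        value = self.p95()
        return value is not None and value > self.p95_budget_ms
```

A percentile is used instead of the mean because a small share of very slow requests, which the mean hides, is usually what users notice.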

Pros

- Excellent collaboration features for data science teams.

- Very strong performance monitoring capabilities for models already in production.

Cons

- Like W&B, it is primarily a tracking and management layer, not a compute provider.

- Smaller community compared to the major cloud providers.

Platforms / Deployment

- Windows / macOS / Linux / Cloud

- Cloud / On-prem / Hybrid

Security & Compliance

- SSO, RBAC, Encryption.

- SOC 2 Type II.

Integrations & Ecosystem

Works across the entire ML toolchain.

- PyTorch / TensorFlow

- Scikit-learn

- FastAPI / Flask

- Kubernetes

Support & Community

Direct access to engineering support for enterprise clients and an active Slack community.

#7 — Kubeflow

Short description: An open-source Kubernetes-native platform for developing, deploying, and managing portable, scalable ML workflows.

Key Features

- Kubeflow Pipelines: A platform for building and deploying multi-step ML workflows based on Docker containers.

- KServe (formerly KFServing): High-performance model serving on Kubernetes.

- Katib: A Kubernetes-native project for automated hyperparameter tuning.

- Notebooks: Integrated Jupyter notebooks that run directly on your Kubernetes cluster.

- Central Dashboard: A unified UI for managing all components of the ML stack.

- Multi-tenancy: Strong isolation for different teams working on the same cluster.
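Hyperparameter tuners such as Katib automate a search loop over training runs. The simplest strategy is random search; below is a self-contained sketch in which a made-up `objective` function stands in for “train a model and return its validation loss.” It does not use Katib’s API, and the search ranges are illustrative.

```python
import random

def objective(lr, depth):
    """Hypothetical stand-in for 'train and return validation loss' (lower is better)."""
    return (lr - 0.01) ** 2 * 1e4 + (depth - 6) ** 2

def random_search(n_trials=50, seed=0):
    """Sample hyperparameters at random and keep the lowest-loss trial."""
    rng = random.Random(seed)  # seeded so the trials are reproducible
    best = None
    for _ in range(n_trials):
        params = {
            "lr": 10 ** rng.uniform(-4, -1),  # log-uniform learning rate
            "depth": rng.randint(2, 10),
        }
        loss = objective(**params)
        if best is None or loss < best[0]:
            best = (loss, params)
    return best
```

Tuning services replace the random sampler with smarter strategies (Bayesian optimization, early stopping) and run the trials in parallel on the cluster.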

Pros

- Completely free and open-source with no vendor lock-in.

- Scales with your infrastructure: if you can scale your Kubernetes cluster, you can scale your ML workloads.

Cons

- Extremely high operational complexity; requires dedicated DevOps/SRE expertise.

- The UI can be inconsistent as it is a collection of various open-source projects.

Platforms / Deployment

- Linux (on Kubernetes)

- Self-hosted / Any Cloud

Security & Compliance

- Varies (depends on your Kubernetes configuration and Istio setup).

Integrations & Ecosystem

A central hub of the Kubernetes-based open-source MLOps ecosystem.

- Docker / Kubernetes

- Argo Workflows

- Tekton

- TensorFlow / PyTorch

Support & Community

Massive open-source community with contributions from Google, IBM, and Red Hat.

#8 — Neptune.ai

Short description: A lightweight MLOps tool focused on experiment tracking and model registry, designed for teams that value simplicity and speed.

Key Features

- Metadata Store: A central place to store all ML-related information (metrics, images, files).

- Experiment Comparison: Side-by-side visualization of different training runs.

- Model Registry: Manage your model lifecycle and share models with stakeholders.

- Notebook Versioning: Track changes in Jupyter notebooks to ensure reproducibility.

- Flexibility: Does not force a specific structure; fits into your existing code.

- Scalable Logging: Built to handle millions of data points without slowing down.

Pros

- One of the cleanest and most intuitive user interfaces in the MLOps space.

- Very fast setup—you can start logging data in minutes.

Cons

- Focused primarily on metadata management; does not offer pipeline or deployment orchestration.

- Fewer built-in integrations for GenAI compared to W&B or Comet.

Platforms / Deployment

- Windows / macOS / Linux / Cloud

- Cloud / On-prem

Security & Compliance

- SSO, RBAC, Encryption.

- SOC 2 Type II.

Integrations & Ecosystem

Integrates with all major ML frameworks via a simple Python SDK.

- PyTorch Lightning

- XGBoost / LightGBM

- Optuna

- Airflow

Support & Community

Fast and responsive technical support and detailed documentation.

#9 — Domino Data Lab

Short description: An enterprise MLOps platform designed to provide a central “system of record” for data science teams in highly regulated industries.

Key Features

- Domino Cloud: Fully managed environment for training and deploying models.

- Reproducibility Engine: Automatically captures the entire environment (code, data, hardware) for every run.

- Model Monitoring: Integrated dashboards to track model health and data drift.

- Self-Service Infrastructure: Data scientists can spin up GPUs or large clusters without IT help.

- Governance & Audit: Full versioning and approval workflows for model deployment.

- Knowledge Center: A searchable repository of all past work to prevent “reinventing the wheel.”
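The idea behind a reproducibility engine is to fingerprint everything a run depended on. As a rough, hypothetical illustration (not Domino’s actual mechanism), the function below hashes the code and data, records the Python version, OS, and CPU architecture, and derives one combined run hash; the package-version dictionary is supplied by the caller.

```python
import hashlib
import json
import platform

def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def run_fingerprint(code: str, data: bytes, packages: dict) -> dict:
    """Record what a run depended on, plus one combined hash of all of it."""
    record = {
        "code_sha256": sha256_hex(code.encode("utf-8")),
        "data_sha256": sha256_hex(data),
        "python": platform.python_version(),
        "os": platform.platform(),
        "machine": platform.machine(),  # CPU architecture, e.g. "x86_64"
        "packages": packages,
    }
    # sort_keys gives a canonical serialization, so identical inputs hash identically.
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    record["run_sha256"] = sha256_hex(canonical)
    return record
```

If two runs share a `run_sha256`, they used the same code, data, and environment as far as this record can tell; any difference in the data bytes changes the hash.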

Pros

- Exceptional for compliance and audit-heavy sectors like banking and pharma.

- Strong focus on data science team productivity and collaboration.

Cons

- Can be significantly more expensive than other platforms.

- The interface can feel more “corporate” and less “developer-focused” than tools like W&B.

Platforms / Deployment

- AWS / Azure / GCP / On-prem

- Cloud / Hybrid / Self-hosted

Security & Compliance

- SSO, RBAC, Air-gapped installation support.

- SOC 2, ISO 27001, HIPAA.

Integrations & Ecosystem

Broad support for enterprise data and IT tools.

- Snowflake / SAS

- Jupyter / RStudio

- Kubernetes

- Jira

Support & Community

Enterprise-grade professional support and a strong network of industry-specific consultants.

#10 — ClearML

Short description: An open-source, end-to-end MLOps suite that offers a unique combination of experiment tracking, orchestration, and data management.

Key Features

- Experiment Manager: Track every part of the ML process with zero code changes in many cases.

- Orchestration: Turn any machine into a worker and manage job queues via a web UI.

- Data Management: A specialized versioning system for large-scale datasets.

- Hyperparameter Optimization: Built-in support for various search algorithms.

- Model Serving: Simple, scalable deployment of models as REST APIs.

- App Store: Pre-built templates for common MLOps tasks.
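The worker-and-queue orchestration model described above can be illustrated with the standard library’s `queue` and `threading`. This conceptual sketch uses threads in one process as stand-ins for worker machines and hypothetical job functions; it does not use ClearML or its agent.

```python
import queue
import threading

def worker(name, jobs, results, lock):
    """Pull jobs until a None sentinel arrives; record which worker ran each job."""
    while True:
        job = jobs.get()
        if job is None:
            break
        job_id, fn, arg = job
        value = fn(arg)
        with lock:
            results[job_id] = (name, value)

def run_queue(job_specs, n_workers=2):
    """Start workers, enqueue (job_id, fn, arg) specs, then stop and join workers."""
    jobs, results, lock = queue.Queue(), {}, threading.Lock()
    threads = [
        threading.Thread(target=worker, args=(f"worker-{i}", jobs, results, lock))
        for i in range(n_workers)
    ]
    for t in threads:
        t.start()
    for spec in job_specs:
        jobs.put(spec)
    for _ in threads:
        jobs.put(None)  # one stop signal per worker, queued after all real jobs
    for t in threads:
        t.join()
    return results
```

The design choice that matters is that workers pull from the queue rather than being pushed work, so adding a machine (or a thread here) adds capacity without changing how jobs are submitted.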

Pros

- Very high value—the open-source version includes features often locked behind enterprise paywalls.

- The “Agent”-based orchestration is easy to set up on local hardware.

Cons

- The UI can be complex due to the sheer number of features.

- The documentation can sometimes lag behind the rapid pace of development.

Platforms / Deployment

- Windows / macOS / Linux

- Cloud / Self-hosted / Hybrid

Security & Compliance

- SSO, RBAC (in Pro/Enterprise versions).

- SOC 2 (ClearML Cloud).

Integrations & Ecosystem

Works well with the modern containerized ML stack.

- Docker / Kubernetes

- PyTorch / TensorFlow

- Slack / Discord for alerts

- S3 / GCP / Azure Storage

Support & Community

Active Slack community and professional support available for enterprise tiers.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Databricks | Data + AI Teams | AWS, Azure, GCP | Cloud | MLflow Integration | 4.7/5 |
| Amazon SageMaker | AWS Ecosystem | AWS | Hybrid | Clarify (Bias Detect) | 4.5/5 |
| Vertex AI | Google Research/TPUs | Google Cloud | Cloud | Pipelines (Kubeflow) | 4.6/5 |
| Weights & Biases | Tracking & Viz | Multi-Platform | Hybrid | Experiment Reports | 4.8/5 |
| Azure ML | Microsoft Stack | Azure | Hybrid | Responsible AI Dashboard | 4.4/5 |
| Comet | Production Monitoring | Multi-Platform | Hybrid | MPM (Drift Detect) | 4.6/5 |
| Kubeflow | Open-source/K8s | Multi-Platform | Self-hosted | Kubernetes Native | 4.2/5 |
| Neptune.ai | Metadata Storage | Multi-Platform | Hybrid | Clean UI/UX | 4.7/5 |
| Domino Data Lab | Regulated Industries | Multi-Platform | Hybrid | Reproducibility Engine | 4.4/5 |
| ClearML | Open-source/Orchestration | Multi-Platform | Hybrid | Integrated Agent | 4.5/5 |

Evaluation & Scoring of MLOps Platforms

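The scores below rate how each platform performs against the demands of a 2026 enterprise AI pipeline. Each criterion is scored on a 10-point scale, and the weighted total is the sum of each score multiplied by its column weight (the weights add up to 100%).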

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Databricks | 10 | 6 | 10 | 9 | 10 | 9 | 7 | 8.75 |
| Amazon SageMaker | 10 | 5 | 9 | 10 | 9 | 9 | 8 | 8.60 |
| Vertex AI | 9 | 6 | 9 | 9 | 10 | 8 | 8 | 8.40 |
| Weights & Biases | 7 | 10 | 10 | 9 | 9 | 9 | 8 | 8.65 |
| Azure ML | 9 | 6 | 9 | 10 | 8 | 9 | 7 | 8.25 |
| Comet | 8 | 8 | 9 | 8 | 8 | 8 | 8 | 8.15 |
| Kubeflow | 9 | 2 | 8 | 7 | 9 | 6 | 10 | 7.45 |
| Neptune.ai | 6 | 10 | 9 | 8 | 8 | 8 | 8 | 7.95 |
| Domino Data Lab | 8 | 6 | 8 | 10 | 8 | 9 | 6 | 7.70 |
| ClearML | 9 | 7 | 8 | 7 | 9 | 7 | 9 | 8.15 |
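Because the weighted total is just a weighted sum, it is easy to recompute from the per-criterion scores in the table, either to check the arithmetic or to re-weight the criteria for your own priorities. A short sketch, with the scores copied from the table and the weights taken from its column headers:

```python
# Criterion weights from the table header, in column order (they sum to 1.0).
WEIGHTS = {
    "core": 0.25, "ease": 0.15, "integrations": 0.15, "security": 0.10,
    "performance": 0.10, "support": 0.10, "value": 0.15,
}

# Per-criterion scores, in the same column order as WEIGHTS.
SCORES = {
    "Databricks":       [10, 6, 10, 9, 10, 9, 7],
    "Amazon SageMaker": [10, 5, 9, 10, 9, 9, 8],
    "Vertex AI":        [9, 6, 9, 9, 10, 8, 8],
    "Weights & Biases": [7, 10, 10, 9, 9, 9, 8],
    "Azure ML":         [9, 6, 9, 10, 8, 9, 7],
    "Comet":            [8, 8, 9, 8, 8, 8, 8],
    "Kubeflow":         [9, 2, 8, 7, 9, 6, 10],
    "Neptune.ai":       [6, 10, 9, 8, 8, 8, 8],
    "Domino Data Lab":  [8, 6, 8, 10, 8, 9, 6],
    "ClearML":          [9, 7, 8, 7, 9, 7, 9],
}

def weighted_total(scores, weights=WEIGHTS):
    """Sum of score * weight, rounded to two decimals like the table."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return round(sum(s * w for s, w in zip(scores, weights.values())), 2)

ranking = sorted(SCORES, key=lambda name: weighted_total(SCORES[name]), reverse=True)
```

Editing `WEIGHTS` (for example, raising `security` for a regulated industry) and re-running the sort shows how sensitive the ranking is to the chosen priorities.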

Scoring Interpretation:

- Weighted Total: A score above 8.5 indicates a “Tier 1” platform that provides a complete, high-performance ecosystem.

- Core vs. Ease: Platforms like Kubeflow have high core functionality but low ease of use, reflecting the technical expertise required.

- Value: This considers the balance of features vs. price; open-source options like ClearML and Kubeflow score highly here.

Which MLOps Platform Is Right for You?

Solo / Freelancer

If you are working on your own, Weights & Biases or Neptune.ai are the best choices. They are easy to set up, have generous free tiers, and provide the visualization tools you need to understand your models without requiring you to manage complex infrastructure.

SMB

Small and medium businesses should consider ClearML or a managed cloud service like Vertex AI. ClearML offers an incredible range of features for free or low cost, while managed cloud services allow you to start small and scale without hiring a dedicated DevOps team.

Mid-Market

Companies with established ML teams should look at Databricks or Comet. These platforms offer the governance and collaborative features needed to manage multiple projects and models across a growing team while maintaining high performance.

Enterprise

Large enterprises, especially those in finance, healthcare, or insurance, should prioritize Domino Data Lab, Amazon SageMaker, or Azure ML. These platforms offer the “gold standard” in security, compliance, and long-term reproducibility required for critical business operations.

Budget vs Premium

- Budget Choice: Kubeflow (Open-source), ClearML (Free tier).

- Premium Choice: Databricks, Amazon SageMaker, Domino Data Lab.

Feature Depth vs Ease of Use

- Maximum Depth: Kubeflow, SageMaker.

- Maximum Ease: Neptune.ai, Weights & Biases.

Integrations & Scalability

- Best for Scaling: Databricks, Vertex AI.

- Best for Integrations: Weights & Biases, MLflow (via Databricks).

Security & Compliance Needs

Organizations requiring “air-gapped” or highly audited environments should prioritize Domino Data Lab or Azure Machine Learning.

Frequently Asked Questions (FAQs)

1. What is the difference between DevOps and MLOps?

DevOps focuses on traditional software (code), while MLOps handles the unique challenges of machine learning, including data versioning, model drift, and retraining loops.

2. Do I need a platform to do MLOps?

While you can build your own tools, MLOps platforms provide pre-built, integrated solutions that save time, reduce errors, and allow data scientists to focus on modeling rather than infrastructure.

3. What is model drift and why should I care?

Model drift occurs when the data your model sees in the real world changes over time, causing accuracy to drop. MLOps platforms monitor for this and can trigger automated retraining.
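One common way to quantify drift, shown here as an illustration rather than the method any particular platform uses, is the Population Stability Index (PSI) between a feature’s training-time distribution and its recent production distribution: PSI = Σ (qᵢ − pᵢ) · ln(qᵢ / pᵢ) over histogram bins. The bin edges, the small epsilon for empty bins, and the 0.2 retraining threshold are rule-of-thumb assumptions, not universal standards.

```python
import math
from bisect import bisect_right

def histogram(values, edges):
    """Fraction of values per bin; bins split at each edge, outer bins open-ended."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between a baseline sample and a recent sample."""
    p = histogram(expected, edges)
    q = histogram(actual, edges)
    total = 0.0
    for pi, qi in zip(p, q):
        pi, qi = max(pi, eps), max(qi, eps)  # avoid log(0) for empty bins
        total += (qi - pi) * math.log(qi / pi)
    return total

def needs_retraining(expected, actual, edges, threshold=0.2):
    """Trigger retraining when drift exceeds the (assumed) threshold."""
    return psi(expected, actual, edges) > threshold
```

In a platform, a check like `needs_retraining` would run on a schedule against production logs and, when it fires, kick off the retraining pipeline.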

4. Can I use these platforms for Large Language Models (LLMs)?

Yes, most modern platforms have added “LLMOps” features, including prompt tracking, fine-tuning orchestration, and specialized monitoring for generative AI outputs.

5. How much do MLOps platforms typically cost?

Costs vary widely, from free open-source tools (Kubeflow) to consumption-based cloud services (SageMaker) and enterprise licenses (Domino) that can cost thousands per month.

6. Do these platforms require me to use a specific ML framework?

Most platforms are framework-agnostic, meaning they work with PyTorch, TensorFlow, Scikit-learn, and even proprietary frameworks through standard Python SDKs.

7. What is a Feature Store and is it necessary?

A feature store is a central place to store and share data transformations. It’s not strictly necessary for a single model, but it is critical for teams looking to scale AI across the enterprise.
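A key job of a feature store is returning the feature value that was valid at a given point in time, so training examples never see values from their own future (“point-in-time correctness”). A hypothetical in-memory sketch of that lookup, not any vendor’s API; the entity and feature names in the usage note are made up:

```python
from bisect import bisect_right
from collections import defaultdict

class FeatureStore:
    """Hypothetical in-memory store of timestamped feature values per entity."""

    def __init__(self):
        # (entity_id, feature) -> parallel lists of sorted timestamps and values
        self._times = defaultdict(list)
        self._values = defaultdict(list)

    def write(self, entity_id, feature, ts, value):
        key = (entity_id, feature)
        i = bisect_right(self._times[key], ts)  # keep timestamps sorted
        self._times[key].insert(i, ts)
        self._values[key].insert(i, value)

    def get_as_of(self, entity_id, feature, ts):
        """Latest value written at or before `ts`, or None if none existed yet."""
        key = (entity_id, feature)
        times = self._times.get(key, [])
        i = bisect_right(times, ts)
        return self._values[key][i - 1] if i > 0 else None
```

For example, if `clicks_7d` for `user_1` was 3 at time 100 and 8 at time 200, a training example labeled at time 150 must use 3, even though 8 is the current value.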

8. Is Kubeflow the same as MLOps?

No, Kubeflow is an open-source project used to build an MLOps platform on Kubernetes. MLOps is the overall practice; Kubeflow is one way to implement it.

9. How do these platforms handle data privacy?

Enterprise-grade platforms include features like VPC isolation, data encryption, and role-based access to ensure that sensitive training data is protected throughout the lifecycle.

10. Can I run MLOps on-premises?

Yes, platforms like Domino Data Lab, ClearML, and Kubeflow can be installed in your own data center to meet strict data sovereignty or security requirements.

Conclusion

The transition from experimental AI to operational AI is the defining challenge of 2026. While Weights & Biases and Neptune.ai have perfected the “inner loop” of experiment tracking, platforms like Databricks and Amazon SageMaker have built the robust “outer loop” necessary for reliable production.

Your choice should be dictated by your existing data infrastructure and your team’s technical maturity. If you are already “all-in” on a specific cloud, its native MLOps service is usually the best starting point. However, if you value flexibility and developer experience above all else, the agnostic tools provide a level of focus and clarity that is hard to match.
