Introduction

Model monitoring and drift detection tools are specialized software platforms designed to track the performance, health, and reliability of machine learning models after they have been deployed to production. Unlike traditional software monitoring, which focuses on system metrics like CPU and memory, ML monitoring focuses on data integrity and statistical consistency. These tools detect “drift”—the phenomenon where a model’s predictive accuracy degrades over time because the statistical properties of the input data or the relationship between variables have changed.

In today’s AI-driven landscape, a model is only as good as its last prediction. Factors such as changing consumer behavior, evolving market conditions, or even sensor degradation can cause a model to become “stale.” Without robust monitoring, businesses risk making decisions based on “hallucinating” or inaccurate models. Drift detection tools provide the early warning system necessary to trigger retraining, ensuring that AI systems remain fair, accurate, and aligned with real-world conditions.

Real-world use cases:

- Credit Scoring: Detecting when a sudden shift in the economy makes a model’s risk assessment of loan applicants outdated.

- E-commerce Recommendations: Identifying when a new cultural trend makes historical user data a poor predictor of current shopping intent.

- Healthcare Diagnostics: Monitoring medical imaging models to ensure they remain accurate when deployed on new types of scanning hardware.

- Autonomous Vehicles: Detecting data drift when a vehicle encounters weather conditions or road signs not present in its training data.

- Predictive Maintenance: Ensuring that sensor-based models in manufacturing account for the natural wear and tear of machinery over time.

Evaluation criteria for buyers:

- Drift Detection Methods: Support for statistical tests and distance measures such as Kolmogorov-Smirnov (K-S), Population Stability Index (PSI), and Kullback-Leibler (KL) divergence.

- Alerting & Integration: The speed and flexibility of notifications via Slack, PagerDuty, or email when performance dips.

- Data Integrity Checks: Ability to catch missing values, type mismatches, or outliers in the production data stream.

- Explainability (XAI): Tools that show why a model is drifting, not just that it is drifting.

- Bias & Fairness Tracking: Monitoring for disparate impact across protected demographic groups.

- Scalability: The ability to monitor thousands of models across multiple environments without high latency.

- Dashboarding: Quality of visual interfaces for both data scientists and business stakeholders.

- Automated Retraining Triggers: Integration with CI/CD pipelines to start retraining jobs automatically upon drift detection.

- Deployment Flexibility: Support for cloud-native, on-prem, or hybrid-cloud environments.

- Storage Efficiency: How the tool manages the historical “ground truth” data required for performance comparison.
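
To make the drift detection criterion above more concrete, here is a minimal sketch of the Population Stability Index in plain Python. The function name, the equal-width binning on the baseline range, and the small `eps` floor for empty bins are assumptions made for illustration; commercial tools may bin and smooth differently.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline and a production sample."""
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has no spread; cannot build bins")
    width = (hi - lo) / bins

    def bin_fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so production values outside the baseline range
            # land in the first or last bin instead of being dropped.
            idx = max(0, min(bins - 1, int((v - lo) / width)))
            counts[idx] += 1
        # Floor each fraction at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [float(i) for i in range(1000)]
shifted = [x + 300.0 for x in baseline]
print(psi(baseline, baseline))  # identical samples give 0.0
print(psi(baseline, shifted))   # a large shift gives a large PSI
```

A commonly quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but these cut-offs are conventions rather than guarantees and are worth tuning per feature.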

Best for: MLOps engineers, data scientists, AI product managers, and compliance officers responsible for the long-term governance of production AI systems.

Not ideal for: Organizations in the research phase with no deployed models, or simple heuristic-based systems that do not rely on statistical learning.

Key Trends in Model Monitoring & Drift Detection

- Real-time Drift Correction: Advanced platforms are moving beyond detection to “hot-patching” models in real-time while a full retrain is underway.

- Unstructured Data Monitoring: The rise of LLMs has forced a shift toward monitoring embeddings and semantic drift in text, images, and audio.

- Privacy-Preserving Monitoring: Using federated learning or differential privacy to monitor models on sensitive edge devices without moving the raw data.

- Causal Drift Analysis: New tools that identify the “root cause” of drift—distinguishing between a sensor failure and a genuine shift in market behavior.

- Governance as Code: Monitoring policies are increasingly defined in code, ensuring that compliance and fairness checks are automatically applied to every new model.

- LLM Guardrails: Specialized monitoring for Generative AI that checks for toxicity, hallucination rates, and prompt injection in real-time.

- Continuous Feedback Loops: Direct integration with labeling services to acquire “ground truth” data faster for performance validation.

- Resource-Aware Monitoring: Tools that adjust monitoring frequency based on the model’s business criticality to save on cloud compute costs.

How We Selected These Tools (Methodology)

To identify the top 10 tools for model monitoring and drift detection, we followed a rigorous evaluation process:

- Statistical Robustness: We prioritized tools that offer a wide array of scientifically validated drift detection algorithms.

- Enterprise Adoption: We focused on platforms used by Fortune 500 companies in highly regulated sectors like finance and healthcare.

- Toolchain Compatibility: Preference was given to tools that integrate seamlessly with popular ML stacks like SageMaker, Databricks, and Vertex AI.

- Bias Mitigation: We looked for platforms that include proactive fairness monitoring as a core feature rather than an afterthought.

- Developer Experience: We evaluated the ease of SDK integration and the quality of API documentation.

- Operational Resilience: We analyzed how these tools handle “cold start” problems and high-volume inference streams.

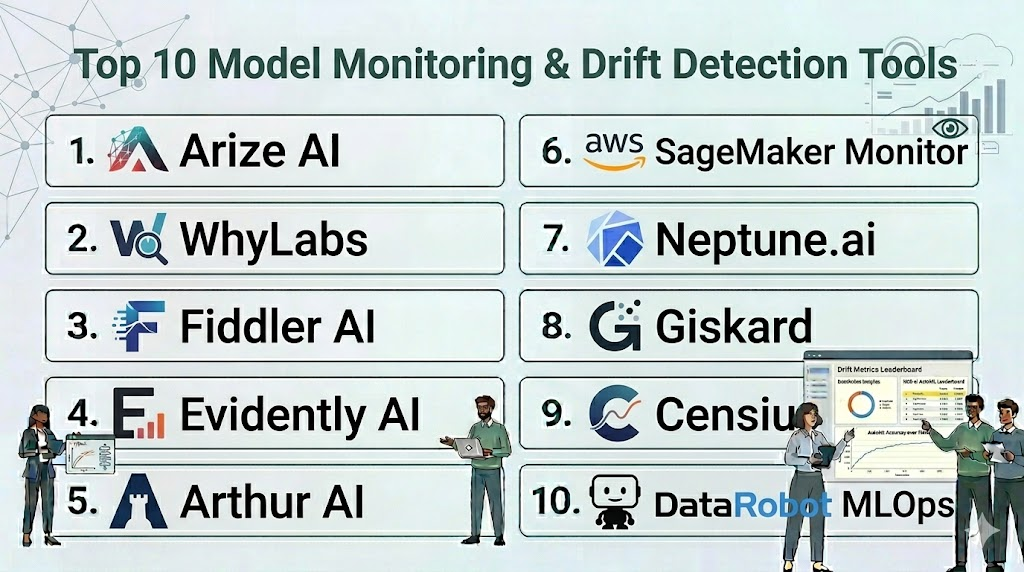

Top 10 Model Monitoring & Drift Detection Tools

#1 — Arize AI

Short description: A leading observability platform that helps teams troubleshoot model performance, visualize high-dimensional data, and detect drift across the entire ML lifecycle.

Key Features

- Embedding Visualization: Uses UMAP and t-SNE to visualize semantic drift in unstructured data like text and images.

- Root Cause Analysis: Powerful “slice-and-dice” capabilities to find exactly which sub-population is causing a performance drop.

- Fairness Monitoring: Built-in checks for disparate impact and various fairness metrics.

- Automated Monitors: No-code setup for standard drift and performance monitors.

- Data Quality Validation: Real-time checks for schema changes and data distribution shifts.

- Integration with LLMs: Specialized tools for monitoring large language models for hallucination and retrieval quality.

Pros

- Exceptional at handling unstructured data and complex embedding spaces.

- Provides a very intuitive interface for non-technical stakeholders to view model health.

Cons

- Can be expensive for organizations with very high-volume, low-criticality models.

- Primarily a cloud-focused solution; limited options for purely air-gapped environments.

Platforms / Deployment

- AWS / Azure / GCP

- Cloud / Hybrid

Security & Compliance

- SSO/SAML, MFA, RBAC, Encryption.

- SOC 2 Type II, HIPAA, GDPR.

Integrations & Ecosystem

Integrates with nearly all major data and ML tools.

- Databricks / Snowflake

- PyTorch / TensorFlow

- Slack / PagerDuty

- DVC / MLflow

Support & Community

Excellent white-glove support and a very active “Observability” community on Slack.

#2 — WhyLabs

Short description: An enterprise observability platform built on the “whylogs” open-source library, focusing on data privacy and scalable monitoring.

Key Features

- Privacy-Preserving Sketches: Uses “whylogs” to create statistical summaries (profiles) so raw data never leaves your environment.

- Anomaly Detection: Automated detection of outliers and distribution shifts.

- Integration with CI/CD: Proactively test data and models during the build phase.

- Zero-Copy Monitoring: Does not require moving or storing copies of production data.

- Constraint Checking: Define hard rules for data quality (e.g., “age must be > 0”) that trigger alerts.

- Multi-Cloud Support: Works consistently across different cloud providers and local environments.
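
The constraint checking idea above can be sketched in a few lines of plain Python. This is not the whylogs API; the `CONSTRAINTS` table, the `violations` helper, and the `country` field are hypothetical names chosen for illustration.

```python
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]
Constraint = Callable[[Row], bool]

# Hard rules for data quality, keyed by a human-readable description.
CONSTRAINTS: Dict[str, Constraint] = {
    "age must be > 0": lambda row: isinstance(row.get("age"), (int, float))
    and row["age"] > 0,
    "country must be present": lambda row: bool(row.get("country")),
}


def violations(rows: List[Row]) -> Dict[str, int]:
    """Count how many rows break each constraint."""
    counts = {name: 0 for name in CONSTRAINTS}
    for row in rows:
        for name, check in CONSTRAINTS.items():
            if not check(row):
                counts[name] += 1
    return counts


batch = [
    {"age": 34, "country": "DE"},
    {"age": -1, "country": "US"},   # breaks the age rule
    {"age": None, "country": ""},   # breaks both rules
]
print(violations(batch))
```

In a real pipeline, a non-zero count for any rule would be the signal that raises an alert.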

Pros

- Strongest choice for highly sensitive data due to its “summary-only” monitoring approach.

- Extremely lightweight and fast; minimal impact on inference latency.

Cons

- The UI is slightly more technical compared to Arize.

- Deep troubleshooting sometimes requires manual inspection of the generated profiles.

Platforms / Deployment

- Windows / macOS / Linux / AWS / Azure / GCP

- Cloud / Hybrid / Self-hosted

Security & Compliance

- MFA, SSO, RBAC.

- SOC 2, ISO 27001 (WhyLabs Cloud).

Integrations & Ecosystem

Broad support for the modern data stack.

- Apache Spark / Ray

- Kafka / Kinesis

- Airflow / Prefect

- S3 / GCS

Support & Community

Strong backing of the whylogs open-source project and dedicated enterprise support.

#3 — Fiddler AI

Short description: An enterprise ML observability platform specializing in model explainability and trust, helping teams understand why models behave the way they do.

Key Features

- XAI (Explainable AI): Uses SHAP and Integrated Gradients to provide feature-level explanations for drift.

- Bias Detection: Identifies bias in model predictions across different demographic segments.

- Custom Drift Metrics: Ability to define proprietary drift detection logic beyond standard statistical tests.

- Model Service Monitoring: Tracks system-level metrics alongside ML performance metrics.

- Centralized Governance: Provides a “Model Registry” style view of all monitored assets.

- LLM Robustness: Specific features for monitoring prompt-response quality and safety.

Pros

- Unrivaled for regulatory compliance due to its heavy focus on explainability.

- High-quality reporting features for executive and risk management teams.

Cons

- Explainability calculations can be computationally expensive for very large models.

- The initial setup for complex, custom models can be time-consuming.

Platforms / Deployment

- AWS / Azure / GCP

- Cloud / Hybrid / On-prem

Security & Compliance

- SSO, RBAC, end-to-end encryption.

- SOC 2, ISO 27001, HIPAA.

Integrations & Ecosystem

Focuses on deep integration with enterprise ML platforms.

- Amazon SageMaker

- Google Vertex AI

- Azure ML

- Kubeflow

Support & Community

Excellent professional services and a strong presence in the “Responsible AI” space.

#4 — Evidently AI

Short description: An open-source Python library and platform for evaluating, testing, and monitoring ML models throughout their lifecycle.

Key Features

- Interactive Reports: Generates visual HTML reports for data drift, target drift, and regression/classification performance.

- Monitoring Dashboards: A lightweight web UI to track metrics over time.

- JSON Profiles: Export monitoring results in machine-readable formats for automated pipelines.

- Pre-defined Tests: Dozens of built-in statistical tests for different data types.

- Integration with Airflow: Easily add monitoring steps to existing data orchestration workflows.

- Evidently Cloud: A managed version that offers collaborative features and long-term data storage.

Pros

- Extremely easy for Python developers to get started with just a few lines of code.

- The open-source core is free to use and highly customizable.

Cons

- The open-source version requires manual management of data storage for historical trends.

- Lacks the advanced enterprise governance features found in Fiddler or Arize.

Platforms / Deployment

- Windows / macOS / Linux

- Self-hosted / Cloud

Security & Compliance

- Dependent on local setup (Open-source); RBAC/SSO available in Cloud version.

- SOC 2 (Cloud version).

Integrations & Ecosystem

Works wherever Python runs.

- MLflow

- Streamlit

- Prefect / Dagster

- Pandas / Scikit-learn

Support & Community

Vibrant Discord community and active GitHub development.

#5 — Arthur AI

Short description: A model monitoring and governance platform designed to improve model performance and ensure responsible AI deployment.

Key Features

- Dynamic Alerting: Intelligent alerting that adapts to the natural variance in your data.

- Fairness Tooling: Advanced metrics to measure and mitigate bias in real-time.

- Query-able Data Store: Search through your production inference data like a database.

- Compute Cost Tracking: Monitors the efficiency and cost of running your models.

- Multi-tenant Support: Designed for large organizations with multiple independent data science teams.

- LLM Monitoring: Specialized features for detecting hallucination in generative models.
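
One simple way to picture alerting that adapts to natural variance is a rolling threshold: flag a value only when it falls far outside the recent mean. The sketch below is a generic illustration, not Arthur's algorithm; the function name, the 7-point window, and the 3-sigma multiplier are all assumptions.

```python
from statistics import mean, stdev
from typing import List, Sequence


def adaptive_alerts(values: Sequence[float], window: int = 7,
                    k: float = 3.0) -> List[int]:
    """Return indices whose value lies more than k standard deviations
    from the mean of the preceding `window` observations."""
    alerts = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if abs(values[i] - mu) > k * sigma:
            alerts.append(i)
    return alerts


# Hypothetical daily error rates with one obvious spike at index 8.
daily_error = [0.10, 0.11, 0.09, 0.10, 0.12, 0.10, 0.11, 0.10, 0.25, 0.11]
print(adaptive_alerts(daily_error))  # [8]
```

Because the threshold is derived from recent history, a metric that is naturally noisy gets a wider band than a metric that is usually flat.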

Pros

- Very strong focus on fairness and enterprise governance.

- Excellent dashboarding for non-technical risk managers.

Cons

- Primarily focused on the enterprise market; may be “overkill” for startups.

- Can have a higher learning curve for initial configuration.

Platforms / Deployment

- AWS / GCP / Azure

- Cloud / On-prem / VPC

Security & Compliance

- MFA, SSO, RBAC, Audit logs.

- SOC 2, ISO 27001.

Integrations & Ecosystem

Standard ML stack integrations.

- SageMaker / Vertex AI

- Snowflake

- Slack

- Python SDK

Support & Community

Professional enterprise support and a focus on high-touch customer success.

#6 — Amazon SageMaker Model Monitor

Short description: A built-in service for AWS users that automatically monitors ML models in production and alerts when there are deviations in data quality or model performance.

Key Features

- Data Quality Monitoring: Detects outliers and distribution shifts in real-time.

- Model Explainability: Integrated with SageMaker Clarify to monitor for bias and explainability drift.

- Automated Scheduling: Set up monitoring jobs to run hourly or daily with a few clicks.

- Integration with S3: Stores all monitoring reports and baselines directly in your buckets.

- CloudWatch Integration: Uses standard AWS alerting and logging mechanisms.

- No-Code Baseline: Automatically generates baseline statistics from your training data.

Pros

- Zero extra infrastructure to manage for existing AWS customers.

- Lowest total cost of ownership if you are already using SageMaker for training and hosting.

Cons

- Limited to the AWS ecosystem; not suitable for multi-cloud strategies.

- Visualizations are not as sophisticated as specialized tools like Arize or Fiddler.

Platforms / Deployment

- AWS

- Cloud

Security & Compliance

- IAM, KMS, VPC endpoints, CloudTrail.

- SOC 1/2/3, ISO 27001, FedRAMP, HIPAA.

Integrations & Ecosystem

Deeply tied to the AWS universe.

- Amazon S3

- Amazon CloudWatch

- SageMaker Pipelines

- AWS Lambda

Support & Community

Standard AWS professional support and an immense library of documentation.

#7 — Neptune.ai

Short description: A flexible metadata store for MLOps that tracks both experimentation and production model performance in a centralized dashboard.

Key Features

- Live Dashboards: Visualize performance metrics, drift, and data quality in real-time.

- Model Registry: Keep track of model versions alongside their monitoring data.

- Flexible Metadata: Store any type of data, from images and audio to hardware metrics.

- Query-able API: Retrieve monitoring data to trigger custom retraining workflows.

- Collaboration: Share monitoring views with team members through secure links.

- Comparison Views: Compare production performance against training-time baselines.

Pros

- Highly flexible; can be used for everything from hyperparameter tuning to production monitoring.

- One of the best user interfaces for data scientists.

Cons

- Requires more “manual” setup for drift detection compared to automated platforms like Arize.

- Not a specialized drift tool; more of a general-purpose ML metadata platform.

Platforms / Deployment

- Windows / macOS / Linux / AWS / Azure / GCP

- Cloud / On-prem

Security & Compliance

- SSO, RBAC, Project-level permissions.

- SOC 2 Type II (Cloud).

Integrations & Ecosystem

Integrates with nearly everything in the ML world.

- PyTorch / Keras / LightGBM

- Optuna / Ray Tune

- Jupyter / Google Colab

- GitHub Actions

Support & Community

Active user community and high-quality technical documentation.

#8 — Giskard

Short description: An open-source collaborative platform specifically designed for testing and monitoring the quality and safety of AI models, with a focus on LLMs.

Key Features

- Automated Scanning: Automatically finds vulnerabilities like bias, toxicity, and hallucinations.

- Collaborative Debugging: Allow non-technical users to “red-team” models through a simple UI.

- CI/CD Integration: Run quality tests on models before every deployment.

- Adversarial Testing: Built-in tools for testing model robustness against prompt injections.

- LLM Monitoring: Specialized real-time monitoring for prompt and response drift.

- Domain-Specific Tests: Templates for testing models in specific sectors like finance or legal.

Pros

- Excellent for teams focused on Generative AI and LLM safety.

- The “Scanner” feature saves hours of manual testing.

Cons

- Younger tool with a smaller community compared to Evidently.

- Less focused on traditional tabular data drift than Arize or WhyLabs.

Platforms / Deployment

- Windows / macOS / Linux

- Self-hosted / Cloud

Security & Compliance

- RBAC and encryption for self-hosted versions.

- Not publicly stated.

Integrations & Ecosystem

Focused on the modern AI stack.

- Hugging Face

- LangChain

- OpenAI / Anthropic

- MLflow

Support & Community

Very active Discord community and rapid open-source development.

#9 — Censius

Short description: An AI observability platform that helps teams ensure high-performing models through automated monitoring, drift detection, and data quality checks.

Key Features

- Automated Drift Detection: Monitors for feature, label, and concept drift using multiple statistical tests.

- Segment Monitoring: Track performance on specific slices of data (e.g., “New York users”).

- Activity Logs: Full audit trail of all model predictions and metadata changes.

- Performance Comparison: Side-by-side comparison of different model versions in production.

- Custom Alerts: Sophisticated alerting logic with multi-channel notification support.

- Onboarding Wizard: Guided setup for different types of ML models (regression, classification, etc.).

Pros

- Very balanced feature set for both tabular and image-based models.

- Good balance between technical depth and ease of use.

Cons

- Smaller market presence; fewer third-party community resources.

- Dashboard customization can be limited compared to Arize.

Platforms / Deployment

- AWS / Azure / GCP

- Cloud / Hybrid

Security & Compliance

- MFA, SSO, RBAC.

- SOC 2.

Integrations & Ecosystem

Standard MLOps tool support.

- Python SDK

- Airflow

- Slack / Teams

- S3 / BigQuery

Support & Community

Responsive support teams and clear onboarding documentation.

#10 — DataRobot MLOps

Short description: A comprehensive enterprise platform that provides end-to-end management, monitoring, and governance for all models, regardless of where they were trained.

Key Features

- Challenger Models: Run shadow models in production to compare new versions against the current champion.

- Automated Retraining: Trigger retraining pipelines automatically based on drift thresholds.

- Governance & Compliance: Full lifecycle tracking and documentation generation for regulatory needs.

- Service Health: Monitors system uptime, latency, and throughput.

- Deployment Center: A centralized hub for managing models across multiple clouds and on-prem clusters.

- Humble AI: Allows models to “flag” high-uncertainty predictions for human review.

Pros

- The most complete “all-in-one” solution for enterprise MLOps.

- Excellent for organizations with hundreds of models in diverse environments.

Cons

- High entry price point; geared toward large enterprises.

- Can be overly complex for teams only needing simple drift detection.

Platforms / Deployment

- AWS / Azure / GCP / Bare Metal

- Cloud / Hybrid / On-prem

Security & Compliance

- Role-based access, SSO, end-to-end encryption, Full audit logs.

- SOC 2, ISO 27001, FedRAMP, HIPAA.

Integrations & Ecosystem

Extremely broad compatibility.

- Snowflake / Databricks

- Kubernetes / OpenShift

- Tableau / Power BI

- Standard ML libraries (Scikit, XGBoost, etc.)

Support & Community

Global professional services, extensive training (DataRobot University), and strong community forums.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
| --- | --- | --- | --- | --- | --- |
| Arize AI | Unstructured Data / LLM | AWS, Azure, GCP | Hybrid | Embedding Visualization | 4.8/5 |
| WhyLabs | Data Privacy | Multi-platform | Hybrid | whylogs Privacy Profiles | 4.7/5 |
| Fiddler AI | Explainability / Risk | AWS, Azure, GCP | Hybrid | Deep XAI Integration | 4.6/5 |
| Evidently AI | Open-source / Python | Multi-platform | Self-hosted | Interactive HTML Reports | 4.7/5 |
| Arthur AI | Fairness / Governance | AWS, Azure, GCP | Hybrid | Fairness Tooling | 4.5/5 |
| SageMaker Monitor | AWS Ecosystem | AWS | Cloud | CloudWatch Integration | 4.4/5 |
| Neptune.ai | Research & Metadata | Multi-platform | Hybrid | Flexible Metadata Store | 4.8/5 |
| Giskard | LLM Safety / Red-teaming | Multi-platform | Hybrid | Automated LLM Scanner | 4.6/5 |
| Censius | All-around Observability | AWS, Azure, GCP | Cloud | Segment Monitoring | 4.3/5 |
| DataRobot MLOps | Enterprise Governance | Multi-platform | Hybrid | Challenger Models | 4.6/5 |

Evaluation & Scoring of Model Monitoring Tools

The scores below reflect a comparative assessment of how these tools perform in modern enterprise MLOps environments.

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Arize AI | 10 | 8 | 9 | 9 | 10 | 9 | 7 | 8.90 |
| WhyLabs | 8 | 9 | 10 | 10 | 10 | 8 | 9 | 9.00 |
| Fiddler AI | 10 | 7 | 8 | 9 | 8 | 9 | 7 | 8.40 |
| Evidently AI | 7 | 10 | 9 | 6 | 9 | 7 | 10 | 8.30 |
| Arthur AI | 9 | 7 | 8 | 9 | 8 | 9 | 7 | 8.15 |
| SageMaker Monitor | 7 | 9 | 8 | 10 | 8 | 9 | 8 | 8.20 |
| Neptune.ai | 8 | 10 | 10 | 8 | 9 | 9 | 8 | 8.80 |
| Giskard | 8 | 8 | 8 | 7 | 9 | 8 | 9 | 8.15 |
| Censius | 8 | 7 | 8 | 9 | 8 | 8 | 8 | 7.95 |
| DataRobot MLOps | 10 | 6 | 9 | 10 | 9 | 9 | 6 | 8.45 |
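
The Weighted Total column is a weighted sum of the seven category scores, using the percentages shown in the header row. A minimal sketch of that arithmetic, using Giskard's row as the example (the dictionary keys and function name are illustrative):

```python
from typing import Dict

# Column weights taken from the table header; they sum to 100%.
WEIGHTS: Dict[str, float] = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: Dict[str, float]) -> float:
    """Combine per-category scores (0-10) into one weighted score."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the weighted categories")
    return round(sum(WEIGHTS[k] * scores[k] for k in WEIGHTS), 2)


giskard = {"core": 8, "ease": 8, "integrations": 8, "security": 7,
           "performance": 9, "support": 8, "value": 9}
print(weighted_total(giskard))  # 8.15
```

Changing the weights to match your own priorities (for example, raising Security for a regulated workload) is a quick way to re-rank the tools for your situation.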

How to Interpret These Scores:

- Weighted Total: A score above 8.5 indicates a high-value tool for modern production environments.

- Performance vs. Security: Tools like WhyLabs lead because they provide high performance (via profiling) without compromising data security.

- Ease vs. Depth: Evidently AI is the easiest for developers, but specialized enterprise tools like Arize offer more technical depth for complex troubleshooting.

Which Model Monitoring & Drift Detection Tool Is Right for You?

Solo / Freelancer

For an individual data scientist, Evidently AI is the best choice. It is free, runs locally in a Jupyter notebook, and provides beautiful visualizations that can be shared with clients or managers immediately.

SMB

Small and medium businesses should look at WhyLabs or Neptune.ai. WhyLabs is particularly effective because its open-source “whylogs” library allows you to start monitoring for free and then upgrade to their cloud platform as your model count grows.

Mid-Market

Companies with multiple models in production should evaluate Arize AI or Censius. Arize is particularly strong if you are working with non-tabular data (images, text, embeddings), while Censius offers a great “middle-ground” for traditional ML monitoring.

Enterprise

For large organizations in regulated industries (Banking, Health), Fiddler AI or DataRobot MLOps are the gold standards. Their focus on explainability, bias mitigation, and compliance reporting is essential for meeting legal and risk management requirements.

Budget vs Premium

- Budget Choice: Evidently AI (Open-source), WhyLabs (Free tier).

- Premium Choice: Arize AI, DataRobot MLOps, Arthur AI.

Feature Depth vs Ease of Use

- High Depth: Fiddler AI (XAI Focus), Arize AI (Embedding focus).

- High Ease: SageMaker Model Monitor, Evidently AI.

Integrations & Scalability

- Top Integrations: Neptune.ai, WhyLabs.

- Top Scalability: Amazon SageMaker, WhyLabs.

Security & Compliance Needs

Organizations with strict data privacy needs should prioritize WhyLabs due to its profiling architecture. Those needing deep regulatory reporting should prioritize Fiddler AI.

Frequently Asked Questions (FAQs)

- What is the difference between Data Drift and Concept Drift?

Data drift occurs when the distribution of the input features changes (e.g., users are getting older), whereas concept drift occurs when the relationship between the inputs and the target changes (e.g., users’ tastes change).

- How often should I monitor for drift?

For high-volume models, real-time monitoring is best. For lower-volume or batch models, running a drift check once a day or once a week is usually sufficient.

- Can I monitor a model if I don’t have the “Ground Truth” yet?

Yes. You can monitor “Data Drift” (changes in inputs) and “Prediction Drift” (changes in outputs) immediately, even if it takes weeks to find out if the model was actually correct.

- Do these tools automatically retrain my models?

Some (like DataRobot and SageMaker) can trigger retraining pipelines, but most tools focus on detecting the need for retraining and alerting a human engineer.
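
As a rough illustration of a drift-based retraining trigger, the sketch below starts a retraining job only when several features exceed a drift threshold. It is not how DataRobot or SageMaker implement this; `RetrainPolicy`, `maybe_retrain`, the threshold values, and the `start_retraining` callback are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class RetrainPolicy:
    threshold: float = 0.25        # assumed per-feature drift score cut-off
    min_drifted_features: int = 2  # require several features to limit noise


def drifted_features(scores: Dict[str, float],
                     policy: RetrainPolicy) -> List[str]:
    """Names of features whose drift score exceeds the threshold."""
    return sorted(n for n, s in scores.items() if s > policy.threshold)


def maybe_retrain(scores: Dict[str, float], policy: RetrainPolicy,
                  start_retraining: Callable[[List[str]], None]) -> bool:
    """Call start_retraining when enough features have drifted."""
    drifted = drifted_features(scores, policy)
    if len(drifted) >= policy.min_drifted_features:
        start_retraining(drifted)
        return True
    return False


# Usage: the callback would normally kick off a CI/CD or pipeline job.
triggered = maybe_retrain(
    {"income": 0.31, "age": 0.02, "tenure": 0.40},
    RetrainPolicy(),
    lambda features: print("retraining because of:", features),
)
print(triggered)  # True
```

Many teams keep a human approval step between the trigger and the actual deployment of the retrained model.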

- Is it expensive to run these monitoring tools?

It depends on the volume. Using profiling-based tools like WhyLabs is very cheap, whereas calculating complex explainability (SHAP) for every inference can be very expensive.

- How do I monitor a Large Language Model (LLM) for drift?

Monitoring LLMs involves tracking “Embedding Drift” (semantic shifts), hallucination rates, toxicity scores, and user feedback (e.g., thumbs up/down).
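
One simple proxy for embedding drift is the cosine distance between the average (centroid) embedding of a baseline window and that of a production window. This is a minimal sketch under that assumption; production tools often use richer measures, and the function names here are illustrative.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def centroid(vectors: Sequence[Vector]) -> List[float]:
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def cosine_distance(a: Vector, b: Vector) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


def embedding_drift(baseline: Sequence[Vector],
                    production: Sequence[Vector]) -> float:
    """0 means the centroids point the same way; larger means more drift."""
    return cosine_distance(centroid(baseline), centroid(production))


# Toy 2-dimensional "embeddings" for illustration only.
baseline = [[1.0, 0.0], [0.9, 0.1]]
production = [[0.0, 1.0], [0.1, 0.9]]
print(embedding_drift(baseline, production))
```

A centroid comparison will miss drift that changes the spread of the embeddings without moving their average, so it works best alongside the other signals listed above.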

- What is the “Kolmogorov-Smirnov” test?

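It is a nonparametric statistical test that compares the empirical cumulative distributions of two samples (e.g., training data vs. production data). These tools commonly apply it to numerical features to decide whether the two distributions differ significantly.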
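
For readers who want to see the mechanics, here is a minimal pure-Python sketch of the two-sample K-S statistic, plus the usual large-sample approximation for the critical value. The function names are illustrative; in practice most teams would call an existing statistics library rather than write this by hand.

```python
import math
from bisect import bisect_right
from typing import Sequence


def ks_statistic(sample_a: Sequence[float], sample_b: Sequence[float]) -> float:
    """Largest gap between the two empirical cumulative distributions."""
    a, b = sorted(sample_a), sorted(sample_b)
    if not a or not b:
        raise ValueError("both samples must be non-empty")
    d = 0.0
    # The empirical CDFs only change at observed values, so checking
    # every observed value is enough to find the maximum gap.
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d


def ks_critical_value(n: int, m: int, alpha: float = 0.05) -> float:
    """Large-sample approximation of the rejection threshold."""
    return math.sqrt(-0.5 * math.log(alpha / 2)) * math.sqrt((n + m) / (n * m))


training = list(range(100))
production = [x + 50 for x in training]
d = ks_statistic(training, production)
print(d, d > ks_critical_value(len(training), len(production)))
```

If the statistic exceeds the critical value, the two samples are unlikely to come from the same distribution at the chosen significance level.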

- Can I use my existing DevOps tools (like Datadog) for ML monitoring?

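Datadog is great for system health (latency, CPU), but out of the box it does not compute drift statistics or model accuracy. You would have to calculate those yourself and send them as custom metrics, which is the gap that dedicated ML monitoring tools fill.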

- What is “Disparate Impact” in model monitoring?

It is a fairness metric that compares the rate of favorable outcomes for a protected group (e.g., by gender or race) with the rate for a reference group. A ratio well below 1 suggests the model’s decisions disadvantage the protected group.
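
A minimal sketch of the disparate impact ratio, assuming binary decisions where 1 is the favorable outcome. The function names and the sample data are illustrative; the 0.8 cut-off reflects the widely used "four-fifths" rule of thumb, not a universal legal standard.

```python
from typing import Sequence


def selection_rate(decisions: Sequence[int]) -> float:
    """Share of favorable (1) decisions in a group."""
    if not decisions:
        raise ValueError("group has no decisions")
    return sum(decisions) / len(decisions)


def disparate_impact(protected: Sequence[int], reference: Sequence[int]) -> float:
    """Ratio of the protected group's selection rate to the reference group's."""
    ref_rate = selection_rate(reference)
    if ref_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return selection_rate(protected) / ref_rate


# Hypothetical loan approvals: 3 of 10 vs. 6 of 10.
protected_group = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0]
reference_group = [1, 1, 0, 1, 0, 1, 0, 1, 1, 0]
ratio = disparate_impact(protected_group, reference_group)
print(ratio, "flag for review" if ratio < 0.8 else "within rule of thumb")
```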

- Do I need to change my model code to use these tools?

Usually, you only need to add a few lines of code to your inference script to “log” the data to the monitoring platform or generate a profile.
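
As a rough picture of what those few lines look like, the sketch below wraps a prediction function so that every call is also written as a JSON line. It does not use any vendor SDK; `with_logging`, the record fields, and the in-memory sink are assumptions for illustration.

```python
import io
import json
import time
from typing import Any, Callable, Dict, TextIO

Features = Dict[str, Any]


def with_logging(predict: Callable[[Features], Any],
                 sink: TextIO) -> Callable[[Features], Any]:
    """Wrap a predict function so each call is also logged as a JSON line."""
    def wrapped(features: Features) -> Any:
        prediction = predict(features)
        record = {"ts": time.time(), "features": features,
                  "prediction": prediction}
        sink.write(json.dumps(record) + "\n")
        return prediction
    return wrapped


# Usage with a stand-in model and an in-memory sink; in production the
# sink would be a file, a queue, or a monitoring platform's client.
log = io.StringIO()
model = with_logging(lambda f: f["x"] * 2, log)
print(model({"x": 3}))        # 6
print(log.getvalue().strip())
```

The model code itself stays unchanged; only the call site is wrapped, which is why adoption usually takes little effort.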

Conclusion

Model monitoring and drift detection are no longer “nice-to-have” features; they are the critical final step in the machine learning lifecycle. While Evidently AI provides the perfect entry point for developers, enterprise platforms like Arize AI and WhyLabs offer the scalability and privacy required for large-scale operations.

The key to success is building a culture of observability, where a model is not considered “done” until its monitoring dashboard is live. By implementing these tools, you ensure that your AI remains a reliable asset that adapts to the world as it changes, rather than a black-box liability.
